Official statement

Other statements from this video 14 ▾

- □ Peut-on vraiment utiliser un sous-répertoire unique pour gérer plusieurs marchés internationaux avec hreflang ?

- □ Pourquoi Google n'indexe-t-il pas toutes les URLs de votre site ?

- □ Peut-on utiliser des avis tiers pour les résultats enrichis produits ?

- □ Comment savoir si Google vous pénalise vraiment ?

- □ Faut-il abandonner les URI de thésaurus NALT pour optimiser son référencement ?

- □ Pourquoi les erreurs robots.txt unreachable sont-elles toujours de votre faute ?

- □ Faut-il vraiment rediriger vos 404 vers la homepage ?

- □ Faut-il vraiment maintenir les redirections lors d'une migration de domaine ?

- □ Faut-il s'inquiéter de millions d'URLs non indexées sur son site ?

- □ Faut-il vraiment éviter le cloaking de codes HTTP entre Googlebot et utilisateurs ?

- □ Google traite-t-il vraiment les redirections 308 et 301 de la même manière ?

- □ WiFi vs Wi-Fi : Google fait-il vraiment la différence pour le référencement ?

- □ Un nombre d'avis à zéro pénalise-t-il le référencement d'une page produit ?

- □ Pourquoi certains sites migrés apparaissent-ils dans Google en quelques minutes et d'autres mettent des mois ?

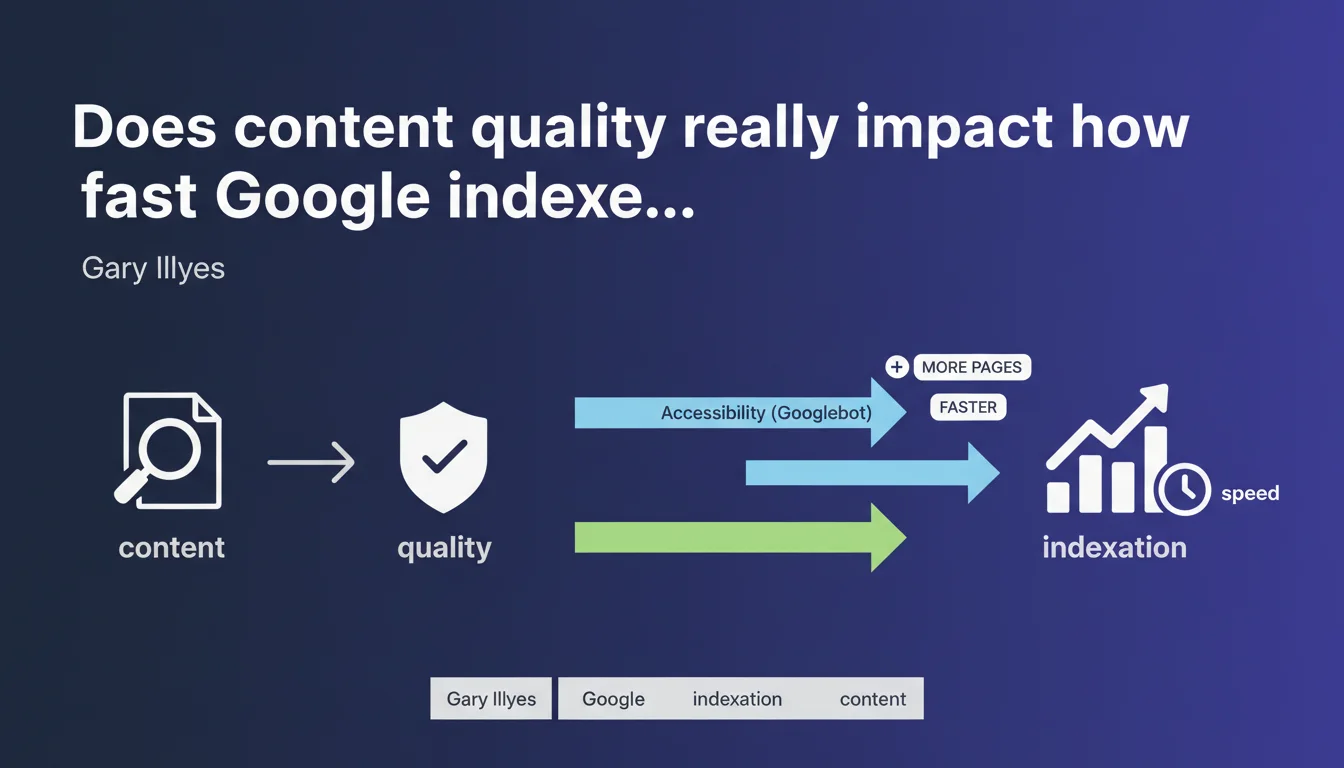

Google states that the speed and volume of indexing depend directly on two factors: how technically accessible the site is to Googlebot, and the quality of its content. The higher the quality, the faster Google indexes pages and the more of them it indexes. This puts the crawl budget debate into perspective and confirms that quality is not just a ranking criterion.

What you need to understand

How does Google connect quality to indexation?

Gary Illyes' statement introduces a frequently overlooked notion: content quality serves not only ranking purposes, but also conditions indexation itself. In other words, a site stuffed with mediocre or duplicated pages will see Google deliberately slow down its crawl and limit the number of indexed pages.

This logic is consistent with how Google manages its crawl resources: it does not want to spend them on content with no value. Technical accessibility remains a prerequisite (correct robots.txt file, clean sitemap, acceptable server response time), but it is no longer enough if the content behind it is weak.

What does Google mean by 'quality' in this context?

Google remains deliberately vague, but we can infer that quality combines multiple signals: thematic relevance, content uniqueness, depth of treatment, potential user engagement. It's not just about the absence of duplicates or keyword stuffing.

Concretely, a page that provides a complete answer to a search intent has a better chance of being indexed quickly than a thin page generated automatically. The difference also depends on the site's overall editorial consistency — a site with 80% solid content will be treated better than a site with 20% good content buried in filler.

Is technical accessibility enough without quality?

No, and this is where the statement challenges some conventional wisdom. You can have an ultra-fast server, impeccable XML sitemap, perfect internal linking — if your pages are mediocre, Google will choose to index fewer of them and more slowly.

This contradicts the purely technical view of SEO, in which optimizing crawl was believed to be sufficient. Indexation now has to be approached in two stages: first remove technical blockers, then prove that the content deserves to be crawled frequently.

- Technical accessibility (robots.txt, sitemap, server performance) is a necessary but not sufficient condition

- Content quality determines indexation speed and volume

- Google allocates its crawl budget based on perceived site value

- A site with a lot of weak content will be penalized in terms of crawl frequency

- Indexation is no longer guaranteed — it must be earned through editorial quality

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, and it confirms what many SEO professionals have observed for several months. Sites that have cleaned up their zombie pages or removed low-quality auto-generated content often see improved crawl frequency on their strategic pages. Google redistributes the budget to what really matters.

However, the notion of 'high quality' remains fuzzy. [To verify]: Google provides no threshold and no concrete indicator for measuring whether content reaches this level. We are navigating blind, cross-referencing indirect signals (click-through rate, session duration, bounce rate) without any certainty.

What nuances should be added to this statement?

First nuance: site size plays a role. A 50-page site of high quality will be fully indexed quickly, but a 500,000-page site — even with 60% solid content — must contend with crawl budget constraints. Google cannot crawl everything at the same time, even if everything is good.
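To make the size constraint concrete, here is a back-of-the-envelope sketch estimating how long one full crawl pass would take. The daily request figure is a hypothetical value chosen for illustration, not a number Google publishes, and the model assumes each URL is fetched exactly once.

```python
import math

def days_for_full_pass(total_pages: int, requests_per_day: int) -> int:
    """Days needed to fetch every URL once at a constant crawl rate."""
    if requests_per_day <= 0:
        raise ValueError("requests_per_day must be positive")
    return math.ceil(total_pages / requests_per_day)

# Hypothetical crawl rate, for illustration only.
HYPOTHETICAL_REQUESTS_PER_DAY = 5_000

for size in (50, 500_000):
    days = days_for_full_pass(size, HYPOTHETICAL_REQUESTS_PER_DAY)
    print(f"{size} pages -> {days} day(s)")
```

In practice Googlebot also revisits known URLs, so the real figure for a large site would likely be higher than this simplified estimate.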

Second nuance: some sectors naturally generate many pages (e-commerce, classifieds, real estate). For them, maintaining 'high quality' on every page is sometimes impossible. In practice you must prioritize, and accept that some pages will never be indexed or will be refreshed only rarely.

In what cases does this rule not apply fully?

News sites often benefit from special treatment. Even if editorial quality varies, Google indexes new articles quickly to feed Google News and real-time results. Freshness partially compensates for quality in this context.

Another exception: sites with very strong domain authority. An established site with a solid link profile will see its new pages indexed faster than a recent site, even if content quality is comparable. History and trust act as an accelerator.

Practical impact and recommendations

What should you do concretely to improve indexation speed?

First, ruthlessly audit existing content. Identify pages that have generated no organic traffic for 6 months, those with bounce rates above 90%, and those created automatically without added value. Then decide for each one: substantial improvement, merge with another page, or outright deletion with a 301 redirect if necessary.
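The audit criteria above can be sketched as a simple filter. This is a minimal illustration, assuming you have already exported per-page statistics from your analytics tool; the `PageStats` record, its field names, and the sample URLs are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class PageStats:
    url: str
    organic_sessions_6m: int  # organic sessions over the last 6 months
    bounce_rate: float        # between 0.0 and 1.0
    auto_generated: bool      # flagged manually or by your CMS

def audit_candidates(pages, max_bounce=0.90):
    """Return (url, reasons) for every page matching at least one criterion."""
    flagged = []
    for page in pages:
        reasons = []
        if page.organic_sessions_6m == 0:
            reasons.append("no organic traffic in 6 months")
        if page.bounce_rate > max_bounce:
            reasons.append("bounce rate above threshold")
        if page.auto_generated:
            reasons.append("auto-generated")
        if reasons:
            flagged.append((page.url, reasons))
    return flagged

# Hypothetical sample data.
pages = [
    PageStats("/guide-crawl-budget", 1200, 0.45, False),
    PageStats("/tag/misc-17", 0, 0.95, True),
    PageStats("/old-promo", 0, 0.60, False),
]

for url, reasons in audit_candidates(pages):
    print(url, "->", ", ".join(reasons))
```

The function only flags candidates. Whether to improve, merge, or delete each page remains an editorial decision.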

At the same time, ensure that technical accessibility is flawless. Verify that the sitemap only lists indexable pages (no noindex, no canonicals to other URLs). Check server response times — aim for under 200 ms. Eliminate redirect chains and 4xx/5xx errors.
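The sitemap check can be sketched as follows. The example parses a sitemap with the standard library and compares each URL against crawl results you have collected separately. The `crawl` dictionary, its keys (`status`, `noindex`, `canonical`), and the example.com URLs are assumptions made for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_urls(xml_text):
    """Extract every <loc> value from a sitemap document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]

def non_indexable(urls, crawl):
    """Return (url, reason) for sitemap entries that should not be listed."""
    problems = []
    for url in urls:
        info = crawl.get(url)
        if info is None:
            problems.append((url, "not crawled"))
        elif info["status"] != 200:
            problems.append((url, f"HTTP {info['status']}"))
        elif info["noindex"]:
            problems.append((url, "noindex"))
        elif info["canonical"] not in (None, url):
            problems.append((url, "canonical points elsewhere"))
    return problems

SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/old</loc></url>
  <url><loc>https://example.com/draft</loc></url>
  <url><loc>https://example.com/shoes?color=red</loc></url>
</urlset>"""

# Hypothetical crawl results, e.g. exported from your own crawler.
crawl = {
    "https://example.com/": {"status": 200, "noindex": False, "canonical": "https://example.com/"},
    "https://example.com/old": {"status": 301, "noindex": False, "canonical": None},
    "https://example.com/draft": {"status": 200, "noindex": True, "canonical": None},
    "https://example.com/shoes?color=red": {"status": 200, "noindex": False, "canonical": "https://example.com/shoes"},
}

for url, reason in non_indexable(sitemap_urls(SITEMAP), crawl):
    print(url, "->", reason)
```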

What mistakes should you absolutely avoid?

Classic mistake: publishing massive amounts of mediocre content hoping some will get indexed. This volume strategy works less and less. Google adjusts its crawl downward when it detects an unfavorable quality/quantity ratio.

Another trap: believing that a technically perfect page will necessarily be indexed fast. If it covers a topic already addressed by 50 other pages on the same site without differentiation, Google will put it in a low-priority queue. Internal cannibalization slows indexation.
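One rough way to spot internal cannibalization is to group pages by the search intent they target and flag any intent covered by more than one URL. The sketch below assumes you maintain such a mapping yourself (for example in an editorial spreadsheet). The intent labels and URLs are hypothetical.

```python
from collections import defaultdict

def cannibalized_intents(page_intents):
    """Return {intent: [urls]} for intents targeted by two or more pages."""
    by_intent = defaultdict(list)
    for url, intent in page_intents:
        # Normalize labels so "Crawl Budget" and "crawl budget" are grouped.
        by_intent[intent.strip().lower()].append(url)
    return {intent: urls for intent, urls in by_intent.items() if len(urls) > 1}

# Hypothetical mapping of pages to their target intent.
page_intents = [
    ("/crawl-budget-guide", "crawl budget"),
    ("/blog/what-is-crawl-budget", "Crawl Budget"),
    ("/hreflang", "hreflang setup"),
]

print(cannibalized_intents(page_intents))
```

Exact label matching is a deliberate simplification. Near-duplicate intents phrased differently would need manual review or a similarity measure.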

How can you verify that your site benefits from optimal crawling?

Regularly check the Crawl Stats report in Google Search Console. Look at how the number of crawl requests per day evolves: a gradual increase suggests that Google is allocating more crawl resources to the site. Compare the number of pages submitted via the sitemap with the number actually indexed.
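Both comparisons reduce to simple arithmetic once the figures are copied out of Search Console by hand. The sketch below is one possible way to compute them. All numbers are hypothetical, and the half-versus-half trend measure is an arbitrary choice, not a Google metric.

```python
def index_coverage(submitted: int, indexed: int) -> float:
    """Share of sitemap-submitted pages that are actually indexed."""
    if submitted <= 0:
        raise ValueError("submitted must be positive")
    return indexed / submitted

def crawl_trend(daily_requests):
    """Relative change between the mean of the first and second half of the period."""
    half = len(daily_requests) // 2
    if half == 0:
        raise ValueError("need at least two data points")
    first = sum(daily_requests[:half]) / half
    second = sum(daily_requests[half:]) / (len(daily_requests) - half)
    if first == 0:
        raise ValueError("first half has no requests; trend is undefined")
    return (second - first) / first

# Hypothetical figures copied from Search Console reports.
print(f"coverage: {index_coverage(5000, 3200):.0%}")
print(f"crawl trend: {crawl_trend([100, 110, 105, 120, 130, 140]):+.1%}")
```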

Also use server logs to analyze crawl depth. If Googlebot only visits the first 3 levels of navigation, that's a warning signal. Either your internal linking is insufficient, or Google believes deeper pages don't deserve its visit.
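A minimal sketch of that log analysis is shown below. It assumes access logs in the common "combined" format and uses the number of URL path segments as a stand-in for navigation depth, which is only an approximation of click depth. It also matches Googlebot by user-agent string alone. User agents can be spoofed, so a production version should verify the requesting IP as well. The sample log lines are invented.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Captures the request path, status code, and the final quoted field (user agent).
LINE_RE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .* "(?P<ua>[^"]*)"$'
)

def googlebot_depths(lines):
    """Count Googlebot hits per URL depth (number of non-empty path segments)."""
    depths = Counter()
    for line in lines:
        match = LINE_RE.search(line.strip())
        if not match or "Googlebot" not in match.group("ua"):
            continue
        path = urlsplit(match.group("path")).path  # drop any query string
        segments = [s for s in path.split("/") if s]
        depths[len(segments)] += 1
    return depths

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Invented sample lines in combined log format.
LOG_LINES = [
    f'66.249.66.1 - - [12/Apr/2023:10:00:00 +0000] "GET / HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [12/Apr/2023:10:00:01 +0000] "GET /category/shoes HTTP/1.1" 200 4100 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [12/Apr/2023:10:00:02 +0000] "GET /category/shoes/red?page=2 HTTP/1.1" 200 3900 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [12/Apr/2023:10:00:03 +0000] "GET /a/b/c/d/e HTTP/1.1" 200 900 "https://example.com/" "Mozilla/5.0 (X11; Linux x86_64)"',
]

for depth, hits in sorted(googlebot_depths(LOG_LINES).items()):
    print(f"depth {depth}: {hits} hit(s)")
```

If the resulting distribution stops abruptly at a shallow depth while your site has deeper pages, that is the warning signal described above.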

- Remove or improve pages with no organic traffic for 6+ months

- Verify that the XML sitemap only lists indexable pages (HTTP 200, no noindex)

- Reduce server response time below 200 ms

- Eliminate redirect chains and 4xx/5xx errors

- Avoid cannibalization: one search intent = one reference page

- Analyze server logs to measure actual crawl depth

- Track the evolution of Googlebot requests in Search Console

- Prioritize publishing high-value content rather than volume
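For the redirect-chain item in the checklist above, here is one possible detection sketch. It assumes you have exported your redirects as a source-to-target mapping (for example from server configuration or a crawler). The paths are hypothetical.

```python
def redirect_chains(redirects, max_hops=10):
    """Return {start: [hops...]} for redirects needing more than one hop.

    A loop is reported as a chain ending with the repeated URL.
    """
    chains = {}
    for start in redirects:
        path = [start]
        current = start
        while current in redirects and len(path) <= max_hops:
            current = redirects[current]
            if current in path:
                path.append(current)  # loop detected, stop following
                break
            path.append(current)
        if len(path) > 2:  # more than source -> target
            chains[start] = path
    return chains

# Hypothetical redirect map.
redirects = {"/a": "/b", "/b": "/c", "/x": "/y"}
print(redirect_chains(redirects))
```

Each reported chain can then be collapsed by pointing the starting URL directly at the final destination.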

❓ Frequently Asked Questions

Does an XML sitemap guarantee fast indexation?

Should you remove rarely visited pages to improve crawl budget?

How does Google measure a page's quality before indexing it?

Can a new site be indexed as fast as an established one?

Does using a CDN or a faster server improve indexation?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 12/04/2023
