Official statement

Other statements from this video 14 ▾

- □ Peut-on vraiment utiliser un sous-répertoire unique pour gérer plusieurs marchés internationaux avec hreflang ?

- □ Peut-on utiliser des avis tiers pour les résultats enrichis produits ?

- □ Comment savoir si Google vous pénalise vraiment ?

- □ Faut-il abandonner les URI de thésaurus NALT pour optimiser son référencement ?

- □ Pourquoi les erreurs robots.txt unreachable sont-elles toujours de votre faute ?

- □ Faut-il vraiment rediriger vos 404 vers la homepage ?

- □ Faut-il vraiment maintenir les redirections lors d'une migration de domaine ?

- □ Faut-il s'inquiéter de millions d'URLs non indexées sur son site ?

- □ Faut-il vraiment éviter le cloaking de codes HTTP entre Googlebot et utilisateurs ?

- □ Google traite-t-il vraiment les redirections 308 et 301 de la même manière ?

- □ La qualité du contenu influence-t-elle vraiment la vitesse d'indexation par Google ?

- □ WiFi vs Wi-Fi : Google fait-il vraiment la différence pour le référencement ?

- □ Un nombre d'avis à zéro pénalise-t-il le référencement d'une page produit ?

- □ Pourquoi certains sites migrés apparaissent-ils dans Google en quelques minutes et d'autres mettent des mois ?

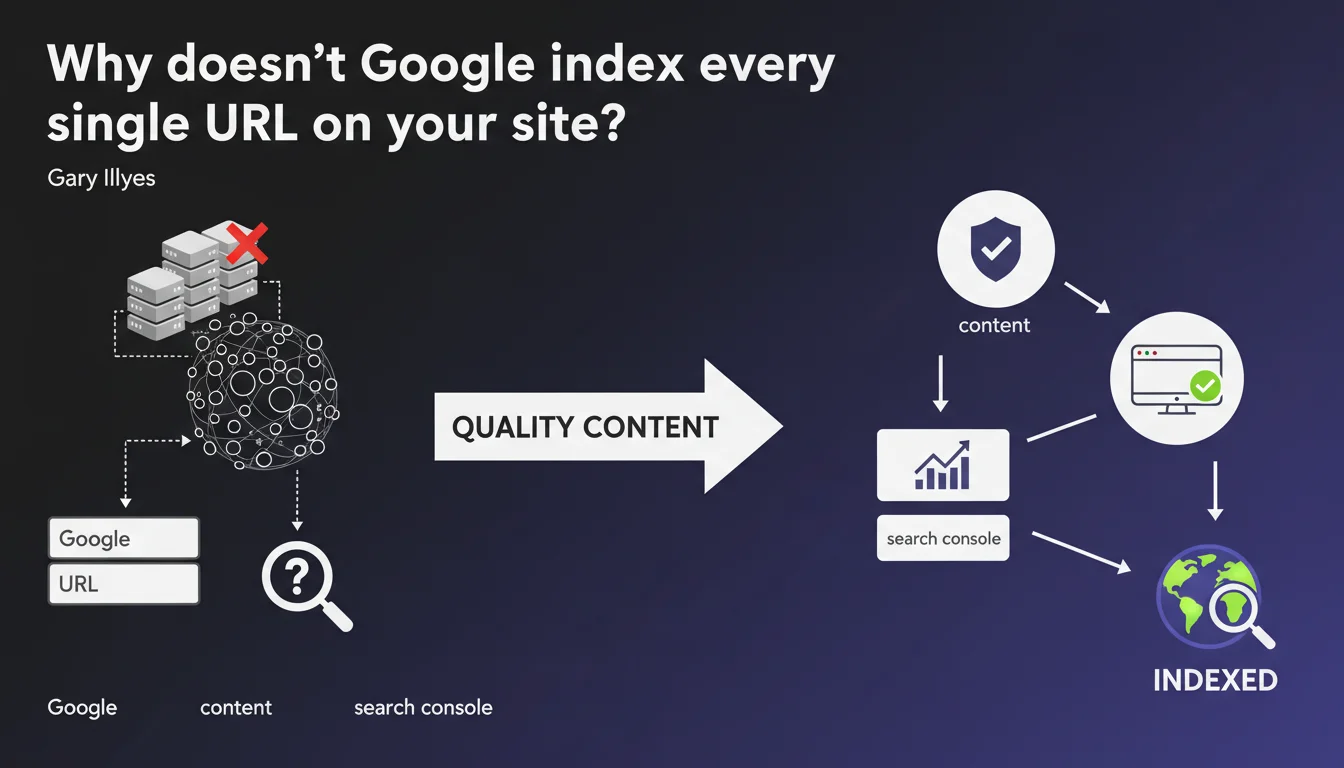

Google cannot and will not index every URL on the web. Only pages deemed to be high quality pass the filter. The real question isn't how many pages you have, but how many actually deserve to be in the index.

What you need to understand

Does Google really have the capacity to index the entire web?

The answer is no — and it's as much a technical constraint as an editorial choice. The web contains billions of pages, a huge portion of which is duplicate content, outdated material, or simply useless. Even with Google's colossal infrastructure, indexing every URL would be a waste of resources.

What Gary Illyes doesn't explicitly say is that this selection happens at multiple levels: crawl budget, quality filters during indexation, then ranking. A URL can be crawled without being indexed. It can be indexed without ever appearing in results.

What counts as a "high quality" URL in Google's eyes?

Here, things get murky. Google doesn't provide an objective scoring sheet. Signals such as content depth, domain authority, user engagement, and freshness are widely assumed to matter, but no one can tell you with certainty why one page gets indexed and another doesn't.

What we observe in the field: sites with clean architecture, coherent internal linking, and original content have a significantly higher indexation rate. Orphaned pages, weak content, and duplicates are often left behind.

- Google actively selects which URLs to index; indexation is not automatic

- Crawling doesn't guarantee indexation — these are two distinct steps

- The criteria for "high quality" remain opaque and constantly evolving

- Search Console is your only reliable source for verifying actual indexation status

How can you tell if your pages are considered "quality"?

Practically speaking? You'll only know after the fact by checking Search Console. The URL Inspection tool will tell you if a page is indexed, and potentially why it isn't—but the reasons given are often vague ("Discovered, currently not indexed" being the most frustrating).

There's no displayed quality score. You need to cross-reference the data: overall indexation rate, average time before indexation, presence or absence in results for branded queries. If your pages take weeks to get indexed or disappear from the index, that's a warning signal.

SEO Expert opinion

Is this statement consistent with what we observe in the real world?

Yes, largely so. For years, we've seen Google massively de-index content deemed weak or redundant. Updates like the helpful content update have accelerated this sorting. Sites with thousands of pages sometimes see 70% of their URLs removed from the index without notice.

What's less obvious is that the notion of "high quality" varies by vertical. An e-commerce product page with 50 words can be indexed if it's unique and answers a specific commercial query. An 800-word blog post can be ignored if it weakly rehashes a topic covered a thousand times elsewhere.

What nuances should be added?

Gary Illyes remains evasive on one critical point: perceived quality isn't binary. There's a massive gray zone between "excellent page" and "pure spam." Much mediocre content remains indexed without ever generating traffic—it occupies the index with no real utility.

Another blind spot: niche or highly specialized sites. They may offer rare and relevant content for a restricted audience, but if Google doesn't have enough popularity signals (backlinks, direct traffic, mentions), these pages risk being undervalued. [To verify]: does Google systematically favor sites with high overall authority, even if the content is locally less relevant?

In what cases does this rule not apply?

Sites with very high authority — think Wikipedia, government sites, major news outlets — benefit from much broader tolerance. Their pages are crawled and indexed massively, even if individual page quality is average. It's the halo effect: the trust granted to the domain compensates.

Conversely, a new site or a domain penalized in the past must prove itself. Even with excellent content, indexation will be slower and more selective. Domain history matters — and Google doesn't shout that from the rooftops.

Practical impact and recommendations

What should you do concretely to maximize your indexation chances?

First, eliminate the noise. If you have thousands of weak pages, they dilute your crawl budget and send negative signals about your site's overall quality. Identify orphaned pages, duplicates, obsolete content — and decide: merge, 301 redirect, or outright deletion.
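As a rough illustration of the duplicate hunt, here is a minimal sketch that groups pages whose body text is identical once whitespace and case are normalized. The `pages` mapping (URL to extracted text) is a hypothetical input you would build from your own crawl. It only catches exact duplicates, not near-duplicates.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text):
    """Hash the text after collapsing whitespace and lowercasing."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def duplicate_groups(pages):
    """Return lists of URLs that share the same normalized body text."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical crawl extract, for illustration only.
pages = {
    "/red-shoes": "Red shoes, size 42.",
    "/red-shoes?ref=nav": "Red  shoes, size 42. ",
    "/blue-shoes": "Blue shoes, size 42.",
}
groups = duplicate_groups(pages)
```

Each group is a candidate for a merge or a 301 redirect toward a single canonical URL.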

Next, strengthen your internal linking. A page linked from your homepage or from strong thematic hubs has far better chances of being crawled and indexed than a page lost 6 clicks deep. Structure your content into coherent silos, with relevant contextual links.
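To put numbers on click depth and orphaned pages, a breadth-first search over the internal link graph is enough. The sketch below assumes you already have a `links` mapping (each URL to the URLs it links to), for example from a crawler export. The sample graph and its URLs are invented for illustration.

```python
from collections import deque


def click_depths(links, start):
    """Minimum number of clicks from start to every reachable URL."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphaned_pages(links, start):
    """URLs present in the graph that cannot be reached from start."""
    known = set(links) | {t for targets in links.values() for t in targets}
    return sorted(known - set(click_depths(links, start)))


# Hypothetical site graph, for illustration only.
site = {
    "/": ["/guides/", "/products/"],
    "/guides/": ["/guides/indexing/"],
    "/guides/indexing/": [],
    "/products/": [],
    "/old-landing/": ["/"],
}
depths = click_depths(site, "/")
orphans = orphaned_pages(site, "/")
```

Pages with a large depth value, or pages that show up in `orphans`, are the first candidates for new contextual links from the homepage or a thematic hub.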

Finally, submit your new URLs via Search Console and monitor their status. Don't rely on automatic crawling to discover everything — especially if your site has a low crawl budget. Use XML sitemaps intelligently: include only priority URLs, not every URL that exists.
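A selective sitemap can be generated with the standard library alone. This is a minimal sketch: the `strategic` flag on each page record is a hypothetical field standing in for whatever prioritization you use, and the URLs are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Serialize a list of absolute URLs as a sitemap <urlset>."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc in urls:
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = loc
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical page inventory; "strategic" is an assumed field.
inventory = [
    {"url": "https://example.com/guides/indexing/", "strategic": True},
    {"url": "https://example.com/tag/misc/", "strategic": False},
]
priority_urls = [p["url"] for p in inventory if p["strategic"]]
sitemap_xml = build_sitemap(priority_urls)
```

Only the URLs you actually want indexed end up in the file. Tag archives, filtered listings, and other low-value pages stay out.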

- Regularly audit indexed vs. non-indexed pages in Search Console

- Remove or improve weak content identified as "Discovered, currently not indexed"

- Strengthen internal linking to strategic pages

- Limit the number of pages submitted in your XML sitemap to high-value-add URLs

- Track average indexation time: if new pages regularly take more than several days to be indexed, your crawl budget is likely saturated

- Verify that key pages aren't blocked by robots.txt or an accidental noindex tag
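The last check in this list is easy to script. The sketch below uses Python's `urllib.robotparser` to test a URL against robots.txt rules and a small `HTMLParser` subclass to spot a robots meta tag containing `noindex`. It takes the robots.txt content and the HTML as strings, so fetching them is left to you. Two caveats: the standard-library parser may not interpret every rule exactly as Googlebot does (wildcard patterns in particular), and a `noindex` sent through the `X-Robots-Tag` HTTP header is not covered here.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def blocked_by_robots(robots_txt, url, user_agent="Googlebot"):
    """True if the given robots.txt content disallows url for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


class RobotsMetaFinder(HTMLParser):
    """Records whether a robots/googlebot meta tag contains noindex."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        content = (attr.get("content") or "").lower()
        if name in ("robots", "googlebot") and "noindex" in content:
            self.noindex = True


def has_noindex(html):
    """True if the HTML carries a noindex robots meta tag."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return finder.noindex


# Illustrative inputs only.
robots_txt = "User-agent: *\nDisallow: /private/"
page_html = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
```

Run both checks on every key page. A page that is blocked or carries `noindex` will never be indexed, no matter how good the content is.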

What mistakes should you absolutely avoid?

Don't multiply pages on the theory that more is better. It isn't. A site with 500 excellent pages will typically be better indexed and ranked than a site with 5,000 mediocre ones. Google penalizes quality dilution.

Also avoid forcing indexation through artificial techniques (sitemap spam, repeated submissions). It doesn't work anymore, and it can actually attract manual actions. If a page isn't indexed despite your efforts, it's often a signal that it doesn't deserve to be — or that your domain lacks overall authority.

How can you verify your strategy is working?

Track three metrics in Search Console: indexation rate (indexed pages / submitted pages), average indexation delay for new URLs, and the evolution of "Discovered, currently not indexed" pages. If that last number explodes, you have a perceived quality problem.
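The first two metrics are simple arithmetic once the numbers are collected. In this sketch the counts and dates are made up. It also assumes you record publication and first-indexed dates yourself, since Search Console does not provide a ready-made "indexation delay" figure.

```python
from datetime import date
from statistics import mean


def indexation_rate(indexed, submitted):
    """Indexed pages divided by submitted pages (0.0 if nothing submitted)."""
    if submitted == 0:
        return 0.0
    return indexed / submitted


def average_indexation_delay(pages):
    """Mean delay in days over (published, first_indexed) date pairs.

    Pairs whose first_indexed is None (not indexed yet) are skipped.
    Returns None when no page has been indexed.
    """
    delays = [
        (first_indexed - published).days
        for published, first_indexed in pages
        if first_indexed is not None
    ]
    return mean(delays) if delays else None


# Hypothetical tracking data, for illustration only.
rate = indexation_rate(indexed=450, submitted=600)
delay = average_indexation_delay([
    (date(2023, 1, 1), date(2023, 1, 4)),
    (date(2023, 1, 2), date(2023, 1, 7)),
    (date(2023, 1, 3), None),
])
```

Tracked week over week, a falling rate or a rising delay is the warning signal described above.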

Cross-reference with your analytics: how many indexed pages actually generate organic traffic? If 80% of your indexed pages have zero visits, it's a signal that Google keeps them in the index out of courtesy, but doesn't judge them useful. Focus your efforts on the 20% that perform.
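This cross-check amounts to a set comparison. A minimal sketch, assuming a list of indexed URLs (for example from a Search Console export) and a hypothetical `organic_visits` mapping from your analytics tool:

```python
def zero_traffic_share(indexed_urls, organic_visits):
    """Fraction of indexed URLs with no recorded organic visits."""
    indexed = set(indexed_urls)
    if not indexed:
        return 0.0
    silent = [url for url in indexed if organic_visits.get(url, 0) == 0]
    return len(silent) / len(indexed)


# Hypothetical data, for illustration only.
indexed_urls = ["/a", "/b", "/c", "/d", "/e"]
organic_visits = {"/a": 120}
share = zero_traffic_share(indexed_urls, organic_visits)
```

The URLs that make up that share are the ones to review for improvement, merging, or removal.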

Google's selective indexation imposes brutal rationalization: fewer pages, but better ones. Rather than chasing quantity, focus on relevance, depth, and uniqueness of your content. Regularly audit your index, eliminate noise, and strengthen internal architecture to guide crawl toward your strategic pages.

These optimizations touch on technical aspects (crawl budget, architecture) and editorial ones (quality, relevance) that can be complex to orchestrate, especially on large sites. If you notice persistent indexation issues or want to structure an ambitious overhaul, bringing in a specialized SEO agency can save you months of iteration and avoid costly mistakes.

❓ Frequently Asked Questions

How long does it take for Google to index a new page?

Why are some of my pages "Crawled, currently not indexed"?

Should you delete non-indexed pages to improve crawl budget?

Does submitting a URL through Search Console guarantee that it gets indexed?

Does a site with many pages have an SEO advantage?

Other SEO insights extracted from this same Google Search Central video · published on 12/04/2023
