Official statement

Other statements from this video (16)

- Does Google really give the same weight to all your backlinks?

- Does the placement of internal links really affect SEO?

- Does Google really classify sites into fixed categories?

- Does NAP consistency really affect local rankings, or only the Knowledge Graph?

- How can you prevent Google from getting confused by conflicting information between your site and your Business Profile?

- Are reciprocal links really risk-free for your SEO?

- Does keyword frequency really influence Google rankings?

- Do you really need to clean up ALL hacked pages, or can you let Google sort them out?

- Why does Google refuse to index part of your site even when it is technically flawless?

- Do emojis in title tags and meta descriptions provide an SEO advantage?

- Why don't your FAQs appear as rich results despite correct markup?

- Should you really reuse the same URL for seasonal pages every year?

- Do Core Web Vitals really affect neither crawling nor indexing?

- Why does Google reset a site's evaluation when migrating from a subdomain to the main domain?

- Does the .edu TLD really boost your rankings?

- Can geo-redirects really block the indexing of your content?

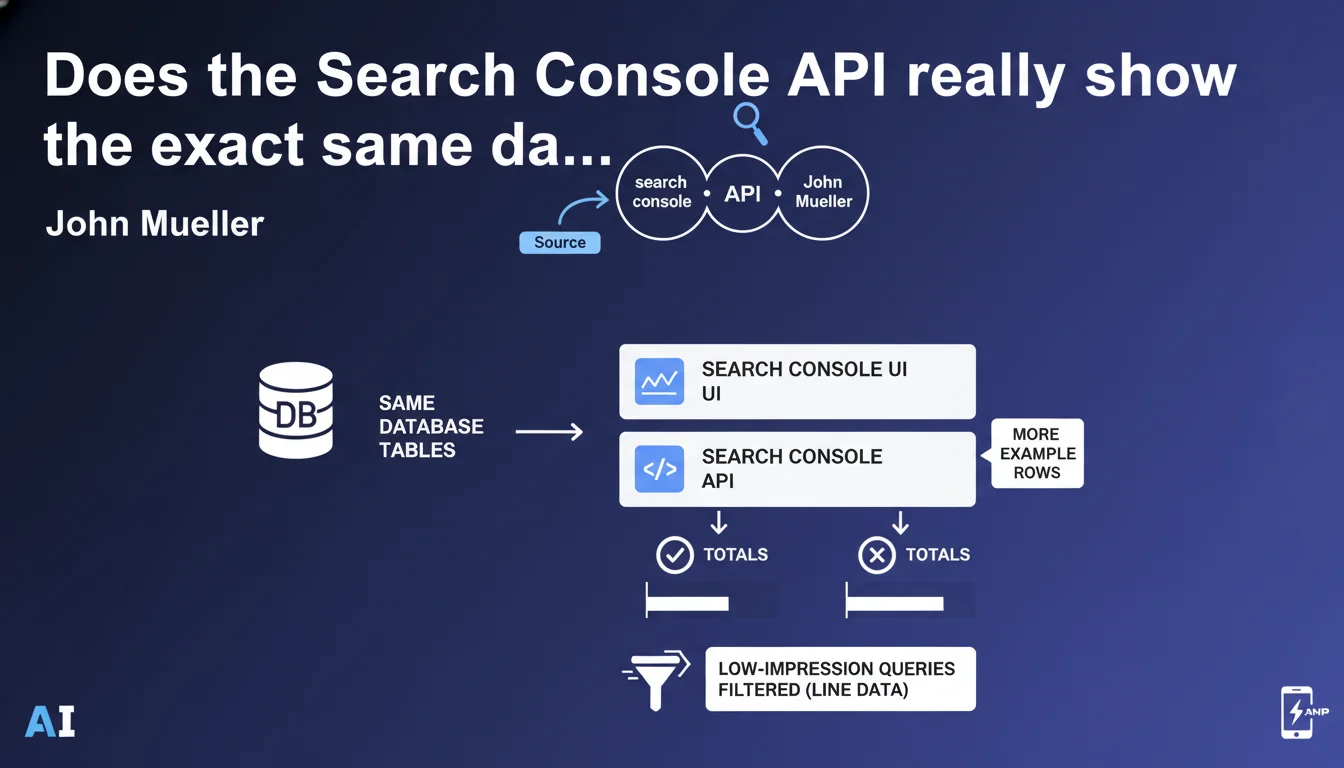

The Search Console API and the user interface pull from the same database tables. The main difference is that the API lets you extract more example rows. Totals can still differ because some low-impression queries are filtered out of the detailed row data. This explains why your API exports don't always match what you see in the UI.

What you need to understand

Why is Google making this clarification?

Many SEO practitioners have noticed numerical discrepancies between data exported via the Search Console API and data displayed in the web interface. Google is addressing this confusion head-on.

The database is unified. Both channels query the same tables. There's no double counting, no alternative data source. The divergence comes from the processing applied when displaying detailed line items.

What's the concrete difference between API and UI?

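The API can return far more rows. A single request accepts a rowLimit of up to 25,000, and paginating with startRow lets you page through larger result sets. This is still subject to Search Console's documented data limits, which are on the order of 50,000 rows per day and search type. The web interface, by contrast, displays and exports at most 1,000 rows.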
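Here is a minimal Python sketch of the pagination mechanics. It assumes the Search Analytics request fields rowLimit (documented maximum of 25,000 per request) and startRow. The names fetch_page and fetch_all_rows are hypothetical. fetch_page stands in for whatever performs the real call, for example a wrapper around service.searchanalytics().query(siteUrl=..., body=body).execute() from google-api-python-client.

```python
from typing import Any, Callable, Dict, List

# Hypothetical callable: sends one Search Analytics request body and returns
# the decoded JSON response, which may or may not contain a "rows" list.
FetchPage = Callable[[Dict[str, Any]], Dict[str, Any]]

MAX_ROW_LIMIT = 25_000  # documented per-request maximum for rowLimit


def fetch_all_rows(fetch_page: FetchPage, base_body: Dict[str, Any],
                   max_rows: int = 50_000,
                   page_size: int = MAX_ROW_LIMIT) -> List[Dict[str, Any]]:
    """Page through results with startRow until a short page or max_rows."""
    # Clamp the page size to the API maximum and to at least one row.
    page_size = max(1, min(page_size, MAX_ROW_LIMIT))
    rows: List[Dict[str, Any]] = []
    start_row = 0
    while start_row < max_rows:
        # Build a fresh body per page so the caller's dict is never mutated.
        limit = min(page_size, max_rows - start_row)
        body = dict(base_body, rowLimit=limit, startRow=start_row)
        page = fetch_page(body).get("rows", [])
        rows.extend(page)
        if len(page) < limit:
            break  # short (or empty) page: nothing left to fetch
        start_row += len(page)
    return rows
```

The loop stops on the first short page, which avoids an extra empty request. max_rows is an assumed safety cap for this sketch, not an API parameter.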

Note, however, that some queries generate very few impressions. Google filters these rare queries out of the detailed rows. This filtering doesn't affect aggregated totals, but it does create row-by-row discrepancies.

How should you interpret totals that differ?

The totals displayed at the top of the interface (total clicks, total impressions) include all data, including rows filtered from detailed breakdowns. This is why the sum of exported rows can be lower than the global total.
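The arithmetic behind this gap is simple. The sketch below assumes that totals come from a request without the query dimension (or from the UI summary cards) and that rows come from a request grouped by query. hidden_share is a hypothetical helper name, and the figures are illustrative, not real data.

```python
from typing import Dict, Iterable, Mapping


def hidden_share(totals: Mapping[str, float],
                 rows: Iterable[Mapping[str, float]]) -> Dict[str, float]:
    """Fraction of each metric counted in the total but absent from rows."""
    rows = list(rows)  # materialize so the rows can be summed once per metric
    shares: Dict[str, float] = {}
    for metric in ("clicks", "impressions"):
        total = totals.get(metric, 0)
        visible = sum(row.get(metric, 0) for row in rows)
        # Guard against an empty period to avoid dividing by zero.
        shares[metric] = (total - visible) / total if total else 0.0
    return shares


if __name__ == "__main__":
    totals = {"clicks": 1200, "impressions": 48000}  # illustrative figures
    rows = [{"clicks": 1000, "impressions": 30000},
            {"clicks": 140, "impressions": 10800}]
    print(hidden_share(totals, rows))  # {'clicks': 0.05, 'impressions': 0.15}
```

In this example, 5% of clicks and 15% of impressions are counted in the totals but do not appear in any exported row.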

This filtering is documented. Google's Search Console help refers to these very rare queries as anonymized queries and omits them from query-level rows to protect user privacy. It does not publish an exact cutoff.

- API and UI data come from exactly the same database tables

- The API returns up to 25,000 rows per request, and more with pagination, versus 1,000 in the web interface

- Certain queries with very low impressions are filtered from detailed line data

- Aggregated totals include all data, even rows filtered from the detailed breakdown

- This difference explains why sum(rows) ≠ global total in some cases

SEO Expert opinion

Does this statement really clarify the issue?

Yes and no. It confirms what many suspected: there is a single data source. That settles the debate about possible double counting or contradictory data between channels.

But it remains vague about the exact filtering threshold: at what impression count is a row retained? Google doesn't say. [To verify] Based on field observations, this threshold appears to vary and may depend on the site's overall data volume.

What are the implications for your long-tail analysis?

Filtering low-impression queries poses a real problem for anyone wanting to analyze the long tail in depth. These ultra-niche queries, often with 1-3 impressions, disappear from exports.

Concretely: if you're trying to identify all queries generating traffic, even marginal traffic, you're working with partially truncated data. The API provides more rows, sure, but the floor remains opaque.

Is this logic consistent with observed practices?

Yes. Discrepancies between the row sum and the global total have been documented for years. Google is now confirming that this behavior is deliberate: detailed rows are filtered rather than exhaustive.

Be careful, however: some third-party tools built on the API display totals that differ from the interface, which confuses clients. Realistically, you need to explain this nuance in every report presentation.

Practical impact and recommendations

What should you concretely do with this information?

Prioritize the API for bulk exports when you need depth: up to 25,000 rows per request, and more with pagination, versus 1,000 in the UI. But keep in mind that even the API filters out ultra-marginal queries.

In your performance analyses, always rely on aggregated totals rather than the sum of exported rows. The global total is the most complete figure available.

How do you avoid interpretation errors?

Never directly compare the sum of API rows with a UI total without checking whether data has been filtered. This classic mistake distorts variance analyses over time.
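A quick worked example shows why. The sketch below uses hypothetical helper names and illustrative figures. It compares two periods whose totals are flat but whose row coverage drops. The sum of rows suggests a decline that the totals do not confirm.

```python
from typing import Dict, Iterable, Mapping


def pct_change(before: float, after: float) -> float:
    """Relative change in percent; a zero baseline is rejected."""
    if before == 0:
        raise ValueError("baseline is zero")
    return (after - before) / before * 100


def compare_periods(total_a: float, rows_a: Iterable[Mapping[str, float]],
                    total_b: float, rows_b: Iterable[Mapping[str, float]],
                    metric: str = "impressions") -> Dict[str, float]:
    """Contrast the total-based trend with the (misleading) row-sum trend."""
    sum_a = sum(row[metric] for row in rows_a)
    sum_b = sum(row[metric] for row in rows_b)
    return {
        "change_from_totals": pct_change(total_a, total_b),
        "change_from_row_sums": pct_change(sum_a, sum_b),
        "coverage_a": sum_a / total_a,  # share of the total visible in rows
        "coverage_b": sum_b / total_b,
    }


if __name__ == "__main__":
    # Illustrative figures: flat totals, fewer impressions visible in rows.
    print(compare_periods(10_000, [{"impressions": 8_000}],
                          10_000, [{"impressions": 7_000}]))
    # → change_from_totals 0.0, change_from_row_sums -12.5,
    #   coverage_a 0.8, coverage_b 0.7
```

Reporting coverage_a and coverage_b next to the trend shows when a change comes from filtering rather than from real traffic.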

If you're building automated dashboards, add an alert that fires when the gap between sum(rows) and the total exceeds a set threshold. A gap well above its usual level points to an unusual volume of filtered queries, which may indicate highly dispersed long-tail traffic.
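Here is a minimal sketch of such an alert. It measures the gap on impressions and defines "unusual" with a hypothetical rule: more than 10 percentage points above the median of past gaps, or above a fixed 30% floor when no history exists yet. Both thresholds are placeholders to tune on your own data; Google does not publish such values. The function names are also hypothetical.

```python
from statistics import median
from typing import Iterable, Mapping, Sequence


def hidden_ratio(total: float, rows: Iterable[Mapping[str, float]],
                 metric: str = "impressions") -> float:
    """Fraction of the total metric that does not appear in the detail rows."""
    visible = sum(row.get(metric, 0) for row in rows)
    return (total - visible) / total if total else 0.0


def gap_alert(current: float, history: Sequence[float],
              tolerance: float = 0.10, floor: float = 0.30) -> bool:
    """Hypothetical rule: flag a gap well above its usual level."""
    if not history:
        # No baseline yet: fall back to a fixed ceiling.
        return current > floor
    # The median is robust to an occasional outlier day in the history.
    return current > median(history) + tolerance


if __name__ == "__main__":
    past_gaps = [0.15, 0.18, 0.20]  # illustrative history
    today = hidden_ratio(1_000, [{"impressions": 600}])  # 0.4
    if gap_alert(today, past_gaps):
        print(f"Gap alert: {today:.0%} of impressions missing from rows")
```

A relative baseline tolerates sites whose long tail is naturally large, whereas a single fixed threshold would keep firing for them.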

What tools or processes should you implement?

Automate your API exports with a script that retrieves global totals in parallel with detailed rows. Store both in your database to maintain historical consistency.
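One way to keep both series side by side is sketched below with Python's built-in sqlite3 module. The table names, columns, helper names, and one-row-per-day layout are assumptions for illustration. Rows are expected in the API's shape, with the query string in keys[0] when the request is grouped by the query dimension.

```python
import sqlite3
from typing import Iterable, Mapping, Optional, Tuple

# Hypothetical schema: one totals row per day, one detail row per (day, query).
SCHEMA = """
CREATE TABLE IF NOT EXISTS gsc_totals (
    day TEXT PRIMARY KEY, clicks INTEGER, impressions INTEGER);
CREATE TABLE IF NOT EXISTS gsc_rows (
    day TEXT, query TEXT, clicks INTEGER, impressions INTEGER,
    PRIMARY KEY (day, query));
"""


def store_day(conn: sqlite3.Connection, day: str,
              totals: Mapping[str, int], rows: Iterable[Mapping]) -> None:
    """Write a day's totals and detail rows in a single transaction."""
    with conn:  # commits on success, rolls back if anything raises
        conn.execute("INSERT OR REPLACE INTO gsc_totals VALUES (?, ?, ?)",
                     (day, totals["clicks"], totals["impressions"]))
        conn.executemany(
            "INSERT OR REPLACE INTO gsc_rows VALUES (?, ?, ?, ?)",
            [(day, r["keys"][0], r["clicks"], r["impressions"]) for r in rows])


def coverage(conn: sqlite3.Connection, day: str) -> Tuple[int, Optional[int]]:
    """Return (sum of row impressions, stored total impressions) for a day."""
    (visible,) = conn.execute(
        "SELECT COALESCE(SUM(impressions), 0) FROM gsc_rows WHERE day = ?",
        (day,)).fetchone()
    total = conn.execute(
        "SELECT impressions FROM gsc_totals WHERE day = ?", (day,)).fetchone()
    return visible, total[0] if total else None


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    store_day(conn, "2022-01-30", {"clicks": 50, "impressions": 2000},
              [{"keys": ["query a"], "clicks": 30, "impressions": 1200},
               {"keys": ["query b"], "clicks": 15, "impressions": 500}])
    print(coverage(conn, "2022-01-30"))  # (1700, 2000)
```

Writing both tables in one transaction means a failed export never leaves rows without their matching total, which keeps the history consistent.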

Document this methodological difference in your client reports. A simple footnote explaining Google's filtering is enough to prevent misunderstandings and strengthens your technical credibility.

- Use the Search Console API to go beyond the interface's 1,000-row cap (up to 25,000 rows per request, with pagination for more)

- Base your KPIs on aggregated totals, not the sum of detailed rows

- Store global totals and detailed rows in parallel in your analysis tools

- Document Google's filtering in your reports to anticipate client questions

- Set up alerts for when the sum/total gap rises well above its usual level

- Explain this technical nuance during SEO performance presentations

❓ Frequently Asked Questions

Is the Search Console API more reliable than the web interface?

Why doesn't the sum of my exported rows match the displayed total?

Can you retrieve all queries without filtering?

Which channel should you use for SEO analyses: the API or the interface?

Are third-party tools that use the API reliable?

🎥 From the same video (16)

Other SEO insights extracted from this same Google Search Central video · published on 30/01/2022
