Official statement

Other statements from this video (13)

- Does Google really give new home pages preferential treatment?

- Does Google really prioritize high-quality pages when crawling?

- Is Googlebot really stupid, or is Google hiding something?

- Does a page's quality really determine how subsequent pages are crawled?

- Can Google really penalize certain sections of your site based on their quality?

- Should you really move low-quality UGC to improve crawling?

- Does update frequency really influence how your pages are crawled?

- Does Google really filter certain topics during crawling and indexing?

- Why does Google refuse to index content it has already crawled?

- Is duplicate content really harmless for your SEO?

- Can affiliate links coexist with a quality SEO strategy?

- Should you really have your machine translations reviewed by humans?

- Why does Google favor links from "normal sites" to assess your importance?

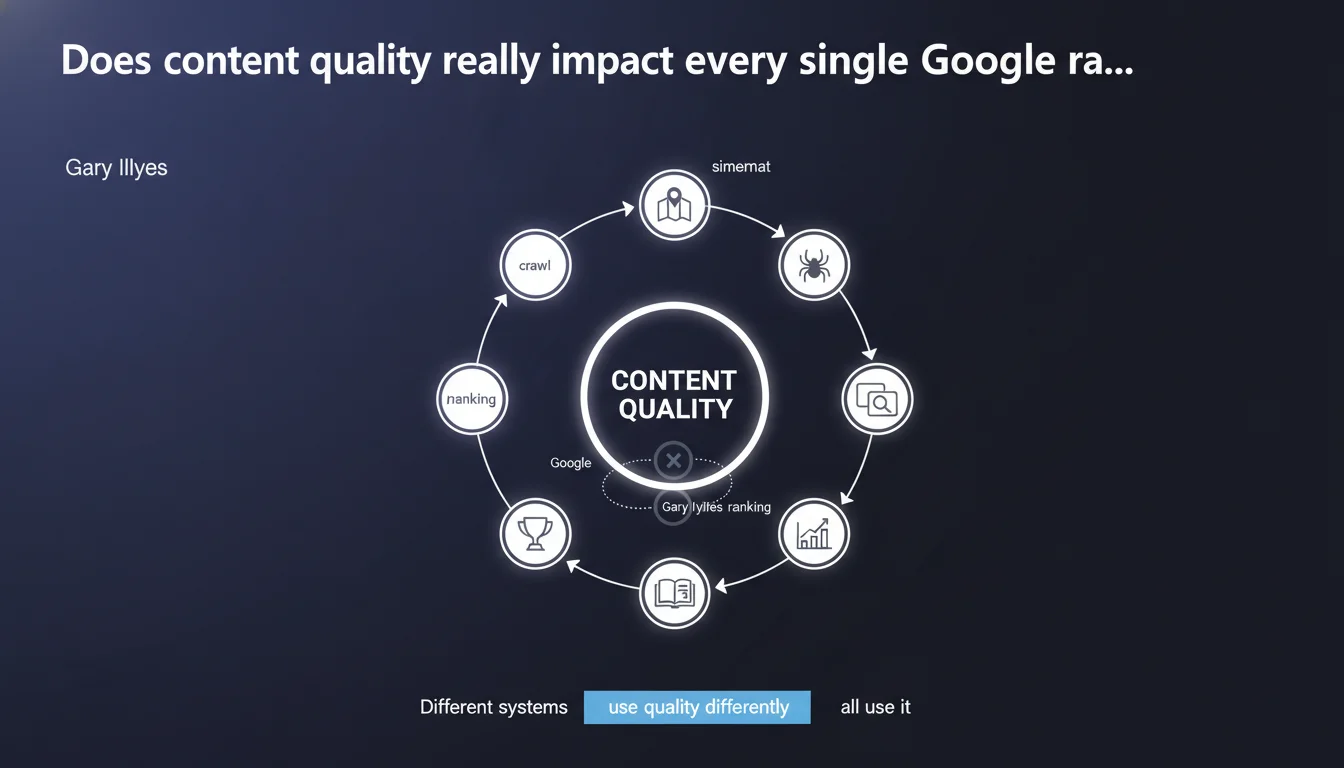

Gary Illyes states that content quality affects all of Google's search systems, from sitemaps and crawling to indexing, index selection, and ranking itself. Each system uses this quality signal differently, but none ignores it. For SEO practitioners, this means that low-quality content can be held back long before it ever reaches the search results pages.

What you need to understand

When Google talks about "quality," what exactly do they mean?

Gary Illyes's statement deliberately remains vague about how content quality is defined. We understand it to be a composite signal — not a single metric — that cuts across all technical layers of Google search.

What stands out here is the breadth of the scope: sitemaps, crawling, URL prioritization, indexing, index selection, and finally ranking. In other words, content deemed low-quality can be disadvantaged from the very first stages of the pipeline, long before ranking even comes into play.

Why does this statement matter for SEO professionals?

Because it challenges the common assumption that quality only matters at ranking time. If content is judged weak upstream, it may never be indexed, or it may be relegated to a rarely consulted secondary index.

Concretely, this explains why some sites see their pages crawled but never ranked, or why similar, technically optimized pages receive radically different treatment. The quality signal acts as a multi-level filter.

Which search systems are affected?

- Sitemaps: Google may prioritize crawling URLs from sitemaps based on the site's historical quality.

- Crawling: Crawl resources (crawl budget) are allocated differently based on perceived content quality.

- URL prioritization: The sorting and prioritization of URLs for indexing also depends on this signal.

- Indexing: A page can be crawled but excluded from the main index if deemed low-quality.

- Index selection: Google maintains multiple indexes (primary, secondary), and quality determines where a page lands.

- Ranking: Finally, quality plays a role in ranking algorithms, which isn't new but is now confirmed as part of a broader continuum.
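To make the "multi-level filter" idea concrete, here is a toy model in Python. It is purely illustrative: Google has not published how quality is computed or applied at each stage, so the single `quality` score, the stage names, and every threshold below are hypothetical assumptions. The only point it demonstrates is that a page filtered at an early stage never reaches ranking at all.

```python
from dataclasses import dataclass

@dataclass
class Page:
    url: str
    quality: float  # hypothetical composite quality score in [0, 1]

# Hypothetical minimum score needed to pass each stage (not Google's values).
STAGE_THRESHOLDS = [
    ("crawled", 0.2),
    ("indexed", 0.4),
    ("primary_index", 0.6),
]

def furthest_stage(page: Page) -> str:
    """Return the last pipeline stage this page clears in the toy model."""
    reached = "discovered"
    for stage, threshold in STAGE_THRESHOLDS:
        if page.quality < threshold:
            break  # filtered out here; later stages never see the page
        reached = stage
    return reached

def rank(pages: list[Page]) -> list[str]:
    """Rank only pages that reached the primary index.

    Quality is the sole ranking factor here purely for illustration;
    real ranking combines many signals.
    """
    eligible = [p for p in pages if furthest_stage(p) == "primary_index"]
    eligible.sort(key=lambda p: p.quality, reverse=True)
    return [p.url for p in eligible]

pages = [Page("/a", 0.9), Page("/b", 0.5), Page("/c", 0.1), Page("/d", 0.7)]
for p in pages:
    print(p.url, furthest_stage(p))
print(rank(pages))
```

In this toy run, `/b` is crawled and indexed but never ranked, and `/c` is never crawled, which mirrors the "crawled but never ranked" pattern described above.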

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, but with important nuances. In practice, we do observe that some low-quality sites experience crawl budget collapse with no apparent technical reason. Similarly, technically optimized content can remain invisible in the SERPs if the domain has accumulated low-quality signals.
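One way to spot the crawl budget collapse described above is to count Googlebot requests per day in your server access logs. The sketch below assumes logs in the common Apache/Nginx "combined" format and filters on the user-agent string only; because that string can be spoofed, a production version should also verify Googlebot via reverse DNS, as Google recommends. The seven-day baseline, the 50% drop ratio, and the helper names are illustrative assumptions.

```python
import re
from collections import Counter
from datetime import date, datetime, timedelta

# Captures the date and the user agent from a "combined" format log line.
LOG_RE = re.compile(
    r'\[(\d{2}/\w{3}/\d{4}):[^\]]*\] "[^"]*" \d{3} \S+ "[^"]*" "([^"]*)"'
)

def googlebot_hits_per_day(lines) -> Counter:
    """Count requests per day whose user agent claims to be Googlebot."""
    hits: Counter = Counter()
    for line in lines:
        m = LOG_RE.search(line)
        if m and "Googlebot" in m.group(2):
            day = datetime.strptime(m.group(1), "%d/%b/%Y").date()
            hits[day] += 1
    return hits

def crawl_drop_days(hits: Counter, baseline_days: int = 7,
                    drop_ratio: float = 0.5) -> list[date]:
    """Flag days whose hits fall below drop_ratio x the trailing average.

    Days with no Googlebot requests between the first and last logged
    hit count as zero (a Counter returns 0 for missing keys).
    """
    if not hits:
        return []
    start, end = min(hits), max(hits)
    days = [start + timedelta(days=n) for n in range((end - start).days + 1)]
    flagged = []
    for i in range(baseline_days, len(days)):
        baseline = sum(hits[d] for d in days[i - baseline_days:i]) / baseline_days
        if hits[days[i]] < drop_ratio * baseline:
            flagged.append(days[i])
    return flagged
```

Any iterable of lines works as input, so an open log file can be passed directly to `googlebot_hits_per_day`, and its result to `crawl_drop_days`.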

That said, Gary Illyes's statement remains vague on one crucial point: how is this quality measured at each step? Is it the same algorithm everywhere? Different signals depending on the layer? [To verify] — Google provides no concrete data to allow practitioners to diagnose precisely where their content is filtered.

What are the limitations of this claim?

First limitation: the circularity of the reasoning. If quality affects crawling, indexing, and ranking, how can a new site — without history — be evaluated before it's even crawled? Google must necessarily use external or contextual signals, but which ones? Backlinks? Perceived domain authority? Topic relevance?

Second limitation: not all content is equal. An e-commerce site with thousands of similar product pages may be penalized for "low quality" even though it perfectly meets user expectations. Conversely, a news site can publish quick, shallow content that gets indexed and ranked without issue — because freshness takes priority over depth.

Should you rethink your SEO priorities given this statement?

Yes, but without over-optimizing. The main takeaway is that isolated work on on-page or internal linking isn't enough if the content itself is judged as weak. You need to go upstream: what real value does each page provide? Can it be consolidated, removed, or improved?

But let's be realistic: you can't directly measure this "quality signal" used by Google. You observe its effects — reduced crawl, non-indexation, ranking drops — but you can't fix it with certainty. What you can do is strengthen known signals: E-E-A-T, user engagement, content depth, regular updates. The rest involves field experimentation.

Practical impact and recommendations

What should you concretely do to improve Google's perceived quality?

First action: audit all existing content. In Google Search Console, use the Page indexing report to find pages marked "Crawled - currently not indexed", and the Performance report to find indexed pages that never earn impressions. These are your first suspects. Ask yourself: do these pages offer unique value, or are they redundant variations?

Second action: consolidate or remove weak content. If you have hundreds of low-traffic pages with few backlinks and no engagement, consider merging them into more comprehensive content or deindexing them with a noindex directive. Do not rely on robots.txt for this: it blocks crawling rather than indexing, and a URL blocked there can stay indexed because Google never fetches the page to see its noindex. A site with 500 high-quality pages is likely to be treated better than one with 5,000 mediocre pages.
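If you export the Page indexing report and the Performance report from Search Console and merge them per URL, this first-pass triage can be scripted. The record layout (`url`, `status`, `impressions`) is a hypothetical structure, not an official export schema, and the "Indexed" label is a placeholder; check the exact status wording in your own export.

```python
from dataclasses import dataclass

@dataclass
class UrlRecord:
    url: str
    status: str       # indexing status label from the Page indexing report
    impressions: int  # impressions over the chosen period (Performance report)

CRAWLED_NOT_INDEXED = "Crawled - currently not indexed"
INDEXED = "Indexed"  # hypothetical label for indexed URLs in the merged data

def first_suspects(records: list[UrlRecord]) -> dict[str, list[str]]:
    """Group URLs into the two suspect buckets: not indexed, or invisible."""
    suspects: dict[str, list[str]] = {
        "crawled_not_indexed": [],
        "indexed_no_impressions": [],
    }
    for r in records:
        if r.status == CRAWLED_NOT_INDEXED:
            suspects["crawled_not_indexed"].append(r.url)
        elif r.status == INDEXED and r.impressions == 0:
            suspects["indexed_no_impressions"].append(r.url)
    return suspects

records = [
    UrlRecord("/guide", INDEXED, 1200),
    UrlRecord("/tag/blue", CRAWLED_NOT_INDEXED, 0),
    UrlRecord("/old-news", INDEXED, 0),
]
print(first_suspects(records))
```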
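The consolidate-or-deindex decision can be written as a simple rule. In the sketch below, the thresholds (fewer than 10 organic visits over 90 days, no referring domains, zero engaged sessions), the field names, and the `stronger_page` field (a better page on the same topic to merge into) are hypothetical placeholders to tune for your own site. Because a URL blocked in robots.txt is never fetched, its noindex would never be seen, so the sketch also checks each noindex candidate with Python's standard `urllib.robotparser`; `https://example.com` stands in for your own domain.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.robotparser import RobotFileParser

@dataclass
class PageStats:
    url: str                 # path, e.g. "/old-promo"
    visits_90d: int          # organic visits over the last 90 days
    referring_domains: int   # distinct domains linking to the page
    engaged_sessions: int    # sessions with meaningful interaction
    stronger_page: Optional[str] = None  # hypothetical: better page on the same topic

MAX_VISITS = 10  # placeholder threshold; tune to your site

def is_weak(p: PageStats) -> bool:
    """Low traffic, no backlinks, and no engagement."""
    return (p.visits_90d < MAX_VISITS
            and p.referring_domains == 0
            and p.engaged_sessions == 0)

def plan(pages: list[PageStats], robots_lines: list[str],
         site: str = "https://example.com") -> dict[str, str]:
    """Suggest an action for each weak page."""
    robots = RobotFileParser()
    robots.parse(robots_lines)
    actions = {}
    for p in pages:
        if not is_weak(p):
            continue
        if p.stronger_page:
            actions[p.url] = f"merge into {p.stronger_page} (301)"
        elif robots.can_fetch("Googlebot", site + p.url):
            actions[p.url] = "noindex"
        else:
            # Googlebot cannot fetch this URL, so it would never see a noindex.
            actions[p.url] = "noindex, then lift robots.txt block"
    return actions

robots_txt = ["User-agent: *", "Disallow: /tag/"]
pages = [
    PageStats("/guide", 500, 12, 300),
    PageStats("/old-promo", 2, 0, 0, stronger_page="/promos"),
    PageStats("/thin-note", 0, 0, 0),
    PageStats("/tag/blue", 3, 0, 0),
]
print(plan(pages, robots_txt))
```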

Third action: strengthen E-E-A-T signals on strategic pages. Add identified authors, credible sources, and regular updates. Google doesn't reveal how it measures quality, but these are the criteria its Search Quality Rater Guidelines have emphasized for years.
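Part of this step can be checked mechanically: whether strategic pages declare an author, a modification date, and cited sources. The sketch below reads schema.org `Article`-type JSON-LD and reports which of `author`, `dateModified`, and `citation` are missing. Treat it only as an editorial completeness check under that assumption: Google has not said that this markup is how it evaluates E-E-A-T, and a page without markup may still show these elements on-page.

```python
import json
from html.parser import HTMLParser

class JsonLdExtractor(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> element."""

    def __init__(self) -> None:
        super().__init__()
        self._inside = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._inside = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self.blocks[-1] += data  # data may arrive in several chunks

ARTICLE_TYPES = {"Article", "NewsArticle", "BlogPosting"}
EXPECTED_FIELDS = ("author", "dateModified", "citation")

def missing_eeat_fields(html: str) -> list[str]:
    """List which expected fields the first Article-type item lacks."""
    parser = JsonLdExtractor()
    parser.feed(html)
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # skip malformed markup
        # JSON-LD may be a single object, a list, or an object with "@graph".
        items = data.get("@graph", [data]) if isinstance(data, dict) else data
        for item in items:
            if not isinstance(item, dict):
                continue
            t = item.get("@type", [])
            types = set(t) if isinstance(t, list) else {t}
            if types & ARTICLE_TYPES:
                return [f for f in EXPECTED_FIELDS if not item.get(f)]
    return list(EXPECTED_FIELDS)  # no Article markup found at all
```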

Which mistakes must you absolutely avoid?

- Don't assume technically perfect content (tags, structure, speed) will automatically rank well if editorial quality is weak.

- Don't publish in bulk without qualitative evaluation — better 10 solid pages monthly than 100 average ones.

- Don't ignore "zombie" pages (crawled but invisible): they consume crawl budget and dilute your site's overall quality signal.

- Don't focus solely on final ranking — if your content is filtered upstream (crawl, indexing), you'll never see the problem in the SERPs.

- Don't neglect actual user experience: time spent, bounce rate, and interactions are useful proxies for quality, and Google may pick up related signals indirectly.
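The "zombie page" point can be quantified by combining Googlebot hits per URL from your server logs with the URLs Search Console reports as crawled but not indexed. The sketch below computes the share of Googlebot requests spent on such URLs and lists the most-crawled ones; the input shapes (a list of requested paths and a set of zombie paths) are assumptions, and what share counts as "too much" is a judgment call, not a published threshold.

```python
from collections import Counter

def zombie_crawl_share(googlebot_paths: list[str], zombie_urls: set[str]) -> float:
    """Fraction of Googlebot requests spent on zombie URLs."""
    if not googlebot_paths:
        return 0.0
    zombie_hits = sum(1 for path in googlebot_paths if path in zombie_urls)
    return zombie_hits / len(googlebot_paths)

def top_zombies(googlebot_paths: list[str], zombie_urls: set[str], n: int = 5):
    """Most-crawled zombie URLs, as (path, hits) pairs."""
    return Counter(p for p in googlebot_paths if p in zombie_urls).most_common(n)

paths = ["/guide"] * 6 + ["/tag/blue"] * 3 + ["/old-news"]
zombies = {"/tag/blue", "/old-news"}
print(f"{zombie_crawl_share(paths, zombies):.0%}")  # 40%
print(top_zombies(paths, zombies))  # [('/tag/blue', 3), ('/old-news', 1)]
```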

How can you verify that your site meets these quality requirements?

Use Google Search Console to monitor indexation rates. If a significant portion of your URLs are reported as "Discovered - currently not indexed" or "Crawled - currently not indexed", that's an alarm signal. Cross this data with Google Analytics to identify low-engagement pages.
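The indexation check and the cross-reference with analytics fit in a few lines. The sketch assumes you already have the Search Console status per URL and an engagement rate per URL from your analytics tool, both as plain dictionaries (a hypothetical layout); the "Indexed" label, the 20% alarm level, and the 0.3 engagement cutoff are placeholders, not Google guidance.

```python
# Labels as shown in Search Console's Page indexing report; check your export.
NOT_INDEXED = {
    "Discovered - currently not indexed",
    "Crawled - currently not indexed",
}
INDEXED = "Indexed"  # hypothetical label for indexed URLs in your merged data
ALARM_SHARE = 0.2    # placeholder alarm level, not a Google figure

def not_indexed_share(status_by_url: dict[str, str]) -> float:
    """Share of known URLs that were discovered or crawled but not indexed."""
    if not status_by_url:
        return 0.0
    stuck = sum(1 for s in status_by_url.values() if s in NOT_INDEXED)
    return stuck / len(status_by_url)

def low_engagement_indexed(status_by_url: dict[str, str],
                           engagement_by_url: dict[str, float],
                           cutoff: float = 0.3) -> list[str]:
    """Indexed URLs whose engagement rate is below the cutoff.

    URLs missing from the analytics data are treated as zero engagement.
    """
    return sorted(
        url for url, s in status_by_url.items()
        if s == INDEXED and engagement_by_url.get(url, 0.0) < cutoff
    )

status = {
    "/a": "Indexed",
    "/b": "Discovered - currently not indexed",
    "/c": "Indexed",
    "/d": "Crawled - currently not indexed",
    "/e": "Indexed",
}
engagement = {"/a": 0.65, "/c": 0.1}
share = not_indexed_share(status)
print(f"{share:.0%} not indexed", "ALARM" if share > ALARM_SHARE else "ok")
print(low_engagement_indexed(status, engagement))
```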

Run a quarterly content audit: categorize your pages by performance (traffic, conversions, backlinks) and subjective editorial quality. Prioritize improving pages with high potential but low performance, and consider removing or consolidating low-potential pages.
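The quarterly audit boils down to a two-by-two grid: potential (here, the subjective editorial rating) on one axis and measured performance on the other. The sketch below scores performance from traffic, conversions, and backlinks and takes potential as a 1-5 rating assigned by a reviewer; the weights, cutoffs, and bucket names are illustrative assumptions, including a "review" bucket for pages that perform despite a low rating and deserve a closer look before any removal.

```python
from dataclasses import dataclass

@dataclass
class AuditRow:
    url: str
    sessions: int           # organic sessions this quarter
    conversions: int
    referring_domains: int
    editorial_score: int    # 1-5, assigned by a human reviewer

def performance(row: AuditRow) -> float:
    """Illustrative weighting of traffic, conversions, and backlinks."""
    return row.sessions + 50 * row.conversions + 20 * row.referring_domains

def bucket(row: AuditRow, perf_cutoff: float = 500,
           potential_cutoff: int = 3) -> str:
    """Place a page in one cell of the potential x performance grid."""
    high_perf = performance(row) >= perf_cutoff
    high_potential = row.editorial_score >= potential_cutoff
    if high_potential:
        return "maintain" if high_perf else "improve first"
    return "review" if high_perf else "consolidate or remove"

rows = [
    AuditRow("/pillar", 2000, 30, 40, 5),
    AuditRow("/how-to", 120, 1, 2, 4),
    AuditRow("/promo-2019", 15, 0, 0, 1),
    AuditRow("/viral", 900, 0, 0, 2),
]
for r in rows:
    print(r.url, bucket(r))
```

The "improve first" cell corresponds to the pages the audit tells you to prioritize; "consolidate or remove" feeds the merge-or-noindex decision.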

❓ Frequently Asked Questions

Can content quality really affect crawl budget?

Does Google use the same quality algorithm at every stage?

Can a technically perfect page be set aside for low quality?

How can I tell whether Google considers my content low quality?

Should you delete low-traffic pages to improve the site's overall quality?

🎥 From the same video (13)

Other SEO insights extracted from this same Google Search Central video · published on 19/09/2023

