Official statement

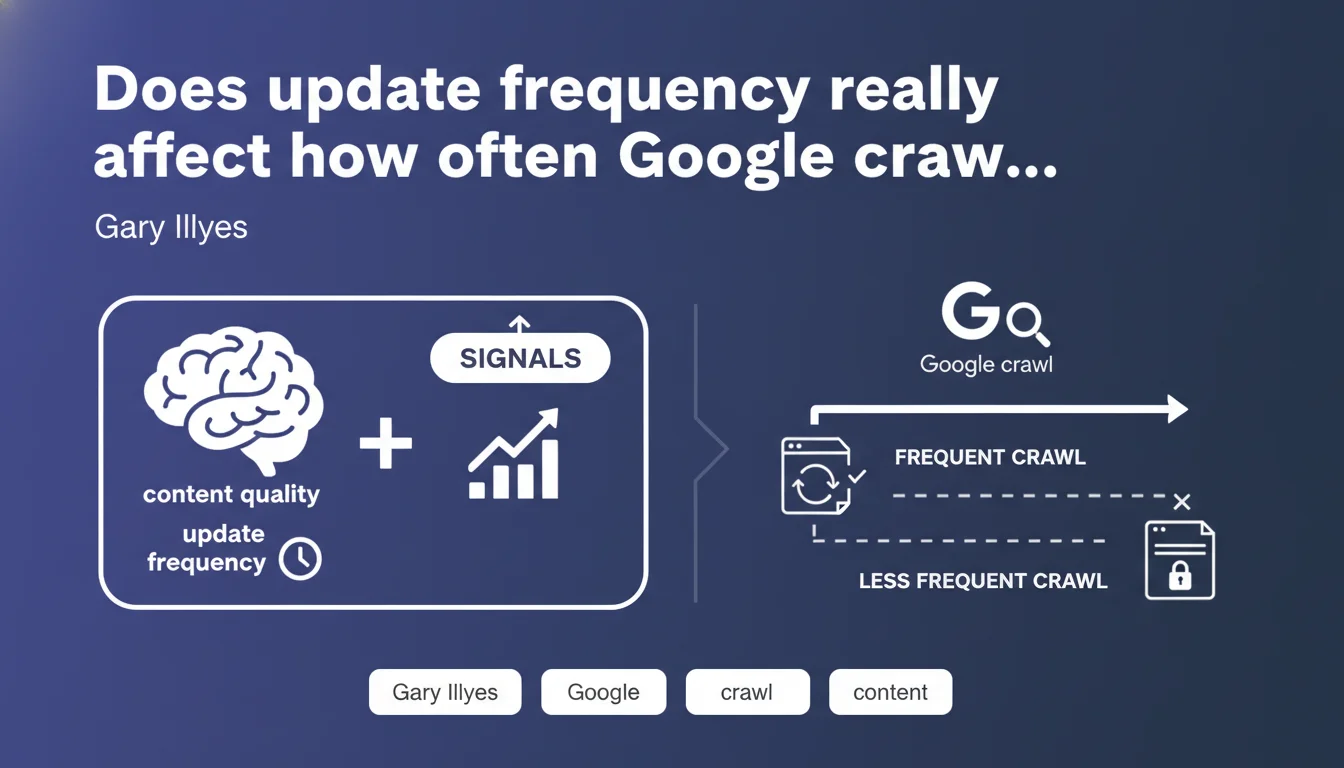

Google uses two criteria to decide crawl frequency: content quality (the priority signal) and how often pages change. Static pages like legal notices are naturally crawled less frequently. The takeaway: high-quality content remains the dominant factor, but keeping your important pages updated can accelerate their crawl rate.

What you need to understand

What are the two signals that drive crawl frequency?

Google clearly distinguishes content quality as the primary signal and update frequency as the secondary signal. This hierarchy matters: it means a mediocre page updated daily won't necessarily be crawled more often than exceptional but static content.

Update frequency acts as a potential interest indicator — if a page changes regularly, Googlebot anticipates it deserves revisiting. But without quality, this signal loses its weight.

Why are legal pages crawled less frequently?

Gary Illyes's example is telling: inherently static pages (terms of service, legal notices, privacy policies) rarely change and typically don't offer differentiated SEO value. Google therefore optimizes its crawl budget by visiting them less often.

Concretely? If your "Legal Notice" page hasn't been updated in 18 months, Googlebot might visit every 2-3 months instead of weekly. It's rational and frees up crawl budget for more strategic URLs.

How does this logic apply to dynamic sites?

On an e-commerce site where product pages change (inventory, pricing, customer reviews), Google naturally adjusts crawl frequency upward on these URLs. On a blog where you publish weekly, recent pages are crawled more often than old, static archives.

- Quality takes priority: mediocre content updated daily won't gain crawl frequency if Google judges it to be of low relevance

- Update frequency influences crawl, but remains a secondary signal — it never compensates for quality deficits

- Static pages (legal, institutional) are naturally deprioritized in the crawl schedule

- Crawl budget optimization therefore relies on two approaches: improve quality and update strategic pages

SEO Expert opinion

Does this statement align with real-world observations?

Yes, and that's rare enough to highlight. Log audits consistently show that Googlebot focuses its visits on changing, quality URLs: high-traffic product pages, recently published well-ranked articles, regularly updated categories.

Conversely, "utility" pages with few links and rare updates (aging FAQs, legal notice pages, old archives) are indeed crawled much less frequently — sometimes once per quarter on large sites. The quality > frequency hierarchy holds up in practice.
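To check this pattern on your own site, a log audit can be sketched in a few lines. The example below is a minimal sketch, assuming access logs in the Apache/Nginx "combined" format; the sample lines, IP addresses, and URLs are made up for illustration, and the helper names (`googlebot_visits`, `mean_interval_days`) are hypothetical.

```python
import re
from collections import defaultdict
from datetime import datetime

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (X11; Linux x86_64)"


def _line(ip, ts, path, ua):
    # Builds one made-up log line in "combined" format.
    return f'{ip} - - [{ts} +0000] "GET {path} HTTP/1.1" 200 5120 "-" "{ua}"'


# Hypothetical sample data, not real traffic.
SAMPLE_LOG = "\n".join([
    _line("66.249.66.1", "01/Sep/2023:08:00:00", "/products/blue-widget", GOOGLEBOT_UA),
    _line("66.249.66.1", "03/Sep/2023:08:00:00", "/products/blue-widget", GOOGLEBOT_UA),
    _line("66.249.66.1", "05/Sep/2023:08:00:00", "/products/blue-widget", GOOGLEBOT_UA),
    _line("66.249.66.1", "01/Jun/2023:09:00:00", "/legal-notice", GOOGLEBOT_UA),
    _line("66.249.66.1", "30/Aug/2023:09:00:00", "/legal-notice", GOOGLEBOT_UA),
    _line("203.0.113.7", "02/Sep/2023:10:00:00", "/legal-notice", BROWSER_UA),
])

LINE_RE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def googlebot_visits(log_text):
    """Map each URL path to the timestamps of requests whose user agent mentions Googlebot.

    The user-agent string can be spoofed; a real audit should also verify
    the requesting IP (for example with a reverse DNS lookup).
    """
    visits = defaultdict(list)
    for line in log_text.splitlines():
        match = LINE_RE.search(line)
        if match and "Googlebot" in match.group("ua"):
            stamp = datetime.strptime(match.group("ts"), "%d/%b/%Y:%H:%M:%S %z")
            visits[match.group("path")].append(stamp)
    return visits


def mean_interval_days(timestamps):
    """Average number of days between consecutive visits, or None with fewer than two visits."""
    if len(timestamps) < 2:
        return None
    ordered = sorted(timestamps)
    span_days = (ordered[-1] - ordered[0]).total_seconds() / 86400
    return span_days / (len(ordered) - 1)


if __name__ == "__main__":
    for path, stamps in sorted(googlebot_visits(SAMPLE_LOG).items()):
        print(path, len(stamps), mean_interval_days(stamps))
```

On the sample data, the product page averages 2.0 days between Googlebot visits and the legal notice 90.0 days. Real logs will be noisier, so treat the averages as a rough indicator rather than a measurement of Google's schedule.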

What nuances should we add?

Gary Illyes doesn't specify how Google evaluates "quality" in this specific context: is it E-E-A-T, traffic, engagement, relevance signals? The exact criteria behind this quality judgment remain to be verified. We can assume a mix of signals, but no official confirmation exists.

Another point: update frequency doesn't mean "change for change's sake." Artificially adding a date or changing one word won't speed up crawling if Google detects that the substantive content remains identical. Its systems are designed to tell real changes from cosmetic ones.
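As an editorial safeguard, you can estimate whether a revision is substantive before publishing it. The sketch below is not how Google detects changes (that is not documented); it simply strips dates, compares the remaining words with Python's `difflib`, and applies a 10% threshold that is an arbitrary assumption.

```python
import re
from difflib import SequenceMatcher

# Matches dates such as 19/09/2023 or 2023-09-19 so they can be ignored.
DATE_RE = re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b|\b\d{4}-\d{2}-\d{2}\b")


def normalize(text):
    """Lowercase the text, drop dates, and collapse whitespace."""
    without_dates = DATE_RE.sub("", text.lower())
    return re.sub(r"\s+", " ", without_dates).strip()


def change_ratio(old, new):
    """Share of word-level content that differs, from 0.0 (identical) to 1.0."""
    matcher = SequenceMatcher(None, normalize(old).split(), normalize(new).split())
    return 1.0 - matcher.ratio()


def is_substantive(old, new, threshold=0.10):
    """True when the revision changes at least `threshold` of the content (assumed cutoff)."""
    return change_ratio(old, new) >= threshold


if __name__ == "__main__":
    old = "Updated 01/03/2023. Our blue widget ships in two days."
    date_only = "Updated 19/09/2023. Our blue widget ships in two days."
    rewritten = (
        "Updated 19/09/2023. Our blue widget ships in two days. "
        "It now comes with a five year warranty and free returns."
    )
    print(is_substantive(old, date_only))   # date swap only
    print(is_substantive(old, rewritten))   # new paragraph added
```

A date-only edit scores 0.0 here and is flagged as cosmetic, while the version with a new sentence passes the threshold.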

In what cases doesn't this rule fully apply?

On small sites (under 1,000 pages), crawl budget typically isn't a constraint — Google crawls the entire site regularly, including static pages. The quality/frequency hierarchy plays less of a role.

Another exception: pages manually submitted via Search Console (URL inspection) are crawled quickly regardless of their typical update frequency. It's a temporary override of the automatic system.

Practical impact and recommendations

What should you do concretely to optimize crawling?

First priority: identify your strategic pages (those generating traffic or conversions) and ensure they're regularly updated with quality content. You don't need daily changes, but monthly or quarterly refreshes depending on content type.
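A refresh schedule like this can be tracked with a small script. This is a minimal sketch under stated assumptions: the cadences in `REFRESH_DAYS`, the content types, and the page inventory are all hypothetical and should be replaced with your own.

```python
from datetime import date

# Hypothetical refresh cadences in days, per content type.
# Types without an entry (for example legal pages) are never flagged.
REFRESH_DAYS = {"product": 30, "article": 90}


def pages_due_for_refresh(pages, today):
    """Return URLs whose last update is older than the cadence for their content type.

    `pages` is an iterable of (url, content_type, last_updated) tuples.
    """
    due = []
    for url, content_type, last_updated in pages:
        limit = REFRESH_DAYS.get(content_type)
        if limit is not None and (today - last_updated).days > limit:
            due.append(url)
    return due


if __name__ == "__main__":
    inventory = [
        ("/products/blue-widget", "product", date(2023, 7, 1)),
        ("/blog/pillar-guide", "article", date(2023, 8, 1)),
        ("/legal-notice", "legal", date(2022, 3, 1)),
    ]
    print(pages_due_for_refresh(inventory, today=date(2023, 9, 19)))
```

With this sample inventory, only the product page is flagged: the article is still within its quarterly window and the legal notice has no cadence at all.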

Next, analyze your crawl logs to spot unnecessarily crawled URLs: filter facets, session parameters, technical duplicates. Block them via robots.txt or canonicalize them to free up crawl budget for important pages.
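Before deploying robots.txt rules, it helps to confirm that they block the intended URLs and nothing else. The sketch below uses Python's standard `urllib.robotparser`; the rules, paths, and `audit_robots` helper are hypothetical. Note that the standard-library parser relies on simple prefix matching, so wildcard patterns that Googlebot understands are not evaluated here.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block faceted-filter and session paths for all crawlers.
ROBOTS_TXT = """\
User-agent: *
Disallow: /filter/
Disallow: /session/
"""


def audit_robots(robots_txt, urls, agent="Googlebot"):
    """Return {url: True/False} telling whether `agent` may fetch each URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(agent, url) for url in urls}


if __name__ == "__main__":
    urls = [
        "https://www.example.com/products/blue-widget",
        "https://www.example.com/filter/color-red",
        "https://www.example.com/session/abc123",
    ]
    for url, allowed in audit_robots(ROBOTS_TXT, urls).items():
        print("allowed" if allowed else "blocked", url)
```

Running the check against a list of strategic URLs is a cheap way to catch a rule that accidentally blocks a page you want crawled.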

What mistakes should you absolutely avoid?

Don't artificially modify pages just to "simulate" change. Google detects cosmetic modifications (adding dates, changing isolated words), and they won't positively influence crawling. On the contrary, they can degrade quality signals if the content becomes inconsistent.

Also avoid completely neglecting important static pages. A well-constructed "About Us" or "Contact" page contributes to E-E-A-T even if it rarely changes. Make sure it is at least accessible and crawlable, even if visit frequency is low.

- Audit crawl logs to identify patterns (which pages are visited often, which never?)

- Prioritize updates on strategic pages: flagship products, pillar articles, main categories

- Block or de-index valueless URLs that waste crawl budget

- Verify that important pages are well linked internally (orphaned pages are crawled less often)

- Monitor crawl frequency via Search Console ("Crawl stats" report) and adjust if needed

- Never modify content just to "force" crawling — quality of changes matters more than frequency
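The internal-linking check from the list above can be automated once you have a crawl export. This is a minimal sketch, assuming you already hold the sitemap URLs and a map of internal links; both data structures and the `find_orphans` helper are hypothetical.

```python
def find_orphans(sitemap_urls, internal_links):
    """Return sitemap URLs that no other page links to.

    `internal_links` maps each source URL to the list of URLs it links to.
    Self-links are ignored because they do not help discovery.
    """
    linked = {
        target
        for source, targets in internal_links.items()
        for target in targets
        if target != source
    }
    return sorted(set(sitemap_urls) - linked)


if __name__ == "__main__":
    sitemap = ["/", "/products/blue-widget", "/blog/pillar-guide", "/legal-notice"]
    links = {
        "/": ["/products/blue-widget", "/legal-notice"],
        "/products/blue-widget": ["/"],
        "/legal-notice": ["/"],
    }
    print(find_orphans(sitemap, links))
```

In this sample, `/blog/pillar-guide` is listed in the sitemap but receives no internal link, so it is reported as orphaned.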

❓ Frequently Asked Questions

Will a blog that publishes daily be crawled more often than a static site?

Should I update my legal notice regularly to improve crawling?

How does Google evaluate a page's quality when deciding what to crawl?

Is changing an article's publication date enough to trigger a new crawl?

Is crawl budget a problem for every site?

Other SEO insights extracted from this same Google Search Central video · published on 19/09/2023
