Official statement

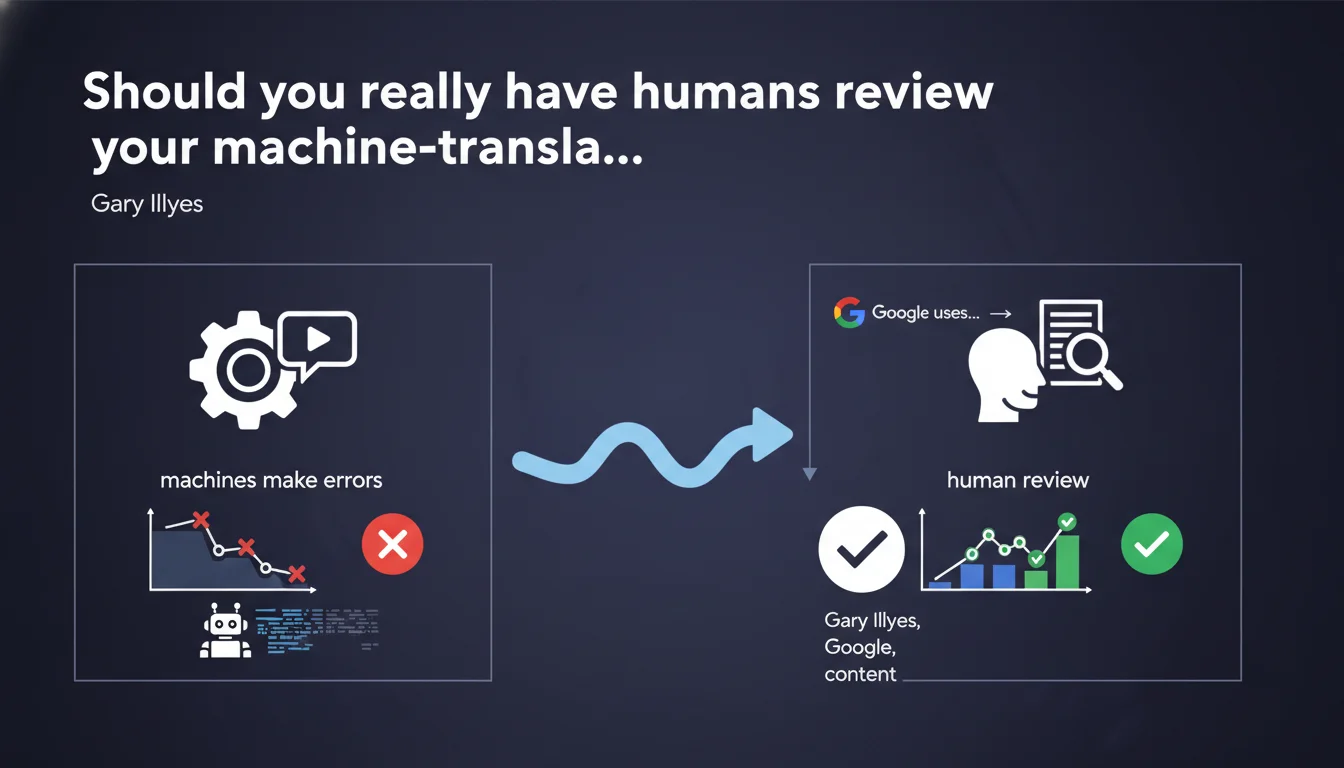

Google explicitly recommends having all machine-translated content reviewed by humans before publication. The company applies the same rule to its own content: machine translation followed by systematic human review. Machine translation errors can harm user experience and may indirectly affect rankings.

What you need to understand

Why does Google insist on human review of machine-translated content?

Gary Illyes's position is unequivocal: machines make errors. Even with the spectacular advances in neural machine translation models, automatic translators regularly produce mistranslations, awkward phrasings, or contextual errors.

Google itself does not blindly trust its own tools. The company uses machine translation for its multilingual website, but each language version goes through systematic human review. If Google doesn't allow itself to publish machine-translated content without verification, that's a strong signal.

Is this recommendation meant to avoid an algorithmic penalty?

Let's be clear: Gary Illyes doesn't say that machine-translated content will be penalized. He's talking about a strong recommendation, not an explicit ranking criterion. The nuance matters.

The real risk lies at the level of user experience. Poorly translated content tends to produce high bounce rates and low engagement, and it fails to answer search intent properly. Indirectly, this can affect behavioral signals and how quality is perceived.

What's the difference between machine translation and auto-generated content?

Crucial point: this statement specifically concerns translation of existing content, not generation of multilingual content from scratch by AI. The distinction is important.

Google doesn't condemn machine translation in itself — the company uses it. But it insists that human quality control remains essential to ensure relevance and accuracy.

- Machine translators regularly produce errors, even with the best models

- Google itself applies human review to its own machine translations

- The recommendation aims at the quality of user experience, not direct algorithmic punishment

- It's about translating existing content, not automatic multilingual generation

- Main risk: negative behavioral signals on poorly translated language versions

SEO Expert opinion

Is this position consistent with practices observed in the field?

Absolutely. Multilingual sites that settle for raw machine translations often show mediocre performance on their secondary versions. Native speakers immediately spot awkward phrasings and loss of meaning.

I've audited dozens of international sites where unreviewed machine-translated versions generate 3 to 5 times less engagement than the source version. Time on page is cut in half and bounce rates climb sharply, and the pattern is consistent.

What level of human review is really necessary?

This is where Google's statement remains intentionally vague [To verify]. "Having content reviewed" can mean many things, from light correction of obvious errors to a complete rewrite that adapts tone and local style.

My field experience: for strategic SEO content (category pages, guides, landing pages), light correction isn't enough. You need transcreation — cultural adaptation, rephrasing to respect local language usage, adjustment of target queries by market.

For less critical content (secondary blog, simple FAQs), correction review might suffice. But ask yourself: if this content doesn't deserve real adaptation, does it deserve to exist?

When can you skip human review?

Let's be honest: some content can probably skip extensive review. Structured data, technical specification tables, price lists — anything factual and standardized poses less risk.

But as soon as you touch editorial content meant to rank and convert, skipping human review is a risky bet. The short-term savings are paid back later in poor performance.
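The review tiers discussed in this section can be written down as a simple lookup so that every team applies the same rule. This is only a sketch: the content-type keys, the `ReviewLevel` names, and the fallback for unknown types are assumptions made for illustration, not something Google or any translation tool prescribes.

```python
from enum import Enum


class ReviewLevel(Enum):
    TRANSCREATION = "transcreation"  # cultural adaptation and local keyword targeting
    CORRECTION = "correction"        # fix obvious errors and awkward phrasing
    SPOT_CHECK = "spot_check"        # factual, standardized content: light sampling only


# Hypothetical content types; adapt the keys to your own CMS taxonomy.
REVIEW_POLICY = {
    "category_page": ReviewLevel.TRANSCREATION,
    "guide": ReviewLevel.TRANSCREATION,
    "landing_page": ReviewLevel.TRANSCREATION,
    "blog_post": ReviewLevel.CORRECTION,
    "faq": ReviewLevel.CORRECTION,
    "spec_table": ReviewLevel.SPOT_CHECK,
    "price_list": ReviewLevel.SPOT_CHECK,
}


def review_level(content_type: str) -> ReviewLevel:
    """Return the review tier for a content type.

    Unknown types fall back to CORRECTION (an assumption), so nothing
    editorial goes live without at least a basic human pass.
    """
    return REVIEW_POLICY.get(content_type, ReviewLevel.CORRECTION)
```

Keeping the policy in one table makes it easy to argue about and change, which matters more than the code itself.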

Practical impact and recommendations

What do you need to implement concretely for multilingual sites?

If you use an automatic translation plugin (WPML, Weglot, TranslatePress), systematically activate the option for manual review before publication. Many of these tools publish by default without validation — classic mistake.

For sites with a high volume of multilingual content, establish a validation workflow: machine translation saved as a draft, then review by a native speaker before going live. Even when partial, this step filters out 80% of critical errors.
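As a rough illustration of that workflow, here is a minimal sketch of a publication gate. The `Translation` record, the `Status` values, and the `approve` and `publish` functions are hypothetical names, not the API of WPML, Weglot, TranslatePress, or any other tool. The point is only that publishing should fail unless a human review has been recorded.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"          # raw machine translation, not visible to users
    REVIEWED = "reviewed"    # approved by a native-speaking reviewer
    PUBLISHED = "published"  # live on the site


@dataclass
class Translation:
    page_id: str
    language: str
    text: str
    status: Status = Status.DRAFT
    reviewer: Optional[str] = None


def approve(translation: Translation, reviewer: str,
            corrected_text: Optional[str] = None) -> None:
    """Record a human review; the reviewer may supply a corrected text."""
    if translation.status is not Status.DRAFT:
        raise ValueError("only drafts can be reviewed")
    if corrected_text is not None:
        translation.text = corrected_text
    translation.reviewer = reviewer
    translation.status = Status.REVIEWED


def publish(translation: Translation) -> None:
    """Refuse to go live unless a human has reviewed the translation."""
    if translation.status is not Status.REVIEWED:
        raise PermissionError("translation has not been reviewed by a human")
    translation.status = Status.PUBLISHED
```

In a real CMS the same gate would usually be a permission or a required field rather than custom code, but the rule is the same: no reviewer, no publication.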

Budget linguistic resources from the start when designing your international strategy. A site in 10 languages with no review budget means 9 versions that will underperform. It is better to focus on 3 languages and handle them well.

How do you prioritize review efforts with limited budgets?

Start by identifying strategic pages in each language version: main category pages, top organic landing pages, critical conversion paths. These pages deserve thorough review, even full localized rewriting.

For secondary editorial content (blog, news), you can calibrate review level based on traffic potential. Quick correction of obvious errors is better than nothing.

Use analytics data to prioritize: language versions already generating traffic but with weak engagement metrics are ideal candidates for in-depth review work.
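The prioritization above can be sketched as a small filter over exported analytics rows. Everything here is an assumption for illustration: the `PageStats` fields, the `review_candidates` function, and the thresholds of 100 sessions and a 0.4 engagement rate are placeholders to tune against your own data, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    language: str
    sessions: int            # organic sessions over the chosen period
    engagement_rate: float   # share of engaged sessions, from 0.0 to 1.0


def review_candidates(pages, min_sessions=100, max_engagement=0.4):
    """Return pages with real traffic but weak engagement, busiest first."""
    candidates = [
        p for p in pages
        if p.sessions >= min_sessions and p.engagement_rate <= max_engagement
    ]
    return sorted(candidates, key=lambda p: p.sessions, reverse=True)


pages = [
    PageStats("/de/schuhe", "de", sessions=1200, engagement_rate=0.25),
    PageStats("/de/blog/news", "de", sessions=40, engagement_rate=0.10),
    PageStats("/es/zapatos", "es", sessions=900, engagement_rate=0.65),
    PageStats("/it/scarpe", "it", sessions=300, engagement_rate=0.30),
]

for page in review_candidates(pages):
    print(page.url, page.sessions, page.engagement_rate)
```

Sorting by sessions puts the pages where a better translation reaches the most visitors at the top of the review queue.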

- Disable automatic publication of translations in your tools

- Implement a human validation workflow before going live

- Budget native review resources for each target language

- Prioritize review of strategic pages and conversion paths

- Audit existing language versions: identify those with decent traffic but weak engagement

- Train your reviewers on SEO stakes: preserving target keywords and keeping tags intact

- Test translation quality with native speakers of your target audience

- Document recurring errors from your translation tool to refine the process
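Two items on this checklist, keyword preservation and tag integrity, lend themselves to a quick automated pre-check before the human review. The sketch below assumes that "tags" means HTML markup and that target keywords are supplied per market by whoever did the keyword research. The function names are hypothetical, and the check supplements a native reviewer rather than replacing one.

```python
from html.parser import HTMLParser


class TagCollector(HTMLParser):
    """Collect opening and closing tag names in document order."""

    def __init__(self):
        super().__init__()
        self.tags = []

    def handle_starttag(self, tag, attrs):
        self.tags.append(tag)

    def handle_endtag(self, tag):
        self.tags.append("/" + tag)


def tag_sequence(html):
    """Return the ordered list of tag names found in an HTML fragment."""
    collector = TagCollector()
    collector.feed(html)
    collector.close()
    return collector.tags


def markup_preserved(source_html, translated_html):
    """True if the translation kept the same tags in the same order."""
    return tag_sequence(source_html) == tag_sequence(translated_html)


def missing_keywords(translated_text, target_keywords):
    """Return the target keywords that do not appear in the translation."""
    lowered = translated_text.casefold()
    return [kw for kw in target_keywords if kw.casefold() not in lowered]
```

A failed check only flags a page for attention. Word order can legitimately move an inline link or emphasis within a sentence, so a mismatch is a prompt for the reviewer, not proof of an error.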

❓ Frequently Asked Questions

Is machine translation considered spam by Google?

Do I need to have 100% of my machine-translated content reviewed?

What are the concrete SEO risks of unreviewed machine translation?

Do AI translators like DeepL or GPT-4 also require review?

How can I measure whether my machine translations are good enough?

Source: Google Search Central video · published on 19/09/2023
