Official statement

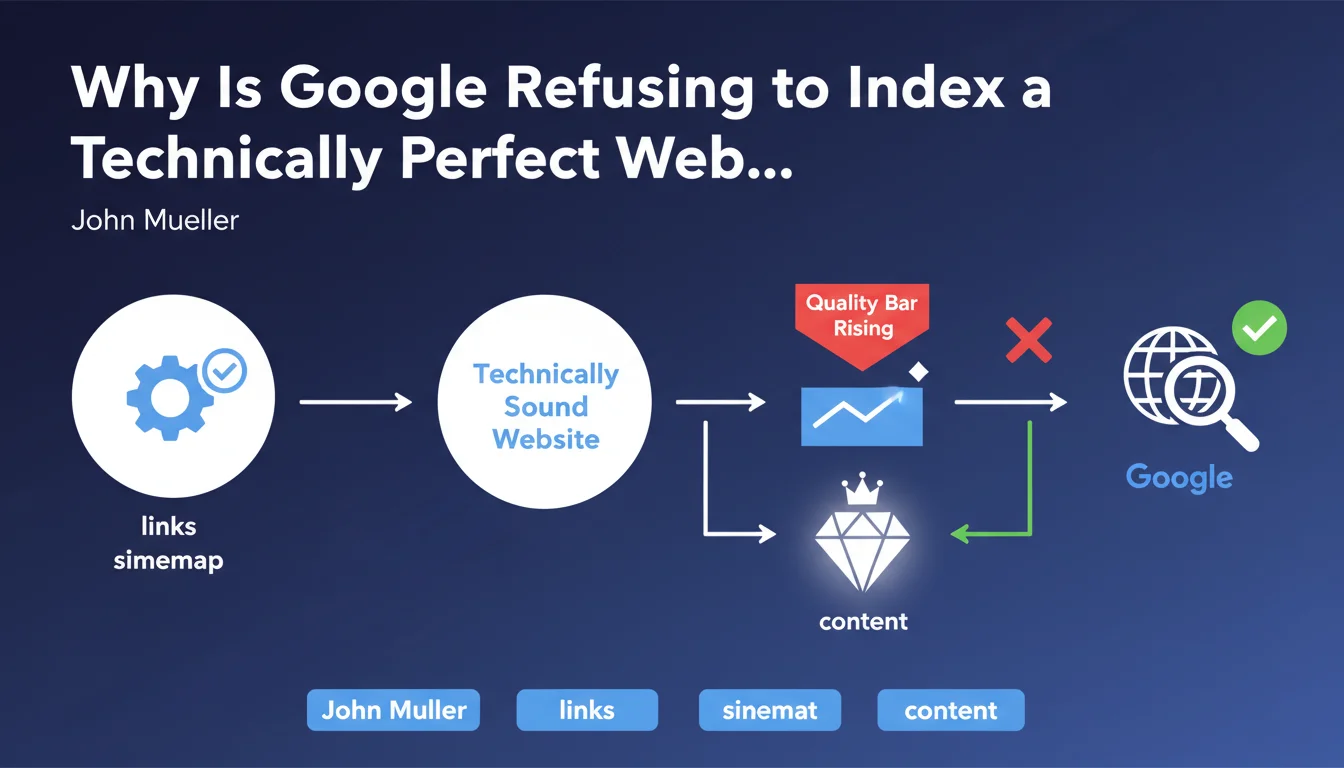

Google is indexing fewer and fewer pages, even on technically flawless sites. The quality bar is rising: having a clean sitemap and coherent internal links no longer guarantees anything. Only content deemed 'critical' by the algorithm deserves a place in the index.

What you need to understand

What Does "Technically Correct" Mean According to Google?

A technically correct site gets the fundamentals right: an up-to-date XML sitemap, a logical internal link structure, and no detectable spam. These criteria used to be close to sufficient for getting a page indexed.

Today, Google claims these elements have become the norm. Too many sites tick these basic boxes for it to be a differentiator. The search engine must therefore filter differently.

What Does Google Mean by "Critical Content"?

The term remains deliberately vague. Google speaks of content critical for users, without specifying exact metrics. We can assume this refers to pages answering a clear search intent, providing unique value, or generating engagement.

In practice, this means Google now filters upstream: certain pages will never make it through the index door, even if they're crawlable and error-free from a technical standpoint.

Why Is This Quality Bar Rising Now?

The sheer growth of the web forces Google to be more selective. Crawling and indexing resources are finite, so the search engine prioritizes perceived quality to keep the size of its index in check.

This rising bar also coincides with the massive arrival of auto-generated or AI-produced content, often technically clean but poor in added value. Google must filter this industrial output.

- Technical compliance has become a prerequisite, not a competitive advantage

- Google is indexing fewer and fewer pages, even on well-structured sites

- The "critical content" criterion remains subjective and difficult to measure from an SEO perspective

- This selectivity aims to reduce indexation load in the face of exponential web growth

SEO Expert opinion

Is This Statement Consistent With Real-World Observations?

Yes, overwhelmingly. For several months now, many sites have reported a sharp drop in indexed pages with no matching technical degradation. Entire sections disappear from the index while Search Console shows no errors.

Google now seems to filter at the source: certain content categories (low-differentiation product pages, generic informational articles, low-content pages) no longer pass the initial filter. Crawling happens, but indexation doesn't follow.

What Nuances Should Be Added to This Message?

Mueller doesn't specify how Google evaluates whether content is "critical". This remains to be verified: is it based on behavioral signals (CTR, time on page), backlinks, existing traffic, or automated semantic evaluation? Nothing in the statement settles the question.

Another crucial point: this bar-raising doesn't affect all sites equally. Established authority sites seem less impacted than newcomers or niche sites. Historical trust probably plays a buffering role.

In Which Cases Does This Rule Not Apply?

News sites, paradoxically, seem less affected. Google quickly indexes news content even if poorly differentiated, probably because freshness remains a priority criterion in certain contexts.

Similarly, transactional (e-commerce) pages continue to be indexed if they present a unique or rare offering. Whether content counts as "critical" also seems linked to how widely the same information is available elsewhere on the web: if no one else covers a specific product, Google indexes it. If 50 sites sell the same item with the same manufacturer description, the selection becomes drastic.
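
To see how much of your own catalog falls into the "same manufacturer description" case, you can compare your product text against the manufacturer's copy. The sketch below uses word shingles and Jaccard similarity, a common near-duplicate heuristic. It is only an audit aid for your own pages: nothing in the statement indicates that Google measures duplication this way, and the function names and the shingle size of 3 are illustrative assumptions.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping word n-grams (shingles) in a text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        # Texts shorter than one shingle are treated as a single unit.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(text_a, text_b, size=3):
    """Jaccard similarity of two texts' shingle sets, from 0.0 to 1.0."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)
```

A score close to 1.0 suggests the page adds almost nothing beyond the copy every other reseller already publishes. Where you set the cutoff is a judgment call to calibrate on your own catalog.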

Practical impact and recommendations

What Should You Concretely Do to Clear This New Quality Bar?

Stop producing content for the sake of volume. Ten excellent indexed pages are worth more than 100 mediocre ones that get ignored. Concentrate your resources on depth and on a treatment of the topic that nobody else offers.

Work on engagement signals: reading time, bounce rate, interactions. If Google evaluates "critical" nature through behavioral metrics, these indicators become strategic. Content that nobody reads will never be judged critical.

- Audit your current index via Search Console: identify pages reported as "Crawled - currently not indexed"

- Analyze these rejected pages to detect patterns: thin content, internal duplication, low engagement

- Strengthen editorial differentiation: unique expertise, proprietary data, original angle

- Reduce the number of pages: merge weak content, eliminate semantic duplicates

- Increase internal visibility of strategic pages through interlinking and menus

- Measure real engagement: track scroll depth, heatmaps, and actual reading time

- Work on backlinks to rejected pages: a strong external signal may prompt Google to reconsider them for indexing
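
The first two steps of this audit can be sketched in a few lines of Python. This is a minimal illustration, not a Search Console API client: it assumes you have already exported the indexing report and loaded it as (url, status) pairs, and the status label used below should be checked against the wording in your own export. Both function names are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed status label; adjust it to match your own export.
NOT_INDEXED = "Crawled - currently not indexed"


def section_of(url):
    """Return the first path segment of a URL, or "/" for the root."""
    path = urlparse(url).path.strip("/")
    return "/" + path.split("/")[0] if path else "/"


def rejected_by_section(rows):
    """Count non-indexed URLs per top-level site section.

    `rows` is an iterable of (url, status) pairs, a hypothetical
    shape for rows read from an exported indexing report.
    """
    counts = Counter()
    for url, status in rows:
        if status == NOT_INDEXED:
            counts[section_of(url)] += 1
    # Sections with the most rejected pages come first.
    return counts.most_common()
```

If one section accounts for most of the rejected URLs, that is where to look first for thin content or internal duplication.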

What Mistakes Should You Avoid in This New Context?

Don't bet everything on the XML sitemap. It no longer guarantees indexing; it is only a suggestion that Google can ignore. Some SEOs keep adding URLs to the sitemap in bulk, hoping to force indexing. That no longer works.
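
One way to see how far the sitemap is from a guarantee is to compare the URLs you submit with the URLs actually indexed. The sketch below parses a standard sitemap with Python's standard library and lists the submitted URLs missing from a set of indexed URLs. How you obtain that set (for example from a Search Console export) is left as an assumption, and the function names are illustrative.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Extract every <loc> value from a <urlset> sitemap document."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def submitted_but_not_indexed(xml_text, indexed_urls):
    """Return sitemap URLs that are absent from the indexed set."""
    indexed = set(indexed_urls)
    return [url for url in sitemap_urls(xml_text) if url not in indexed]
```

This handles a plain `<urlset>` file only. A sitemap index file that points to other sitemaps would need an extra pass over each child sitemap.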

Also avoid mass-producing AI content without human enrichment. Google probably detects auto-generation patterns and classifies such pages outright as "non-critical". AI can help, but the final content must go through real editorial curation.

How Can You Verify That Your Content Is Judged "Critical"?

No official tool exists. The closest indicator is actual indexing status, which you can check with the URL Inspection tool in Search Console; a site:yourdomain.com query gives only a rough, non-exhaustive view. If a page drops out of the index without any technical error, it has probably been judged non-critical.

Also measure organic impressions on your new pages. Critical content quickly generates impressions, even with few clicks. Ignored content stays at zero impressions for weeks, a sign it was never considered for ranking.
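
A simple way to run this check is to cross-reference the pages you recently published with a performance export. The sketch below assumes the export has been loaded as (url, impressions) pairs, possibly with several rows per URL; that shape and the function name are illustrative assumptions.

```python
def pages_without_impressions(published_urls, performance_rows):
    """Return published URLs whose total impressions are zero.

    `performance_rows` is an iterable of (url, impressions) pairs,
    a hypothetical shape for rows from a performance export.
    URLs missing from the export are treated as zero impressions.
    """
    totals = {}
    for url, impressions in performance_rows:
        totals[url] = totals.get(url, 0) + impressions
    return [url for url in published_urls if totals.get(url, 0) == 0]
```

Run it over pages that have been live for a few weeks. Pages published only days ago may simply not have been processed yet.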

❓ Frequently Asked Questions

Can a site be perfectly optimized technically and still not be indexed?

How can I tell whether Google judges my content to be "critical"?

Should non-indexed pages be deleted to improve crawl budget?

Is AI-generated content automatically excluded from the index?

Does this quality bar affect all types of sites in the same way?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 22/03/2022
