Official statement

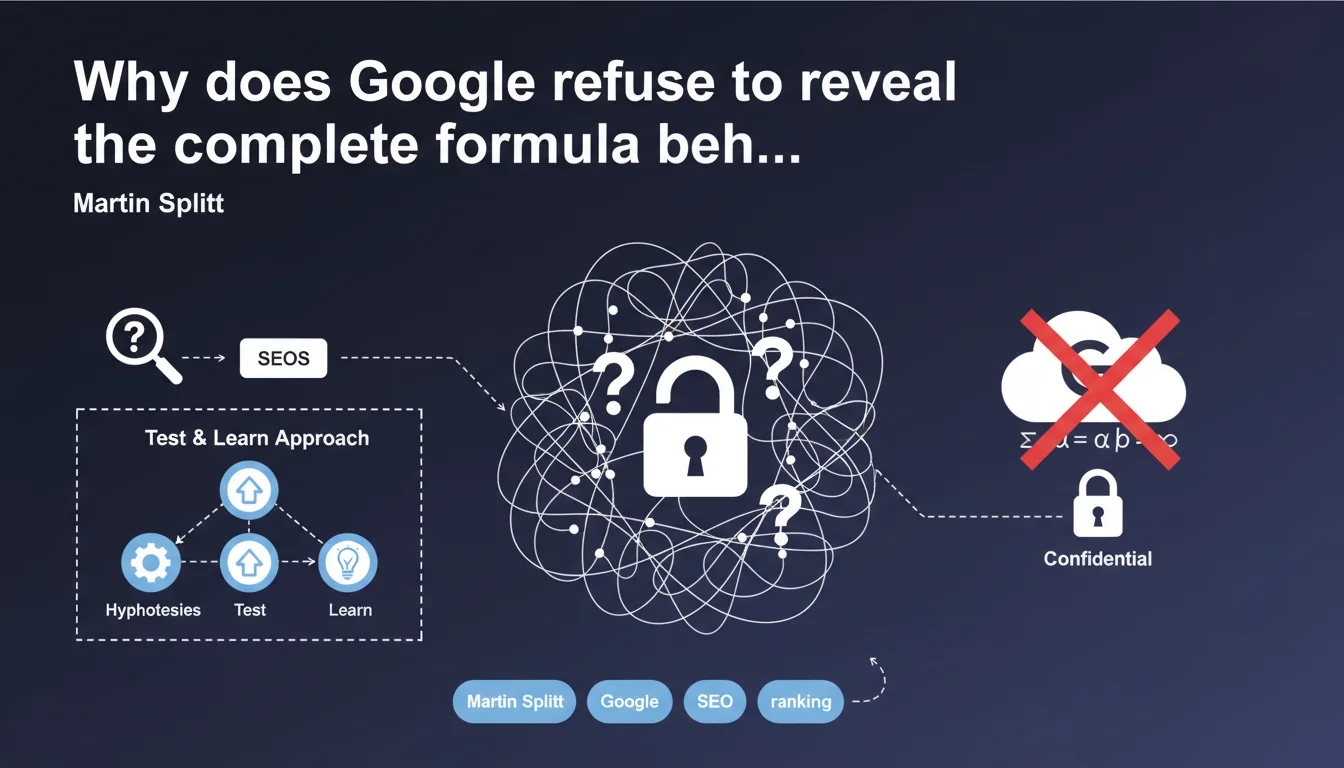

Google officially confirms it will never share the exact formula of its ranking algorithm. SEO practitioners must therefore build their strategy on hypotheses validated through field experimentation, following a rigorous test-and-learn methodology.

What you need to understand

Is Google really hiding the entirety of its algorithm?

The answer is yes, unambiguously. Martin Splitt and Jenn Mathews have reaffirmed it: Google will never disclose the full list of ranking factors, nor their exact weighting. This opacity is not new, but the official confirmation puts an end to any illusions.

Unlike public patents or fragmented guidelines, the internal mechanics remain a trade secret. Machine learning algorithms evolve continuously, and even some Google engineers do not fully understand every part of the system.

Why is this opacity maintained?

There are three main reasons. First, competitive protection: revealing the algorithm would make results easier to manipulate. Second, technical complexity: with thousands of signals interacting through machine learning, there is no linear "formula" to share.

Finally, a control strategy. By remaining vague, Google keeps the upper hand over how its ecosystem evolves and discourages over-optimization tactics.

- Google will never reveal the exact weighting of each ranking signal

- ML algorithms create non-linear interactions that are difficult to summarize

- This opacity protects against industrial-scale manipulation of SERPs

- Even internally, no one has a complete view of the system

What room for maneuver remains for SEOs?

The empirical approach becomes essential. Professionals must formulate hypotheses, test them on sets of pages large enough to be meaningful, measure the results, and adjust. This is the scientific method applied to SEO.

Google still provides directional guidance: the Search Quality Rater Guidelines, announced Core Updates, and the Search Central documentation. But between these broad strokes and operational reality, there is a gap that only experimentation can fill.

SEO Expert opinion

Is this statement consistent with field observations?

Absolutely. In the field, we have observed for years that two sites can apply the same "best practices" and obtain divergent results. Contextual factors (domain authority, history, vertical, user intent) create unique combinations.

The correlations observed in SEO studies do not establish causation. A site can rank despite a mediocre loading time if its content is judged exceptionally relevant. There is no universal recipe, and Google is finally acknowledging this publicly.

What nuances must be made to this position?

Google does communicate about certain confirmed signals: backlinks, HTTPS, E-E-A-T, Core Web Vitals, mobile-first indexing. These are not secrets, but their relative weight and their interactions remain opaque. [To verify]: the actual share of CWV in overall ranking has never been officially quantified.

Another nuance: this opacity sometimes fuels misinformation. Some "experts" sell magic formulas based on questionable correlations. The lack of full transparency leaves room for charlatans, something Google never mentions in its communications.

In which cases are there exceptions to this rule?

During major Core Updates, Google sometimes provides post-deployment guidance, for example the emphasis on E-E-A-T in YMYL content. These are not formulas, but strategic directions precise enough to guide optimization.

Similarly, certain algorithmic penalties are documented: duplicate content, cloaking, link schemes. In these cases, Google is explicit about what it penalizes, even if the thresholds that trigger a penalty remain secret.

Practical impact and recommendations

What must be done concretely in the face of this opacity?

Adopt a rigorous experimental methodology. Identify homogeneous segments of pages, formulate an optimization hypothesis, apply it to a controlled sample, measure impact over 4-8 weeks, then deploy or abandon.
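As a rough illustration of the measurement step, the sketch below compares a test segment with an untouched control segment by subtracting the control group's relative lift from the test group's. The function names, the weekly click figures, and the 5% decision threshold are assumptions made up for this example. They are not values recommended by Google or by the source.

```python
from statistics import mean

def relative_lift(before, after):
    """Relative change in mean weekly clicks between two periods."""
    baseline = mean(before)
    return (mean(after) - baseline) / baseline

def diff_in_diff(test_before, test_after, control_before, control_after):
    """Lift of the test segment minus lift of the control segment.

    Subtracting the control lift removes changes that affected both
    segments alike (seasonality, a Core Update, and so on).
    """
    return (relative_lift(test_before, test_after)
            - relative_lift(control_before, control_after))

# Illustrative weekly click totals (invented numbers, not real data).
test_before = [100, 110, 90, 100]
test_after = [120, 130, 110, 120]
control_before = [200, 210, 190, 200]
control_after = [210, 220, 200, 210]

MIN_LIFT = 0.05  # hypothetical threshold for rolling the change out

effect = diff_in_diff(test_before, test_after, control_before, control_after)
decision = "deploy" if effect >= MIN_LIFT else "abandon"
print(f"Estimated lift attributable to the change: {effect:.1%} -> {decision}")
```

In practice the two segments need to be comparable (same template, similar intent and traffic level). Otherwise the control group does not isolate the effect of the change.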

Invest in advanced analytics tools capable of correlating on-page modifications with organic traffic fluctuations. Google Search Console, combined with a position tracker and a crawl tool, forms the minimal foundation.

Never bet everything on a single lever. Diversification (expert content, flawless UX, quality link building, solid technical foundations) reduces the risk that comes with algorithmic uncertainty.

What mistakes should be avoided in this context?

Do not seek the magic formula. The "200 ranking factors" listed in some guides are often speculative reconstructions. Focus on confirmed signals and overall user experience.

Avoid over-interpreting official statements. When Google says "quality content is important," it does not define what quality is. Test your own criteria rather than mimicking others' interpretations.

Beware of correlation without causation. Just because 80% of top 3 pages have a certain word count does not mean that this word count is what makes them rank. Context always wins.
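The word-count trap can be reproduced with a small simulation. In the sketch below, a hidden factor (called `depth` here, a purely hypothetical stand-in for how thoroughly a page covers its topic) drives both word count and a ranking score, while word count itself never enters the score. All numbers are synthetic and chosen only for illustration.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

random.seed(0)  # fixed seed so the run is reproducible

pages = []
for _ in range(2000):
    depth = random.random()                              # hidden confounder
    word_count = 500 + 2000 * depth + random.gauss(0, 200)
    score = depth + random.gauss(0, 0.1)                 # word_count is NOT an input
    pages.append((depth, word_count, score))

# Across all pages, word count and score look strongly linked.
overall = pearson([p[1] for p in pages], [p[2] for p in pages])

# Holding the confounder roughly constant, most of the link disappears.
similar = [p for p in pages if 0.45 <= p[0] < 0.55]
within = pearson([p[1] for p in similar], [p[2] for p in similar])

print(f"all pages: r = {overall:.2f}")
print(f"pages of similar depth: r = {within:.2f}")
```

The same reasoning applies to any observational SEO study: a strong correlation across a sample of top-ranking pages says nothing about what would happen if you changed that one variable on your own pages.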

How to structure an effective SEO approach without knowing the algorithm?

Start with confirmed fundamentals: clean technical architecture, content precisely answering search intent, coherent authority signals, smooth user experience. These pillars work regardless of the exact weight of signals.

Then iterate through testable micro-optimizations. Change one element at a time, measure, adjust. This incremental approach limits risk and lets you isolate the levers that are effective in your specific context.

- Set up a test environment with controlled page segments

- Document each hypothesis and each modification in an experimentation register

- Use GSC, a position tracker and a crawler to measure impact

- Never deploy an optimization site-wide without first validating it on a reduced sample

- Regularly consult the official Google documentation on Search Central to keep up with changes

- Diversify SEO levers to reduce dependency on a single signal

- Train the team in a data-driven culture rather than the mechanical application of recipes
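One possible shape for the experimentation register mentioned in the checklist is sketched below. The class names, fields, and rules (no URL in both groups, no URL in two pending tests at once) are assumptions for illustration. A spreadsheet with the same columns would serve equally well.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List

@dataclass
class Experiment:
    hypothesis: str           # what you expect to happen, and why
    change: str               # the single element being modified
    test_urls: List[str]
    control_urls: List[str]
    start: date
    measure_weeks: int = 6    # within the 4-8 week window
    outcome: str = "pending"  # "pending", "deploy" or "abandon"

@dataclass
class ExperimentRegister:
    entries: List[Experiment] = field(default_factory=list)

    def pending(self) -> List[Experiment]:
        return [e for e in self.entries if e.outcome == "pending"]

    def add(self, exp: Experiment) -> None:
        if set(exp.test_urls) & set(exp.control_urls):
            raise ValueError("a URL cannot be in both test and control groups")
        # Enforce "one change at a time" per page.
        busy = {url for e in self.pending() for url in e.test_urls}
        if busy & set(exp.test_urls):
            raise ValueError("a test URL is already in a pending experiment")
        self.entries.append(exp)

    def close(self, exp: Experiment, outcome: str) -> None:
        if outcome not in ("deploy", "abandon"):
            raise ValueError("outcome must be 'deploy' or 'abandon'")
        exp.outcome = outcome

# Hypothetical usage with placeholder URLs.
register = ExperimentRegister()
exp = Experiment(
    hypothesis="Adding a summary block improves clicks on guide pages",
    change="summary block above the fold",
    test_urls=["/guide/a", "/guide/b"],
    control_urls=["/guide/c", "/guide/d"],
    start=date(2022, 2, 1),
)
register.add(exp)
register.close(exp, "deploy")
```

Recording the hypothesis before the test starts is the important part: it prevents reinterpreting a result after the fact.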

❓ Frequently Asked Questions

Will Google ever disclose the complete formula of its algorithm?

How can I optimize my site without knowing the exact algorithm?

Are the 200 ranking factors listed in some guides reliable?

Can SEO still be done effectively despite this opacity?

Why does Google keep its algorithm secret?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 26/01/2022
