Official statement

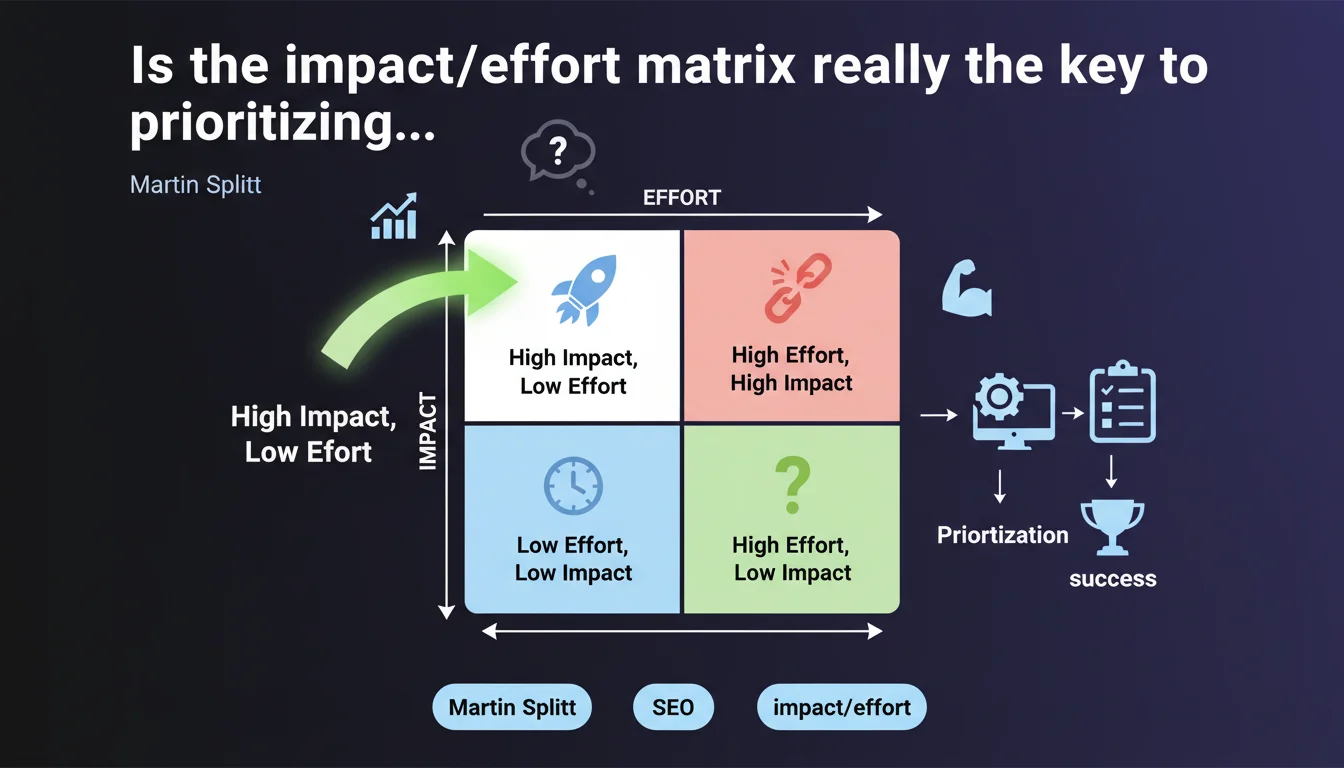

Martin Splitt from Google recommends using an impact/effort matrix to prioritize technical SEO requests. The goal: facilitate collaboration with developers by focusing first on high-impact, low-effort tasks. A pragmatic approach aimed at maximizing the ROI of development resources allocated to SEO.

What you need to understand

Why does Google insist on this prioritization methodology?

Splitt's statement directly addresses the recurring problem of friction between SEO and development teams. All too often, SEOs bombard developers with requests without clear hierarchy, creating resistance and slowing execution.

The impact/effort matrix is a classic project management tool that Splitt adapts to the SEO context. It allows you to categorize each task along two axes: potential impact on search rankings and required development effort. Quick wins (high impact, low effort) naturally become top priority.

What types of tasks fall into the "high impact, low effort" category?

Concretely, this means fixing duplicate title tags, adding missing alt attributes, correcting simple redirect chains, or implementing hreflang tags. These fixes have a measurable SEO effect and don't require architectural overhauls.

Conversely, a complete migration to server-side rendering of JavaScript content can have enormous impact but requires weeks of development, so it falls into a different quadrant. The matrix forces you to distinguish between the urgent and the strategic.

Does this approach really change the dynamic with technical teams?

Yes, if applied correctly. Presenting requests sorted by potential ROI transforms SEO from a taskmaster into a strategic partner. Developers better understand why one task takes precedence over another.

The risk is that the matrix becomes an excuse to postpone complex but foundational projects indefinitely (internal linking restructuring, technical migration). Splitt doesn't say to ignore high-effort tasks, only to keep them separate from quick wins.

- The impact/effort matrix helps objectively prioritize technical SEO requests

- High-impact, low-effort tasks should be handled first to maximize ROI

- This method improves collaboration between SEO and developers by bringing clarity

- Don't confuse prioritization with abandonment: heavy-lift projects remain necessary but must be planned differently

- The approach transforms SEO into a strategic partner rather than a mere requester

SEO Expert opinion

Is this recommendation really new or just best practice repackaged?

Let's be honest: the impact/effort matrix is nothing revolutionary. It's a project management classic that any sensible SEO consultant already applies, or should apply. Splitt isn't revealing a new technical discovery from Google; he's reminding everyone of an organizational best practice.

What's interesting is that Google feels the need to remind people publicly. This suggests that a significant portion of SEOs continue to submit requests haphazardly without hierarchy, creating unnecessary friction. The statement is more a diagnosis of common dysfunctions than an algorithmic revelation.

In what cases can this approach become counterproductive?

The matrix works well for sites with limited developer resources, where every sprint counts. But it can create dangerous biases. For example, some optimizations with low immediate impact (improving crawl budget in deep sections) have cumulative long-term effects that the matrix risks underestimating.

Another pitfall: rating "impact" without quantified data. If you estimate the impact of a canonical tag fix without crawling the site or analyzing server logs, your matrix rests on guesswork. Always verify with analytics tools before deciding.

How do you prevent this method from becoming a brake on technical innovation?

The main risk is that teams focus exclusively on quick, visible gains and neglect heavy technical investments that pay off in 12-18 months. An internal linking restructure or an advanced pre-rendering implementation doesn't fit the "low effort" box, but it can multiply organic traffic by 2 or 3 times.

The solution: use the matrix for short sprints (2-4 weeks), but maintain a separate quarterly roadmap for foundational projects. The two must coexist. Otherwise, you're optimizing details while competitors rebuild their infrastructure.
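The split between a short sprint and a longer roadmap can be sketched as a simple greedy fill. This is an illustration only: the function name `split_backlog`, the tuple layout, and the sample figures are hypothetical, and ranking by impact per developer day is one possible heuristic rather than something Splitt prescribes.

```python
def split_backlog(tasks, sprint_capacity_days):
    """Fill one sprint with the best impact-per-day tasks; defer the rest.

    tasks: list of (name, impact 1-5, effort in developer days > 0).
    Returns (sprint_names, roadmap_names).
    """
    sprint, roadmap = [], []
    remaining = sprint_capacity_days
    # Highest impact per developer day first (assumed heuristic).
    ranked = sorted(tasks, key=lambda t: t[1] / t[2], reverse=True)
    for name, _impact, effort in ranked:
        if effort <= remaining:
            sprint.append(name)
            remaining -= effort
        else:
            # Too large for what is left of this sprint: plan it separately.
            roadmap.append(name)
    return sprint, roadmap


# Hypothetical backlog with made-up scores and estimates.
backlog = [
    ("Fix duplicate title tags", 4, 1),
    ("Add hreflang tags", 4, 3),
    ("Internal linking restructure", 5, 25),
    ("Fix redirect chains", 3, 2),
]
sprint, roadmap = split_backlog(backlog, sprint_capacity_days=10)
print("Sprint:", sprint)
print("Roadmap:", roadmap)
```

With a 10-day sprint, the three small fixes fit and the internal linking restructure lands on the roadmap, where it still needs its own quarterly slot.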

Practical impact and recommendations

How do you concretely build an impact/effort matrix for your SEO tasks?

Start by listing all pending technical tasks in a spreadsheet. For each one, estimate the potential impact on a scale of 1 to 5, based on data: search volume involved, pages impacted, crawl rate affected. Then estimate the effort in developer days. Ask the developers directly rather than guessing.

Next, place each task in a quadrant: low effort/high impact (do immediately), high effort/high impact (plan on the roadmap), low effort/low impact (handle at the end of a sprint), high effort/low impact (abandon or rethink). This visual layout makes discussions with technical teams easier.
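The quadrant assignment described above can be sketched in a few lines. The `Task` class, the two thresholds, and the sample tasks are assumptions made for illustration; the cut-off between "high" and "low" is a team decision, not something the source defines.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    impact: int         # 1 (negligible) to 5 (major), estimated from data
    effort_days: float  # estimate supplied by the developers


# Hypothetical thresholds; adjust them to your own backlog.
HIGH_IMPACT = 4
LOW_EFFORT_DAYS = 3.0


def quadrant(task: Task) -> str:
    """Return the action associated with the task's quadrant."""
    high_impact = task.impact >= HIGH_IMPACT
    low_effort = task.effort_days <= LOW_EFFORT_DAYS
    if high_impact and low_effort:
        return "do immediately"
    if high_impact:
        return "plan on roadmap"
    if low_effort:
        return "handle at end of sprint"
    return "abandon or rethink"


# Made-up scores, for illustration only.
tasks = [
    Task("Fix duplicate title tags", impact=4, effort_days=1),
    Task("Migrate to server-side rendering", impact=5, effort_days=30),
    Task("Add missing alt attributes", impact=2, effort_days=1),
    Task("Rebuild legacy URL scheme", impact=2, effort_days=15),
]

matrix = {t.name: quadrant(t) for t in tasks}
for name, action in matrix.items():
    print(f"{action:<24} {name}")
```

Keeping the thresholds as named constants makes them easy to revisit in a prioritization workshop without touching the classification logic.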

What mistakes must you absolutely avoid with this method?

First mistake: overestimating impact without data. "Fix title tags" sounds good, but if those pages generate 10 visits per month, the actual impact is negligible. Crawl the site, analyze server logs, and check Search Console before estimating.
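One way to ground the 1-to-5 impact score in data is to look at the share of organic clicks that the affected pages receive. The sketch below assumes you have monthly click counts from a Search Console export; the function name `impact_score` and the band thresholds are hypothetical and should be calibrated per site.

```python
def impact_score(affected_clicks: int, site_clicks: int) -> int:
    """Map the share of monthly organic clicks on affected pages to 1-5."""
    if site_clicks <= 0:
        raise ValueError("site_clicks must be positive")
    share = affected_clicks / site_clicks
    # (minimum share, score) pairs, checked from highest to lowest.
    # These bands are illustrative assumptions, not an industry standard.
    bands = [(0.20, 5), (0.10, 4), (0.05, 3), (0.01, 2)]
    for threshold, score in bands:
        if share >= threshold:
            return score
    return 1


# Pages with 10 visits a month on a site with 50,000 monthly clicks.
print(impact_score(10, 50_000))      # 1
# Pages carrying roughly a quarter of the site's organic clicks.
print(impact_score(12_000, 50_000))  # 5
```

Clicks are only one signal. The same banding idea could be applied to the number of pages impacted or to crawl activity taken from server logs.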

Second mistake: underestimating technical effort. What seems like "just a bit of code" can involve complex dependencies, regression testing, and security validations. Always consult developers before categorizing a task as "low effort".

Third mistake: ignoring dependencies. An optimization may seem isolated but actually depend on a larger overhaul. If you prioritize poorly, you create technical debt that you'll pay back later with interest.
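Dependencies can be made explicit before scheduling. The sketch below uses `graphlib.TopologicalSorter` from the Python standard library (3.9+) to produce an order in which prerequisites always come first. The task names and the dependency map are hypothetical.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must ship before it.
# This dependency map is a made-up example.
depends_on = {
    "Add hreflang tags": {"Unify URL structure"},
    "Fix redirect chains": {"Unify URL structure"},
    "Unify URL structure": set(),
    "Fix duplicate title tags": set(),
}

# static_order() yields every task after all of its prerequisites.
# It raises graphlib.CycleError if the dependencies are circular.
order = list(TopologicalSorter(depends_on).static_order())

# Quick wins that cannot start until something else is done.
blocked = sorted(task for task, deps in depends_on.items() if deps)

print("Safe order:", order)
print("Blocked until prerequisites ship:", blocked)
```

A task that looks like a quick win but appears in `blocked` inherits the effort of its prerequisite, which may move it to a different quadrant.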

What should you implement right now to apply this approach?

Organize a quarterly prioritization workshop with dev, product, and SEO teams. Present your matrix with hard data (potential traffic volume, number of pages impacted, estimated crawl rate gains). Turn the discussion into a rational negotiation rather than a power struggle.

Set up a categorized ticket system in your project management tool (Jira, Asana, Monday). Each SEO request must explicitly state its impact/effort score and the data justifying it. This forces thinking before submitting and makes sorting easier for technical teams.
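The rule that every request must carry a score and its supporting data can be enforced at ticket creation. The sketch below is tool-agnostic: `SeoTicket` and its fields are hypothetical and do not correspond to any Jira, Asana, or Monday API.

```python
from dataclasses import dataclass, field


@dataclass
class SeoTicket:
    title: str
    impact: int                 # 1 to 5
    effort_days: float          # developer estimate
    evidence: list[str] = field(default_factory=list)  # data behind the score

    def __post_init__(self) -> None:
        # Reject tickets that skip the scoring or the justification.
        if not 1 <= self.impact <= 5:
            raise ValueError("impact must be between 1 and 5")
        if self.effort_days <= 0:
            raise ValueError("effort_days must be positive")
        if not self.evidence:
            raise ValueError("at least one piece of evidence is required")

    def summary(self) -> str:
        """One-line label suitable for a ticket title or board card."""
        return f"[impact {self.impact}/5 | effort {self.effort_days:g}d] {self.title}"


# Made-up example ticket.
ticket = SeoTicket(
    title="Fix duplicate title tags",
    impact=4,
    effort_days=0.5,
    evidence=["Crawl export: duplicated titles on category pages"],
)
print(ticket.summary())
```

In a real project management tool, the same constraint is usually expressed as required custom fields on the ticket type.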

- Crawl the site and analyze server logs before estimating the impact of each task

- Consult developers to assess real effort; never guess

- Create a visual matrix (4-quadrant graph) to facilitate discussions

- Prioritize "high impact, low effort" tasks for short sprints

- Maintain a separate roadmap for foundational high-effort projects

- Document each request with quantified data (traffic volume, pages impacted, estimated gains)

- Organize quarterly prioritization workshops with all stakeholders

- Implement a categorized ticket system with visible impact/effort score

❓ Frequently Asked Questions

Does the impact/effort matrix also apply to content optimizations, or only to technical tasks?

How do you convince reluctant developers to adopt this prioritization method?

How often should the matrix be reviewed?

Should you share the matrix openly with the whole company or keep it between SEO and developers?

How do you handle tasks that have high impact but very high effort?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 26/01/2022
