Official statement

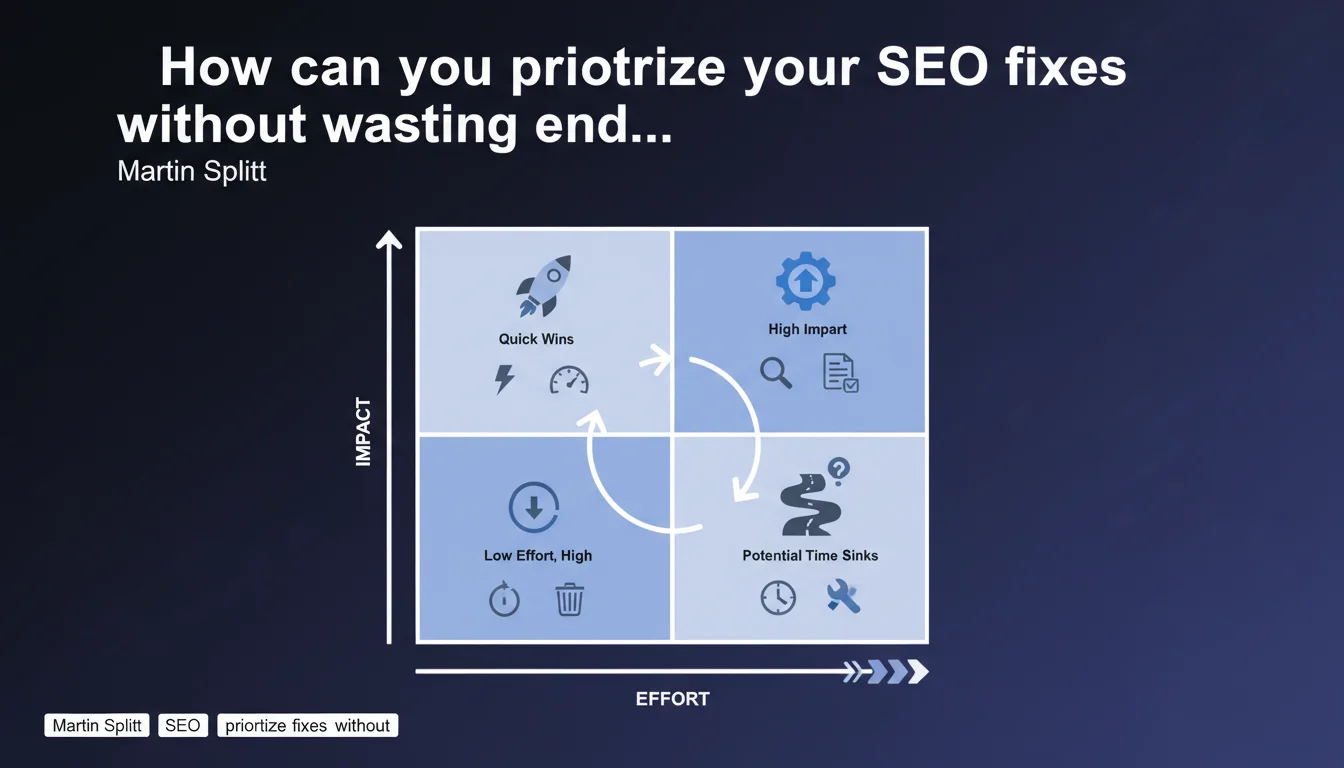

Google recommends sorting SEO audit results along two axes: correction effort and expected impact. Instead of working through the list in order, teams can use this effort/impact matrix to identify quick wins (high impact, low effort) and avoid squandering resources on marginal optimizations. The approach seems obvious, yet it is rarely applied with real rigor.

What you need to understand

Why is Martin Splitt making this statement now?

Modern SEO audits generate hundreds of recommendations. Between automated tools and manual analysis, teams end up with endless lists with no idea where to start.

Martin Splitt, Developer Advocate at Google, reminds us of a common-sense principle often overlooked: not all SEO problems are equal. Some require three days of development work to gain 0.2% traffic, while others can be fixed in an hour with measurable impact.

What does the effort/impact matrix look like in practice?

The idea is to plot each audit recommendation on two dimensions. The vertical axis represents estimated impact (traffic, conversions, user experience). The horizontal axis measures required effort (development time, technical risks, resources needed).

Optimizations with high impact and low effort become top priority. Corrections with low impact and high effort get relegated to the bottom of the list or abandoned entirely. Simple in theory — but it requires accurately estimating these two variables.
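The matrix can be sketched in a few lines of Python. This is only an illustration: the `Recommendation` class, the 1-5 scales, the threshold of 3, and the quadrant labels other than "quick win" are assumptions, not something Google prescribes.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    name: str
    impact: int  # 1 (negligible) to 5 (major); hypothetical scale
    effort: int  # 1 (trivial) to 5 (very costly); hypothetical scale


def quadrant(rec: Recommendation, threshold: int = 3) -> str:
    """Place a recommendation in one of the four matrix quadrants.

    Scores at or above `threshold` count as "high"; the cut-off is an assumption.
    """
    high_impact = rec.impact >= threshold
    high_effort = rec.effort >= threshold
    if high_impact and not high_effort:
        return "quick win"
    if high_impact and high_effort:
        return "major project"
    if high_effort:
        return "deprioritize or drop"
    return "fill-in"


# Illustrative data only, not taken from a real audit.
audit = [
    Recommendation("Fix broken canonical tags on key pages", impact=5, effort=2),
    Recommendation("Rebuild site architecture", impact=5, effort=5),
    Recommendation("Rewrite alt text on archived pages", impact=1, effort=4),
]
for rec in audit:
    print(f"{rec.name}: {quadrant(rec)}")
```

The classification is only as good as the two scores fed into it, which is why the estimation step deserves most of the attention.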

What are the pitfalls of this approach?

The main risk: underestimating technical effort. What seems like "just changing a few tags" may involve CMS modifications, multiple validations, and unforeseen regressions.

Another common mistake: confusing theoretical impact with real impact. Fixing 10,000 duplicate pages sounds massive on paper, but if those pages generate zero traffic, the business impact is nil.

- Prioritize based on both effort AND impact, not just technical severity

- Quick wins (high impact, low effort) should be tackled first

- Some high-effort problems can be ignored if their impact is marginal

- Effort estimation must include hidden technical dependencies

- Impact must be measured in business terms (traffic, conversions), not tool scores

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes and no. Competent SEO agencies already apply this prioritization logic — implicitly or explicitly. The problem is that many clients judge audit quality by the number of recommendations, not their relevance.

The result: 80-page audits with 150 items to fix, of which 120 are trivial. And that's where it breaks down. Technical teams get overwhelmed, handle what's easy rather than what matters, and eventually treat SEO as bureaucratic overhead.

What nuances should you add to this recommendation?

First, high effort doesn't automatically mean low priority. Overhauling a site's architecture can take six months, but if the current structure prevents key pages from being indexed, it's non-negotiable.

Second, some technical problems create cascading dependencies. Fixing pagination before addressing canonicals can force you to redo everything twice. Prioritization must account for these interdependencies, not just isolated scores.
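One way to respect such interdependencies is to order fixes topologically and use the priority score only to choose among fixes that are already unblocked. The sketch below relies on Python's standard `graphlib` module (3.9+); the `order_fixes` helper, the scores, and the dependency map are hypothetical.

```python
from graphlib import TopologicalSorter


def order_fixes(scores: dict[str, float], depends_on: dict[str, set[str]]) -> list[str]:
    """Order fixes so prerequisites come first, then by descending score."""
    sorter = TopologicalSorter({name: depends_on.get(name, set()) for name in scores})
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    ready: list[str] = []
    ordered: list[str] = []
    while sorter.is_active():
        # Collect fixes whose prerequisites are all done.
        ready.extend(sorter.get_ready())
        # Among unblocked fixes, pick the highest-scoring one.
        best = max(ready, key=lambda name: scores.get(name, 0.0))
        ready.remove(best)
        ordered.append(best)
        sorter.done(best)
    return ordered


# Hypothetical scores; pagination must wait for canonicals, as in the example above.
scores = {"pagination": 2.5, "canonicals": 1.0, "title tags": 4.0}
depends_on = {"pagination": {"canonicals"}}
print(order_fixes(scores, depends_on))
```

Here pagination scores higher than canonicals in isolation, yet it is scheduled afterwards because it depends on them.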

Finally — and this is rarely mentioned — the impact of a fix depends on competitive context. Optimizing featured snippets in a saturated niche demands disproportionate effort for marginal gains. In an under-optimized sector, it can be a major lever.

What do you do when effort or impact estimation remains unclear?

Test at small scale. Rather than estimating blindly, apply the fix to a sample of pages and measure the real effect. It takes a few days, but you get factual data instead of wild guesses.
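A small-scale test can be read as a simple before/after comparison. The sketch below assumes you also track a control group of comparable pages that did not receive the fix, which the approach above does not require but which helps separate the fix from seasonal noise. The click totals are made up.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change between two periods; `before` must be non-zero."""
    return (after - before) / before


def estimated_lift(test: dict, control: dict) -> float:
    """Change in the test group minus the change in the control group."""
    test_change = relative_change(test["before"], test["after"])
    control_change = relative_change(control["before"], control["after"])
    return test_change - control_change


# Hypothetical organic clicks over two equal-length periods.
test_pages = {"before": 1000, "after": 1200}     # pages that received the fix
control_pages = {"before": 2000, "after": 2100}  # comparable untouched pages

lift = estimated_lift(test_pages, control_pages)
print(f"Estimated lift attributable to the fix: {lift:.1%}")
```

With a small sample the result is still noisy, so treat it as a rough input to the impact score rather than a proof.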

If even testing isn't feasible (complete redesign, CMS migration…), accept the uncertainty and base the decision on fundamentals: does this problem block indexation, degrade UX, or undermine Google's content comprehension? If yes, the effort is justified.

Practical impact and recommendations

What should you do concretely to prioritize effectively?

Start by segmenting your audit recommendations into four categories: indexation/crawl, content, technical, UX/Core Web Vitals. Within each segment, assess effort (in realistic person-days) and impact (in percentage of potential traffic or conversions).

Use a simple spreadsheet with three columns: issue, effort (1-5), impact (1-5). Divide impact by effort to get a priority index. High ratios rise to the top, but keep a critical eye: numbers don't replace judgment.
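The same spreadsheet logic can be sketched in Python, ranking by an impact-to-effort ratio so that high-impact, low-effort items come first. The issues and scores in `SHEET` are invented for illustration, and `prioritize` is a hypothetical helper.

```python
import csv
import io

# Hypothetical export of the three-column spreadsheet.
SHEET = """issue,effort,impact
Duplicate title tags on high-traffic pages,2,4
Redirect chains,3,3
Site architecture overhaul,5,5
Missing alt text on archived pages,4,1
"""


def prioritize(sheet_text: str) -> list[dict]:
    """Return rows sorted by impact/effort ratio, highest first."""
    ranked = []
    for row in csv.DictReader(io.StringIO(sheet_text)):
        effort = int(row["effort"])
        impact = int(row["impact"])
        ranked.append({
            "issue": row["issue"],
            "effort": effort,
            "impact": impact,
            "priority": impact / effort,
        })
    ranked.sort(key=lambda r: r["priority"], reverse=True)
    return ranked


for row in prioritize(SHEET):
    print(f'{row["priority"]:.2f}  {row["issue"]}')
```

Note that the architecture overhaul lands mid-table despite its maximum impact score, a reminder that the ratio is a starting point for discussion and not a verdict.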

Next, validate this prioritization with dev and business teams. What looks like low effort to an SEO might involve changes across three different systems. Alignment prevents mid-course surprises.

What mistakes should you avoid in this prioritization?

Don't batch all problems of the same type. Fixing 5,000 duplicate title tags seems logical, but if 4,800 affect pages with zero traffic, focus on the 200 that matter.
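Narrowing a batch down to the pages that matter is a simple filter once crawl data and traffic data are joined. The sketch below assumes a list of page records with `url`, `title`, and `clicks` fields (for instance a crawl export merged with a Search Console performance export); the field names and the `min_clicks` threshold are assumptions.

```python
from collections import defaultdict


def duplicate_titles_worth_fixing(pages: list[dict], min_clicks: int = 1) -> list[dict]:
    """Return pages that share a title with another page AND get traffic."""
    by_title = defaultdict(list)
    for page in pages:
        # Normalize so that case and stray whitespace do not hide duplicates.
        by_title[page["title"].strip().lower()].append(page)

    worth_fixing = []
    for group in by_title.values():
        if len(group) > 1:
            worth_fixing.extend(p for p in group if p["clicks"] >= min_clicks)
    # Highest-traffic pages first.
    return sorted(worth_fixing, key=lambda p: p["clicks"], reverse=True)


# Illustrative records only.
pages = [
    {"url": "/a", "title": "Shoes", "clicks": 120},
    {"url": "/b", "title": "shoes ", "clicks": 0},
    {"url": "/c", "title": "Shoes", "clicks": 15},
    {"url": "/d", "title": "Unique page", "clicks": 900},
]
for page in duplicate_titles_worth_fixing(pages):
    print(page["url"], page["clicks"])
```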

Also avoid neglecting "invisible" but foundational fixes. Reducing wasted crawl budget or cleaning up redirect chains doesn't impress anyone in reports, but everything else depends on it.

Another classic trap: multiplying quick wins at the expense of foundational work. Yes, it feels great to check ten items off in a week. But if the core problem is site architecture, you're optimizing details on a shaky building.

- Segment the audit into categories (indexation, content, technical, UX)

- Assess effort and impact on a consistent scale (1-5, for example)

- Prioritize high impact/low effort fixes first

- Validate technical feasibility with dev teams before locking the roadmap

- Test uncertain fixes on a sample of pages

- Don't neglect heavy structural projects just because they take time

- Re-evaluate prioritization quarterly based on observed results

How do you ensure this approach works long-term?

Set up quarterly tracking of deployed fixes and their measured impact (organic traffic, rankings, conversions). This refines your estimates for future audits and tests your initial assumptions.
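A rough way to check your estimates each quarter is to compare them with what actually happened. The sketch below assumes each deployed fix is logged with estimated and actual person-days, plus estimated and measured gains in organic clicks; the field names and figures are hypothetical.

```python
from statistics import mean


def calibration(fixes: list[dict]) -> tuple[float, float]:
    """Return (effort_ratio, impact_ratio) averaged over deployed fixes.

    effort_ratio > 1 suggests effort is being underestimated;
    impact_ratio < 1 suggests impact is being overestimated.
    """
    effort_ratio = mean(f["actual_days"] / f["estimated_days"] for f in fixes)
    impact_ratio = mean(f["measured_gain"] / f["estimated_gain"] for f in fixes)
    return effort_ratio, impact_ratio


# Hypothetical quarterly log of deployed fixes.
deployed = [
    {"estimated_days": 2, "actual_days": 4, "estimated_gain": 1000, "measured_gain": 500},
    {"estimated_days": 5, "actual_days": 5, "estimated_gain": 200, "measured_gain": 200},
]
effort_ratio, impact_ratio = calibration(deployed)
print(f"Effort ran {effort_ratio:.2f}x the estimate; impact reached {impact_ratio:.0%} of the estimate.")
```

If both ratios drift far from 1 quarter after quarter, adjust the scoring scale before the next audit rather than the individual scores.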

If you find your prioritizations regularly miss the mark (effort underestimated, impact overvalued), it's a sign you lack hindsight or data. In that case, it might be wise to bring in an external SEO agency that has handled similar projects and can calibrate estimates more precisely.

Other SEO insights extracted from this same Google Search Central video · published on 06/11/2025
