Official statement

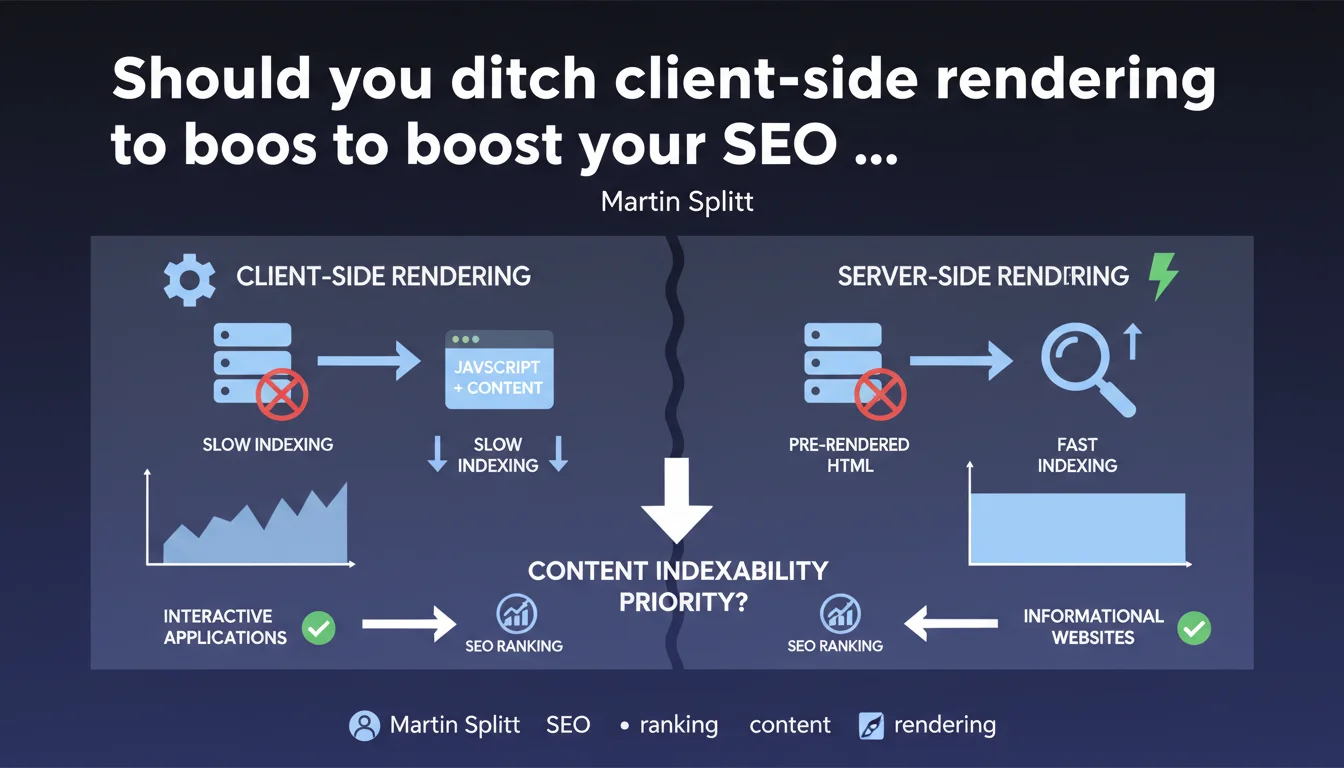

Martin Splitt confirms that client-side rendering (CSR) is not the best strategy for informational websites where indexability is the priority. Google favors architectures that enable direct access to content, particularly server-side rendering or hybrid approaches. Interactive applications remain a legitimate use case for CSR.

What you need to understand

Why does Google discourage client-side rendering for informational websites?

Client-side rendering forces Googlebot through an extra step: it downloads near-empty HTML, executes the JavaScript, waits for the DOM to build, and only then indexes the content. This additional rendering pass consumes crawl budget and delays indexation.

For an informational website — blog, media outlet, standard e-commerce — this technical complexity delivers no tangible SEO benefit. Instead, it multiplies friction points: blocking JavaScript, execution errors, renderer timeouts.

What exactly does Google mean by an "informational website"?

The line remains blurry, but Google clearly targets sites whose core value lies in static text content: articles, product sheets, category pages, documentation. Anything that can be indexed directly without user interaction.

Complex web applications — dashboards, product configurators, SaaS interfaces — aren't subject to this recommendation. Their primary function isn't to be crawled but to be used.

What technical alternatives does Google suggest?

Martin Splitt doesn't detail the recommended architectures, but the subtext is clear: server-side rendering (SSR), static site generation (SSG), or at minimum hybrid rendering that delivers critical content as plain HTML in the initial response.

Next.js, Nuxt, Astro and similar frameworks enable precisely this type of architecture. JavaScript enhances the experience without blocking initial indexation.
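To make the difference concrete, here is a minimal sketch in Python rather than in any of the frameworks above. It contrasts the initial HTML of a pure CSR shell with a hybrid page whose critical content is already present in the response. The function names, the `root` element id, and the bundle URL are assumptions made for illustration, not how Next.js, Nuxt, or Astro work internally.

```python
from html import escape


def render_csr_shell(bundle_url: str) -> str:
    """Pure CSR: the initial HTML has a mount point but no article content."""
    return (
        "<!doctype html><html><body>"
        '<div id="root"></div>'
        f'<script src="{escape(bundle_url, quote=True)}" defer></script>'
        "</body></html>"
    )


def render_hybrid_page(title: str, paragraphs: list[str], bundle_url: str) -> str:
    """Hybrid: critical content ships as HTML; the script only enhances it."""
    body = "".join(f"<p>{escape(p)}</p>" for p in paragraphs)
    return (
        "<!doctype html><html><body>"
        f'<article id="root"><h1>{escape(title)}</h1>{body}</article>'
        f'<script src="{escape(bundle_url, quote=True)}" defer></script>'
        "</body></html>"
    )
```

In the first case a crawler that does not execute JavaScript sees an empty `div`. In the second, the title and paragraphs are readable before any script runs.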

- CSR slows indexation and unnecessarily consumes crawl budget for informational websites

- Google clearly distinguishes between informational websites (content priority) and interactive applications (UX priority)

- Modern frameworks enable hybrid architectures that reconcile SEO and interactivity

- Google provided no performance metrics on CSR's actual impact on rankings

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes. SEO audits frequently reveal that pure CSR websites run into indexation problems: pages discovered but not indexed in Search Console, empty snippets in search results, abnormally long delays between publication and ranking.

A/B tests migrating from CSR to SSR regularly show significant gains: 30% to 50% more pages indexed within 48 hours, and improved positions on long-tail queries. You don't need an official statement to observe this; it has been measurable for years.

In what cases doesn't this rule apply?

Let's be honest: if your site is a complex application behind authentication, you probably don't care about indexation. A CRM, project management tool, or technical configurator has no need to be crawled by Google.

The trap is the middle ground: an e-commerce site that wants both an ultra-responsive UX and strong SEO performance. There, pure CSR is a bad compromise and hybrid rendering becomes essential. One point remains unverified: Google never clarifies at what threshold of interactive complexity CSR becomes acceptable again for an informational website.

What nuances should be added to this statement?

Martin Splitt remains vague on one critical point: the actual impact on rankings. Saying CSR "isn't recommended" doesn't mean it directly penalizes positions. The effect is indirect: fewer indexed pages means less potential organic visibility.

Another blind spot: no mention of Core Web Vitals. A poorly optimized SSR site can easily show catastrophic LCP, while a well-architected CSR (smart code splitting, intelligent lazy loading) can outperform it. Rendering mode is just one variable among many.

Practical impact and recommendations

What concrete steps should I take if my site is pure CSR?

First step: audit actual indexation. Compare published pages with indexed pages (Search Console → Indexing → Pages, formerly the Coverage report). If the gap exceeds 20-30%, CSR is a likely culprit.

Next, test Googlebot rendering with the URL Inspection tool in Search Console. Verify that critical content appears in the rendered HTML. If entire blocks are missing, or only appear after several seconds' delay in the browser, that's a red flag.
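The gap itself is simple arithmetic. As a rough sketch, assuming you have exported the published URLs (from a sitemap, for example) and the indexed URLs into two sets, it can be computed like this. The function name and the sample paths are made up for illustration.

```python
def indexation_gap(published: set[str], indexed: set[str]) -> float:
    """Share of published URLs missing from the index, between 0.0 and 1.0."""
    if not published:
        return 0.0
    missing = published - indexed
    return len(missing) / len(published)


# Hypothetical sample data: 5 published pages, 3 of them indexed.
published = {"/a", "/b", "/c", "/d", "/e"}
indexed = {"/a", "/b", "/c", "/x"}
gap = indexation_gap(published, indexed)
print(f"{gap:.0%} of published pages are not indexed")  # prints "40% ..."
```

URLs that are indexed but not in the published set (like `/x` here) are ignored, since the question is only how much of the published inventory is missing.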

What mistakes should you avoid when migrating CSR to SSR?

Don't underestimate the complexity of the migration. Moving from a React SPA to Next.js with SSR means rethinking component architecture, managing hydration, and avoiding inconsistencies between server and client rendering.

Classic error: migrating without measuring server response times. SSR that takes 800 ms to generate each page inflates TTFB and degrades the user experience. Pair SSR with caching: a CDN, an application cache, or incremental static regeneration (ISR).
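To illustrate the caching idea, here is a minimal sketch of a time-based page cache in Python. It is a simplified stand-in for what a CDN or ISR does, not their actual mechanism: real ISR, for instance, can serve a stale page while regenerating it in the background. The class name, the `render` callback, and the TTL value are assumptions for the example.

```python
import time
from typing import Callable


class TTLPageCache:
    """Caches rendered HTML per path and re-renders once an entry is older than the TTL."""

    def __init__(
        self,
        render: Callable[[str], str],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._render = render          # the expensive server-side render
        self._ttl = ttl_seconds
        self._clock = clock            # injectable so the cache can be tested
        self._entries: dict[str, tuple[float, str]] = {}

    def get(self, path: str) -> str:
        now = self._clock()
        entry = self._entries.get(path)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]            # fresh entry: skip the render entirely
        html = self._render(path)      # missing or expired: render and store
        self._entries[path] = (now, html)
        return html
```

With a 60-second TTL, a page that costs 800 ms to render is generated at most once per minute per path, and every other request is served from memory.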

How do I verify my site meets Google's recommendations?

Run a crawl with Screaming Frog with JavaScript rendering disabled. If the main content disappears, your site depends too heavily on CSR. Search engines should be able to access the content without executing a single line of JS.

Also check server logs: if Googlebot systematically re-crawls the same URLs at short intervals, it may be having trouble extracting content in a single pass.
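The same check can be approximated on a single page without a crawler. The sketch below, using only Python's standard library, extracts the text visible in the raw HTML (ignoring `script` and `style` contents) and reports which expected phrases are absent. It is a rough approximation, not a replica of Screaming Frog or Googlebot, and the helper names and sample phrases are hypothetical.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html: str) -> str:
    """Text a non-rendering crawler could read from the initial HTML."""
    parser = _TextExtractor()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


def missing_phrases(raw_html: str, expected: list[str]) -> list[str]:
    """Expected phrases that do not appear in the raw HTML's visible text."""
    text = visible_text(raw_html).lower()
    return [phrase for phrase in expected if phrase.lower() not in text]
```

Feed it the body of the initial HTTP response (before any script runs) together with a few phrases taken from the page's main content. A non-empty result means that content only exists after JavaScript execution.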
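As a starting point for that log check, the following sketch counts Googlebot requests per URL. It assumes access logs in the common "combined" format used by Apache and Nginx, and it identifies Googlebot by user-agent string only, which can be spoofed; confirming that a request really comes from Google requires a reverse DNS check that is not shown here. The regular expression and function name are illustrative.

```python
import re
from collections import Counter
from typing import Iterable

# Combined log format (assumed):
# host ident user [time] "METHOD path protocol" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines: Iterable[str]) -> Counter:
    """Counts requests per path whose user-agent mentions Googlebot."""
    hits: Counter = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits
```

Calling `googlebot_hits(open("access.log"))` (the file name is a placeholder) and then `.most_common(20)` on the result lists the URLs Googlebot requests most often, which is where unusually frequent re-crawls would show up.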

- Audit actual indexation rate via Search Console

- Test Googlebot rendering with the URL Inspection tool

- Crawl the site with JavaScript disabled to identify inaccessible content

- Measure server response times before any SSR migration

- Implement caching (CDN, ISR) to prevent performance regressions

- Monitor Googlebot logs to detect abnormal crawl patterns

❓ Frequently Asked Questions

Does client-side rendering directly penalize rankings in Google?

Can an e-commerce site use client-side rendering without hurting its SEO?

Do you have to abandon React or Vue.js to do SEO?

How can I tell whether my site suffers from CSR-related indexation problems?

Does switching to SSR automatically improve Core Web Vitals?

Source: Google Search Central video · published on 30/05/2023
