Official statement

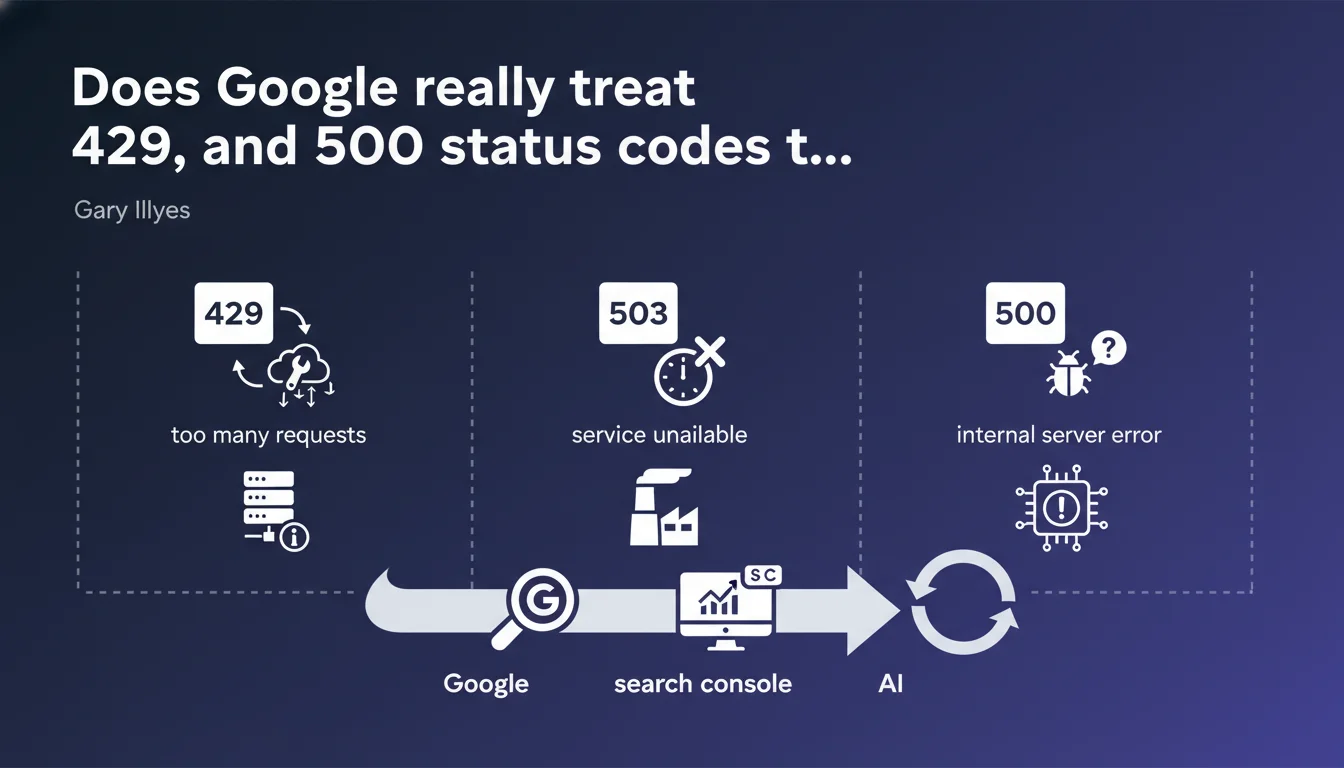

Google treats HTTP status codes 429 (too many requests), 503 (service unavailable), and probably 500 (server error) in a similar manner. Search Console also groups them together. For SEO, this means these errors trigger nearly identical crawl behavior: crawling slows down progressively, Googlebot retries, and the affected pages stay indexed for a limited time.

What you need to understand

Why does Google group these error codes together?

These three HTTP codes (429, 503, 500) share a common characteristic: they signal a temporary unavailability rather than a permanent error. Unlike a 404 or a 410, they don't say "this page doesn't exist" but rather "try again later".

For Googlebot, the logic is simple: it won't immediately penalize a site experiencing a traffic spike or temporary technical incident. The bot reduces its crawl frequency and retries access at increasingly longer intervals.
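To make "increasingly longer intervals" concrete, here is a minimal sketch of a geometric backoff schedule. Google does not publish Googlebot's actual retry timing, so the function name `retry_schedule` and every number below (10-minute base, factor of 2, 4-hour cap) are illustrative assumptions, not observed values.

```python
def retry_schedule(base_minutes, factor, attempts, cap_minutes):
    """Return the wait (in minutes) before each retry, growing geometrically up to a cap.

    All parameters are hypothetical: Googlebot's real schedule is not public.
    """
    waits = []
    wait = base_minutes
    for _ in range(attempts):
        waits.append(min(wait, cap_minutes))  # never wait longer than the cap
        wait *= factor                        # each retry waits longer than the last
    return waits


print(retry_schedule(base_minutes=10, factor=2, attempts=6, cap_minutes=240))
# → [10, 20, 40, 80, 160, 240]
```

The point of the sketch is the shape of the curve: the longer an error persists, the less often the URL is retried, which is why recovery in the crawl stats can lag behind the actual fix.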

What's the technical difference between these codes?

The 429 explicitly indicates rate limiting — you're asking the bot to slow down. The 503 signals a service unavailability (maintenance, overload). The 500 is an internal server error, often unintentional.

In theory, these nuances exist. In practice, Gary Illyes confirms that Google treats them with the same temporary tolerance. Search Console even aggregates them in the same error reports.

What happens if these codes persist?

If your site returns these codes repeatedly or over a prolonged period, Googlebot will eventually treat the URLs as permanently inaccessible. They can then be deindexed, even though technically the code only signals a temporary error.

The duration of tolerance is not public — we're talking about several days to several weeks depending on other signals (site history, usual crawl frequency, authority).

- The codes 429, 503, and 500 trigger similar behavior in Googlebot

- The bot slows down crawling and retries access at progressively longer intervals

- Indexation is maintained temporarily, but can be lost if the error persists

- Search Console groups these codes in server error reports

- No immediate penalty, but limited tolerance over time

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes — and it's actually one of the most empirically verifiable statements. In practice, we indeed observe that sites returning a 503 or 429 see their crawl budget drop quickly, with recrawl attempts spaced further apart. The 500, however, often generates more confusion on Google's side.

Let's be honest: the 500 is supposed to be an unintended error, not a rate limiting signal. Yet Gary Illyes says "probably" — which leaves room for uncertainty. [To verify]: we lack public data on the exact treatment of 500 compared to the other two.

What nuances should be clarified?

First nuance: not all 500s are equal. A sporadic 500 on a single URL won't have the same impact as a widespread 500 across the entire site. Google adjusts its reaction based on the error rate and history.

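Second point: 429 is the only code that explicitly communicates a desire to limit crawling. It can be accompanied by a Retry-After header to tell the bot when to come back, and Googlebot generally takes it into account. The same header is also valid on a 503, where it typically announces the end of a maintenance window. It is not conventionally sent with a 500, which signals an unplanned failure.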

In which cases does this rule not apply?

If your site returns a 500 on all URLs for several days, Google won't wait indefinitely. Past a certain threshold — variable depending on site authority — pages will be deindexed, even though the code only indicates a temporary error.

Another edge case: some sites use a 503 for scheduled maintenance with a Retry-After of a few hours. Google generally respects this timeframe. But if you forget to remove the 503 and it stays active for several weeks, indexation will eventually be lost.

Practical impact and recommendations

What should I do concretely if my site returns these codes?

First action: identify the cause. A 429 is often intentional (rate limiting enabled), a 503 can signal maintenance or overload, a 500 indicates a server bug. Apache/Nginx logs and application monitoring are your best allies.
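As a starting point for that log review, the sketch below counts 429, 500, and 503 responses served to requests whose user agent mentions Googlebot. It assumes the common "combined" access log format used by default in Apache and Nginx; the function name `count_googlebot_errors` and the sample lines are hypothetical. Matching on the user-agent string does not prove the request really came from Google, since that string can be spoofed.

```python
import re
from collections import Counter

# Matches the request and status fields of a combined-format log line,
# e.g. "GET /path HTTP/1.1" 503
LINE_RE = re.compile(r'"[A-Z]+ (?P<path>\S+) [^"]*" (?P<status>\d{3}) ')
WATCHED = {"429", "500", "503"}


def count_googlebot_errors(lines):
    """Count watched status codes on lines whose user agent mentions Googlebot."""
    counts = Counter()
    for line in lines:
        if "Googlebot" not in line:  # user-agent match only; not a verified bot
            continue
        match = LINE_RE.search(line)
        if match and match.group("status") in WATCHED:
            counts[match.group("status")] += 1
    return counts


# Hypothetical sample lines for illustration
sample = [
    '66.249.66.1 - - [30/May/2023:10:00:00 +0000] "GET /a HTTP/1.1" 503 0 "-" "Googlebot/2.1"',
    '66.249.66.1 - - [30/May/2023:10:00:05 +0000] "GET /b HTTP/1.1" 200 512 "-" "Googlebot/2.1"',
    '203.0.113.9 - - [30/May/2023:10:00:07 +0000] "GET /a HTTP/1.1" 503 0 "-" "Mozilla/5.0"',
    '66.249.66.1 - - [30/May/2023:10:00:09 +0000] "GET /c HTTP/1.1" 429 0 "-" "Googlebot/2.1"',
]
errors = count_googlebot_errors(sample)
print(dict(errors))
```

On a real server you would pass an open log file instead of `sample`, since the function only needs an iterable of lines.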

If the code is temporary and justified (traffic spike, planned maintenance), add a Retry-After header to tell Googlebot when to come back. This limits crawl budget loss.
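One way to serve such a response is sketched below as a small WSGI application that returns a 503 with a Retry-After header while a maintenance flag is on. The names `make_app` and `call_app`, and the one-hour delay, are assumptions made for illustration; in production the same header is more often set in the web server or CDN configuration.

```python
from wsgiref.util import setup_testing_defaults


def make_app(maintenance, retry_after_seconds=3600):
    """Build a WSGI app that answers 503 + Retry-After while `maintenance` is True."""
    def app(environ, start_response):
        if maintenance:
            body = b"Service temporarily unavailable\n"
            start_response("503 Service Unavailable", [
                ("Content-Type", "text/plain; charset=utf-8"),
                ("Content-Length", str(len(body))),
                ("Retry-After", str(retry_after_seconds)),  # delay in seconds
            ])
            return [body]
        body = b"OK\n"
        start_response("200 OK", [
            ("Content-Type", "text/plain; charset=utf-8"),
            ("Content-Length", str(len(body))),
        ])
        return [body]
    return app


def call_app(app):
    """Invoke a WSGI app in-process and return (status, headers, body)."""
    captured = {}

    def start_response(status, headers):
        captured["status"] = status
        captured["headers"] = dict(headers)

    environ = {}
    setup_testing_defaults(environ)  # fills in a minimal valid WSGI environ
    body = b"".join(app(environ, start_response))
    return captured["status"], captured["headers"], body


status, headers, _ = call_app(make_app(maintenance=True))
print(status, headers["Retry-After"])
```

Turning the flag off restores normal 200 responses, which is the step that must not be forgotten once maintenance ends.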

What mistakes should you absolutely avoid?

Never return a 429, 503, or 500 across the entire site for several days without corrective action. Google may interpret this as abandonment or a prolonged outage, and may deindex pages at scale.

Another common mistake: using a 503 to block crawling on certain sections. It's tempting, but risky. If Google retries multiple times without success, it may consider these URLs as permanently inaccessible.

How can I verify that my site handles these codes correctly?

Check Search Console regularly, in the "Page indexing" report (formerly "Coverage") and the "Crawl stats" report. The 429/503/500 errors are grouped there. A sudden spike should trigger an alert.

Test your HTTP headers with a tool like curl or an online validator. Verify that Retry-After is present and consistent if you intentionally use a 429 or 503.
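To check that a Retry-After value is "consistent", it helps to know that the HTTP specification allows two forms: a number of seconds or an HTTP date. The sketch below parses both with the Python standard library. The function name `retry_after_seconds` is hypothetical, and the header value is assumed to have been copied from a response you fetched yourself, for example with curl.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def retry_after_seconds(value, now=None):
    """Return the delay in seconds encoded by a Retry-After value, or None if invalid."""
    now = now or datetime.now(timezone.utc)
    value = value.strip()
    if value.isdigit():  # delay-seconds form, e.g. "120"
        return int(value)
    try:
        when = parsedate_to_datetime(value)  # HTTP-date form
    except (TypeError, ValueError):  # raised for unparseable dates
        return None
    if when.tzinfo is None:  # treat naive results as UTC
        when = when.replace(tzinfo=timezone.utc)
    return max(0, int((when - now).total_seconds()))


fixed_now = datetime(2023, 5, 30, 12, 0, 0, tzinfo=timezone.utc)
print(retry_after_seconds("120"))
print(retry_after_seconds("Tue, 30 May 2023 13:00:00 GMT", now=fixed_now))
print(retry_after_seconds("soon"))
```

A `None` result, or a delay of several weeks, is a sign that the header was misconfigured or left over from an earlier maintenance window.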

- Monitor server logs to detect 429, 503, 500 codes in real time

- Add a Retry-After header on 429s and 503s to guide Googlebot

- Never leave these codes active for more than 48-72 hours without corrective action

- Check Search Console weekly to spot server errors

- Test HTTP headers with curl or a dedicated validator

- Document planned maintenance and inform Google via Search Console if possible

- Segment errors by URL type (strategic vs secondary) to prioritize fixes
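The last item in the checklist can be sketched as a simple grouping step. The path prefixes in `STRATEGIC_PREFIXES` are placeholders for whatever sections matter most on your own site, and `segment_errors` is a hypothetical helper that takes (path, status) pairs, for instance extracted from your access logs.

```python
from collections import defaultdict

# Hypothetical prefixes: replace with the sections that drive your traffic
STRATEGIC_PREFIXES = ("/products/", "/category/")
WATCHED_STATUSES = (429, 500, 503)


def segment_errors(hits):
    """Group watched error codes by segment: {segment: {status: count}}."""
    result = {"strategic": defaultdict(int), "secondary": defaultdict(int)}
    for path, status in hits:
        if status not in WATCHED_STATUSES:
            continue  # ignore successful and unrelated responses
        segment = "strategic" if path.startswith(STRATEGIC_PREFIXES) else "secondary"
        result[segment][status] += 1
    return {name: dict(counts) for name, counts in result.items()}


# Hypothetical hits for illustration
hits = [
    ("/products/1", 503),
    ("/blog/x", 500),
    ("/products/2", 503),
    ("/category/a", 200),
]
print(segment_errors(hits))
# → {'strategic': {503: 2}, 'secondary': {500: 1}}
```

Errors concentrated in the strategic segment are the ones to fix first, since those are the pages whose loss from the index would cost the most.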

❓ Frequently Asked Questions

Does Google immediately deindex pages that return a 503?

Should I use a 429 or a 503 to limit Googlebot's crawling?

Is the Retry-After header mandatory with a 429 or 503?

How long does Google tolerate a 500 before deindexing?

Does Search Console distinguish between 429, 503, and 500 codes in its reports?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 30/05/2023
