Official statement

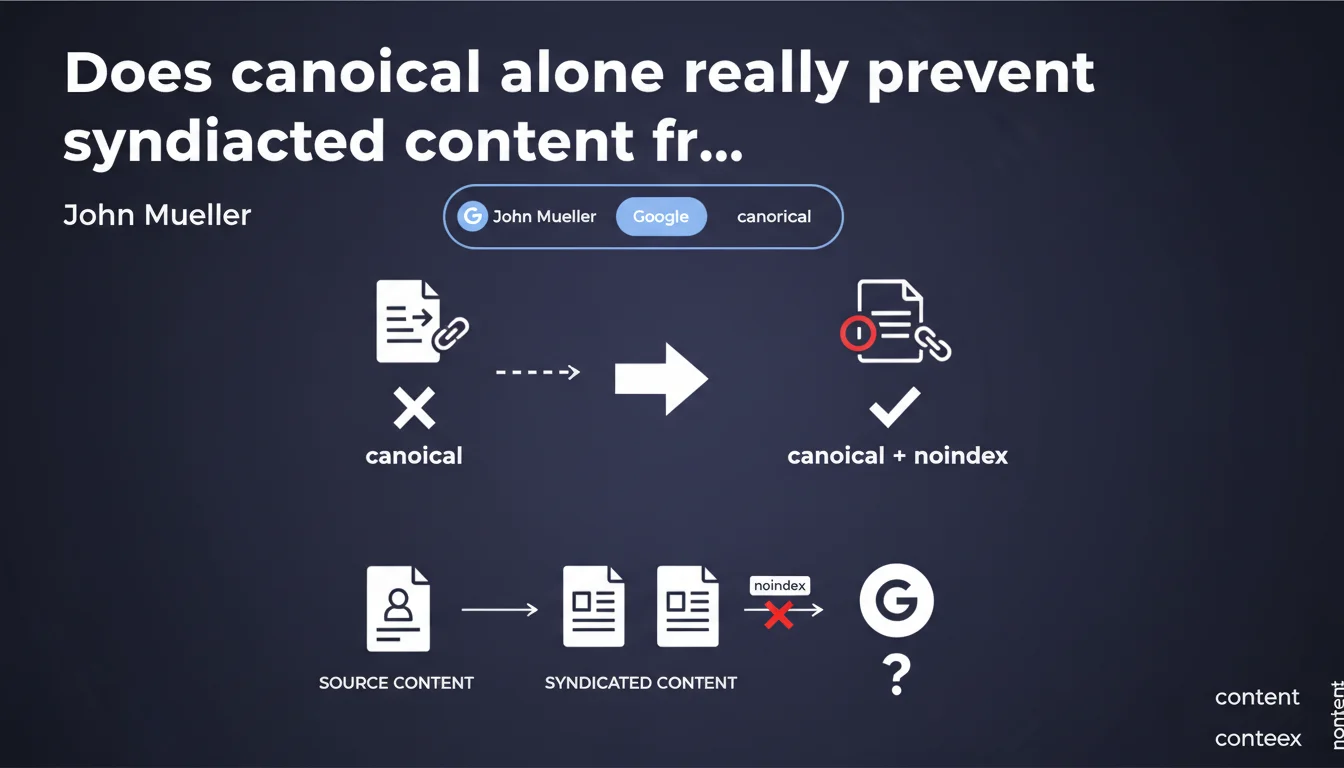

A canonical link alone doesn't guarantee that syndicated content is excluded from Google Discover. Google recommends adding a meta robots noindex tag to syndicated versions to block their appearance completely. This recommendation challenges the traditional use of canonical as a deduplication signal.

What you need to understand

Why isn't canonical enough for Discover anymore?

The canonical link has always been presented as the preferred tool to tell Google which version of duplicate content to prioritize. In traditional SEO logic, a properly implemented canonical should be enough to consolidate signals toward the original version.

Except that Google Discover works differently. The feed doesn't just crawl and index: it actively selects and pushes content to users. In this context, canonical remains an indicative signal — Google can choose to ignore it if other criteria (perceived freshness, the syndicator's trusted domain, anticipated engagement) point to the syndicated version.

What does Mueller's statement actually mean in practice?

Mueller recommends adding a meta robots noindex tag on syndicated versions if you want to guarantee their exclusion from Discover. The noindex transforms a weak signal (canonical) into a strict directive: the page should not appear in results, including Discover.

This is a paradigm shift. Until now, you used canonical to say "this page exists elsewhere in better form." Now, for Discover, you need to say "this page should not be shown at all." The distinction is important.

What are the risks if you ignore this recommendation?

Without noindex, your syndicated versions can appear in Discover instead of the original. Result: you lose qualified traffic, engagement signals (clicks, time spent), and potentially the visibility you should have captured on your own domain.

Even worse, if the syndicator is a large news site with strong authority, Google may prioritize its version — even if the canonical points to you. This is exactly the scenario this directive aims to prevent.

- Canonical alone is an indicative signal, not an absolute directive for Discover

- Noindex completely blocks syndicated content from appearing in Discover

- Without noindex, syndicated versions can overshadow the original in the feed

- This recommendation applies specifically to Discover, not necessarily to traditional search

SEO Expert opinion

Is this guidance consistent with real-world observations?

Yes and no. We do observe cases where syndicated versions appear in Discover despite properly implemented canonical. This confirms that Google treats Discover with its own rules — and that canonical doesn't carry the absolute weight we attribute to it elsewhere.

On the other hand, recommending noindex for syndicated content poses an obvious problem: strict noindex prevents all indexation, not just Discover appearance. If the syndicator wants their content to remain in traditional search (with canonical pointing to the original), this directive becomes inapplicable. [To verify]: Mueller doesn't clarify whether Google is considering a more granular mechanism (like data-nosnippet or conditional X-Robots-Tag) to target only Discover.

In which cases doesn't this rule apply?

If you're the original publisher and want your syndication partners to index the content while pointing to you via canonical, noindex isn't an option. You'd lose link distribution, brand signals, and the expanded reach that syndication can provide.

Mueller's directive is better suited to situations where you control both versions (original + syndicated) or have an agreement with the syndicator to completely block their version from Discover. In other cases, you face a trade-off between Discover visibility and traditional SEO benefits of syndication.

What's the alternative if you want to keep syndicated content indexable?

Frankly, Google doesn't offer a clean solution for this scenario. The data-nosnippet attribute only keeps marked text out of search result snippets; it is not documented as a way to keep a page out of Discover. An X-Robots-Tag header set under server-side conditions could theoretically target Discover, but nothing is officially documented.

In practice, you're stuck: either you accept that syndicated content sometimes appears in Discover (and lose traffic), or you block it entirely with noindex (and lose syndication's SEO benefits). [To verify]: it would be helpful if Google clarified whether there's a way to target Discover without impacting traditional indexation.
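The trade-off described above can be written down as a small decision helper. This is only a sketch of the article's reasoning: the function name, its two parameters, and the returned labels are hypothetical and do not correspond to any Google tool or setting.

```python
def syndication_directive(keep_copy_in_search: bool, can_edit_copy: bool) -> str:
    """Pick a strategy for a syndicated copy, following the trade-off in the text.

    Hypothetical helper: names and return labels are illustrative only.
    """
    if keep_copy_in_search:
        # The copy stays indexable, so it may still surface in Discover.
        return "canonical only"
    if can_edit_copy:
        # The copy is blocked from results; the canonical still points to the original.
        return "noindex plus canonical"
    # No direct control over the copy: the partner has to apply noindex.
    return "negotiate noindex with partner"


print(syndication_directive(keep_copy_in_search=False, can_edit_copy=True))
```

The first branch makes the cost explicit: keeping the copy in traditional search means accepting that it can appear in Discover.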

Practical impact and recommendations

What do you need to do concretely if you syndicate your content?

If you control syndicated versions (for example, republishing on a partner site or third-party platform), add `<meta name="robots" content="noindex, follow">` in the `<head>` of the syndicated version. The follow value is meant to preserve the SEO juice passed through the canonical, even if the page isn't indexed.

If you don't directly control the syndicator, integrate this clause into your syndication contracts: require that the partner apply noindex on their version to preserve your Discover visibility. This is a negotiation to conduct upfront.
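As a minimal sketch of the tag placement described above, a template helper could emit the head tags for each copy of an article. The function name, its parameters, and the example.com URL are assumptions for illustration, not part of any CMS API.

```python
from html import escape


def robots_head_tags(original_url: str, is_syndicated: bool) -> list[str]:
    """Return the <head> tags for one copy of an article.

    Hypothetical helper: the name and parameters are illustrative only.
    """
    # Every copy points its canonical at the original URL.
    tags = [f'<link rel="canonical" href="{escape(original_url, quote=True)}">']
    if is_syndicated:
        # Only the syndicated copy gets noindex; the original must stay indexable.
        tags.append('<meta name="robots" content="noindex, follow">')
    return tags


original = "https://example.com/articles/my-story"  # placeholder URL
print("\n".join(robots_head_tags(original, is_syndicated=True)))
```

Driving the noindex from an explicit `is_syndicated` flag, rather than from a site-wide template setting, makes it harder for the directive to end up on the original by accident.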

How do you verify your implementation is correct?

Use the URL Inspection tool in Search Console on syndicated versions. Verify that Google detects the noindex and that the page isn't indexed. In parallel, check that the canonical points to the original.

Monitor your appearances in Discover via the Discover report in Search Console. If syndicated URLs continue to appear despite noindex, there's an implementation problem or processing delay on Google's end.
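Before relying on Search Console, a quick local check of the served HTML can catch obvious mistakes. The sketch below uses only Python's standard `html.parser`; the class and function names are hypothetical. It inspects the raw HTML string only, so it does not see an X-Robots-Tag header or tags injected by JavaScript, and it does not replace the URL Inspection tool.

```python
from html.parser import HTMLParser


class HeadDirectiveParser(HTMLParser):
    """Collect robots meta directives and the canonical URL from an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.robots = set()    # lowercased directives, e.g. {"noindex", "follow"}
        self.canonical = None  # href of the last rel="canonical" link seen

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attr.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attr.get("content", "").split(","):
                if directive.strip():
                    self.robots.add(directive.strip().lower())
        elif tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href") or None


def check_syndicated_copy(html: str, original_url: str) -> list[str]:
    """Return a list of problems found on a syndicated copy (empty if none)."""
    parser = HeadDirectiveParser()
    parser.feed(html)
    problems = []
    # "none" is shorthand for "noindex, nofollow".
    if "noindex" not in parser.robots and "none" not in parser.robots:
        problems.append("missing noindex")
    if parser.canonical != original_url:
        problems.append("canonical does not point to the original")
    return problems


page = (
    '<html><head><link rel="canonical" href="https://example.com/a">'
    '<meta name="robots" content="noindex, follow"></head><body></body></html>'
)
print(check_syndicated_copy(page, "https://example.com/a"))
```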

What mistakes should you absolutely avoid?

Never put noindex on the original — this seems obvious, but deployment errors happen more often than you'd think. A misconfigured template or CMS applying the directive at the wrong level can cost you all visibility.
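One way to catch this kind of deployment error is a simple audit over a list of pages, for example one built from a crawl export. The sketch below assumes a hypothetical `PageRecord` structure and role labels ("original", "syndicated"); neither comes from a specific crawler or from Search Console.

```python
from dataclasses import dataclass


@dataclass
class PageRecord:
    url: str
    role: str          # "original" or "syndicated" (labels assumed for this sketch)
    has_noindex: bool  # whether a noindex directive was detected on the page


def find_misplaced_noindex(pages: list[PageRecord]) -> list[str]:
    """Return the URLs of original pages that carry a noindex directive."""
    return [p.url for p in pages if p.role == "original" and p.has_noindex]


pages = [
    PageRecord("https://example.com/a", "original", False),
    PageRecord("https://partner.example/a", "syndicated", True),
    PageRecord("https://example.com/b", "original", True),  # deployment error
]
print(find_misplaced_noindex(pages))
```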

Also avoid combining noindex and canonical in contradictory ways. If a page has noindex, canonical no longer really makes sense from an indexation standpoint — even though technically Google can still follow the link. Clarify your intention: either deduplication (canonical alone) or blocking (noindex).

- Add meta robots noindex on all syndicated versions meant to be excluded from Discover

- Keep the canonical pointing to the original even with noindex, to preserve consolidation signals

- Verify implementation using the URL Inspection tool in Search Console

- Integrate this clause into syndication contracts if you don't directly control the partner

- Monitor the Discover report to detect any undesired appearances

- Clearly document which version should be indexed and which should be blocked

❓ Frequently Asked Questions

Does noindex on a syndicated version also prevent it from being indexed in traditional search?

Can you use robots.txt to block only Discover?

If the syndicator refuses to add noindex, what options remain?

Does noindex affect the transfer of SEO juice via the canonical?

Does this recommendation also apply to RSS feed aggregators?

🎥 From the same video 18

Other SEO insights extracted from this same Google Search Central video · published on 07/06/2023
