Official statement

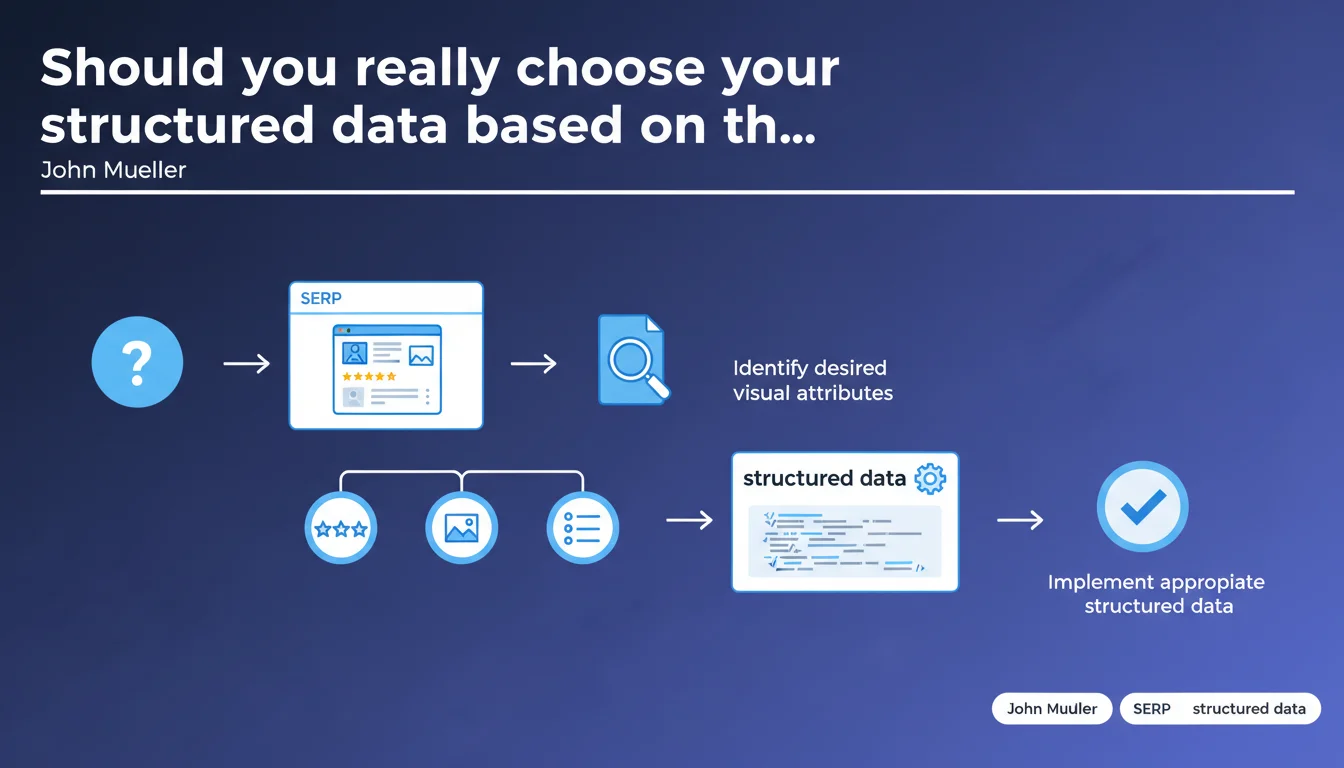

Google inverts traditional logic: instead of implementing structured data according to your site's type, you must first identify which rich snippets you want to appear in the SERPs, then implement only the schemas that trigger these displays. A results-driven approach rather than blind compliance.

What you need to understand

John Mueller's statement challenges a common practice: systematically implementing all available schemas for a given content type. Many e-commerce sites, for example, add Product, Offer, Review, AggregateRating, BreadcrumbList... without really questioning what they're for.

The proposed approach reverses the reasoning. It starts from the desired result in the SERPs — review stars, prices, availability, accordion FAQs, breadcrumb trails — then identifies the specific structured data that triggers these displays.

Why is Google encouraging this targeted method?

Because the inflation of structured data poses a quality problem. Too many sites implement unnecessary schemas, sometimes poorly filled out, which pollute semantic extraction without adding value for either the search engine or the user.

By pushing webmasters to focus on what has a visible impact, Google implicitly filters implementations. Only attributes that produce a visible gain in the results justify the effort, and markup that earns a display is also markup that site owners have a reason to keep accurate.

Concretely, what changes for SEO practitioners?

The approach becomes strategic rather than technical. Before coding, you must scan competitor SERPs, identify which rich snippets appear for your target queries, then check in Google's documentation which schemas trigger these displays.

It also means there's no point implementing schema that has no visual equivalent in the results. If Google never displays recipes in carousel format for your niche, implementing Recipe becomes pointless — unless you're targeting other search engines or voice assistants.

- Visual objective takes priority over theoretical schema compliance

- A minimal, targeted implementation is better than an exhaustive, poorly filled catalog

- You must analyze SERPs before implementing, not after

- Structured data becomes a display lever, not a compliance checklist
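The reasoning above, from desired display back to required schema, can be sketched as a simple lookup. This is a minimal illustration, not Google's official mapping: the display names and the `DISPLAY_TO_SCHEMAS` table are assumptions made for the example, and each entry should be checked against Google's current rich results documentation.

```python
# Illustrative mapping from a desired SERP display to the schema.org types
# commonly associated with it. The keys and the table itself are assumptions
# for this sketch; verify every entry against Google's documentation.
DISPLAY_TO_SCHEMAS = {
    "review_stars": {"Product", "AggregateRating"},
    "price_and_availability": {"Product", "Offer"},
    "breadcrumb_trail": {"BreadcrumbList"},
    "faq_accordion": {"FAQPage"},
}


def schemas_to_implement(target_displays):
    """Return the sorted list of schema types needed for the targeted displays."""
    needed = set()
    for display in target_displays:
        if display not in DISPLAY_TO_SCHEMAS:
            # Fail loudly rather than silently implementing nothing.
            raise KeyError(f"No known schema mapping for display: {display}")
        needed |= DISPLAY_TO_SCHEMAS[display]
    return sorted(needed)


if __name__ == "__main__":
    print(schemas_to_implement(["review_stars", "breadcrumb_trail"]))
```

Starting from the list of targeted displays keeps the implementation scope explicit: a schema type that no target requires never enters the backlog.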

SEO Expert opinion

Is this approach really new or just a reframing?

Let's be honest: experienced practitioners have been doing this for years already. Nobody wastes time implementing schemas without visible returns. But the official statement legitimizes a principle that many were applying empirically.

What changes is that Google is saying it publicly. It provides legitimacy to refuse client requests like "we want all possible schema." The gain: focusing efforts on what actually moves the needle in the SERPs.

What are the blind spots of this recommendation?

The problem is that Google doesn't always clearly document which schemas trigger which displays. Some rich snippets appear in unpredictable ways, even intermittently. [To verify]: the algorithm that decides whether to display a rich snippet or not remains opaque.

Another point: this logic works well for standard results, but what about voice assistants, Google Discover, or complex featured snippets? Some schemas may have no visible impact in standard desktop results yet still play a role in other display contexts.

In which cases does this rule not fully apply?

For news sites, for example, implementing NewsArticle with complete metadata remains essential even though appearing in Top Stories is never guaranteed. Google uses this data to determine eligibility in the first place.

Same for local sites: LocalBusiness must be implemented exhaustively, even if not all fields generate visible display. Some attributes serve semantic matching more than visual enhancement.

Practical impact and recommendations

What must you do concretely to apply this approach?

First, audit competitor SERPs on your target queries. Identify which types of rich snippets appear: stars, prices, FAQs, How-To, videos, images... Note which generate the most clicks or visual attention.
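One way to organize that audit is to record, for each target query, which rich result types you observed by hand, then count how often each appears. The sketch below assumes manually collected observations; the function name and the display labels are hypothetical.

```python
from collections import Counter


def rank_observed_displays(observations):
    """Count on how many target queries each rich result type was observed.

    `observations` maps a query string to the list of display types noted
    for that query during a manual SERP review.
    """
    counts = Counter()
    for displays in observations.values():
        # Count each display type at most once per query.
        counts.update(set(displays))
    # Most frequently observed display types come first.
    return counts.most_common()
```

Display types that show up on most target queries are the natural first candidates; those that never appear can be deprioritized.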

Next, consult Google's official documentation on eligible rich results. Cross-reference it with your field observations: some documented displays never appear in your sector, while other, undocumented ones may emerge.

Once targets are identified, implement only the corresponding schemas. Validate the markup with Google's Rich Results Test, then measure real impact in Search Console: impressions, CTR, appearance in enhancement reports.
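As an illustration of "only the corresponding schemas", here is a minimal sketch that builds Product markup limited to price, availability, and (optionally) review stars, then serializes it as JSON-LD. The function names and parameters are hypothetical, and the property set is an assumption for the example; check Google's Product documentation for the properties it currently requires.

```python
import json


def product_jsonld(name, price, currency, availability,
                   rating_value=None, review_count=None):
    """Build Product markup with only the properties tied to targeted displays."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            # availability is a schema.org enumeration value such as "InStock"
            "availability": f"https://schema.org/{availability}",
        },
    }
    # Add rating markup only when stars are a target and real data exists.
    if rating_value is not None and review_count is not None:
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        }
    return data


def to_script_tag(data):
    """Serialize the markup as a JSON-LD script element for the page's HTML."""
    # Escape "</" so a value can never close the script element early.
    payload = json.dumps(data).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"
```

Omitting the rating block when no genuine rating data exists avoids the poorly filled markup the article warns about.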

What errors must you absolutely avoid?

Don't implement structured data by mimicry without verifying it generates a display. Many sites copy a competitor's schema without analyzing whether it actually produces a rich snippet.

Also avoid the opposite trap: neglecting a schema because it doesn't generate immediate display. Some attributes serve the overall semantic context — they're not useless, just less directly measurable.

Finally, don't forget that eligibility rules evolve. A schema that's inactive today may become a trigger tomorrow. Regular monitoring of SERP evolution remains essential.

- Scan competitor SERPs to identify which rich snippets are present

- Check Google's documentation to find out which schemas trigger them

- Implement only structured data that corresponds to the displays you're targeting

- Test the implementation with the Rich Results Test

- Measure impact via Search Console (impressions, CTR, enhancement reports)

- Document which schemas generate which displays in your specific sector

- Schedule quarterly SERP reviews to detect new formats
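For the measurement step, a rough before/after CTR comparison can be computed from clicks and impressions taken from the Search Console performance report. This is a minimal sketch under stated assumptions: the dictionary keys `clicks` and `impressions` are hypothetical names for values you would copy from your own export, and a simple before/after difference does not isolate the effect of the markup from seasonality or ranking changes.

```python
def ctr(clicks, impressions):
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def ctr_change_points(before, after):
    """Difference in CTR between two periods, in percentage points.

    `before` and `after` are dicts with "clicks" and "impressions" keys.
    """
    return (ctr(**after) - ctr(**before)) * 100
```

Comparing periods of equal length for the same set of pages and queries makes the difference easier to interpret.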

❓ Frequently Asked Questions

Should I remove structured data that doesn't generate a rich result?

How do I know which schemas trigger which displays in my niche?

Does this approach apply to news sites and local sites?

Should you implement structured data that has no visible impact today?

Does the Rich Results Test guarantee that the rich result will actually be displayed?

🎥 From the same video 15

Other SEO insights extracted from this same Google Search Central video · published on 29/04/2022
