Official statement

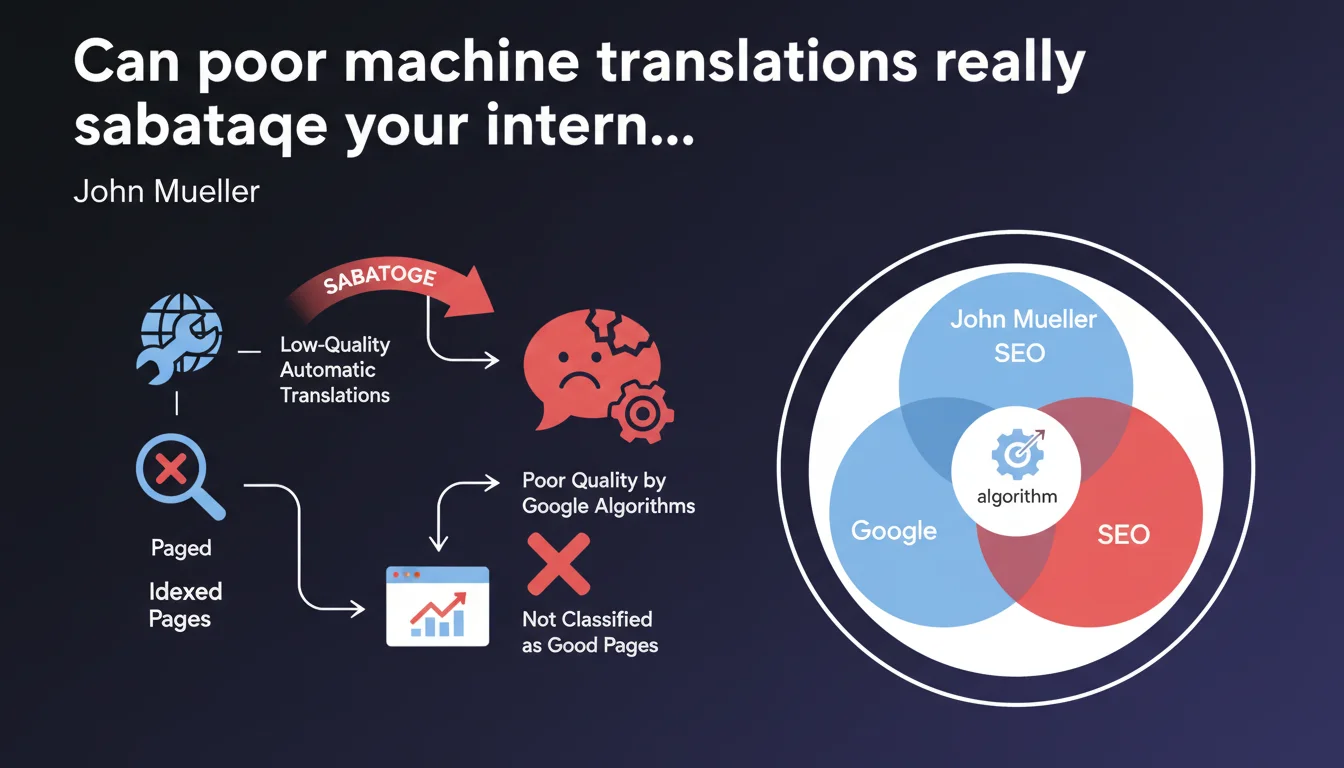

Google may treat low-quality machine-translated pages as poor-quality content and penalize them in search results. Indexing raw, unreviewed translations can harm the perceived overall quality of a multilingual website. Machine translation remains acceptable if it is reworked and validated.

What you need to understand

Why does Google specifically target automatic translations?

Google has long differentiated low-quality automatically generated content from useful content. Poorly executed machine translations fall into this first category: awkward syntax, mistranslations, incomprehensible idioms.

The problem doesn't lie with the translation tool itself — DeepL, Google Translate, or otherwise. It's the quality of the final indexed result that matters. If your translated pages offer a degraded user experience, Google's algorithms will detect this through multiple signals: high bounce rate, low time on page, lack of engagement.

What does Google mean by "algorithms" in this context?

John Mueller of Google remains intentionally vague here. We're likely talking about broad quality systems (formerly Panda) that evaluate page relevance and usefulness, not necessarily a targeted manual penalty.

Behavioral signals also play a role: if users immediately leave your translated pages to return to results, that's a clear indicator something is wrong. Google then adjusts the perceived quality score of these pages, or even the entire domain if the issue is widespread.

Does this statement apply only to large-scale multilingual sites?

No. Even a site with 3-4 language versions can be impacted if translations are poorly done. The volume of affected pages certainly aggravates the problem: an e-commerce site with 10,000 product pages machine-translated without review presents a systemic risk.

But a corporate blog with 20 poorly translated articles can also suffer locally: the translated pages won't rank, and if they represent a significant portion of indexed content, they can pull down the overall domain quality perception.

- Machine translation is not prohibited: it's the quality of the final content that matters

- Algorithms evaluate user experience: syntax, relevance, usefulness to the reader

- High volume of mediocre translations can affect the quality perception of the entire site

- Behavioral signals: high bounce rate and low engagement reveal translation problems

- No distinction between tools: DeepL, Google Translate, ChatGPT — only the indexed result matters

SEO Expert opinion

Is this position consistent with field observations?

Absolutely. I've seen sites lose 40-60% of organic traffic on certain language versions after deploying raw machine translations. The pattern is classic: massive rollout, rapid indexing, then gradual drop in SERPs over 2-3 months.

What's interesting — and what Mueller doesn't specify — is that the impact varies enormously depending on the target language. EN→FR or EN→ES translations are generally better than EN→JA or EN→AR, where cultural and syntactic nuances create catastrophic results without human intervention. [To verify]: Does Google apply different quality thresholds depending on the language pair? No public data on this.

What nuances should be added to this statement?

Mueller speaks of "low-quality automatic translations that are indexed." The key word here is "indexed." If you use machine translation as an unpublished internal draft, no problem. The risk emerges when you publish and let Google crawl mediocre content.
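One way to act on the "indexed" nuance is to keep unreviewed drafts out of the index until a human has validated them. Here is a minimal sketch, assuming a hypothetical `TranslatedPage` record with a `reviewed` flag (both are my own invention for illustration). The `X-Robots-Tag: noindex` HTTP header itself is a directive Google documents; how you attach it depends on your stack.

```python
from dataclasses import dataclass


@dataclass
class TranslatedPage:
    # Hypothetical record; the field names are assumptions for illustration.
    url: str
    machine_translated: bool
    reviewed: bool


def robots_headers(page: TranslatedPage) -> dict[str, str]:
    """Return extra HTTP response headers for a page.

    Unreviewed machine translations get `X-Robots-Tag: noindex` so they are
    kept out of the index; every other page gets no extra header.
    """
    if page.machine_translated and not page.reviewed:
        return {"X-Robots-Tag": "noindex"}
    return {}


draft = TranslatedPage("/fr/guide", machine_translated=True, reviewed=False)
validated = TranslatedPage("/fr/guide", machine_translated=True, reviewed=True)
print(robots_headers(draft))
print(robots_headers(validated))
```

Once a translation has been reviewed, flipping the flag removes the header and the page becomes eligible for indexing again on the next crawl.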

Another nuance: not all content requires the same level of linguistic quality. Standardized product descriptions, technical FAQs, legal notices — these elements can often pass with slightly polished machine translation. In contrast, high-value pages (guides, in-depth articles, sales pages) require full human intervention.

Let's be honest: many multilingual sites thrive with machine translations plus partial human review. The key is the quality-to-volume ratio. If 80% of your translated content is correct and useful, the remaining 20% poses less of a problem.

In what cases does this rule not really apply?

If your original content is already of mediocre quality, translation isn't the main problem. I've audited sites where the EN version was thin content stuffed with keywords, and the FR translation only amplified the existing mediocrity. Google penalizes thin content, not translation specifically.

Another case: highly technical sites with ultra-specialized vocabulary. Current machine translation tools (especially with custom glossaries) often produce more consistent results than human translators unfamiliar with the domain. In this context, the "machine translation = poor quality" argument doesn't hold.

Practical impact and recommendations

What should you actually do with your machine-translated content?

First step: audit your existing language versions. Identify machine-translated pages and check their performance in Search Console (impressions, CTR, average position). If certain languages significantly underperform, it's likely a signal of insufficient quality.
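To make that audit concrete, here is a rough sketch that groups page-level data by language and compares CTR and average position. It assumes rows shaped like a Search Console "Pages" export and a URL structure where the language is the first path segment (`/fr/`, `/es/`). The sample data and helper names are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical rows, shaped like a Search Console "Pages" export.
rows = [
    {"page": "https://example.com/en/guide", "clicks": 120, "impressions": 2000, "position": 6.0},
    {"page": "https://example.com/fr/guide", "clicks": 10, "impressions": 1500, "position": 18.0},
    {"page": "https://example.com/fr/faq", "clicks": 5, "impressions": 500, "position": 22.0},
    {"page": "https://example.com/es/guide", "clicks": 60, "impressions": 1200, "position": 9.0},
]


def language_of(url: str) -> str:
    """Assumption: the language code is the first path segment."""
    return urlparse(url).path.strip("/").split("/")[0]


def summarize_by_language(rows):
    """Aggregate CTR and impression-weighted average position per language."""
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0, "weighted_pos": 0.0})
    for row in rows:
        t = totals[language_of(row["page"])]
        t["clicks"] += row["clicks"]
        t["impressions"] += row["impressions"]
        t["weighted_pos"] += row["position"] * row["impressions"]
    summary = {}
    for lang, t in totals.items():
        imps = t["impressions"]
        summary[lang] = {
            "ctr": t["clicks"] / imps if imps else 0.0,
            "avg_position": t["weighted_pos"] / imps if imps else 0.0,
        }
    return summary


summary = summarize_by_language(rows)
for lang, stats in sorted(summary.items()):
    print(lang, f"CTR={stats['ctr']:.2%}", f"avg position={stats['avg_position']:.1f}")
```

A language whose CTR and average position sit well below the others is a candidate for a closer look at translation quality, though market differences can also explain part of the gap.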

Next, prioritize. You don't have the time or budget to have everything retranslated by humans. Focus on high-potential pages: those already generating traffic but with low conversion rates, or those targeting strategic keywords but stagnating in positions 15-30.
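The "positions 15-30" filter is easy to sketch. The example below covers only that ranking band (conversion data would have to come from your analytics tool) and again uses hypothetical rows in the shape of a Search Console export.

```python
# Hypothetical page-level rows; values are made up for illustration.
rows = [
    {"page": "/en/guide", "impressions": 2000, "position": 6.0},
    {"page": "/fr/guide", "impressions": 1500, "position": 18.0},
    {"page": "/fr/faq", "impressions": 500, "position": 22.0},
    {"page": "/de/guide", "impressions": 900, "position": 31.0},
    {"page": "/de/pricing", "impressions": 300, "position": 15.0},
]


def revision_candidates(rows, min_pos=15.0, max_pos=30.0):
    """Pages stuck between min_pos and max_pos, highest impressions first."""
    stuck = [r for r in rows if min_pos <= r["position"] <= max_pos]
    return sorted(stuck, key=lambda r: r["impressions"], reverse=True)


for row in revision_candidates(rows):
    print(row["page"], row["impressions"], row["position"])
```

Sorting by impressions is one possible proxy for potential; weighting by the business value of each keyword would be just as defensible.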

For new translations, adopt a hybrid approach: machine translation plus targeted human review focused on critical points (titles, meta descriptions, opening paragraphs, CTAs). This is an acceptable trade-off between cost and effectiveness for most projects.

What mistakes should you absolutely avoid?

Never deploy machine translations at scale without a testing phase. Start with a sample — 50-100 pages — and measure impact over 60-90 days before scaling. Too many sites end up with 10,000 mediocre translated pages they must later clean up.
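Picking the pilot sample can be as simple as a seeded random draw, so the same pages can be tracked over the whole 60-90 day window. This is only a sketch: the function name, the seed, and the URL pattern are assumptions, and a stratified draw per page template might serve you better.

```python
import random


def pick_pilot_pages(urls, sample_size=50, seed=0):
    """Draw a reproducible random sample of pages for a translation pilot."""
    rng = random.Random(seed)  # fixed seed: the same pages are picked on every run
    k = min(sample_size, len(urls))
    return sorted(rng.sample(urls, k))


# Hypothetical catalogue of 1,000 product URLs.
urls = [f"https://example.com/fr/product-{i}" for i in range(1000)]
pilot = pick_pilot_pages(urls, sample_size=50)
print(len(pilot), "pages selected for the pilot")
```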

Also avoid the pitfall of word-for-word keyword translation. A popular search query in English doesn't necessarily translate literally into other languages. Do independent local keyword research for each market — otherwise your translations, even linguistically perfect, will target the wrong expressions.

And that's where it gets tricky: most translation tools completely ignore local SEO context. They translate the text, not the search intent or cultural variations in search queries.

- Audit performance of your translated pages in Search Console (traffic, CTR, rankings)

- Prioritize high-potential pages for human revision

- Use machine translation as a draft, never as a final version published directly

- Perform independent local keyword research for each target language

- Test on a limited sample before mass deployment (50-100 pages over 60-90 days)

- Implement at minimum human review of titles, meta descriptions, and CTAs

- Monitor behavioral signals (bounce rate, time on page) by language

- Create custom glossaries for recurring technical terms in your machine translations

Machine translation is not an enemy of international SEO — it's a tool to handle with discernment. Google doesn't penalize using DeepL or ChatGPT to translate; it penalizes publishing mediocre content that degrades user experience.

The winning approach combines operational efficiency with quality control: machine translation for volume, human intervention for critical touchpoints. Concretely? Automate standardized product descriptions, but have strategic landing pages reviewed by native speakers.

These multilingual optimizations can become complex to orchestrate alone, especially when juggling multiple markets with different cultural expectations. If your international SEO strategy carries significant business stakes, support from an agency specializing in multilingual SEO can prove decisive in avoiding costly pitfalls and structuring a sustainable process.

❓ Frequently Asked Questions

Does Google penalize all sites that use machine translation?

Should you block indexing of machine-translated pages until they have been reviewed?

Do machine translations affect crawl budget?

Can you use ChatGPT or Claude to translate SEO content?

How can you tell whether your machine translations are causing problems?

Source: Google Search Central video · published on 29/04/2022