Official statement

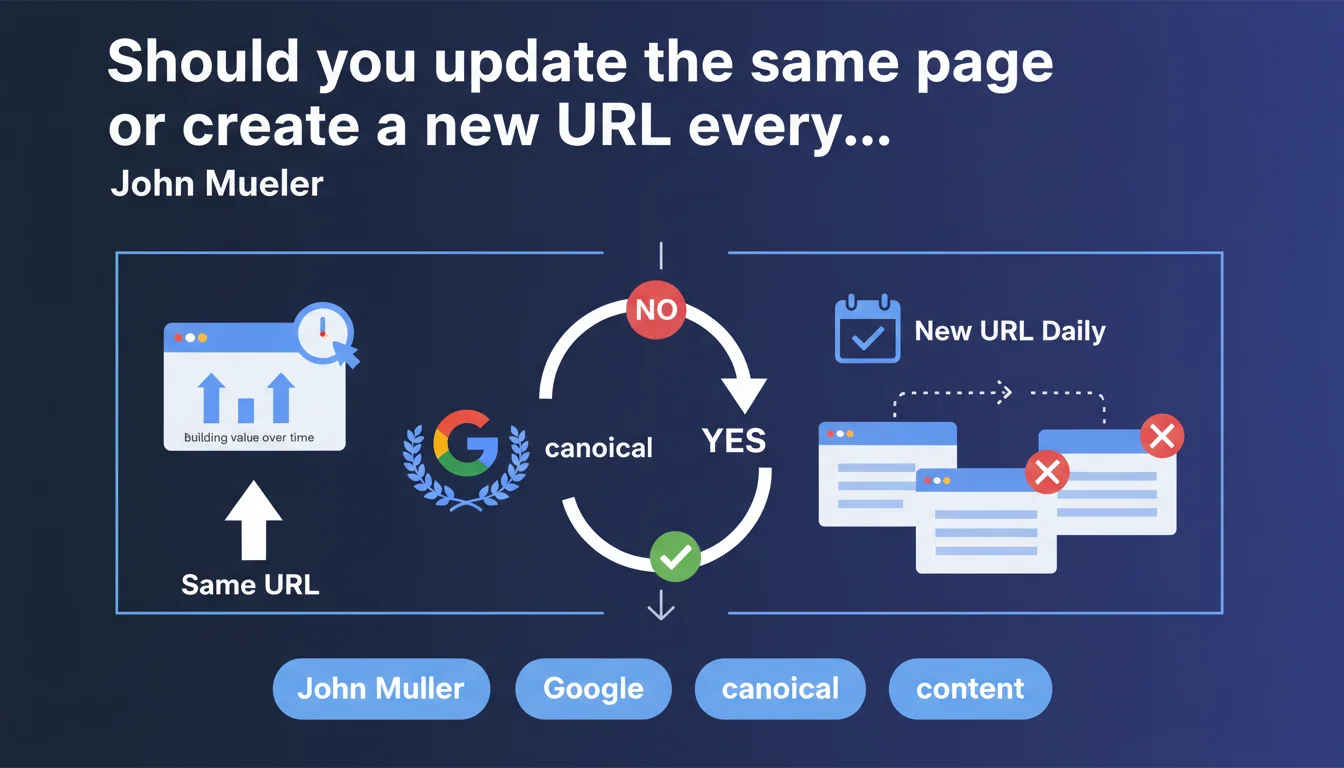

Google recommends keeping the same URL for daily updated content (prices, dynamic data) rather than creating new pages each day. This approach accumulates SEO value on a single canonical page and avoids signal dilution. In practice: one evolving page is worth far more than 365 different pages.

What you need to understand

Why does Google insist on URL stability for daily updated content?

The problem with creating new pages daily is SEO signal dilution. Each new URL starts from scratch: no click history, no accumulated backlinks, no established trust.

Google also has to determine which version holds authority for a given query. If you publish 30 pages on "Bitcoin price" in 30 days, the algorithm wastes time identifying the canonical page — the one that deserves to rank.

What does this actually change in terms of crawling and indexation?

A stable URL you update regularly accumulates freshness signals without fragmenting your crawl budget. Googlebot revisits the page regularly, detects modifications, and adjusts ranking accordingly.

Conversely, multiplying URLs dilutes crawl budget and creates technical duplicate content if pages are too similar. Result: cannibalization and confusion for the search engine.

Does this approach apply to all types of daily content?

No, and that's where it gets tricky. Mueller talks about updated data (prices, indicators), not distinct news articles. A page "Bitcoin Price Today" should remain unique and update itself. But an article "Analysis of Bitcoin Crash on March 12" deserves its own URL.

The nuance lies in search intent: if the user seeks the most recent data ("current price"), a stable URL suffices. If the event warrants specific editorial treatment, create a new page.

- Stable URL: accumulates authority, freshness signals, and backlinks on a single point

- Crawl budget preserved: Googlebot optimizes its visits rather than dispersing resources

- Canonicalization simplified: no ambiguity about the reference page for a given query

- Crucial distinction: updated data vs distinct editorial events

SEO Expert opinion

Is this recommendation truly new or just a reminder?

Let's be honest: this advice isn't groundbreaking. Google has been repeating for years that content freshness matters more than multiplying URLs. Yet many sites keep creating daily pages out of habit, sometimes because of a poorly configured CMS.

What's missing from this statement is quantification. [To verify]: at what update frequency does Google consider that a page has accumulated enough positive signals? Once a day, several times per hour? The statement gives no concrete data.

In what cases does this rule absolutely not apply?

If each edition of your daily content naturally attracts its own backlinks, creating distinct URLs may make sense. Example: a daily sports ranking cited by media outlets that link to the "ranking from March 15" rather than to a generic page.

Another edge case: financial news sites publishing daily analyses. There, editorial intent trumps technical optimization. A reader searching "stock market analysis March 15" doesn't expect the same page as "stock prices today".

What risks does this approach present if misapplied?

The trap is transforming your stable page into a catch-all. If you completely replace content each day without archiving previous versions, you lose all historical context — and some backlinks become obsolete or misleading.

Another common mistake: failing to clearly date the last update. Google and users must be able to tell instantly whether the displayed data is fresh. A visible "Last Updated" field, backed by a matching date in structured data, is essential.

Practical impact and recommendations

What should you concretely do if you publish daily content?

First, identify pages that fall under updated data vs those deserving unique editorial treatment. For the former, configure your CMS to update the existing URL rather than generating a new route.

Implement a versioning system if needed: display today's data at the top of the page, archive history further down. This preserves value for old backlinks while delivering freshness.
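One way to picture this versioning is a small data model that keeps today's figures as the current state and pushes the previous snapshot into an archive rendered further down the same page. This is a minimal sketch only: the `StablePage` class, its field names, and the `/bitcoin-price` URL are hypothetical and do not come from Google's statement or from any particular CMS.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class StablePage:
    """Hypothetical model of one URL whose data changes every day."""
    url: str
    current: Optional[dict] = None
    current_date: Optional[date] = None
    # Newest archived snapshot first, so it renders just below today's data.
    history: list = field(default_factory=list)

    def publish(self, day: date, data: dict) -> None:
        # Archive the previous snapshot instead of discarding it,
        # so older backlinks still land on relevant context.
        if self.current is not None:
            self.history.insert(0, (self.current_date, self.current))
        self.current = data
        self.current_date = day


page = StablePage(url="/bitcoin-price")
page.publish(date(2022, 3, 14), {"price_usd": 1})
page.publish(date(2022, 3, 15), {"price_usd": 2})
```

The URL never changes between calls to `publish`; only the content behind it does, which is the behaviour the recommendation describes.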

How do you correctly signal freshness to Google?

Add Article structured data with datePublished and dateModified fields — the latter must update with each revision. Ensure your server sends a consistent Last-Modified header.
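As an illustration, the two signals can be derived from the same timestamp so they never disagree. The sketch below assumes a hypothetical helper, `build_freshness_signals`, and an example headline; only the schema.org property names (`datePublished`, `dateModified`) and the `Last-Modified` header name are taken from the text above.

```python
import json
from datetime import datetime, timezone
from email.utils import format_datetime


def build_freshness_signals(headline: str, published: datetime, modified: datetime):
    """Return (JSON-LD script tag, response headers) built from one timestamp.

    Hypothetical helper: both datetimes are assumed to be timezone-aware.
    """
    json_ld = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    # Escape "</" so a headline can never close the script element early.
    payload = json.dumps(json_ld).replace("</", "<\\/")
    script = '<script type="application/ld+json">' + payload + "</script>"
    # HTTP dates are expressed in GMT, so convert to UTC first.
    last_modified = format_datetime(modified.astimezone(timezone.utc), usegmt=True)
    return script, {"Last-Modified": last_modified}


script, headers = build_freshness_signals(
    "Bitcoin Price Today",
    published=datetime(2022, 1, 1, tzinfo=timezone.utc),
    modified=datetime(2022, 3, 15, 8, 30, tzinfo=timezone.utc),
)
```

Feeding both outputs from a single `modified` value is a design choice: it avoids the case where the markup claims one date and the server header another.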

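In your XML sitemap, include the <lastmod> tag and update it automatically. Google re-fetches this file periodically; an accurate, consistently maintained date change is a hint that the page is worth recrawling, although it does not guarantee an immediate visit.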
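A sitemap entry with an automatically maintained <lastmod> could be generated along these lines. This is a sketch using only the Python standard library; the `build_sitemap` function and the example.com URL are assumptions made for illustration.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, last_modified) pairs.

    Hypothetical helper: last_modified is assumed to be timezone-aware.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, modified in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # W3C datetime format with an explicit UTC offset.
        stamp = modified.astimezone(timezone.utc).isoformat(timespec="seconds")
        ET.SubElement(url, "lastmod").text = stamp
    return ET.tostring(urlset, encoding="unicode")


sitemap_xml = build_sitemap([
    ("https://example.com/bitcoin-price",
     datetime(2022, 3, 15, 8, 30, tzinfo=timezone.utc)),
])
```

The timestamp passed in should be the real content modification time, not the time the sitemap was regenerated.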

What mistakes must you absolutely avoid in this strategy?

Don't leave orphaned content if consolidating multiple pages into one. Implement 301 redirects from old daily URLs to the stable URL, especially if they've accumulated backlinks.
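If the old daily URLs follow a predictable pattern, the redirect rule can be expressed as a single mapping. The sketch below assumes a hypothetical scheme where daily pages end in a `-YYYY-MM-DD` suffix; your own URL structure will likely differ, and dated editorial articles that deserve their own URL would need to be excluded from such a rule.

```python
import re

# Assumed pattern: "/<slug>-YYYY-MM-DD", e.g. "/bitcoin-price-2022-03-15".
DAILY_URL = re.compile(r"^(?P<base>/[a-z0-9-]+?)-\d{4}-\d{2}-\d{2}/?$")


def redirect_target(path: str):
    """Return (301, stable_path) for an old daily URL, or None otherwise."""
    match = DAILY_URL.match(path)
    if match is None:
        return None  # Not a daily URL: serve it normally.
    return 301, match.group("base")


result = redirect_target("/bitcoin-price-2022-03-15")
```

Here `result` is `(301, "/bitcoin-price")`, while the stable URL itself returns `None` and is served as usual.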

Avoid cosmetic updates (date changes without actual content modification). Google detects these manipulations and may ignore your freshness signals if you abuse them.
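One way to guard against cosmetic updates is to bump the modification date only when the underlying data actually changes. The following sketch compares a hash of the page data before and after an update; the function names and the idea of storing a fingerprint alongside the page are illustrative assumptions, not a description of how Google detects such changes.

```python
import hashlib
import json
from datetime import datetime, timezone


def content_fingerprint(data: dict) -> str:
    """Stable hash of the page data, independent of key order."""
    canonical = json.dumps(data, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def next_date_modified(old_fingerprint, old_modified, new_data, now):
    """Return (fingerprint, date_modified), changing the date only on real edits."""
    new_fingerprint = content_fingerprint(new_data)
    if new_fingerprint == old_fingerprint:
        # Nothing changed: keep the previous date instead of faking freshness.
        return old_fingerprint, old_modified
    return new_fingerprint, now


yesterday = datetime(2022, 3, 14, 8, 0, tzinfo=timezone.utc)
today = datetime(2022, 3, 15, 8, 0, tzinfo=timezone.utc)
fp = content_fingerprint({"price_usd": 1})

unchanged = next_date_modified(fp, yesterday, {"price_usd": 1}, today)
changed = next_date_modified(fp, yesterday, {"price_usd": 2}, today)
```

In this example `unchanged` keeps yesterday's date, while `changed` carries today's date and a new fingerprint.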

- Audit your daily content: data vs editorial events

- Configure CMS to update the stable URL instead of creating new routes

- Implement structured data with automatically updated dateModified

- Verify that Last-Modified header is sent and consistent

- Update <lastmod> tag in XML sitemap with each modification

- Redirect (301) old daily URLs to stable version if migrating

- Clearly display last update date for users

- Archive historical versions if they provide editorial value

❓ Frequently Asked Questions

Should I delete my old daily pages if I consolidate on a stable URL?

How does Google detect that a page has been updated rather than replaced?

Does this approach work for a news site with several articles per day?

Should you update the publication date or use a separate modification date?

Can a page updated daily benefit from Google's Freshness filter?

🎥 From the same video 21

Other SEO insights extracted from this same Google Search Central video · published on 05/03/2022
