Official statement

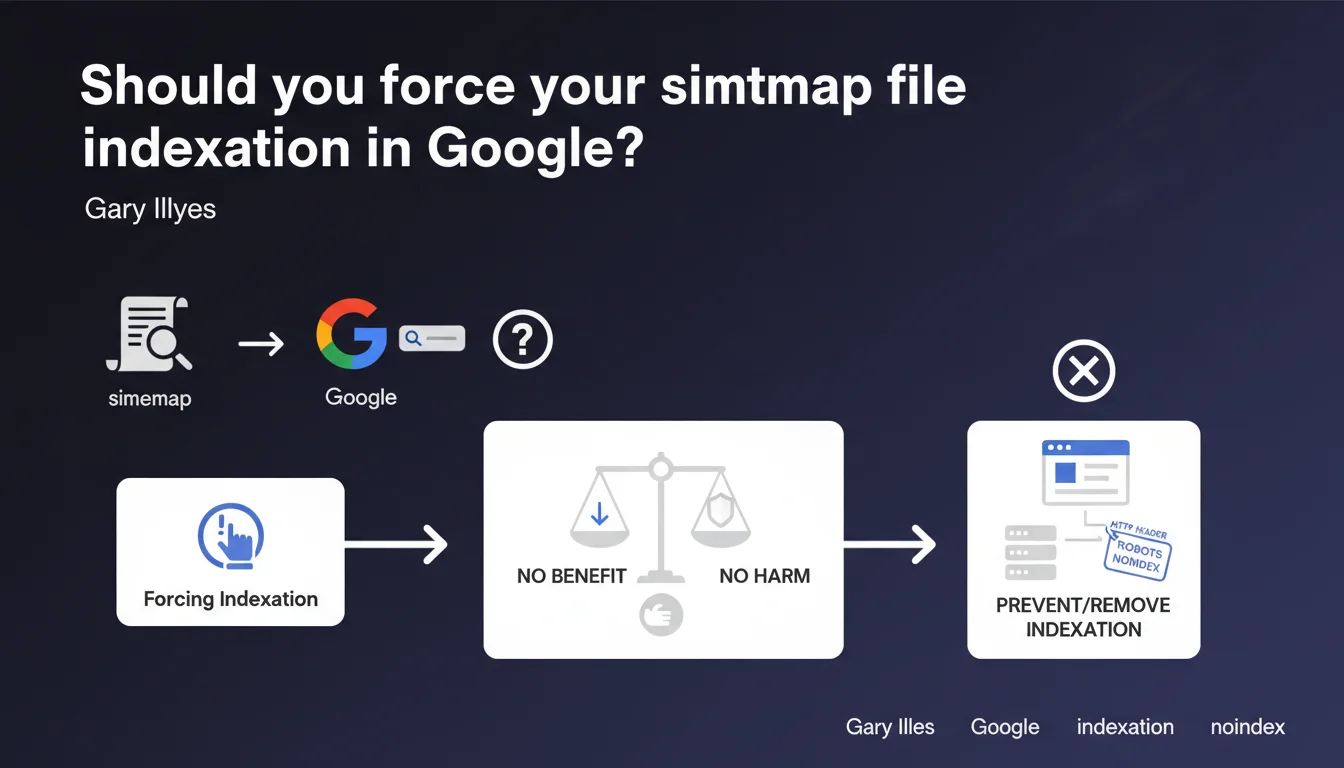

Google may index your sitemap, but forcing this indexation serves absolutely no purpose. If you want to exclude it from search results, serve it with an X-Robots-Tag: noindex HTTP header; it's the only effective method. Contrary to popular belief, sitemap indexation has neither a positive nor a negative impact on your SEO performance.

What you need to understand

Why does Google sometimes index sitemap files?

A sitemap file is technically a web resource like any other. If Googlebot discovers it through an internal link, external link, or submission in Search Console, it may decide to index it. There's nothing abnormal about that.

Sitemap indexation typically occurs when it's publicly accessible and no exclusion directives are in place. This is a default crawler behavior — not a bug, not a quality signal.

Does sitemap indexation harm your SEO?

The answer is straightforward: no. Gary Illyes is categorical on this point. An indexed sitemap doesn't consume your crawl budget significantly, doesn't dilute your thematic relevance, and causes no algorithmic penalty.

It's just noise in your Search Console reports, nothing more. Many SEOs have a visceral reaction to seeing their sitemap.xml in the index, but technically it's a non-issue.

What's the official method to block sitemap indexation?

If you absolutely want to remove your sitemap from the index, Google recommends adding an HTTP header X-Robots-Tag: noindex in the server response of your sitemap.xml file. This is the standard directive for non-HTML resources.

robots.txt alone isn't sufficient here — blocking the crawl prevents Googlebot from seeing the noindex directive, so the resource can remain indexed with a generic snippet. This is a classic pitfall.

- A sitemap can be indexed, it's normal Googlebot behavior

- Sitemap indexation has no negative impact on SEO

- Forcing sitemap indexation through artificial techniques is useless

- To exclude the sitemap from the index, use an HTTP header X-Robots-Tag: noindex

- Don't block the sitemap in robots.txt if you want to effectively deindex it

SEO Expert opinion

Does this statement match real-world observations?

Yes, completely. Across hundreds of audits, I've never found a correlation between sitemap indexation and SEO performance degradation. Confirmed: no measurable impact.

However, I've seen SEOs waste time trying to force deindexation through questionable methods: robots.txt blocks, or a Search Console removal request used on its own (which only hides the URL temporarily). Result: the sitemap stays indexed, and the time could have been invested elsewhere.

Why do some SEOs insist on deindexing their sitemap?

It's often a matter of perceived cleanliness. A sitemap in the index is seen as search result pollution, a technical hygiene flaw. Except Google doesn't care at all.

There's also confusion between crawl budget and indexation. Some think an indexed sitemap consumes precious resources. Wrong: the sitemap is a single URL that Googlebot fetches whether or not it's indexed, so its cost is anecdotal next to the rest of the site. [To be verified]: Google has never published detailed data on the exact weight of an indexed sitemap in the global crawl budget of an average site.

In what cases should you actually block sitemap indexation?

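Honestly? If your sitemap contains sensitive data (staging URLs, API endpoints, directory structures you prefer to keep private), then yes, block it. But that's rare. Keep in mind that noindex only keeps the file out of search results; anyone who knows the URL can still fetch it.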

For a standard site with a typical sitemap.xml, it's cosmetic. If it obsesses you, add the noindex header and move on. Otherwise, let it go — your SEO time has more value than that.

Practical impact and recommendations

What if my sitemap is currently indexed?

If it doesn't bother you and you understand that it's without SEO consequence, do nothing. Save your energy for projects with measurable ROI.

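If you still want to remove it, configure your server to return an X-Robots-Tag: noindex header on all requests to sitemap.xml. On Apache (with mod_headers enabled): Header set X-Robots-Tag "noindex", wrapped in a <Files "sitemap.xml"> block in your .htaccess or server configuration. On Nginx: add_header X-Robots-Tag "noindex"; inside a location = /sitemap.xml block. Scope the directive to the sitemap: set globally, it would apply noindex to every URL on the site.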
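
For a site served by a Python application rather than directly by Apache or Nginx, the same idea can be sketched as a small WSGI wrapper. This is a minimal illustration, not a recommended production setup: the /sitemap.xml path, the add_noindex_header helper, and the demo_app stand-in are all assumptions made for the example.

```python
NOINDEX_PATHS = {"/sitemap.xml"}  # assumption: the sitemap lives at this path


def add_noindex_header(app, paths=NOINDEX_PATHS):
    """Wrap a WSGI app so responses for the given paths carry X-Robots-Tag: noindex."""

    def wrapped(environ, start_response):
        def patched_start_response(status, headers, exc_info=None):
            if environ.get("PATH_INFO") in paths:
                # Drop any existing X-Robots-Tag so the response carries exactly one.
                headers = [(k, v) for k, v in headers if k.lower() != "x-robots-tag"]
                headers.append(("X-Robots-Tag", "noindex"))
            return start_response(status, headers, exc_info)

        return app(environ, patched_start_response)

    return wrapped


def demo_app(environ, start_response):
    """Hypothetical stand-in for the real site: returns a tiny XML body for any path."""
    body = b'<?xml version="1.0" encoding="UTF-8"?><urlset/>'
    start_response(
        "200 OK",
        [("Content-Type", "application/xml"), ("Content-Length", str(len(body)))],
    )
    return [body]


# Only /sitemap.xml gets the header; every other path is left untouched.
application = add_noindex_header(demo_app)
```

For a quick local check, application can be served with wsgiref.simple_server.make_server from the standard library. The wrapper keeps the 200 status, so the sitemap stays crawlable while carrying the noindex header.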

How do you verify the noindex directive is active?

Use your browser's DevTools (Network tab) or a curl command to inspect the HTTP headers of your sitemap. You should see X-Robots-Tag: noindex in the response.

Then be patient — deindexation can take several weeks depending on your site's crawl frequency. You can request temporary removal via Search Console to speed things up, but it's not mandatory.
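
The header check described above can also be scripted. The sketch below is a simplified, assumption-laden parser: has_noindex and fetch_x_robots_tag are hypothetical helper names, and the parsing covers only the common forms of the header (plain directives, a user-agent prefix such as "googlebot:", and "none" as shorthand for noindex plus nofollow).

```python
import urllib.request

# Directives that take a value after a colon, so their prefix is not a user agent.
VALUE_DIRECTIVES = {
    "unavailable_after",
    "max-snippet",
    "max-image-preview",
    "max-video-preview",
}


def has_noindex(x_robots_values, agent="googlebot"):
    """Return True if any X-Robots-Tag value applies noindex to everyone or to `agent`."""
    for value in x_robots_values:
        directives = value.lower()
        prefix, sep, rest = directives.partition(":")
        # A leading "name:" with no comma, and not a known directive, is a user agent.
        if sep and "," not in prefix and prefix.strip() not in VALUE_DIRECTIVES:
            if prefix.strip() != agent:
                continue  # scoped to another crawler
            directives = rest
        tokens = {token.strip() for token in directives.split(",")}
        if "noindex" in tokens or "none" in tokens:
            return True
    return False


def fetch_x_robots_tag(url, timeout=10):
    """Send a HEAD request and return (status, list of X-Robots-Tag header values)."""
    request = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status, response.headers.get_all("X-Robots-Tag") or []
```

A typical use would be status, values = fetch_x_robots_tag("https://example.com/sitemap.xml") followed by has_noindex(values), where the URL is a placeholder. Some servers answer HEAD differently from GET, so a manual curl or DevTools check remains a useful cross-check.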

What technical mistakes should you absolutely avoid?

Don't block the sitemap in robots.txt if your goal is to deindex it. Googlebot won't be able to see the noindex header, and the URL will remain in the index with an empty snippet. This is counterproductive.

Also avoid returning a 404 code on the sitemap to make it disappear — you then lose its primary function, which is to help Google discover your URLs. If you want it crawled but not indexed, keep it accessible with a 200 + noindex.
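
Both mistakes can be caught with a short pre-flight check. The sketch below uses Python's standard urllib.robotparser, which is only an approximation of Googlebot's own robots.txt matching (it does not handle wildcards the way Google does). The function names, the example.com URL in the usage note, and the wording of the messages are illustrative assumptions.

```python
from urllib.robotparser import RobotFileParser


def sitemap_is_crawlable(robots_txt, sitemap_url, agent="Googlebot"):
    """Return True if the given robots.txt text lets `agent` fetch the sitemap URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, sitemap_url)


def deindexing_setup_problems(robots_txt, sitemap_url, status, noindex_present):
    """List the configuration problems that would keep the sitemap from being deindexed."""
    problems = []
    if not sitemap_is_crawlable(robots_txt, sitemap_url):
        problems.append("robots.txt blocks the sitemap, so the noindex header cannot be seen")
    if status != 200:
        problems.append(f"sitemap returns HTTP {status}, so it no longer helps URL discovery")
    if not noindex_present:
        problems.append("X-Robots-Tag: noindex is missing from the response")
    return problems
```

For example, calling deindexing_setup_problems with a robots.txt containing "Disallow: /sitemap.xml", the URL https://example.com/sitemap.xml, status 200, and noindex_present=True reports only the robots.txt problem. An empty list means the sitemap is crawlable, returns 200, and carries the noindex header.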

- Decide whether sitemap indexation truly warrants action (spoiler: probably not)

- Configure an HTTP header X-Robots-Tag: noindex on sitemap.xml if deindexation is desired

- Verify header presence with curl or browser DevTools

- Never block the sitemap in robots.txt to deindex it

- Keep the sitemap accessible with HTTP 200 so Google continues to crawl it

- Wait several weeks to see effective deindexation

❓ Frequently Asked Questions

Does an indexed sitemap consume crawl budget?

Can you use robots.txt to prevent the sitemap from being indexed?

How long does it take for a sitemap to drop out of the index after the noindex is added?

Can sitemap indexation cause duplicate content?

Should I remove my sitemap from Search Console if it's indexed?

Other SEO insights extracted from this same Google Search Central video · published on 18/12/2023