Official statement


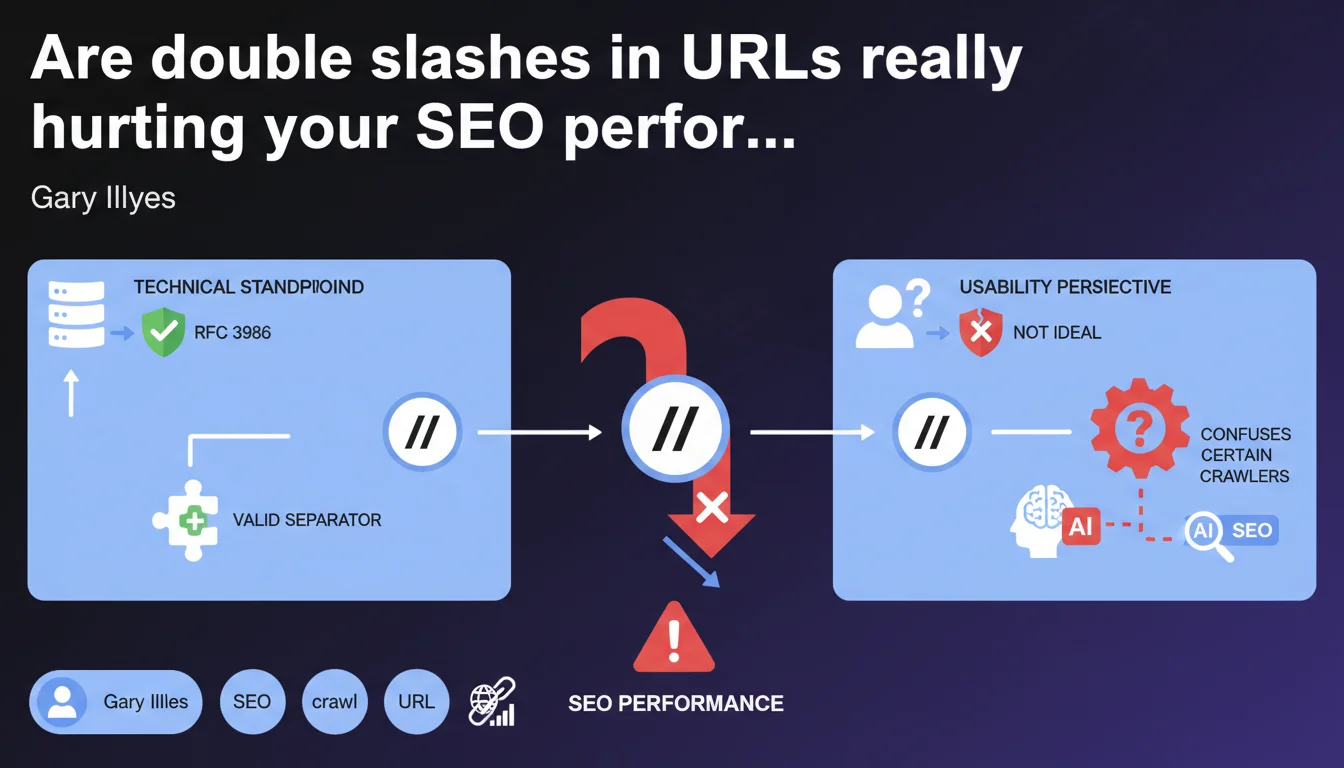

Technically speaking, double slashes in URLs comply with RFC 3986 and don't pose validity issues. But when it comes to usability and crawling, it's a different story: some crawlers can get confused and user experience suffers. Google tolerates it, but clearly doesn't recommend it.

What you need to understand

What is Google's technical position on double slashes?

Gary Illyes reminds us that the slash is a valid separator according to RFC 3986, the standard that defines generic URI syntax. That grammar allows a path segment to be empty, so in practice a URL like example.com/category//page doesn't violate any technical standard.
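To make the "empty segment" idea concrete, here is a minimal Python sketch built on the standard library's `urllib.parse`. The helper name `path_segments` is our own, used only for illustration.

```python
from typing import List
from urllib.parse import urlsplit


def path_segments(url: str) -> List[str]:
    """Return the path segments of a URL (hypothetical helper)."""
    path = urlsplit(url).path
    # Drop the single leading slash that opens the path, if present.
    if path.startswith("/"):
        path = path[1:]
    # An empty string in the result marks an empty segment,
    # which is what a double slash produces.
    return path.split("/") if path else []
```

Calling `path_segments("https://example.com/category//page")` returns `['category', '', 'page']`: the URL parses without error, and the double slash simply shows up as an empty segment in the middle.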

But here's the catch — just because a URL is technically valid doesn't mean it's optimal. Google makes a clear distinction between technical compliance and actual practicality.

Why do some crawlers get confused by double slashes?

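The problem isn't in the specification, but in how crawlers and servers handle it. Strictly speaking, /category//page and /category/page are two distinct URLs, yet many servers return the same content for both. A bot that doesn't normalize them may therefore treat them as two separate pages.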

Result: risk of duplicate content, PageRank dilution, and indexation confusion. Even though Googlebot handles this fairly well, other crawlers — including those used for log analysis or technical SEO — can stumble.
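The duplication risk can be checked on a list of crawled URLs. The sketch below is one possible approach, not a description of how Googlebot or any specific tool normalizes URLs. The function names `collapse_slashes` and `group_variants` are hypothetical.

```python
import re
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit


def collapse_slashes(url):
    """Collapse runs of slashes in the path only; query and fragment are untouched."""
    parts = urlsplit(url)
    clean_path = re.sub(r"/{2,}", "/", parts.path)
    return urlunsplit(
        (parts.scheme, parts.netloc, clean_path, parts.query, parts.fragment)
    )


def group_variants(urls):
    """Group URLs that differ only by repeated slashes in the path."""
    groups = defaultdict(set)
    for url in urls:
        groups[collapse_slashes(url)].add(url)
    # Keep only the clean URLs that have more than one spelling.
    return {
        clean: sorted(variants)
        for clean, variants in groups.items()
        if len(variants) > 1
    }
```

Feeding it a crawl export that contains both `https://example.com/category//page` and `https://example.com/category/page` yields one group keyed by the clean URL, which is a quick way to spot pairs that a non-normalizing crawler would count twice.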

What's the impact on user experience?

A clean URL inspires confidence. A URL with double slashes looks visually odd and gives the impression of an error or a poorly maintained site.

This is especially true when the URL is shared on social media, copied and pasted in an email, or displayed in the SERPs. Users might hesitate to click.

- Double slashes are technically valid according to RFC 3986

- Some crawlers may misinterpret these URLs and create duplication

- User experience is degraded: impression of an error

- Google tolerates but implicitly advises against this practice

SEO Expert opinion

Does this technical tolerance hide a real SEO problem?

Let's be honest: if Gary Illyes takes the time to clarify "it's not ideal from a usability perspective," there's something to worry about. Google never explicitly says "avoid this," but the message is clear.

In the field, we observe that double slashes generate URL variations that can fragment ranking signals. Even if Googlebot makes the effort to normalize, why take this risk?

In what cases do double slashes appear most often?

Generally, it's a bug in dynamic URL generation: poorly managed path concatenation, empty variables, incorrect URL rewrite configuration (mod_rewrite, nginx). It also happens with certain improperly configured CMS or frameworks.
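The "empty variable" case is easy to reproduce. The following Python sketch contrasts naive string concatenation with a small joining helper. The name `join_path` and the sample variables are assumptions for illustration, not code from any particular CMS or framework.

```python
def join_path(*segments):
    """Join path segments with single slashes, skipping empty or None values."""
    # Trim slashes at the edges of each segment so "/blog/" and "blog" behave alike.
    cleaned = [s.strip("/") for s in segments if s is not None]
    # Drop segments that are empty after trimming (e.g. an unset variable).
    cleaned = [s for s in cleaned if s]
    return "/" + "/".join(cleaned)


category = "category"
subcategory = ""  # e.g. a template variable that was never filled in

# Naive concatenation keeps the slash around the empty value.
naive = "/" + category + "/" + subcategory + "/" + "page"

# The helper skips the empty value instead.
safe = join_path(category, subcategory, "page")
```

Here `naive` is `/category//page` while `safe` is `/category/page`. Note that the helper only trims slashes at segment edges; a segment that already contains `//` internally would need separate handling.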

Less often, it's intentional — and that's even worse. There's no SEO benefit to deliberately structuring URLs with double slashes. [To verify]: some mention a possible interpretation as an encoded parameter, but no solid field data supports this hypothesis.

Should these URLs be systematically corrected?

Yes, unless the volume is tiny and the impact is negligible. But in most cases, cleaning up these URLs improves technical consistency and prevents nasty surprises during a migration or in-depth SEO audit.

Practical impact and recommendations

What should I do if my site contains double slashes?

First step: identify the source. Run a complete crawl with Screaming Frog or Oncrawl, analyze your server logs, and check the XML sitemap so that you find all affected URLs.
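For the sitemap part of that check, a short script can do the first pass. This is a minimal sketch assuming a standard `urlset` sitemap in the sitemaps.org namespace; the function name `double_slash_urls` and the sample document are made up for the example.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def double_slash_urls(sitemap_xml):
    """Return the <loc> URLs whose path contains a double slash."""
    root = ET.fromstring(sitemap_xml)
    found = []
    for loc in root.findall(".//sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        # Look at the path only, so the "//" after the scheme is ignored.
        if "//" in urlsplit(url).path:
            found.append(url)
    return found


# Illustrative sitemap content, not taken from a real site.
sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/category/page</loc></url>
  <url><loc>https://example.com/category//page</loc></url>
</urlset>"""
```

Running `double_slash_urls(sample)` returns only the second URL. A sitemap index file would need one extra step to fetch and parse each child sitemap.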

Next, fix the URL generation on the code side. If it's a CMS, check plugins, rewrite configuration, and templates. If it's custom code, track down the faulty concatenation.

How do I handle URLs already indexed with double slashes?

Set up 301 redirects from the double slash versions to the clean versions. Make sure canonicals point to the correct version.
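Redirects of this kind are usually configured at the web server level, but the logic is the same everywhere. As an illustration only, here is a minimal WSGI middleware sketch in Python that answers any path containing repeated slashes with a 301 to the collapsed path. The name `collapse_slashes_middleware` is hypothetical, and the sketch assumes the raw path reaches the application in `PATH_INFO`; some servers or proxies normalize it earlier.

```python
import re


def collapse_slashes_middleware(app):
    """Wrap a WSGI app and 301-redirect paths that contain repeated slashes."""

    def middleware(environ, start_response):
        path = environ.get("PATH_INFO", "")
        clean = re.sub(r"/{2,}", "/", path)
        if clean != path:
            # Preserve the query string on the redirect target.
            query = environ.get("QUERY_STRING", "")
            location = clean + ("?" + query if query else "")
            start_response(
                "301 Moved Permanently",
                [("Location", location), ("Content-Length", "0")],
            )
            return [b""]
        # Clean paths go straight through to the wrapped application.
        return app(environ, start_response)

    return middleware
```

Because every run of slashes is collapsed in one pass, `/category///page` goes to `/category/page` in a single hop rather than through intermediate redirects.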

Then, submit a new clean sitemap and request a reindex via Google Search Console to speed up the index update.

What mistakes should I absolutely avoid?

Never let both versions coexist without a canonical or redirect. This creates duplicate content and fragments your ranking signals.

Also avoid redirect chains (double slash → clean version → another redirect). Google follows redirects, but each hop dilutes the signals a bit more.
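If you maintain your redirects as a source-to-target map, chains can be flattened before deployment. The sketch below assumes such a map exists as a plain dictionary; `flatten_redirects` and the sample paths are hypothetical.

```python
def flatten_redirects(redirects):
    """Map every source directly to its final destination, removing chains."""
    flat = {}
    for source in redirects:
        target = redirects[source]
        seen = {source}
        # Follow the chain until the target is no longer itself redirected.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat


# Illustrative chain: double slash -> clean version -> another redirect.
chain = {
    "/category//page": "/category/page",
    "/category/page": "/shop/page",
}
```

With this input, `flatten_redirects(chain)` maps both `/category//page` and `/category/page` straight to `/shop/page`, so no visitor or crawler goes through more than one hop.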

- Crawl the site to identify all URLs with double slashes

- Fix URL generation on the code side (CMS, framework, templates)

- Set up proper 301 redirects to normalized URLs

- Verify canonicals and ensure they point to the correct version

- Submit a cleaned XML sitemap

- Monitor indexation via Google Search Console

- Verify that other crawlers (analytics, SEO tools) no longer pick up these faulty URLs
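For the monitoring steps, server logs show which bots still request the faulty URLs. The following Python sketch assumes access logs in the common "combined" format with origin-form request targets (paths, not full URLs). The regular expression, the function name `double_slash_hits`, and the sample line are illustrative and may need adapting to your own log format.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer and user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<target>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def double_slash_hits(lines):
    """Count requests per user agent whose path contains a double slash."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        # Ignore the query string; only the path is checked.
        path = match.group("target").split("?", 1)[0]
        if "//" in path:
            hits[match.group("agent")] += 1
    return hits


# Made-up log line for demonstration.
sample_log = [
    '203.0.113.5 - - [18/Dec/2023:10:00:00 +0000] '
    '"GET /category//page HTTP/1.1" 200 512 "-" "ExampleBot/1.0"',
]
```

Run periodically after the fix, the counts per user agent should trend toward zero as crawlers discover the redirects and stop requesting the old URLs.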

❓ Frequently Asked Questions

Does Google penalize sites with double slashes in their URLs?

Do double slashes always create duplicate content?

Can you use canonicals instead of fixing the URLs?

Do double slashes affect crawl performance?

How can I check whether my URLs with double slashes are indexed?


Other SEO insights extracted from this same Google Search Central video · published on 18/12/2023
