Official statement

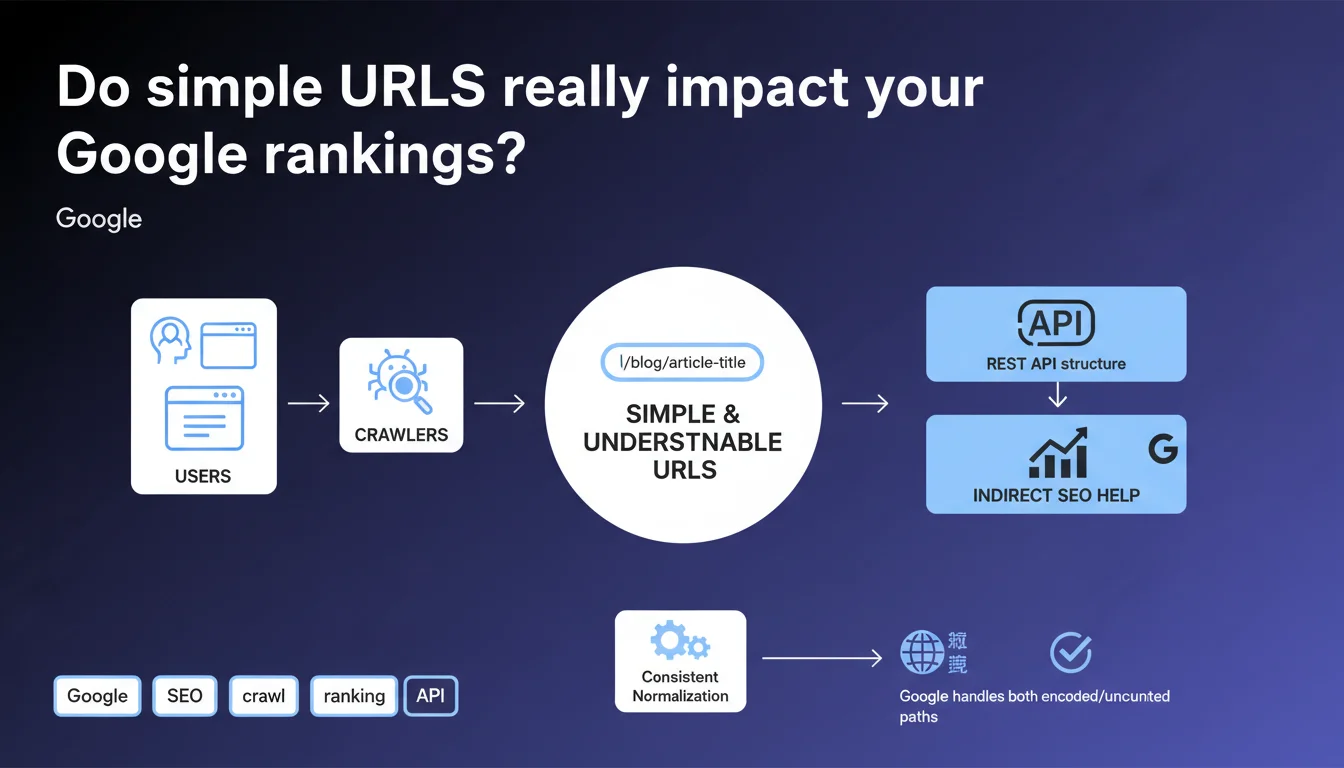

Google confirms that a clear URL structure benefits both users and crawlers. The SEO impact remains indirect, but a coherent REST-like architecture makes crawling and content comprehension easier. As for Japanese characters, Google treats encoded and unencoded URLs identically, but recommends settling on one form to avoid duplicates.

What you need to understand

Why does Google emphasize URL simplicity so much?

A readable URL plays a dual role: it guides the user and helps the crawler understand your site structure. Google advocates for a "REST-like" architecture, meaning an explicit hierarchy that clearly identifies each resource.

Direct impact on rankings? None. Google states it plainly: it's an indirect benefit. A clear URL improves UX, boosts CTR in SERPs, reduces confusion between similar pages — all signals that can cascade and influence performance.

What does "consistent normalization" really mean for non-Latin URLs?

Google clarifies that it recognizes two versions of the same Japanese URL: the encoded form (percent-encoding) and the raw form. Technically, they're identical to the algorithm.

But the trap lies in duplicate management. If your CMS generates one version sometimes and the other at other times, you fragment your signals. Hence the recommendation to normalize: pick one format and stick with it, especially in internal linking and sitemaps.
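One way to enforce a single format is to pass every internal link and sitemap entry through the same normalization function. The sketch below uses only Python's standard `urllib.parse`; the function names `to_encoded` and `to_raw` and the `example.com` URL are illustrative assumptions, not something Google or the source prescribes.

```python
from urllib.parse import quote, unquote, urlsplit, urlunsplit


def to_encoded(url: str) -> str:
    """Return the URL with its path percent-encoded as UTF-8."""
    parts = urlsplit(url)
    # Decode first so an already-encoded path is not encoded a second time.
    # Caveat: this also decodes reserved sequences such as %2F, so paths
    # that rely on encoded slashes would need special handling.
    path = quote(unquote(parts.path), safe="/")
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


def to_raw(url: str) -> str:
    """Return the URL with its path decoded to raw Unicode characters."""
    parts = urlsplit(url)
    return urlunsplit(
        (parts.scheme, parts.netloc, unquote(parts.path), parts.query, parts.fragment)
    )


# Hypothetical example URL with a Japanese path.
raw = "https://example.com/カテゴリ/商品"
encoded = to_encoded(raw)

# Both forms map back to each other, and encoding twice changes nothing.
assert to_raw(encoded) == raw
assert to_encoded(encoded) == encoded
```

Whichever direction you choose, applying the same function in templates, sitemap generation, and redirects is what keeps the two forms from coexisting.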

What's the connection between URL structure and crawl budget?

A well-designed REST architecture reduces click depth and organizes content predictably. The crawler navigates more efficiently, detects new pages faster, and spends its crawl budget more effectively.

Let's be honest: on a 500-page site, the impact is marginal. On an e-commerce catalog with 100,000 URLs, every gain in structural clarity counts.

- Simple URL = better user and crawler comprehension

- Indirect SEO impact via UX, CTR, reduced confusion

- Normalization essential for non-Latin characters

- REST architecture promotes crawling and scalability

SEO Expert opinion

Is this recommendation consistent with real-world observations?

Yes, largely. We've seen for years that sites with chaotic URL structures (multiple parameters, obscure IDs, excessive depth) encounter more indexing issues. But be careful: it's not the URL itself that penalizes, it's the underlying disorganization.

Google doesn't penalize ugly URLs. However, an incomprehensible URL often reveals a flawed architecture, failing internal linking, duplicate content risks. So yes, simplifying helps — but not for the reasons some imagine.

What nuances isn't Google clarifying here?

The statement remains deliberately vague on one point: what does Google mean by "indirect benefit"? No metrics, no order of magnitude. Verify this on your own data: measure before and after a URL restructure if you get the opportunity.

Another gray area: maximum recommended length. Google technically accepts very long URLs, but experience shows URLs over 100 characters cause issues (truncation in SERPs, social sharing, Analytics). Google stays silent on this.

In what cases doesn't this rule apply?

On some high-authority sites, an obscure URL poses no problem at all. Amazon, eBay, and Wikipedia do fine with technical, even cryptic URLs. Their internal linking, popularity, and content volume more than compensate.

For an average site without strong backlinks or established traffic, every small signal matters. URL simplicity becomes one optimization lever among others, not a magic wand.

Practical impact and recommendations

What should you do concretely on your site?

Start with a structure audit. List your main URLs, identify those exceeding 80-100 characters, those accumulating unnecessary parameters, those not reflecting site hierarchy.

Favor a maximum 3-level hierarchy for 80% of your content: domain.com/category/subcategory/page. Avoid redundant segments (domain.com/blog/article/post/title — "article" and "post" serve no purpose).
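A first pass of this audit can be scripted. The sketch below flags URLs that exceed a length threshold, go deeper than three path segments, or carry query parameters. The thresholds come from the figures above; the function name `audit_url` and the sample URLs are assumptions for illustration, and the URL list would normally come from a crawl export or sitemap.

```python
from urllib.parse import urlsplit

MAX_LENGTH = 100  # upper end of the 80-100 character range mentioned above
MAX_DEPTH = 3     # category / subcategory / page


def audit_url(url: str) -> list[str]:
    """Return a list of human-readable issues found in one URL."""
    parts = urlsplit(url)
    # Path segments, ignoring empty strings produced by leading/trailing slashes.
    segments = [s for s in parts.path.split("/") if s]
    issues = []
    if len(url) > MAX_LENGTH:
        issues.append(f"length {len(url)} > {MAX_LENGTH}")
    if len(segments) > MAX_DEPTH:
        issues.append(f"depth {len(segments)} > {MAX_DEPTH}")
    if parts.query:
        issues.append("has query parameters")
    return issues


# Hypothetical sample; replace with your own URL export.
urls = [
    "https://domain.com/category/subcategory/page",
    "https://domain.com/blog/article/post/title",
    "https://domain.com/shoes?sort=price",
]
for url in urls:
    print(url, audit_url(url))
```

A script like this only surfaces candidates. Deciding which segments are actually redundant still takes a human look at the site hierarchy.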

For multilingual sites with non-Latin characters, choose an encoding and stick with it. If you opt for encoded URLs, configure your CMS to always generate this version. If you prefer raw characters, validate that your server and Analytics tools handle them correctly.

What mistakes should you avoid at all costs?

Never overhaul your URLs without a comprehensive redirect matrix. Test every 301 before production. A common mistake: bulk-redirecting to the homepage or generic categories — you lose semantic relevance.
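Before testing live 301s, the redirect matrix itself can be checked offline. The sketch below assumes the matrix is a simple mapping of old paths to new paths (a hypothetical format; yours may be a spreadsheet or server config) and flags three patterns: redirects to the homepage, redirect chains, and entries that redirect to themselves.

```python
from urllib.parse import urlsplit


def check_redirect_map(redirects: dict[str, str]) -> dict[str, list[str]]:
    """Flag risky entries in an old-path -> new-path redirect matrix."""
    report = {"to_homepage": [], "chains": [], "self_redirects": []}
    for old, new in redirects.items():
        # A target whose path is empty or "/" is the homepage.
        if urlsplit(new).path in ("", "/"):
            report["to_homepage"].append(old)
        if new == old:
            report["self_redirects"].append(old)
        elif new in redirects:
            # The target is itself redirected, so this entry starts a chain.
            report["chains"].append(old)
    return report


# Hypothetical matrix for illustration.
redirects = {
    "/old-shoes": "/shoes/running",
    "/old-blog": "/",
    "/a": "/b",
    "/b": "/c",
}
report = check_redirect_map(redirects)
print(report)
```

This does not replace requesting each old URL and checking the status code and final destination. It only catches structural mistakes in the matrix before anything is deployed.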

Avoid non-canonicalized dynamic URLs. If your site generates ?sort=price, ?color=red, ?page=2, ensure each variant points to a clean canonical URL. Otherwise, you fragment your signals.
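As a rough illustration, the canonical target for a parameterized URL can be computed by stripping the parameters that only change presentation. The parameter set below is taken from the examples in the paragraph above and is an assumption. Which parameters are safe to strip, pagination in particular, depends on the site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that do not define a distinct page.
NON_CANONICAL_PARAMS = {"sort", "color", "page"}


def canonical_url(url: str) -> str:
    """Return the URL without non-canonical parameters and without fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in NON_CANONICAL_PARAMS
    ]
    # urlunsplit omits the "?" when the remaining query string is empty.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


# Hypothetical URLs for illustration.
print(canonical_url("https://example.com/shoes?sort=price&color=red"))
print(canonical_url("https://example.com/shoes?id=7&sort=price"))
```

The value returned here is what would go into the `rel="canonical"` link of each variant. Parameters that do identify distinct content, such as `id` in the second example, are preserved.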

Don't fall into the opposite trap: rewriting all URLs into ultra-short slugs without business logic. A URL must remain descriptive. /mens-running-shoes-nike-air-zoom-pegasus-39 is long but effective. /p12345 is short but useless.

How do you verify your structure is optimal?

- Audit your URLs in Google Search Console: identify indexed pages with unnecessary parameters

- Verify internal linking consistency: every link should point to the normalized version

- Test click depth: no strategic page should be more than 3 clicks from the homepage

- Check canonical tags: every URL variant must point to the master version

- Analyze server logs to identify unnecessarily crawled URLs (facets, filters, sessions)

- Measure organic CTR by URL: a drop may signal truncation or confusion in SERPs
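The click-depth check in this list lends itself to a short script. The sketch below runs a breadth-first search over an internal link graph, assumed here to be a dictionary mapping each page to the pages it links to (for instance built from a crawler export). The graph and page paths are made up for illustration.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Return the minimum number of clicks from the homepage to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            # BFS visits pages in order of distance, so the first visit is the shortest path.
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical link graph.
links = {
    "/": ["/shoes", "/blog"],
    "/shoes": ["/shoes/running"],
    "/shoes/running": ["/shoes/running/pegasus-39"],
    "/shoes/running/pegasus-39": ["/shoes/running/pegasus-39/reviews"],
}
depths = click_depths(links)
too_deep = [page for page, depth in depths.items() if depth > 3]
print(too_deep)
```

Pages that never appear in the result are unreachable from the homepage through internal links, which is worth investigating separately.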

❓ Frequently Asked Questions

Does Google penalize long or complex URLs?

Do you absolutely need to move your URLs to HTTPS to benefit from this recommendation?

Do URLs with accents or special characters cause problems?

Will changing my current URLs improve my ranking?

What maximum length should you recommend for a URL?

Source: Google Search Central video, published on 05/03/2026.