Official statement

Other statements from this video 14 ▾

- 5:33 Peut-on vraiment contrôler quelle image apparaît dans les résultats de recherche texte ?

- 8:40 Faut-il vraiment uploader sa liste de désaveu uniquement sur le domaine actuel ?

- 10:06 Pourquoi Google classe-t-il vos pages internes au-dessus de votre page catégorie ?

- 11:21 Pourquoi le test d'URL publique échoue-t-il si souvent dans Search Console ?

- 13:33 Pourquoi Google privilégie-t-il la qualité du contenu sur la technique face au statut 'Crawlé - non indexé' ?

- 15:15 Est-ce que des pages « Crawlé - non indexé » pénalisent tout votre site ?

- 16:27 Pourquoi Google détecte-t-il mes pages catégories e-commerce comme du contenu dupliqué ?

- 18:55 Comment Google interprète-t-il réellement l'intention derrière vos requêtes ?

- 21:21 Les URLs simples influencent-elles vraiment le classement Google ?

- 22:22 Pourquoi Google peut-il ignorer votre JavaScript si vous placez un noindex dans le head ?

- 24:24 Les iframes dans le <head> sabotent-elles vraiment votre SEO ?

- 26:06 Comment vérifier précisément le comportement des redirections pour Googlebot ?

- 28:06 Une redirection 301 mal configurée peut-elle bloquer l'indexation de vos pages ?

- 30:28 Comment contrôler la date affichée dans les résultats de recherche Google ?

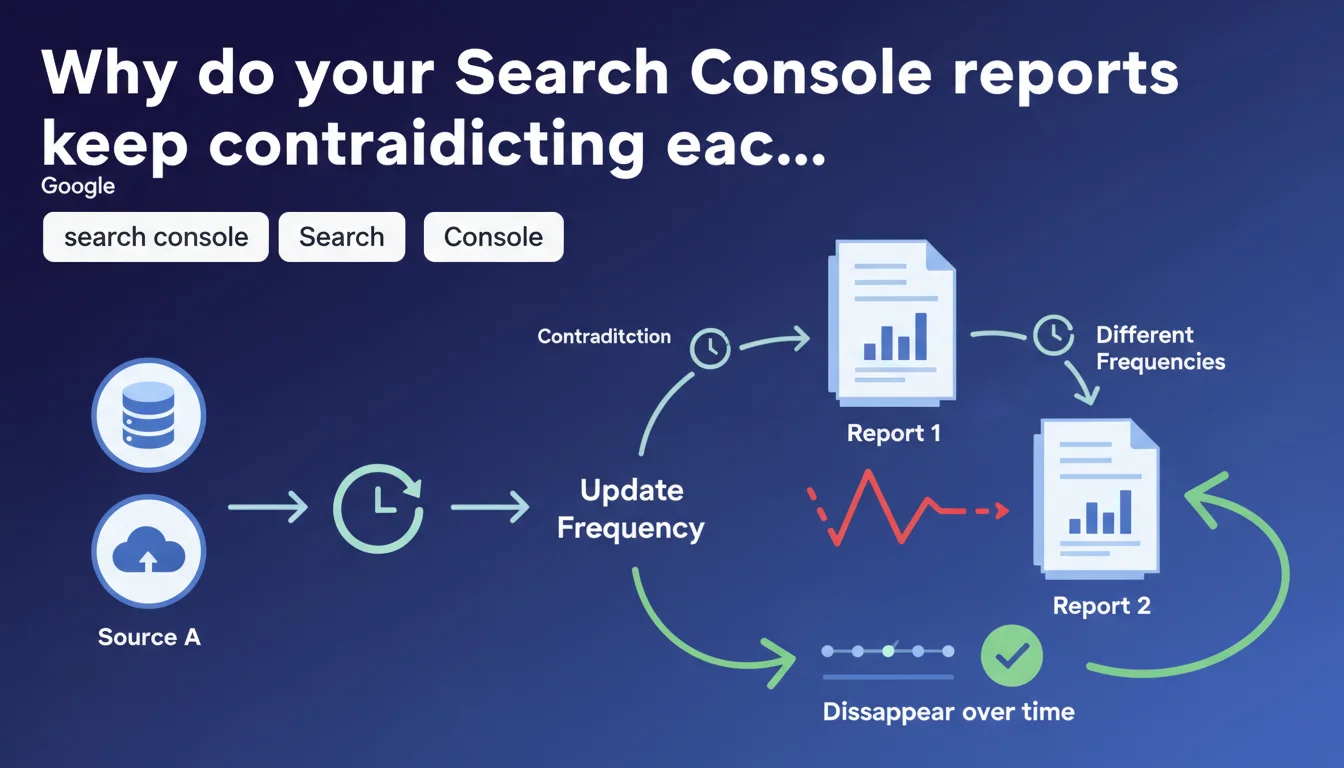

Google confirms that different Search Console reports pull from separate data sources and update at varying frequencies. These lags create temporary inconsistencies between tools, which resolve on their own as the systems catch up. In practice: stop panicking when the numbers don't match.

What you need to understand

Why does Search Console display contradictory data?

Search Console tools don't all pull from the same database. The performance report, URL inspection, coverage report, and page experience report each rely on different sources and update asynchronously.

Concretely? A page can appear indexed in the URL inspection tool but be missing from the performance report. Or the opposite: impressions show up in your statistics while the page is flagged as an error elsewhere.

Are these inconsistencies a bug worth reporting?

No. Google states it clearly: these lags are normal and temporary. The different systems synchronize at their own pace, and the gaps eventually close.

The problem is that Google doesn't specify typical timelines or the conditions that prolong these inconsistencies. This ambiguity feeds anxiety among practitioners monitoring their migrations or technical fixes.

What are the key takeaways?

- Search Console reports use separate data sources and don't update simultaneously

- Contradictions between tools are expected and temporary, not anomalies

- These gaps usually disappear over time without manual intervention

- Google provides no specific timeline for resolving these inconsistencies

- Making SEO decisions based on a single report can lead to analytical errors

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, and it's actually a relief that Google officially acknowledges it. In the field, these contradictions are commonplace, especially after a migration, a large-scale rollout of fixes, or the launch of a new section.

The issue is the complete lack of granularity. Google doesn't say which reports are authoritative, or which ones synchronize first. A practitioner who sees a page validated in URL inspection but still flagged as an error in the coverage report doesn't know if they should wait 24 hours or 3 weeks.

What nuances should be added to this claim?

[To verify]: Google claims these inconsistencies "generally disappear over time", but never defines what "time" means. On sites with low crawl frequency, these gaps can persist for weeks, even months.

Another critical point: some contradictions never resolve. When a page is blocked by robots.txt but still shows impressions in performance data, that's not a temporary lag. A robots.txt rule blocks crawling, not indexing: Google can keep a URL it already knew (or discovered through links) in its index and continue serving it in certain contexts.

When should you still be concerned?

If an inconsistency persists beyond 2-3 weeks on a daily-crawled site, that's a warning sign. It can indicate a structural problem: orphaned pagination, URLs canonicalized to non-existent pages, or conflicts between sitemaps and robot directives.

Practical impact and recommendations

What should you actually do when facing these contradictions?

First, don't panic. If you just fixed a technical error or submitted a new sitemap, wait at least 48-72 hours before concluding it's not working.

Then document everything. Take screenshots of different reports with timestamps. This lets you track progress and see if an inconsistency resolves or stalls. Your server logs remain your source of truth: if Googlebot actually crawls your pages and gets a 200, everything else is just a matter of propagation time.
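The log check described above can be sketched in a few lines of Python. This is a minimal illustration, assuming your server writes the common "combined" access log format; the `googlebot_hits` helper and the sample lines are hypothetical, made up for the example rather than taken from a real site.

```python
import re
from collections import defaultdict

# Combined Log Format (assumed):
# host ident user [time] "METHOD path protocol" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Map each requested path to the status codes served to Googlebot."""
    hits = defaultdict(list)
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # skip lines that do not follow the assumed format
        if "Googlebot" not in match.group("agent"):
            continue  # keep only requests that claim to be Googlebot
        hits[match.group("path")].append(int(match.group("status")))
    return dict(hits)


# Made-up sample lines, for illustration only.
sample = [
    '66.249.66.1 - - [12/Mar/2026:10:15:32 +0000] "GET /category/shoes HTTP/1.1" '
    '200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [12/Mar/2026:10:16:01 +0000] "GET /old-page HTTP/1.1" '
    '404 312 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [12/Mar/2026:10:16:05 +0000] "GET /category/shoes HTTP/1.1" '
    '200 5123 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

for path, statuses in googlebot_hits(sample).items():
    print(path, statuses)
```

Matching on the user-agent string alone is a shortcut: any client can claim to be Googlebot, so a stricter check would also verify the requesting IP, for example with a reverse DNS lookup.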

What mistakes should you absolutely avoid?

Don't repeatedly submit your URLs for indexing via the inspection tool. Resubmitting does not fix report synchronization, and it burns through the tool's limited daily request quota, which you may need for genuinely new or updated pages.

Another trap: trying to force a bulk recrawl by artificially changing the lastmod dates in your XML sitemap. Google relies on lastmod only when it proves consistently accurate, so inflated dates risk being ignored rather than rewarded. If a page is technically correct, Google will eventually crawl it; forcing the issue is counterproductive.

How do you verify your analysis is reliable?

- Always cross-reference at least 3 sources: URL inspection, coverage report, server logs

- Wait a minimum of 72 hours after a technical fix before concluding it failed

- Document inconsistencies with timestamped screenshots to track their evolution

- Verify in your server logs that Googlebot actually accesses the affected pages

- Never rely solely on the performance report to validate indexation

- If a contradiction persists beyond 3 weeks on an active site, look for a structural problem
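The waiting rules in this checklist can be turned into a small tracking script. The sketch below is one possible way to do it, not an official method: the `Observation` record, the two helper functions, and the idea of logging one manual observation per check are all assumptions made for illustration. Only the 3-day and 21-day thresholds come from the checklist itself.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Observation:
    """One manual check of a URL, noted on a given day (hypothetical record)."""
    day: date
    url: str
    inspection_indexed: bool  # URL inspection reported the page as indexed
    coverage_error: bool      # coverage report flagged the page as an error


def contradiction_age_days(observations, url, today):
    """Days since the current unbroken run of contradictory checks began.

    Returns None when the latest check for the URL shows no contradiction.
    """
    history = sorted((o for o in observations if o.url == url), key=lambda o: o.day)
    start = None
    for obs in history:
        if obs.inspection_indexed and obs.coverage_error:
            if start is None:
                start = obs.day  # first day of the current contradictory run
        else:
            start = None  # reports agreed again, so reset the run
    return None if start is None else (today - start).days


def triage(age_days, wait_days=3, alarm_days=21):
    """Translate the age of a contradiction into the checklist's advice."""
    if age_days is None:
        return "consistent"
    if age_days < wait_days:
        return "wait"
    if age_days <= alarm_days:
        return "monitor"
    return "investigate"


# Made-up observations, for illustration only.
log = [
    Observation(date(2026, 3, 1), "/category/shoes", True, True),
    Observation(date(2026, 3, 10), "/category/shoes", True, True),
]
age = contradiction_age_days(log, "/category/shoes", date(2026, 3, 25))
print(age, triage(age))
```

Keeping the thresholds as parameters makes it easy to loosen them for sites that Googlebot visits less often, where gaps can reasonably last longer.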

❓ Frequently Asked Questions

How long should you wait for an inconsistency between Search Console reports to resolve?

Which Search Console report is the most reliable for checking indexing?

Can I submit my URLs several times to speed up synchronization?

Can a page generate impressions even if it appears as an error in the coverage report?

How can you tell whether an inconsistency is normal or reveals a real technical problem?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 05/03/2026
