Official statement

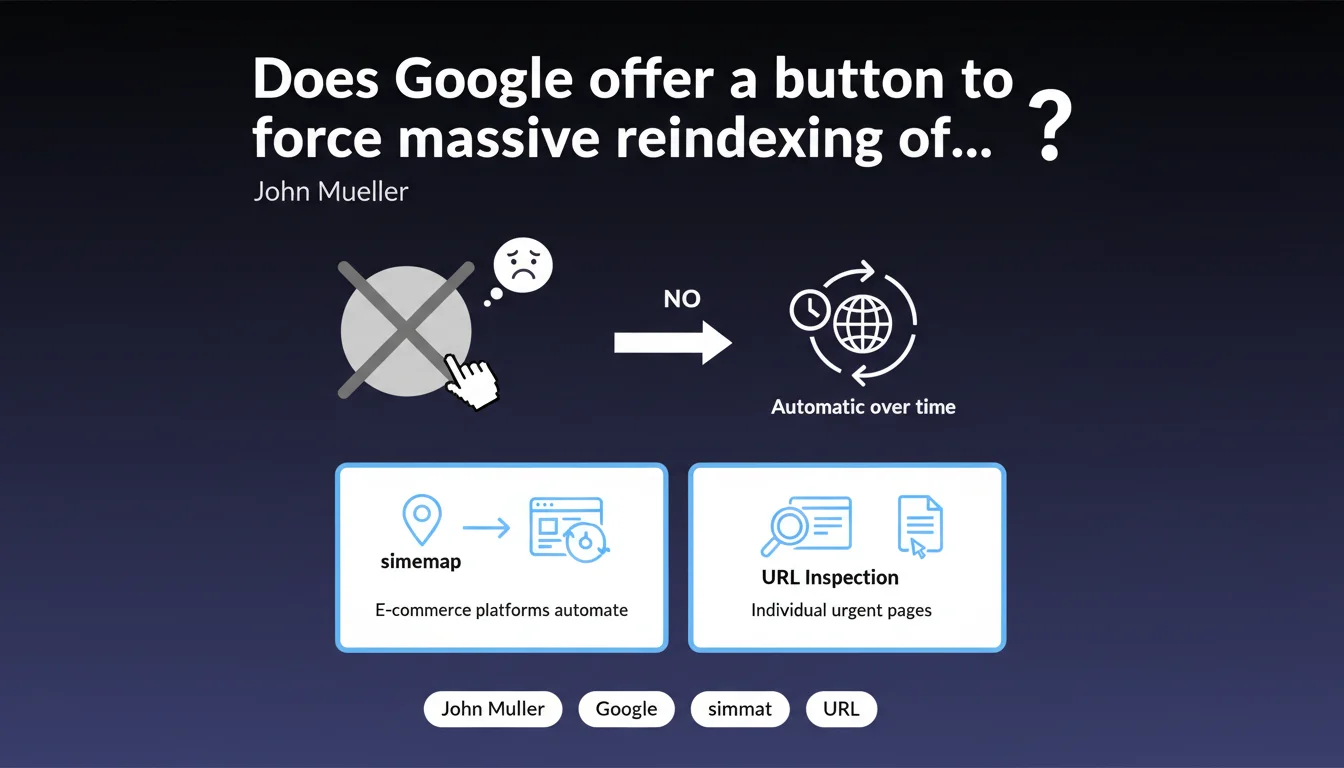

Google provides no button to force reprocessing of an entire website. Reprocessing happens automatically, at Google's own pace. To speed up indexing of a few critical pages, only the sitemap and the URL Inspection tool are available, and neither forces anything at scale.

What you need to understand

This statement addresses a recurring expectation: being able to force Google to reindex an entire website after a migration, redesign, or major structural change. The reality is harsher: Google gives you no lever to trigger this global reprocessing.

The search engine alone decides the pace and depth of its crawl. You can signal, suggest, but never impose.

Why does Google refuse to provide this type of functionality?

Imagine a "reindex everything" button open to everyone: every website could summon Googlebot at will, with or without a good reason. Google's crawl capacity would become unmanageable. Millions of sites would trigger the request at the slightest change, overloading servers and diverting resources from the sites that genuinely need priority.

Google therefore favors a declarative approach: you signal your content via sitemap, it decides when and how to process it. This isn't a matter of goodwill, it's an infrastructure constraint.

Are the sitemap and URL Inspection tool truly sufficient?

The XML sitemap serves as an informational signal, not an execution order. Google consults it, detects lastmod changes, but nothing guarantees an immediate crawl. On websites with thousands of pages, certain URLs may wait weeks before being recrawled, even when listed in the sitemap.

The URL Inspection tool allows you to request indexing of a single page — useful for an occasional emergency (error correction, time-sensitive content). But using this tool for 500 URLs manually? Impractical. And above all, Google reserves the right to refuse these requests if it deems them too frequent or unjustified.

What does "automatically over time" concretely mean?

This vague formula hides an uncomfortable truth: you don't control the timeline. The reprocessing delay depends on your authority, your crawl budget, the site's usual update frequency, and Googlebot's load at that moment.

An established e-commerce site may see its strategic pages recrawled within hours. A niche site with few backlinks and a history of low activity may wait several weeks. Google gives no guarantees, no SLA. Patience becomes an SEO skill.

- No global reindexing button exists or will exist, for infrastructure reasons.

- The XML sitemap and URL Inspection are the only official levers, but remain suggestions, not commands.

- The reprocessing delay varies based on site authority, history, and crawl budget.

- Google remains sovereign in its crawl priorities — you signal, it decides.

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Completely. Practitioner feedback has confirmed this reality for years: no sitemap manipulation, no manual submission forces massive recrawl. Sites thinking they accelerate indexing by submitting their sitemap daily are wasting their time.

However, certain unofficial techniques (modifying structured data markup, pointing new backlinks at strategic pages, updating related content) sometimes seem to trigger a Googlebot visit. These observations remain anecdotal and are hard to reproduce at scale. It remains to be verified whether these correlations reflect causality or mere coincidence of timing.

What nuances should be added to this official position?

Mueller implies that e-commerce platforms manage sitemaps automatically. This generally holds for Shopify, WooCommerce, or PrestaShop in typical setups. But many custom or hybrid sites lack dynamic sitemap generation, or worse, publish obsolete sitemaps that no one updates.

Another point: the URL Inspection tool is presented as a solution for "individual urgent pages." But in practice, Google limits the number of requests possible per day. On a 10,000-page site undergoing migration, you can only submit a handful. The rest must await Googlebot's discretion.

In what cases might this rule pose a problem?

During an urgent migration under legal or commercial constraint, the lack of a reindexing lever can become critical. Imagine a brand that must withdraw a product for health reasons: if Google is slow to de-index the old listing, it stays visible in SERPs for days.

Similarly, news or event-driven sites face a structural disadvantage: their content loses value within hours, but Google may take several days to incorporate new URLs. Competitors who benefit from higher crawl budgets gain the advantage mechanically. This is a treatment inequality that Google never explicitly acknowledges.

Practical impact and recommendations

What should you concretely do to maximize your chances of rapid reprocessing?

Prioritize your site's perceived freshness. Google tends to recrawl more often the sites that regularly publish new content. An active blog, product listing updates, additions to a news section: any activity signal increases the probability of frequent Googlebot visits.

Next, optimize your sitemap to be truly informative. Remove URLs blocked by robots.txt, redirects, error pages. Use the <lastmod> tag consistently: if you modify it without reason, Google will eventually ignore it. A clean and reliable sitemap becomes a genuine signal.
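As an illustration of a sitemap that stays informative, here is a minimal Python sketch. It assumes you already know, for each page, its URL, the date its content last really changed, and whether it is indexable. The `build_sitemap` function and the shape of the `pages` tuples are hypothetical names chosen for this example, not part of any Google tool.

```python
from datetime import date
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build a sitemap XML string.

    pages: iterable of (url, last_modified, indexable) tuples, where
    last_modified is the date the content actually changed (assumption:
    your CMS or database can provide it) and indexable is False for
    redirects, error pages, or URLs blocked by robots.txt or noindex.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified, indexable in pages:
        if not indexable:
            continue  # keep only URLs you actually want crawled
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # lastmod reflects the real content change, not the generation time
        ET.SubElement(entry, "lastmod").text = last_modified.isoformat()
    return ET.tostring(urlset, encoding="unicode")


example_pages = [
    ("https://example.com/products/a", date(2023, 3, 1), True),
    ("https://example.com/old-page", date(2023, 1, 1), False),  # redirected
]
sitemap_xml = build_sitemap(example_pages)
```

The point of the design is that `lastmod` comes from the content's own modification date rather than from the moment the file is regenerated, so the value only moves when something really changed.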

Finally, work on your crawl budget. Reduce unnecessary facets, redundant URL parameters, redirect chains. The more time Googlebot spends on valueless pages, the fewer resources it dedicates to strategic content. The goal: maximize efficiency of each visit.
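Redirect chains are one of the easier crawl-budget leaks to detect offline. The sketch below assumes you have exported your redirect rules as a simple source-to-target mapping (for example from server configuration). The function names `resolve_redirects` and `find_chains` are hypothetical, and the code only inspects the mapping, it does not make any HTTP request.

```python
def resolve_redirects(redirects, start, max_hops=10):
    """Follow a source -> target mapping from `start`.

    Returns the list of URLs visited, starting with `start`.
    Stops on a loop or after `max_hops` redirects.
    """
    chain = [start]
    seen = {start}
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if target in seen:
            break  # redirect loop detected
        seen.add(target)
    return chain


def find_chains(redirects):
    """Return every source whose path involves more than one hop."""
    chains = {}
    for source in redirects:
        chain = resolve_redirects(redirects, source)
        if len(chain) > 2:  # source -> intermediate -> ... -> final
            chains[source] = chain
    return chains


rules = {"/a": "/b", "/b": "/c", "/x": "/y"}
multi_hop = find_chains(rules)
```

Each source reported by `find_chains` is a candidate for pointing directly at its final destination, so Googlebot spends one request instead of several.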

What mistakes should you absolutely avoid?

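Never bombard Google with mass manual indexing requests. Some third-party tools promise to automate submissions through Google's APIs, but the URL Inspection API only reports a page's index status, and the Indexing API is officially reserved for job posting and livestream pages. Google can detect these patterns and may ignore the requests, and abuse could plausibly count against the site.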

Another classic mistake: publishing a giant 50,000-URL sitemap all at once after a redesign. Google will crawl it, but at its own pace. It is better to segment sitemaps by category or priority and to submit the critical sections first, then the rest progressively.
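Segmenting by category can be sketched in a few lines of Python. This example assumes that the first path segment of each URL identifies its section, which will not hold for every site. The `sitemap-<section>.xml` file naming and the function names are hypothetical choices for illustration.

```python
from collections import defaultdict
from urllib.parse import urlparse
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def group_by_section(urls):
    """Group URLs by their first path segment ('root' for the homepage)."""
    groups = defaultdict(list)
    for url in urls:
        path = urlparse(url).path.strip("/")
        section = path.split("/")[0] if path else "root"
        groups[section].append(url)
    return dict(groups)


def build_sitemap_index(base_url, sections):
    """Build a sitemap index that points to one sitemap file per section."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for section in sections:
        entry = ET.SubElement(index, "sitemap")
        # Assumed naming scheme: one file per section at the site root
        ET.SubElement(entry, "loc").text = f"{base_url}/sitemap-{section}.xml"
    return ET.tostring(index, encoding="unicode")


urls = [
    "https://example.com/shoes/runner",
    "https://example.com/shoes/trail",
    "https://example.com/blog/sizing-guide",
]
sections = group_by_section(urls)
index_xml = build_sitemap_index("https://example.com", sections)
```

With one file per section, Search Console reports coverage per sitemap, which makes it easier to see which part of the site is lagging after a redesign.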

Finally, don't confuse crawling and indexing. Google may crawl a page without indexing it if deemed low quality or duplicate. Requesting a recrawl solves nothing if the problem is structural — thin content, cannibalization, poor architecture.

How can you verify that your site is properly configured for automatic reprocessing?

- Verify that the XML sitemap is declared in robots.txt and submitted in Google Search Console.

- Check that <lastmod> dates align with actual content modifications.

- Ensure no sitemap URL is blocked by robots.txt, noindex, or 4xx/5xx errors.

- Monitor crawl stats in GSC: a sharp drop signals a technical issue or budget problem.

- Identify strategic pages and track their recrawl frequency via server logs.

- Reduce chained redirects, loops, and unnecessary URL parameters to save crawl budget.

- Publish fresh content regularly to maintain a high Googlebot visit frequency.
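Tracking recrawl frequency through server logs, as suggested in the checklist, can start from something as small as the following Python sketch. It assumes access logs in the common "combined" format used by Apache and Nginx. The `googlebot_hits` function is a hypothetical helper, and matching on the user-agent string alone is an approximation, since that string can be spoofed (Google documents a reverse DNS check for stricter verification).

```python
import re
from collections import Counter

# Pattern for the "combined" access log format (assumption: your server
# uses it; adjust the pattern if your log format differs).
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Count requests per path whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


sample = [
    '66.249.66.1 - - [09/Mar/2023:10:00:00 +0000] "GET /products/a HTTP/1.1" '
    '200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; '
    '+http://www.google.com/bot.html)"',
    '203.0.113.7 - - [09/Mar/2023:10:00:05 +0000] "GET /products/a HTTP/1.1" '
    '200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
counts = googlebot_hits(sample)
```

Running this over a few weeks of logs and comparing the counts for your strategic URLs gives a rough picture of how often each one is revisited.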

❓ Frequently Asked Questions

Can you force Google to reindex an entire site after a redesign?

Does the XML sitemap guarantee fast indexing of new pages?

Can the URL Inspection tool replace a mass reindexing button?

How long does it take for a site to be fully recrawled after a migration?

Does changing the lastmod dates in the sitemap speed up recrawling?

🎥 From the same video 16

Other SEO insights extracted from this same Google Search Central video · published on 09/03/2023
