Official statement

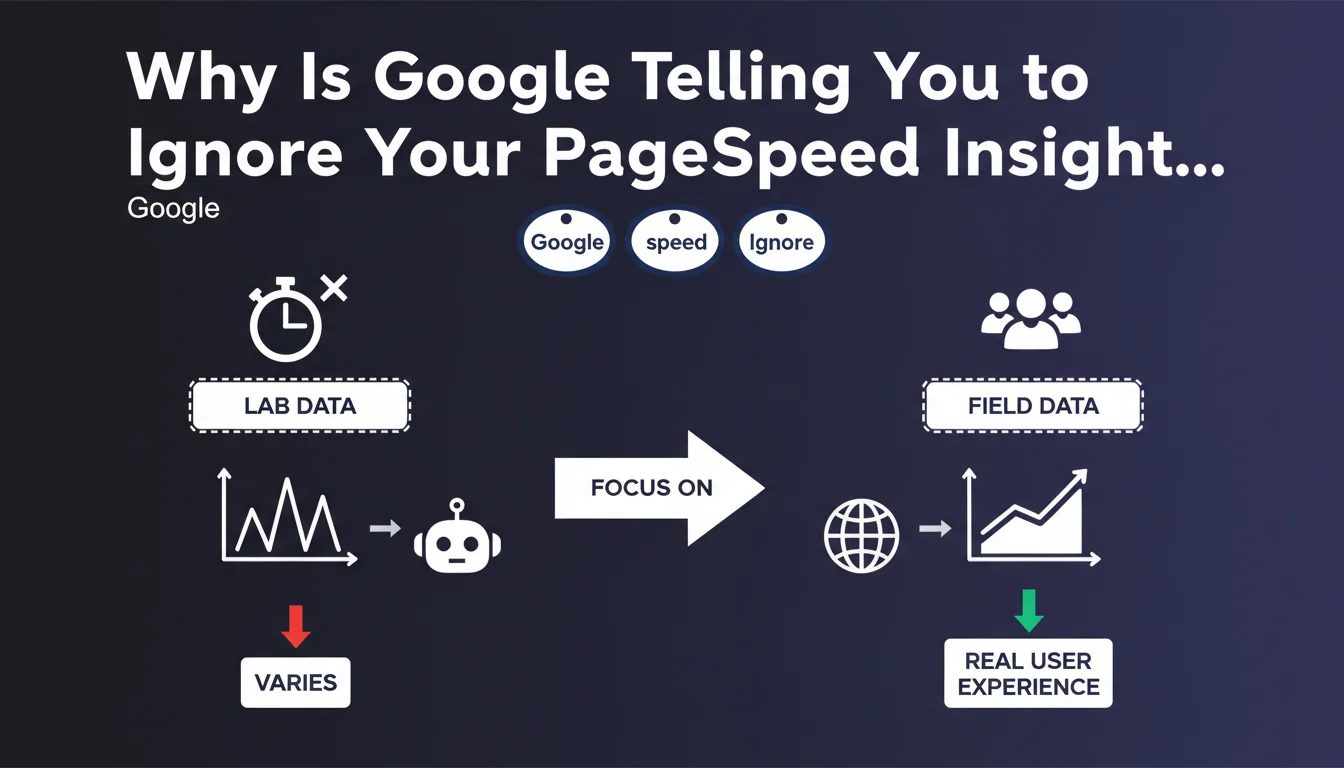

Google recommends prioritizing field data from real users over PageSpeed Insights lab tests, which fluctuate naturally. Synthetic measurements are useful for diagnosis, but only real-world metrics reflect actual user experience and impact rankings.

What you need to understand

What's the actual difference between field data and lab testing?

Your field data comes from the Chrome User Experience Report (CrUX), which collects real-world performance from actual users visiting your pages. These metrics shift based on device type, connection speed, and usage context.

Your lab data from PageSpeed Insights simulates a page load in a controlled, standardized environment. Lighthouse generates these scores with fixed parameters: the same emulated device, the same throttled connection, the same empty cache every time. Even so, server response times, network conditions, and load on the test machine vary from run to run, so scores can swing 10-15 points between runs even if your site hasn't changed at all.
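One common way to dampen this run-to-run noise is to take the median of several runs rather than trusting a single one. The sketch below assumes you have already collected the scores yourself; the function name and the sample values are illustrative, not real measurements.

```python
from statistics import median


def median_lighthouse_score(scores: list[float]) -> float:
    """Return the median of several Lighthouse performance scores (0-100)."""
    if not scores:
        raise ValueError("at least one Lighthouse run is required")
    return median(scores)


# Hypothetical scores from five consecutive runs of the same, unchanged page.
runs = [62, 74, 68, 59, 71]

print(median_lighthouse_score(runs))  # 68
print(max(runs) - min(runs))          # 15 (spread between best and worst run)
```

The median is less sensitive than the mean to a single unusually slow or fast run, which is why it is a reasonable summary for a handful of lab tests.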

Why is Google making such a big deal about this distinction right now?

Because too many SEO professionals fixate on the Lighthouse score displayed prominently in PageSpeed Insights. That 0-100 number gets treated as a target, even though it only represents a single snapshot in a controlled lab environment.

For ranking purposes, Google relies only on Core Web Vitals measured in real-world conditions: LCP, INP (which replaced FID as a Core Web Vital in March 2024), and CLS. A site with a mediocre lab score but excellent field metrics won't be penalized. The reverse is equally true: a 95/100 in the lab won't save you from a ranking drop if real users are experiencing degraded performance.

How do I actually access field data for my own website?

Three main sources: the CrUX section in PageSpeed Insights (top section, before the Lighthouse recommendations), Google Search Console (Core Web Vitals report, under Experience), and the CrUX Dashboard on Looker Studio (formerly Google Data Studio). These tools report the 75th percentile of each metric, which is the value Google compares against its thresholds to evaluate your site.

If your site doesn't have enough traffic, CrUX might lack sufficient data. In that case, lab tests are your only indicator — but they tell you nothing about how you'll rank.
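Field data can also be pulled programmatically from the CrUX API. The following is a minimal sketch rather than a complete client: it assumes you have your own API key, the helper names are hypothetical, and the sample response at the bottom is made up to show the expected shape. An origin without enough traffic is assumed to come back as HTTP 404, which the sketch treats as "no data".

```python
import json
import urllib.error
import urllib.request

CRUX_ENDPOINT = "https://chromeuxreport.googleapis.com/v1/records:queryRecord"


def build_request_body(origin: str, form_factor: str = "PHONE") -> dict:
    """Build the JSON body asking for the three Core Web Vitals of an origin."""
    return {
        "origin": origin,
        "formFactor": form_factor,
        "metrics": [
            "largest_contentful_paint",
            "interaction_to_next_paint",
            "cumulative_layout_shift",
        ],
    }


def extract_p75(response: dict) -> dict:
    """Pull the 75th-percentile value of each metric out of a CrUX response."""
    metrics = response.get("record", {}).get("metrics", {})
    p75 = {}
    for name, data in metrics.items():
        value = data.get("percentiles", {}).get("p75")
        if value is not None:
            # CLS is returned as a string, so normalise everything to float.
            p75[name] = float(value)
    return p75


def fetch_p75(origin: str, api_key: str) -> dict:
    """Query the CrUX API; return {} when the origin has no field data."""
    request = urllib.request.Request(
        f"{CRUX_ENDPOINT}?key={api_key}",
        data=json.dumps(build_request_body(origin)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    try:
        with urllib.request.urlopen(request) as resp:
            return extract_p75(json.load(resp))
    except urllib.error.HTTPError as err:
        if err.code == 404:  # assumed: not enough traffic for this origin
            return {}
        raise


# Made-up response fragment, only to illustrate the parsing step.
sample = {
    "record": {
        "metrics": {
            "largest_contentful_paint": {"percentiles": {"p75": 2300}},
            "cumulative_layout_shift": {"percentiles": {"p75": "0.08"}},
        }
    }
}
print(extract_p75(sample))
```

Calling `fetch_p75("https://example.com", "YOUR_API_KEY")` would perform the real request; the parsing is kept separate so it can be checked without network access.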

- Field data (CrUX) reflects real experience and directly impacts rankings

- Lab tests (Lighthouse) are useful diagnostics but naturally fluctuate

- Google uses the 75th percentile of real metrics to determine whether your site passes Core Web Vitals thresholds

- A strong Lighthouse score is no guarantee of good rankings if your field data is poor

- For low-traffic sites, CrUX may lack data — rely on lab tests as a temporary placeholder
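To make the 75th-percentile rule concrete, here is a small sketch that computes a p75 from raw samples and rates it against the published Core Web Vitals thresholds. The nearest-rank percentile method and the sample values are assumptions for illustration; CrUX's internal aggregation may differ in detail.

```python
import math

# (good upper bound, poor lower bound) for each Core Web Vital.
THRESHOLDS = {
    "lcp_ms": (2500, 4000),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def p75(samples: list[float]) -> float:
    """75th percentile using the nearest-rank method (an assumption)."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric: str, value: float) -> str:
    """Classify a p75 value as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


# Hypothetical LCP samples (milliseconds) from eight page views.
lcp_samples = [1200, 1800, 2100, 2400, 2600, 3100, 3900, 5200]
value = p75(lcp_samples)
print(value, rate("lcp_ms", value))  # 3100 needs improvement
```

Note that half of these visits are under 2.5 seconds, yet the page still does not pass: at least 75% of visits must meet the threshold.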

SEO Expert opinion

Does this advice match what we actually see working in the real world?

Absolutely. We regularly see sites with catastrophic Lighthouse scores (30-40/100) ranking beautifully in search results because their CrUX metrics are excellent. Conversely, sites optimized obsessively for Lighthouse complain about zero ranking improvement — because in the real world, with dynamic content, A/B tests, and third-party scripts, the actual experience degrades.

The core problem: too many SEO audits just grab a PageSpeed screenshot and make technical recommendations based on Lighthouse. That's incomplete. A thorough audit must cross-reference both data sources — and critically, track field data trends over several weeks.

What important nuances should we add to this recommendation?

If your site doesn't have enough traffic to appear in CrUX, lab tests become your only signal. In that case, optimize for Lighthouse — not for the overall score, but for individual metrics (LCP, CLS, TBT) that foreshadow your real Core Web Vitals.

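Watch out for geographic variations too. CrUX aggregates data by origin (protocol + hostname + port) or by URL, across all countries, so a site performing well in Europe might tank in Asia if your CDN is misconfigured, and the global figure will not show it. PageSpeed Insights has no regional filter; for a per-country breakdown, use the country-level tables in the CrUX BigQuery dataset or segment your own RUM data by region.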

And let's be real: a Lighthouse score remains an effective communication tool with clients or leadership. But you must explain clearly that this number is only diagnostic, not a business objective. Keep in mind that Google has never published a hard correlation between Core Web Vitals improvements and traffic gains: case studies exist, but results vary wildly by industry and use case.

When does this rule actually break down?

For staging sites or unpublished pages, field data simply doesn't exist. Here, lab tests are essential for catching regressions before you go live.

Similarly, if you're deploying a major redesign, you'll need to wait 28 days (CrUX collection window) before measuring real impact. Until then, Lighthouse helps validate that your technical foundation is solid.
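The effect of the 28-day window can be estimated with simple date arithmetic. This is a rough sketch under stated assumptions: it treats the window as exactly 28 trailing days, ignores any publication lag in CrUX, and assumes traffic is spread evenly across days. The function names and dates are hypothetical.

```python
from datetime import date, timedelta

CRUX_WINDOW_DAYS = 28


def fully_reflected_on(deploy_day: date) -> date:
    """Approximate day from which the trailing window holds only post-deploy data."""
    return deploy_day + timedelta(days=CRUX_WINDOW_DAYS)


def post_deploy_share(deploy_day: date, today: date) -> float:
    """Rough fraction of the trailing window collected after the deploy."""
    days_since = (today - deploy_day).days
    return max(0.0, min(1.0, days_since / CRUX_WINDOW_DAYS))


deploy = date(2024, 6, 1)
print(fully_reflected_on(deploy))                    # 2024-06-29
print(post_deploy_share(deploy, date(2024, 6, 15)))  # 0.5
```

Two weeks after a release, roughly half of the reported data still describes the old version, which is why an improvement appears gradually rather than overnight.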

Practical impact and recommendations

What concrete steps should I take to actually prioritize field data?

First move: verify your site in Google Search Console and open Experience > Core Web Vitals. Google shows you which URLs are poor, need improvement, or are good. This is your source of truth, not the Lighthouse score in PageSpeed Insights.

Next, set up RUM monitoring (Real User Monitoring) using tools like SpeedCurve, Treo, or Google's open-source web-vitals JavaScript library. These continuously collect metrics from your actual visitors without depending on CrUX's 28-day rolling window.

Then, segment your analysis ruthlessly. A mediocre origin-level CrUX result can hide massive disparities: your homepage might be flawless while your product pages drag down the overall figure. Identify critical URLs and prioritize those.
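If you collect your own RUM samples, segmentation can be as simple as grouping them by URL pattern before computing the percentile. The sketch below is illustrative only: the URL prefixes (`/product/`, `/category/`, `/blog/`), the nearest-rank percentile, and the sample data are all assumptions to adapt to your own site.

```python
import math
from collections import defaultdict
from urllib.parse import urlparse


def page_type(url: str) -> str:
    """Map a URL to a page type using hypothetical path prefixes."""
    path = urlparse(url).path
    if path in ("", "/"):
        return "homepage"
    if path.startswith("/product/"):
        return "product"
    if path.startswith("/category/"):
        return "category"
    if path.startswith("/blog/"):
        return "article"
    return "other"


def p75(values: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def lcp_p75_by_page_type(samples: list[tuple[str, float]]) -> dict:
    """Group (url, lcp_ms) samples by page type and compute p75 per group."""
    groups = defaultdict(list)
    for url, lcp_ms in samples:
        groups[page_type(url)].append(lcp_ms)
    return {name: p75(values) for name, values in groups.items()}


# Made-up RUM samples: (URL, LCP in milliseconds).
samples = [
    ("https://example.com/", 1400),
    ("https://example.com/", 1600),
    ("https://example.com/product/a", 3800),
    ("https://example.com/product/b", 4200),
    ("https://example.com/product/c", 2900),
    ("https://example.com/blog/x", 2000),
]
print(lcp_p75_by_page_type(samples))
# {'homepage': 1600, 'product': 4200, 'article': 2000}
```

In this invented dataset the homepage is comfortably fast while product pages are the segment that needs attention first.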

What mistakes should I actively avoid when analyzing performance?

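Never compare a lab test run on a MacBook Pro over fiber with field data from users on 3G mobile. The execution environments are completely different. By default, the mobile Lighthouse test in PageSpeed Insights emulates a mid-range Android phone (a Moto G4 in older versions) on a throttled 4G connection: more realistic than a desktop, but still one fixed profile rather than the range of devices your visitors actually use.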

Don't obsess over Lighthouse's composite score (that green number everyone stares at). This score combines several lab metrics using fixed weights that don't reflect your actual business priorities. Focus instead on LCP, CLS, and INP, the three metrics Google actually uses for ranking.
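The composite score is a weighted average of per-metric scores, which is how a poor individual metric can hide behind a respectable total. The sketch below uses the weights documented for Lighthouse 10 (FCP 10%, Speed Index 10%, LCP 25%, TBT 30%, CLS 25%); weights have changed between versions, so treat them as an assumption, and note that the per-metric scores (0 to 1) are made-up inputs. INP does not appear because it cannot be measured in a lab run; TBT serves as its lab proxy.

```python
# Weights as documented for Lighthouse 10; other versions differ.
LIGHTHOUSE_10_WEIGHTS = {
    "fcp": 0.10,
    "si": 0.10,
    "lcp": 0.25,
    "tbt": 0.30,
    "cls": 0.25,
}


def composite_score(metric_scores: dict) -> int:
    """Weighted average of per-metric scores (each 0-1), scaled to 0-100."""
    total = sum(
        weight * metric_scores[name]
        for name, weight in LIGHTHOUSE_10_WEIGHTS.items()
    )
    return round(total * 100)


# Hypothetical page: excellent on everything except LCP.
scores = {"fcp": 0.95, "si": 0.90, "lcp": 0.40, "tbt": 0.98, "cls": 1.0}
print(composite_score(scores))  # 83
```

A composite of 83 looks acceptable, yet the LCP score of 0.40 is exactly the kind of problem that would show up in field data.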

Final trap: ignoring seasonal variation. An e-commerce site's metrics can tank during sales season due to traffic surges, extra promotional banners, and intensive A/B testing. These temporary dips don't justify panic-driven technical overhauls.

How do I verify my site meets Google's expectations?

Check the Core Web Vitals report in Search Console regularly. If all your URLs show green (Good), you're compliant. If some fail, Google tells you exactly which URLs and which metrics are problematic.

Then compare with PageSpeed Insights data for those same URLs. If CrUX shows a problem but Lighthouse is pristine, look at third-party resources (analytics, ads, chat widgets), CDN caching, or geographic variations.
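The comparison logic above can be written down as a small decision helper. This is only a sketch: the function name is hypothetical, it looks at LCP alone, and it uses the 2.5-second "good" threshold for both data sources as a simplifying assumption.

```python
def diagnose_lcp_gap(
    field_p75_ms: float,
    lab_ms: float,
    good_threshold_ms: float = 2500,
) -> str:
    """Compare field (CrUX p75) and lab (Lighthouse) LCP for the same URL."""
    field_ok = field_p75_ms <= good_threshold_ms
    lab_ok = lab_ms <= good_threshold_ms
    if field_ok and lab_ok:
        return "consistent: both pass"
    if not field_ok and lab_ok:
        # Lab looks clean but real users suffer.
        return "field-only problem: check third-party scripts, CDN caching, geography"
    if field_ok and not lab_ok:
        # Real users are fine; the lab result is a diagnostic hint only.
        return "lab-only problem: users pass, treat lab findings as diagnostics"
    return "consistent: both fail"


print(diagnose_lcp_gap(field_p75_ms=3400, lab_ms=1900))
print(diagnose_lcp_gap(field_p75_ms=2100, lab_ms=3200))
```

The second case matters as much as the first: a failing lab run on a page whose visitors already pass is a lower priority than the reverse.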

Set up automated alerting whenever a CrUX metric breaches your critical threshold. Tools like Treo or SpeedCurve let you receive a notification if LCP at the 75th percentile (the same percentile Google evaluates) exceeds 2.5 seconds. Quick response prevents isolated regressions from spreading.

- Install and actively monitor Search Console's Core Web Vitals report

- Configure RUM monitoring to track real-world metrics in real-time

- Segment analysis by page type (homepage, category, product detail, article)

- Run 3-5 consecutive Lighthouse tests and calculate the median score

- Compare CrUX and lab data side-by-side to identify gaps and root causes

- Set up automatic alerts on critical thresholds (LCP > 2.5s, CLS > 0.1, INP > 200ms)

- Stop chasing Lighthouse's composite score — focus on individual metrics instead

- Regularly audit third-party resources (analytics scripts, ads, widgets) that degrade real-world performance

❓ Frequently Asked Questions

Is CrUX data available for every site?

Should you ignore Lighthouse recommendations entirely?

What is the difference between FID and INP in Core Web Vitals?

Can a poor Lighthouse score hurt my rankings?

How long does it take for my optimizations to show up in CrUX?

Other SEO insights extracted from this same Google Search Central video · published on 13/06/2024