Official statement

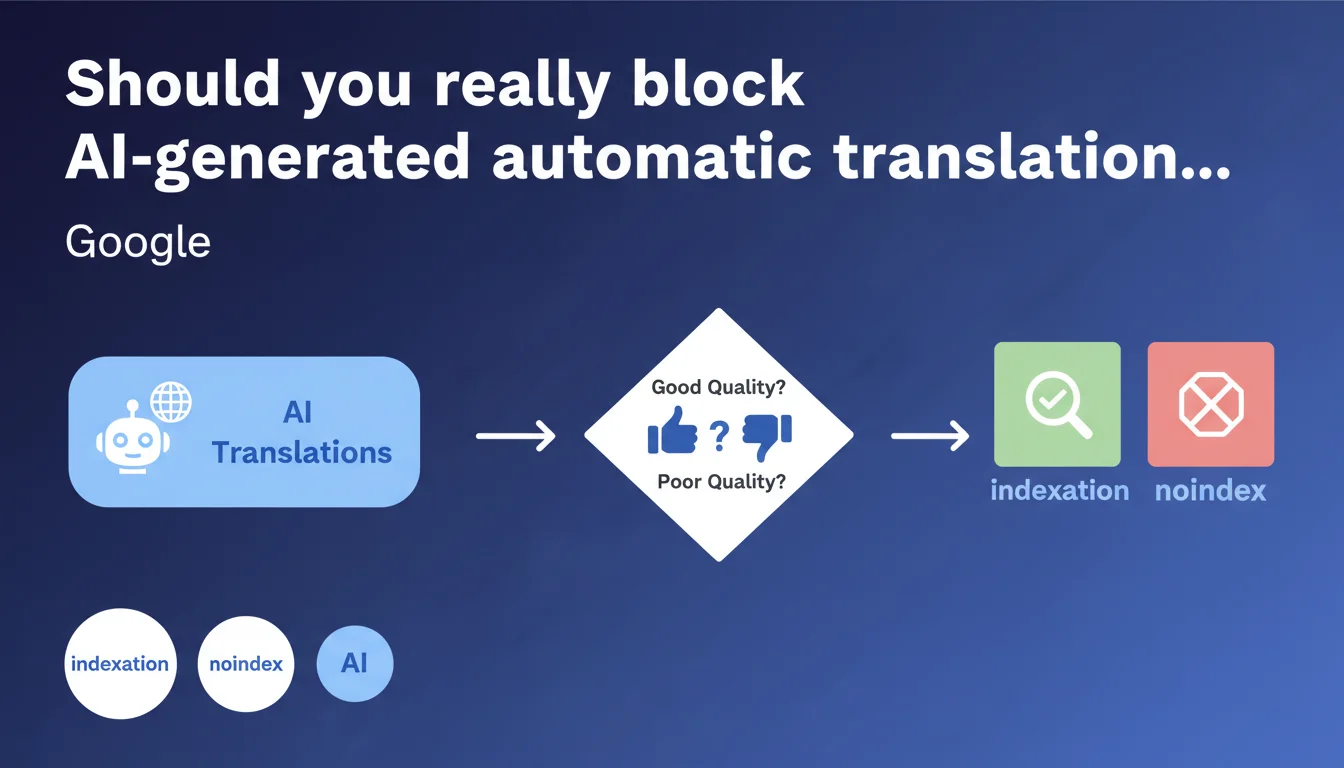

Google states that no specific markup is required to flag automatic translations. The only condition: the translated content must be high-quality and useful. Otherwise, noindex is needed to keep these low-value pages out of the index.

What you need to understand

Does Google penalize automatic translations by default?

No. The official position is clear: automatic translation is not, in itself, a ranking demotion criterion. What matters is the final quality and its usefulness to the user. Google makes no distinction between human and AI-generated translation as long as the result is usable.

This statement is in line with Google's position on AI-generated content: the production method matters less than the result. No technical signal to send, no mandatory declaration. Only the value delivered counts.

What is a "high-quality" automatic translation according to Google?

That's where it gets tricky — Google deliberately remains vague. We can infer that this covers the usual criteria: absence of gross grammatical errors, semantic coherence, respect for cultural context, natural readability. But no precise metric is provided.

Practically speaking? If your users bounce immediately or if time on page plummets on translated versions, that's a strong signal that quality isn't there. Behavioral signals become your main barometer.

Why doesn't Google propose dedicated markup?

Because specific markup would amount to treating human and automatic translations differently, which Google officially refuses to do. Without a technical marker, publishers have to focus on quality rather than on optimizing signals.

It also simplifies processing: no new structured data to parse, no risk of manipulation through false declarations. Google prefers to rely on its existing quality-detection algorithms.

- No specific markup is required for automatic translations

- Quality and usefulness are the only stated indexation criteria

- Noindex is the recommended fallback for low-quality translations

- Google relies on its existing algorithms to evaluate relevance

- Behavioral signals become a key indicator of quality

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes and no. On large-scale multilingual sites, good-quality automatic translations do get indexed without issue: no manual action, no systematic demotion. E-commerce platforms that generate thousands of automatically translated product pages are not sanctioned as long as readability remains acceptable.

But, and this is where the official discourse becomes more debatable, the notion of "good quality" remains entirely subjective. [To be verified] Google provides no threshold, no concrete examples, no metric. This is a purely qualitative criterion, which leaves enormous room for interpretation.

In what cases do automatic translations really pose problems?

Three critical scenarios emerge from the field. First case: literal translations that break the meaning. Idiomatic expressions, wordplay, cultural references: everything that requires human adaptation. Recent AI tools (GPT-4, DeepL Pro) perform better than older translation engines, but they remain fallible.

Second case: technical or legal content. A translation error in a contract clause or in instructions for use can have legal consequences. Google may not penalize you, but your users will leave, and engagement signals will plummet.

Third case: multiplying language versions without real demand. Creating 50 language versions of a blog targeting only France dilutes your crawl budget and pollutes the index. Even if each page is technically "high-quality," the whole becomes noise.

What nuances should be added to this official statement?

Google says "no problem if it's high quality." Let's be honest: this is a position of principle, not an operational guarantee. In practice, automatic detection of linguistic quality remains imperfect. Google likely relies on language models to evaluate coherence, but these models have limitations.

Furthermore, the absence of specific markup doesn't mean the absence of detection. Google very likely can detect automatic translations via linguistic patterns, repetitive phrasing, typical errors. It simply chooses not to make this an explicit penalty criterion.

Practical impact and recommendations

What should you do concretely before deploying automatic translations?

Test on a restricted sample. Don't deploy 10,000 translated pages at once. Start with 50 to 100 representative pages, wait 2 to 4 weeks, analyze performance. If engagement signals are comparable to original versions, you can scale.
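
A minimal sketch of picking such a pilot sample, assuming you already have your candidate pages tagged with a content type (the `Page` class, its labels and `pick_pilot_sample` are hypothetical names for illustration). Sampling within each content type keeps the pilot representative, and a fixed seed makes the selection reproducible so you compare the same pages throughout the 2 to 4 weeks.

```python
import random
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Page:
    url: str
    content_type: str  # hypothetical labels, e.g. "product" or "blog"


def pick_pilot_sample(pages: list[Page], size: int = 100, seed: int = 42) -> list[Page]:
    """Pick about `size` pages, spread proportionally across content types."""
    by_type: dict[str, list[Page]] = defaultdict(list)
    for page in pages:
        by_type[page.content_type].append(page)

    rng = random.Random(seed)  # fixed seed: the same pilot on every run
    sample: list[Page] = []
    for content_type in sorted(by_type):
        group = by_type[content_type]
        # Proportional share of the pilot, with at least one page per type
        share = max(1, round(size * len(group) / len(pages)))
        sample.extend(rng.sample(group, min(share, len(group))))
    # Rounding can overshoot `size` slightly; trim to the requested size
    return sample[:size]
```

With 900 product pages and 100 blog posts, a 100-page pilot contains 90 product pages and 10 blog posts.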

Next, segment by content type. An e-commerce product page with structured fields translates well automatically; a blog article full of cultural nuance, much less so. Adapt your strategy to the nature of the content.

Finally, implement human review on strategic pages. Homepage, service pages, landing pages for paid campaigns: anything with a direct business impact deserves manual quality control. For the rest, automation may suffice if overall quality is acceptable.
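
The segmentation and review rules in this section can be expressed as a simple routing function. This is only an illustrative sketch: the content types, the `business_critical` flag and the three review levels are hypothetical labels, not anything Google defines.

```python
from enum import Enum


class Review(Enum):
    MACHINE_ONLY = "machine translation, no review"
    SPOT_CHECK = "machine translation + sampled human check"
    HUMAN_REVIEW = "machine translation + full human review"


# Hypothetical content types built from structured fields (specs, prices...)
STRUCTURED_TYPES = {"product", "category"}


def review_level(content_type: str, business_critical: bool) -> Review:
    """Decide how much human review a translated page needs before indexing."""
    if business_critical:
        # Homepage, service pages, paid-campaign landing pages: always reviewed
        return Review.HUMAN_REVIEW
    if content_type in STRUCTURED_TYPES:
        # Structured content tends to survive automatic translation well
        return Review.MACHINE_ONLY
    # Editorial content with cultural nuance: check a sample by hand
    return Review.SPOT_CHECK
```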

What mistakes must you absolutely avoid with automatic translations?

First mistake: translating existing URLs without proper 301 redirects. If /produit-example becomes /product-example, redirect the old URL to the new one with a 301 so that ranking signals are transferred. Otherwise, you start from scratch on each language version.
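
When URLs do change, a quick sanity check on the redirect map helps ensure that each old URL points in a single hop to its final destination, without chains or loops. A minimal sketch, assuming the map is a plain `old path -> new path` dictionary (a hypothetical format; adapt it to your server or CMS export); `resolve_redirects` is an illustrative name.

```python
def resolve_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Collapse chains so each old URL 301s directly to its final target.

    Raises ValueError if the map contains a redirect loop.
    """
    resolved = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until reaching a URL that is not itself redirected
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {source}")
            seen.add(target)
            target = redirects[target]
        resolved[source] = target
    return resolved
```

The resolved map can then feed your server's 301 rules, so that no old URL goes through several hops before reaching the translated page.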

Second mistake: implementing hreflang tags incorrectly. Google needs to understand the relationship between your language versions. An hreflang error can cause duplicate-content issues or keep certain versions from being indexed.
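
hreflang annotations must be reciprocal: every language version lists all versions, including itself. A minimal sketch that generates the `<link rel="alternate" hreflang="...">` tags for one cluster of equivalent pages; the function name and the example URLs are hypothetical, and the `x-default` entry is optional.

```python
from html import escape
from typing import Optional


def hreflang_tags(versions: dict[str, str], x_default: Optional[str] = None) -> list[str]:
    """Build the <link> tags to place in the <head> of EVERY page in the cluster.

    `versions` maps a language (or language-region) code to the absolute URL
    of that version, e.g. {"fr": "https://example.com/fr/produit"}.
    """
    tags = [
        f'<link rel="alternate" hreflang="{lang}" href="{escape(url)}" />'
        for lang, url in sorted(versions.items())
    ]
    if x_default is not None:
        # Fallback for users whose language matches none of the listed versions
        tags.append(f'<link rel="alternate" hreflang="x-default" href="{escape(x_default)}" />')
    return tags
```

Because every page in the cluster receives the same list, the annotations are reciprocal and self-referencing by construction. Codes follow ISO 639-1, optionally with an ISO 3166-1 alpha-2 region (for example `fr-CA`).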

Third mistake: indexing incomplete or in-progress translations. If your automatic translation tool generates content progressively, temporarily block indexation until the page is complete. Truncated content will be perceived as low-quality.
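
A minimal sketch of that safeguard, assuming your translation pipeline can report how many text segments of a page are already translated (the segment counts and `robots_meta` are hypothetical). The page carries a `noindex` robots directive until the translation is complete; the same value can also be sent as an `X-Robots-Tag` HTTP header instead of a meta tag.

```python
def robots_meta(translated_segments: int, total_segments: int) -> str:
    """Return the robots meta tag for a translated page.

    Incomplete translations are kept out of the index with noindex;
    "follow" still allows crawlers to follow the links on the page.
    """
    if total_segments <= 0:
        raise ValueError("total_segments must be positive")
    complete = translated_segments >= total_segments
    content = "index, follow" if complete else "noindex, follow"
    return f'<meta name="robots" content="{content}" />'
```

Google has to be able to crawl the page to see the noindex, so do not also block these URLs in robots.txt.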

How do you verify that your automatic translations are indexable?

First step: manual audit on a sample. Read your translations as if you were a native speaker. If you're not bilingual, get someone who is. Gross errors jump out.

Second step: analyze engagement metrics by language in Google Analytics. Compare user behavior between original and translated versions. A significant gap (>30%) in average time or bounce rate is an alarm signal.
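
The 30% threshold is easiest to apply as a relative gap between each translated version and the original. A minimal sketch, assuming you have exported one average value per language for a metric such as average engagement time (the data shape and `flag_engagement_gaps` are hypothetical). Gaps are flagged in both directions, since the point is a significant gap rather than only a drop.

```python
def flag_engagement_gaps(metric_by_lang: dict[str, float], source_lang: str,
                         threshold: float = 0.30) -> dict[str, float]:
    """Return the languages whose metric deviates from the source by more than `threshold`.

    The gap is relative: |translated - original| / original.
    """
    baseline = metric_by_lang[source_lang]
    if baseline == 0:
        raise ValueError("baseline metric must be non-zero")
    flagged = {}
    for lang, value in metric_by_lang.items():
        if lang == source_lang:
            continue
        gap = abs(value - baseline) / baseline
        if gap > threshold:
            flagged[lang] = round(gap, 3)
    return flagged
```

For example, with an average engagement time of 60 s on the French original, a German version at 36 s (40% gap) is flagged, while an English version at 55 s (about 8%) is not.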

Third step: use Search Console to identify pages that are indexed but never clicked. If your translated versions appear in search results (impressions) but generate zero clicks, either the metadata was poorly translated or the content doesn't match local search intent.
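
A minimal sketch for spotting those pages in a Performance report export, assuming a CSV with `Top pages`, `Clicks` and `Impressions` columns (check the headers of your own export, they may differ) and a hypothetical URL structure where the language is the first path segment, as in `/en/product`. The 100-impression floor is an arbitrary assumption meant to filter out pages that simply have too little exposure to get clicks.

```python
import csv
import io
from collections import defaultdict
from urllib.parse import urlparse


def zero_click_pages(csv_text: str, min_impressions: int = 100) -> dict[str, list[str]]:
    """Group pages that have impressions but zero clicks by language prefix.

    Assumes columns "Top pages", "Clicks", "Impressions" (adjust to your export)
    and URLs shaped like https://example.com/<lang>/... (hypothetical).
    """
    result = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        clicks = int(row["Clicks"])
        impressions = int(row["Impressions"])
        if clicks == 0 and impressions >= min_impressions:
            path = urlparse(row["Top pages"]).path
            # First path segment is taken as the language code
            lang = path.strip("/").split("/")[0] or "(root)"
            result[lang].append(row["Top pages"])
    return dict(result)
```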

- Test translations on a sample of 50-100 pages before mass deployment

- Segment by content type (product sheets vs editorial articles)

- Manually review pages with high business impact

- Verify URL consistency and implement 301 redirects if necessary

- Implement hreflang tags correctly for each language version

- Never publish incomplete translations — temporarily block them with noindex

- Compare engagement metrics (time on page, bounce) between versions

- Monitor indexed but unclicked pages in Search Console

- Establish a quality control process for strategic pages

❓ Frequently Asked Questions

Do I need to tell Google that my translations are automatic?

Can I index translations generated by ChatGPT or DeepL?

How can I tell whether Google considers my translations to be of good quality?

What should I do if my automatic translations are mediocre but not disastrous?

Do automatic translations waste crawl budget?

Source: Google Search Central video on YouTube, published on 13/06/2024.