Official statement

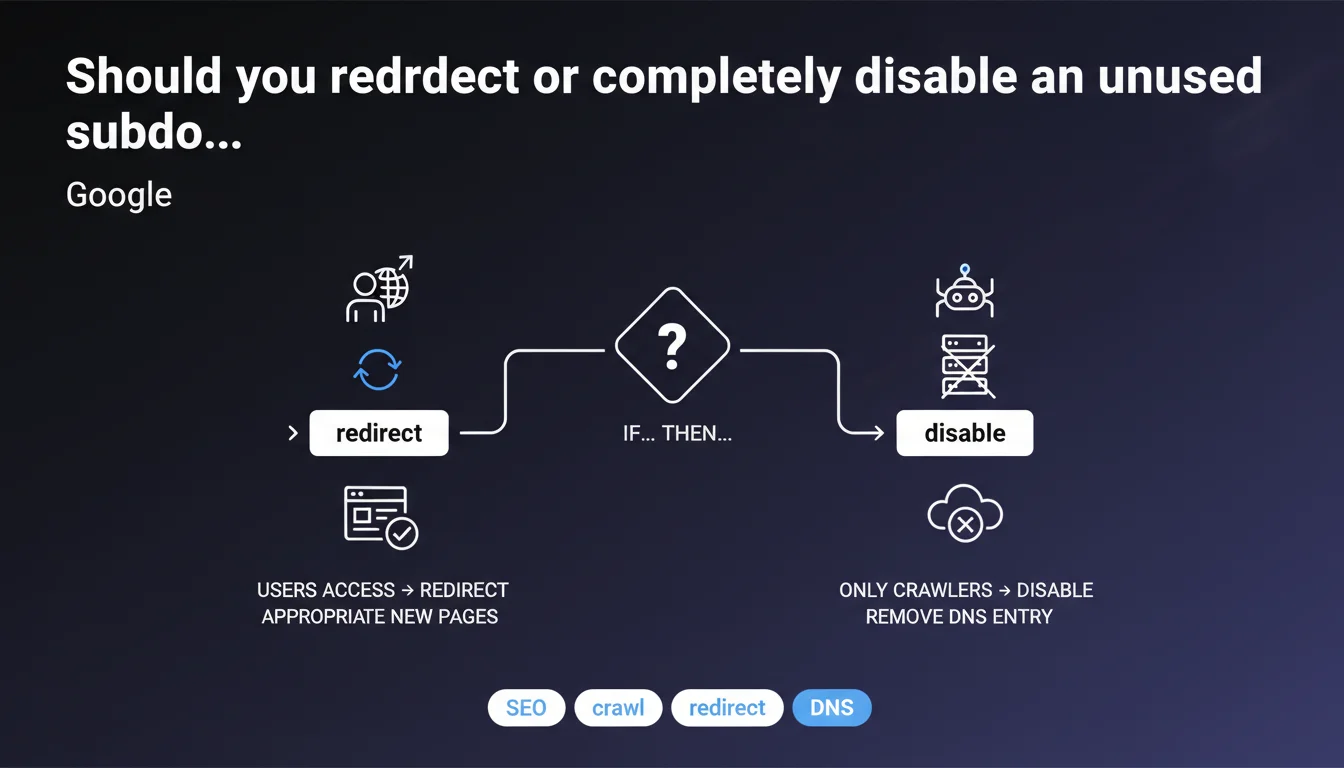

Google distinguishes two scenarios. If users still visit the old subdomain, a 301 redirect to the new pages is essential. If only bots access it, disabling the subdomain completely (removing its DNS entry) is acceptable and avoids wasting crawl budget.

What you need to understand

Why does Google make this distinction between user traffic and crawl activity?

The logic is straightforward: user experience comes first. If someone types your old subdomain URL or clicks an external link pointing to it, they should land somewhere relevant — not on a 404 error or DNS failure. That's why Google recommends redirection in this case.

But if the subdomain only receives crawler visits — because there are no active external links, no user bookmarks — then keeping it online only wastes crawl budget. There's no point maintaining it.

What exactly does Google mean by "removing the DNS entry"?

Concretely, deleting the DNS record (A, AAAA, or CNAME) for the subdomain means the server never even receives the requests. The hostname simply stops resolving.

It's more drastic than returning a 410 HTTP status or blocking crawling in robots.txt. The crawler attempts to resolve the hostname, fails, and quickly gives up. Google eventually removes the URLs from its index.
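To make the "hostname stops resolving" idea concrete, here is a minimal Python sketch of a resolution check. The function name `hostname_resolves` and the injectable `resolver` parameter are illustrative assumptions, not something Google or the article prescribes. It relies only on the standard library's `socket.getaddrinfo`.

```python
import socket
from typing import Callable


def hostname_resolves(hostname: str, resolver: Callable = socket.getaddrinfo) -> bool:
    """Return True if the hostname resolves to at least one address.

    `resolver` is injectable (an assumption of this sketch) so the check can be
    exercised without touching the network.
    """
    try:
        return len(resolver(hostname, None)) > 0
    except socket.gaierror:
        # Raised when name resolution fails, for example because the DNS
        # record was deleted. Temporary resolver failures raise it too,
        # so a single False is not proof that the record is gone.
        return False
```

Calling `hostname_resolves("old-subdomain.example.com")` after the record has been deleted (and cached entries have expired) would be expected to return `False`. Repeating the check from more than one network gives a more reliable picture.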

What are the essential points to remember?

- Existing user traffic: mandatory 301 redirect to relevant new pages

- Bot crawl only: DNS disabling acceptable to save crawl budget

- DNS removal: prevents the server from receiving requests, cleanest solution if no humans access the subdomain

- Verify before acting: analyze server logs to ensure there's truly no organic user traffic
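For the redirect scenario, "relevant new pages" implies a mapping from each old URL to a matching new one rather than a blanket redirect to the homepage. The sketch below shows one possible mapping rule in Python. The hostnames `blog.example.com` and `www.example.com`, the `/blog` prefix, and the function name `redirect_target` are all hypothetical; a real migration would use whatever structure the new site has.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit

OLD_HOST = "blog.example.com"  # hypothetical retired subdomain
NEW_HOST = "www.example.com"   # hypothetical main domain
NEW_PREFIX = "/blog"           # hypothetical section that replaces the subdomain


def redirect_target(old_url: str) -> Optional[str]:
    """Map a URL on the retired subdomain to its counterpart on the main domain.

    Returns None for URLs that are not on the retired subdomain.
    """
    parts = urlsplit(old_url)
    if parts.hostname != OLD_HOST:
        return None
    # Keep the original path and query string so each page lands on its equivalent.
    path = parts.path if parts.path.startswith("/") else "/" + parts.path
    return urlunsplit(("https", NEW_HOST, NEW_PREFIX + path, parts.query, ""))
```

The output of such a function can feed the server's redirect rules, which should answer with status 301.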

SEO Expert opinion

Is this recommendation consistent with real-world practices?

Yes, and it's common sense from a technical standpoint. I've seen dozens of sites maintain zombie subdomains (abandoned blog.example.com, obsolete support.example.com) simply because "it's always been there." Result: Googlebot keeps crawling these dead URLs, exhausting budget that could be allocated to strategic pages on the main domain.

The important nuance Google doesn't specify: before cutting the DNS entry, you must ensure there are no valuable backlinks still pointing to that subdomain. If you have incoming links from authority sites, a 301 redirect is smarter than outright deletion — even if user traffic is zero.

What are the gray areas in Google's statement?

Google says nothing about the deindexing timeline after DNS removal. From experience, it can take weeks or even months if the subdomain had many indexed pages. During that time, the URLs remain in the index and generate DNS errors.

Another point, still to be verified: Google doesn't clarify whether disabling a subdomain can have any impact (even minimal) on the main domain if the two were connected by internal links or structured data. On an e-commerce site whose blog subdomain was abruptly disabled, I once observed a slight overall decrease in crawling, though it is difficult to say whether it was correlated or coincidental.

In what cases does this rule not apply?

If your subdomain still hosts valuable historical content (e.g., forum archives, old technical documentation) accessible through Google search, disabling it abruptly will frustrate users finding these pages in SERPs. In that case, maintaining a redirect or even keeping the content accessible as read-only may be more prudent.

Practical impact and recommendations

What should you concretely do before disabling a subdomain?

First step: analyze at least 90 days of server logs. Distinguish bot traffic from human traffic. If you see only Googlebot, Bingbot, and a few scrapers, you're probably safe to disable.
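As a rough illustration of that log analysis, the Python sketch below counts bot and human hits from access log lines. It assumes the common "combined" log format, where the User-Agent is the last quoted field, and it uses a hypothetical, non-exhaustive list of crawler markers. User-Agent strings can be spoofed, so treat the result as a first pass, not as proof.

```python
import re
from collections import Counter

# Hypothetical, non-exhaustive markers; a substring match is only a heuristic.
BOT_MARKERS = ("googlebot", "bingbot", "bot", "crawler", "spider", "scrapy", "curl")

# Matches the final quoted field of a "combined" log line (the User-Agent).
USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')


def classify_hits(log_lines):
    """Count hits per category: 'bot', 'human', or 'unparsed'."""
    counts = Counter()
    for line in log_lines:
        match = USER_AGENT_RE.search(line)
        if not match:
            counts["unparsed"] += 1
            continue
        agent = match.group(1).lower()
        is_bot = any(marker in agent for marker in BOT_MARKERS)
        counts["bot" if is_bot else "human"] += 1
    return counts
```

A non-zero `human` count over the 90-day window is the signal that points toward a redirect rather than DNS removal.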

Second step: check external backlinks with Ahrefs, Majestic, or Search Console. If quality links still point to the subdomain, prioritize a 301 redirect: even if direct traffic is zero, you preserve the link equity those links pass.
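The two checks above can be combined into a simple decision rule. The Python sketch below is one way to encode it. The class `SubdomainEvidence`, its fields, and the strict "any human hit or any quality backlink means redirect" thresholds are assumptions made for illustration. In practice you would set thresholds that fit your own traffic.

```python
from dataclasses import dataclass


@dataclass
class SubdomainEvidence:
    human_hits: int         # visits attributed to real users in the log window
    quality_backlinks: int  # external links judged worth preserving


def recommended_action(evidence: SubdomainEvidence) -> str:
    """Return '301 redirect' or 'remove DNS record' based on the evidence."""
    if evidence.human_hits > 0:
        # Users still arrive: they should land on a relevant page.
        return "301 redirect"
    if evidence.quality_backlinks > 0:
        # No users, but valuable links remain: redirect to keep their value.
        return "301 redirect"
    # Only crawlers visit and nothing valuable points here.
    return "remove DNS record"
```

For example, `recommended_action(SubdomainEvidence(human_hits=0, quality_backlinks=3))` returns `"301 redirect"`, which reflects the backlink nuance raised earlier.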

What mistakes should you avoid when migrating or disabling?

Never disable a subdomain without first auditing the links on your main domain that point to it and setting up error monitoring. Why? Because if the main domain links to the subdomain, those links will break: after a DNS removal they fail with a resolution error, and after a server shutdown without redirects they return 404s.
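One way to catch those internal links before the cut is to scan the main domain's HTML for anchors that point at the subdomain. The Python sketch below does this for a single page with the standard library's `html.parser`. The class and function names are hypothetical, and fetching the pages (crawl, sitemap, CMS export) is left out.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


class SubdomainLinkFinder(HTMLParser):
    """Collect href values of <a> tags whose host is the retired subdomain."""

    def __init__(self, retired_host: str):
        super().__init__()
        self.retired_host = retired_host.lower()
        self.matches = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name != "href" or not value:
                continue
            try:
                host = urlsplit(value).hostname
            except ValueError:
                continue  # skip malformed URLs
            if host == self.retired_host:
                self.matches.append(value)


def links_to_retired_host(html: str, retired_host: str) -> list:
    """Return every link in `html` that targets `retired_host`."""
    finder = SubdomainLinkFinder(retired_host)
    finder.feed(html)
    return finder.matches
```

Each match is a link to update or remove on the main domain before the subdomain goes away.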

Another classic trap: removing the DNS entry before deciding whether redirects are needed. If you later conclude a redirect is necessary, you have to recreate the DNS record and the server configuration first, and every visit made in the meantime has already failed.

How do you verify that disabling goes smoothly?

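- Monitor Search Console: DNS errors should start appearing in the "Coverage" report (since renamed "Page indexing") within a few days

- Verify that the number of indexed pages from the subdomain decreases gradually (the search command site:old-subdomain.example.com gives a rough indication only)

- Check the logs: after a DNS removal, requests for the subdomain should no longer reach your server at all; if you chose a redirect instead, crawl requests should taper off over the following weeks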

- Ensure no traffic drop occurs on the main domain (sign of broken internal linking)

- Document the disabling date and reasons in your internal technical wiki

Disabling an unused subdomain looks simple on the surface, but it requires rigorous analysis of logs, backlinks, and internal linking to avoid side effects. A mistake can cost significant organic traffic.

If you manage a site with a complex multi-subdomain architecture or if you're torn between redirecting and pure disabling, consulting a specialized SEO agency can help you avoid costly mistakes. A thorough technical audit allows you to decide based on your specific context — backlinks, traffic history, internal linking — rather than applying a generic rule.

❓ Frequently Asked Questions

How long does it take Google to fully deindex a subdomain after DNS removal?

Can you disable a subdomain and reactivate it later without an SEO penalty?

Should a 301 redirect stay active indefinitely, or can it be removed after a while?

Can a disabled subdomain negatively affect Google's trust in the main domain?

Is a 410 Gone status or a DNS removal better for a permanently abandoned subdomain?


Other SEO insights extracted from this same Google Search Central video · published on 13/06/2024
