Official statement

Other statements from this video (11)

- Does Google Search Central documentation get an advantage in search results?

- Do you really need to check Search Console every day?

- Should you really always use a 301 redirect for a permanent URL change?

- Do you really need to fix every 404 on your site?

- Do you really need to split your sitemaps beyond 50,000 URLs?

- Should you really automate hreflang tags to manage multilingual sites?

- Do titles and meta descriptions really influence SEO beyond CTR?

- Does Google really rewrite your title tags however it sees fit?

- Should you really use nofollow links in your client case studies?

- How do you convince a development team to prioritize Core Web Vitals?

- Is FAQ structured data really effective for generating rich snippets?

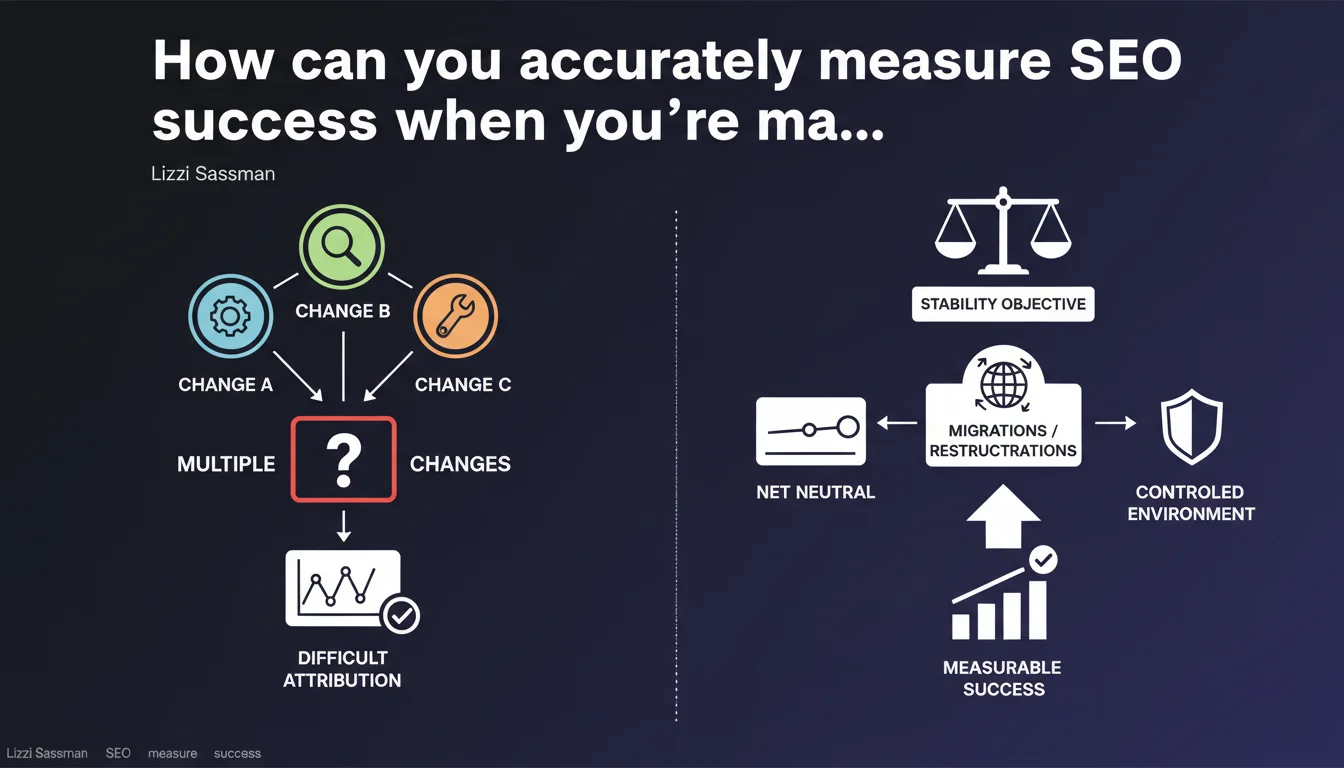

Google acknowledges that it's difficult to isolate the impact of a single optimization when multiple SEO changes are applied simultaneously. In certain cases, particularly during migrations or restructurings, aiming for stability (net neutral) rather than growth is a legitimate and realistic objective.

What you need to understand

Why does Google address this attribution question?

SEO practitioners are constantly seeking to identify the levers that generate the most impact. But in real-world scenarios, it's rare that a single modification is deployed in isolation. Most of the time, several optimizations go live within a compressed timeframe: technical redesign, content adjustments, internal linking modifications.

Here, Google acknowledges an operational reality: precise attribution of performance to one action or another becomes unclear the moment multiple parameters change. This statement validates what many practitioners have observed for years, and it introduces an interesting concept: the "net neutral" objective during structural operations.

What is this "stability" (net neutral) objective?

During a site migration or architecture restructuring, the primary objective isn't always to gain traffic. It's to not lose it. Google suggests here that a neutral result—maintaining visibility, organic traffic, and rankings—can be considered a success.

In practice, this means that a successful migration isn't necessarily one that doubles traffic in three months. It's one that preserves what's been gained, avoids massive losses in rankings and conversions, and establishes the foundation for future growth.

What are the practical consequences for reporting?

This statement challenges the linear attribution model often sold to clients. "We did X, so Y increased" becomes difficult to defend when ten optimizations are deployed in two weeks. You must accept—and make clients accept—that certain results are the product of a combined effect, not a single action.

That said, it doesn't mean you can't measure anything. You simply need to adjust your KPIs and time windows: observe overall trends, segment by page type, cross-reference multiple data sources.
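As one way to apply the "adjust your time windows" advice, the sketch below compares average daily clicks over equal, whole-week windows before and after a deployment date. It assumes you have exported a complete daily click series (for example from Search Console) into a Python dict keyed by date; the function names and the four-week default are illustrative choices, not a Google recommendation.

```python
from datetime import date, timedelta


def window_mean(daily_clicks: dict[date, int], start: date, days: int) -> float:
    """Average daily clicks over the days [start, start + days)."""
    # Indexing (not .get) raises KeyError on a missing day, so gaps in the
    # export are not silently counted as zero-click days.
    values = [daily_clicks[start + timedelta(days=d)] for d in range(days)]
    return sum(values) / days


def pre_post_change(daily_clicks: dict[date, int], deploy_day: date, weeks: int = 4) -> float:
    """Relative change between the `weeks` full weeks before and after deploy_day.

    Whole weeks on both sides give each window the same weekday mix,
    which removes most day-of-week seasonality from the comparison.
    """
    days = 7 * weeks
    before = window_mean(daily_clicks, deploy_day - timedelta(days=days), days)
    after = window_mean(daily_clicks, deploy_day, days)
    return (after - before) / before
```

A positive value only says traffic moved after the deployment date; if several changes shipped in the same window, it cannot tell them apart.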

- Precise attribution of an improvement to a specific optimization is rarely possible when multiple actions are undertaken simultaneously

- The "net neutral" objective (stability) is entirely acceptable during major migrations or restructurings

- Client reporting must integrate this reality and avoid promises of direct attribution when multiple levers are activated in parallel

- Observing overall trends and segmenting data by page type helps better understand the combined impact of optimizations

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Yes, and it's even refreshing. Google steps away here from the overly polished discourse of "test and measure everything." The reality is that pure SEO testing environments are rare. Few companies have the resources to deploy a single modification at a time, wait three months, measure, then move to the next one. SEO projects overlap, deadlines collide, and business dictates the pace.

What Google validates here—perhaps without saying it explicitly—is that the ideal "A/B test" approach is often a luxury. On complex sites with multiple teams, optimization is incremental, continuous, and attribution becomes an exercise in correlation, not causation.

Should you abandon any attempt at attribution?

No. But you need to adjust expectations. Fine-grained attribution remains possible in specific contexts: testing on a page segment, phased rollout by batches, use of control groups. But this requires methodological rigor that many organizations lack.
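A phased rollout needs a stable way to decide which pages receive a change and which stay untouched as a control group. A minimal sketch, assuming you can list the affected URLs: hash each URL together with a salt and take the result modulo the number of batches. The salt value, the batch count, and the convention that batch 0 serves as control are hypothetical parameters.

```python
import hashlib


def assign_batch(url: str, n_batches: int = 4, salt: str = "rollout-2022") -> int:
    """Deterministically map a URL to a batch number in [0, n_batches)."""
    digest = hashlib.sha256(f"{salt}:{url}".encode("utf-8")).hexdigest()
    return int(digest, 16) % n_batches


def split_rollout(urls: list[str], n_batches: int = 4,
                  salt: str = "rollout-2022") -> dict[int, list[str]]:
    """Group URLs by batch. Batch 0 can be kept unmodified as the control group
    while the other batches receive the change one after another."""
    batches: dict[int, list[str]] = {b: [] for b in range(n_batches)}
    for url in urls:
        batches[assign_batch(url, n_batches, salt)].append(url)
    return batches
```

Because the assignment depends only on the URL and the salt, re-running it later returns the same batches, which keeps the control group stable across reporting periods.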

The problem is that this statement remains vague on what Google concretely recommends. It acknowledges the difficulty but doesn't offer a framework for structuring the analysis. [To verify]: Does Google have internal attribution methodologies it could share with the SEO community?

Is "net neutral" really an acceptable objective?

During a technical migration—CMS change, HTTPS migration, architecture redesign—yes, absolutely. The priority is to not break what works. Any traffic gain is a bonus, but stability is already a victory.

Conversely, on a routine optimization project—content addition, internal linking adjustment, on-page optimization—aiming for neutrality would be a failure. The objective must remain growth. Using "net neutral" as an excuse for flat results on actions that should generate growth is misdiagnosing the situation.

Practical impact and recommendations

How should you structure tracking when multiple optimizations are deployed at the same time?

First rule: document everything. Rigorous changelog, timestamped deployments, segmentation of impacted pages. Even if fine-grained attribution is difficult, this traceability allows you to cross-reference data retroactively and identify correlations.
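A changelog is most useful when it can be queried. The sketch below models entries as a small dataclass and, given the date a traffic shift was observed on a page type, lists every change deployed on that page type in a preceding lookback window. The field names and the 28-day lookback are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ChangeEntry:
    deployed_on: date
    page_type: str      # e.g. "product", "category", "blog"
    nature: str         # e.g. "title rewrite", "internal linking"
    url_count: int = 0  # how many URLs the change touched


def candidate_causes(changelog: list[ChangeEntry], shift_day: date,
                     page_type: str, lookback_days: int = 28) -> list[ChangeEntry]:
    """Changes on `page_type` deployed within `lookback_days` before shift_day, oldest first."""
    earliest = shift_day - timedelta(days=lookback_days)
    matches = [
        c for c in changelog
        if c.page_type == page_type and earliest <= c.deployed_on <= shift_day
    ]
    return sorted(matches, key=lambda c: c.deployed_on)
```

When the query returns more than one entry, as it often will, that is exactly the attribution ambiguity described above: the log narrows the candidates but does not pick a single cause.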

Next, you must segment your analysis. Rather than looking at overall traffic, observe it by page type, search intent, and click depth. If an optimization touches only product pages, the analysis should focus on that segment, not the entire site.
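To illustrate segment-level analysis, the sketch below aggregates clicks per page type for a "before" and an "after" period and reports the relative change for each segment. The input format (tuples of page type, period label, clicks) is a hypothetical export shape; in practice you would map URLs to page types yourself, for instance through URL patterns.

```python
from collections import defaultdict


def change_by_segment(rows):
    """Relative click change per page type.

    rows: iterable of (page_type, period, clicks), with period "before" or "after".
    Segments with no "before" clicks are skipped to avoid dividing by zero.
    """
    totals = defaultdict(lambda: {"before": 0, "after": 0})
    for page_type, period, clicks in rows:
        totals[page_type][period] += clicks
    return {
        segment: (t["after"] - t["before"]) / t["before"]
        for segment, t in totals.items()
        if t["before"] > 0
    }
```

A +20% move on product pages next to a flat blog segment is far more informative than the blended site-wide figure.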

Finally, accept that some conclusions will be probabilistic, not certain. "It's likely that X contributed to Y" is a more honest formulation than "X caused Y." And with large traffic volumes, this approach remains solid enough to guide decisions.
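One way to express a conclusion probabilistically is a confidence interval on the before/after change. The sketch below uses a simple percentile bootstrap over daily click counts; the resample count, the 95% level, and the fixed seed are illustrative assumptions. An interval that excludes zero suggests the shift is unlikely to be noise, but it says nothing about which optimization caused it.

```python
import random
from statistics import mean


def bootstrap_change_ci(before, after, n_resamples=2000, level=0.95, seed=42):
    """Percentile-bootstrap interval for the relative change in mean daily clicks."""
    rng = random.Random(seed)  # fixed seed so the report is reproducible
    changes = []
    for _ in range(n_resamples):
        # Resample each period's days with replacement and recompute the change.
        b = mean(rng.choices(before, k=len(before)))
        a = mean(rng.choices(after, k=len(after)))
        changes.append((a - b) / b)
    changes.sort()
    tail = (1 - level) / 2
    low = changes[round(tail * n_resamples)]
    high = changes[round((1 - tail) * n_resamples) - 1]
    return low, high
```

Caveat: daily traffic is autocorrelated and seasonal, and this sketch resamples days independently, so the interval is likely too narrow; resampling whole weeks (a block bootstrap) would be a more conservative variant.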

What errors should you avoid in client reporting?

Don't oversell attribution. If you've deployed five optimizations in three weeks and traffic rises 15%, don't claim it's solely due to title tag adjustments. That's dishonest and weakens SEO's credibility.

Another trap: automatically treating a neutral result as a failure. On a migration, maintaining 98% of organic traffic is solid performance. You need to explain it that way to the client, with transparency.
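To make "net neutral" concrete in a report, agree on a tolerance band before the migration and classify the outcome against it. A minimal sketch, assuming organic clicks measured over comparable pre- and post-migration periods; the ±5% band is a hypothetical value to be negotiated with the client, not a Google figure.

```python
def migration_outcome(clicks_before: float, clicks_after: float, tolerance: float = 0.05) -> str:
    """Classify a migration against a 'net neutral' target.

    tolerance is a hypothetical band (here +/-5%) agreed with the client
    before the migration; it is not a threshold published by Google.
    """
    retention = clicks_after / clicks_before
    if retention < 1 - tolerance:
        return f"loss ({retention:.0%} of baseline retained)"
    if retention > 1 + tolerance:
        return f"growth ({retention:.0%} of baseline)"
    return f"net neutral ({retention:.0%} of baseline retained)"
```

With the 98% example above, `migration_outcome(10_000, 9_800)` returns `"net neutral (98% of baseline retained)"`, which is the framing to present to the client.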

What can you concretely do to improve attribution despite these challenges?

- Maintain a precise SEO changelog with dates, impacted pages, and nature of modifications

- Segment analysis by page type rather than reasoning about the whole site

- Use control groups when possible (unmodified pages for comparison)

- Cross-reference multiple data sources: Search Console, Analytics, ranking data, crawl data

- On migrations, define "net neutral" as the primary objective and communicate clearly about it

- Accept that some conclusions are probabilistic, not causal, and state it that way in reporting

- Educate clients and stakeholders about this reality so they can adjust their expectations
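Control groups can be turned into a single number with a difference-in-differences comparison: subtract the control pages' relative change from the modified pages' relative change, so that effects shared by both groups largely cancel out. A minimal sketch, assuming the two groups are comparable and would have moved in parallel without the change; the figures in the usage note are made up.

```python
def diff_in_diff(treated_before: float, treated_after: float,
                 control_before: float, control_after: float) -> float:
    """Relative difference-in-differences between modified and control pages.

    Effects shared by both groups (seasonality, core updates) show up in both
    relative changes and largely cancel; what remains is the change specific
    to the modified pages, under the parallel-trends assumption.
    """
    treated_change = (treated_after - treated_before) / treated_before
    control_change = (control_after - control_before) / control_before
    return treated_change - control_change
```

For example, `diff_in_diff(1000, 1200, 2000, 2100)` gives about 0.15: modified pages rose 20% while control pages rose 5%, so roughly 15 points are consistent with the change itself rather than with the background trend.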

❓ Frequently Asked Questions

Can you really measure the impact of a single SEO optimization?

What is a "net neutral" objective in SEO?

How do you convince a client that a migration with no traffic loss is a success?

Should you space out optimizations to better measure their impact?

How should you segment the analysis to improve attribution?

🎥 From the same video (11)

Other SEO insights extracted from this same Google Search Central video · published on 20/10/2022

