Official statement

Other statements from this video 11 ▾

- □ La documentation Google Search Central bénéficie-t-elle d'un avantage dans les résultats de recherche ?

- □ Faut-il vraiment consulter Search Console tous les jours ?

- □ Faut-il vraiment toujours utiliser une redirection 301 pour un changement d'URL permanent ?

- □ Faut-il vraiment corriger tous les 404 de votre site ?

- □ Faut-il vraiment automatiser les balises hreflang pour gérer le multilingue ?

- □ Les titres et meta descriptions influencent-ils vraiment le SEO au-delà du CTR ?

- □ Google réécrit-il vraiment vos balises title comme bon lui semble ?

- □ Faut-il vraiment utiliser des liens nofollow dans vos études de cas clients ?

- □ Comment convaincre une équipe de développement de prioriser les Core Web Vitals ?

- □ Les FAQ en structured data sont-elles vraiment efficaces pour générer des rich snippets ?

- □ Comment mesurer le succès SEO quand vous modifiez plusieurs éléments en même temps ?

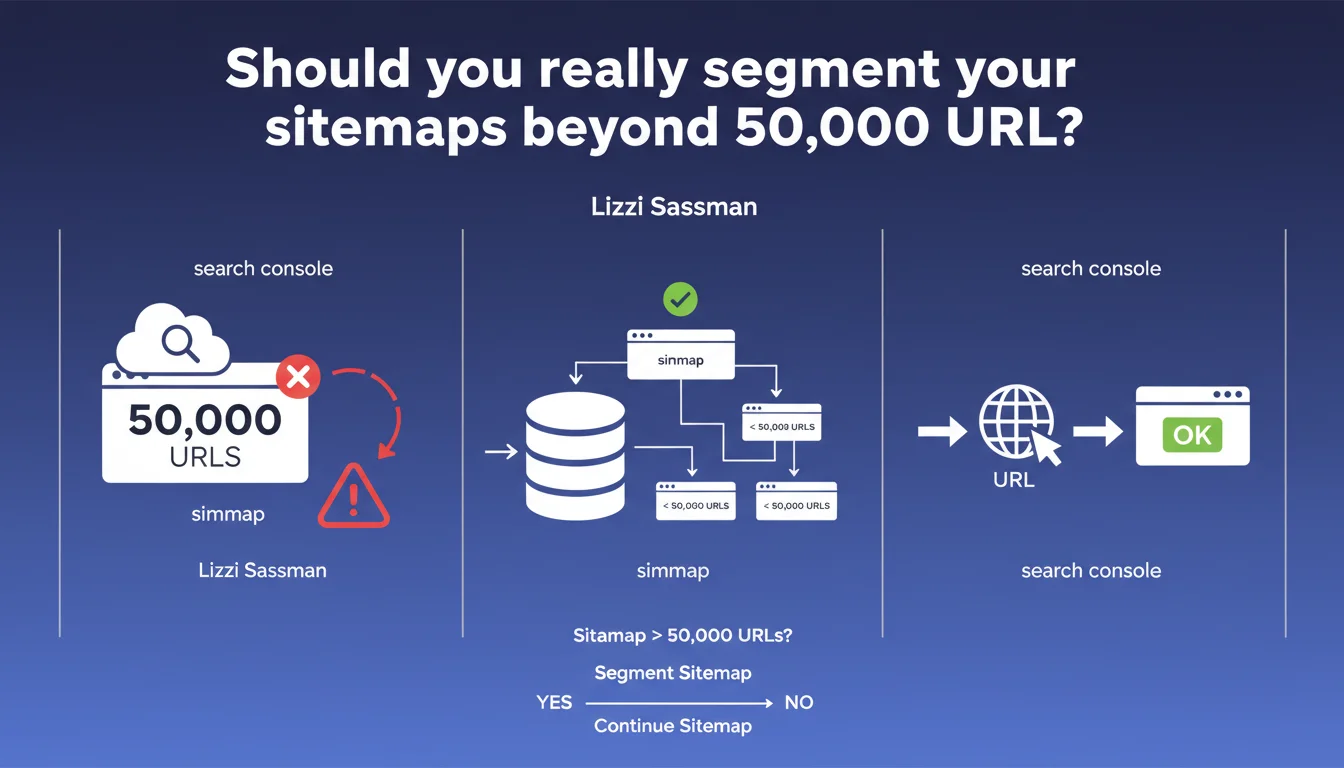

Google generates errors in Search Console for sitemaps exceeding 50,000 URLs. Lizzi Sassman recommends segmentation while clarifying that a site can technically function without a sitemap. The limit is not new, but the alert reminds us that it remains strictly enforced on the reporting side.

What you need to understand

Why does this 50,000 URL limit exist?

The sitemap protocol has defined a technical limit of 50,000 URLs per file since its inception. This constraint is not arbitrary: it aims to guarantee efficient processing by search engines, which must parse and validate these files regularly.

Beyond this limit, Search Console reports an explicit error. This does not mean your site will be penalized or deindexed, but the oversized file may not be processed correctly.

Is a sitemap really essential to get indexed?

No. As Lizzi Sassman points out, a site can function without a sitemap. Google discovers and crawls pages through internal links, backlinks, and other discovery signals.

The sitemap mainly serves to accelerate the discovery of new pages and to signal content that might escape standard crawling (deep pages, poorly linked pages, new sections). For a site with solid internal linking and a clear structure, the impact of a misconfigured sitemap remains limited.

What actually happens if you exceed the limit?

Search Console displays an error, but Google does not stop crawling the site as a result. The engine may ignore URLs beyond the threshold or process the file only partially; the exact behavior is not documented.

The main risk? A loss of visibility on the indexation status of certain pages. You no longer know which URLs were submitted, which are ignored, and why some do not appear in the index.

- The 50,000 URL limit per sitemap file is an official technical constraint

- Exceeding this limit generates an error in Search Console, without blocking the site's overall indexation

- A sitemap is not required to be crawled and indexed by Google

- Segmentation allows better tracking and more reliable validation of submitted URLs

SEO Expert opinion

Is this limit really enforced strictly?

Yes, and this matches what has been observed in practice for years: oversized sitemaps consistently generate an error in Search Console. The supported way to submit more URLs is to split them across multiple files referenced from a sitemap index, but the per-file limit itself remains firm.

What is less clear is how Google handles URLs beyond the threshold. Are they completely ignored? Partially processed? Google does not document this behavior in detail. [To verify]: the actual impact on indexation likely varies depending on the site's authority and crawl frequency.

Should you really worry if your site functions without a sitemap?

Let's be honest: many high-performing sites have never had a sitemap. If your internal architecture is clean, your linking structure coherent, and you have a reasonable volume of backlinks, the sitemap is just an accelerator.

However, on large sites (e-commerce, aggregators, media), the sitemap becomes a management tool. It allows you to prioritize crawling, quickly signal new URLs, and especially monitor indexation via Search Console. Neglecting its configuration means depriving yourself of a valuable diagnostic lever.

Is segmentation enough to guarantee optimal indexation?

No. Segmenting a sitemap into 50,000 URL chunks only solves a technical problem. If your pages are thin on content, duplicated, or poorly linked, they will not be indexed regardless.

The sitemap is not an automatic green light for indexation — it is a suggestion. Google then decides based on its own criteria: quality, relevance, available crawl budget. A clean sitemap does not index your pages: it makes them visible to crawling.

Practical impact and recommendations

How do you effectively segment your sitemaps?

The classic method: create a sitemap index file that references multiple sub-files, each containing a maximum of 50,000 URLs. This architecture is part of the sitemap protocol and is supported by the major search engines.

Prioritize thematic or typological segmentation: one sitemap for products, one for categories, one for editorial content. This facilitates diagnosis in case of error and allows you to quickly identify which section is problematic.
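The index-plus-sub-files layout described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names, the `sitemap-<section>-<n>.xml` naming scheme, and the `example.com` URLs are assumptions made for the example, and section names are assumed to be URL-safe slugs.

```python
from xml.sax.saxutils import escape

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XML_HEADER = '<?xml version="1.0" encoding="UTF-8"?>\n'
MAX_URLS_PER_FILE = 50_000  # per-file limit defined by the sitemap protocol


def chunk(urls, size):
    """Yield consecutive slices of at most `size` URLs."""
    for start in range(0, len(urls), size):
        yield urls[start:start + size]


def render_urlset(urls):
    """Render one sub-sitemap (<urlset>) as an XML string."""
    entries = "".join(f"  <url><loc>{escape(u)}</loc></url>\n" for u in urls)
    return f'{XML_HEADER}<urlset xmlns="{SITEMAP_NS}">\n{entries}</urlset>\n'


def render_index(sitemap_urls):
    """Render the sitemap index (<sitemapindex>) as an XML string."""
    entries = "".join(
        f"  <sitemap><loc>{escape(u)}</loc></sitemap>\n" for u in sitemap_urls
    )
    return f'{XML_HEADER}<sitemapindex xmlns="{SITEMAP_NS}">\n{entries}</sitemapindex>\n'


def build_sitemaps(urls_by_type, base_url, max_urls=MAX_URLS_PER_FILE):
    """Return {filename: xml} for each sub-sitemap plus the index file.

    `urls_by_type` maps a section name (hypothetical: "products", "blog")
    to its list of URLs, so each section gets its own file(s).
    """
    files = {}
    for section, urls in sorted(urls_by_type.items()):
        for n, part in enumerate(chunk(urls, max_urls), start=1):
            files[f"sitemap-{section}-{n}.xml"] = render_urlset(part)
    # The index references every sub-file by its absolute URL.
    root = base_url.rstrip("/")
    index_locs = [f"{root}/{name}" for name in files]
    files["sitemap-index.xml"] = render_index(index_locs)
    return files


if __name__ == "__main__":
    demo = {
        "products": [f"https://example.com/p/{i}" for i in range(120_000)],
        "blog": ["https://example.com/blog/hello"],
    }
    for name in sorted(build_sitemaps(demo, "https://example.com")):
        print(name)
```

With 120,000 product URLs and the default limit, the products section is split into three files, and the index lists them alongside the single blog file. Passing a lower `max_urls` leaves headroom below the 50,000 ceiling.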

What errors should you avoid when implementing?

Avoid dynamically generated files whose URL count hovers around the limit. If a file oscillates between 48,000 and 52,000 URLs depending on updates, you risk intermittent errors that are difficult to trace. Keep each file comfortably below the ceiling instead.

Another pitfall: including URLs blocked by robots.txt, or redirects. Google flags these inconsistencies in Search Console, which pollutes your reports and complicates tracking.
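One way to keep robots.txt-blocked URLs out of a sitemap is to filter the candidate list before writing the file. The sketch below uses Python's standard-library `urllib.robotparser`; the function name `filter_crawlable` and the sample rules are assumptions for illustration. Note that the standard-library parser does not reproduce Google's matching rules exactly, so treat the result as a first-pass check rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser


def filter_crawlable(urls, robots_txt, user_agent="Googlebot"):
    """Keep only the URLs that the given robots.txt text allows.

    `robots_txt` is the raw content of the file, passed as a string so the
    example needs no network access.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if parser.can_fetch(user_agent, u)]


if __name__ == "__main__":
    robots = "User-agent: *\nDisallow: /cart/\n"
    candidates = [
        "https://example.com/p/1",
        "https://example.com/cart/checkout",
    ]
    print(filter_crawlable(candidates, robots))
```

Redirects and noindex pages cannot be detected from robots.txt alone; they would need a separate check against the pages' actual responses.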

- Verify the number of URLs per sitemap using an XML parser or audit script

- Create a sitemap index file if you exceed 50,000 URLs total

- Segment by type (products, categories, blog) rather than by arbitrary chunk

- Exclude non-indexable URLs (blocked by robots.txt, marked noindex, or redirecting)

- Test the XML validity of each file before submission

- Monitor Search Console errors after each update
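The first item on this checklist, counting URLs per file, can be done with a short audit script. The following is a minimal sketch using Python's built-in `xml.etree.ElementTree`; the function names, the status labels, and the 90% `headroom` threshold are arbitrary choices for the example, not values taken from Google.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"
MAX_URLS_PER_FILE = 50_000  # per-file limit defined by the sitemap protocol


def count_urls(xml_bytes):
    """Count the <url> entries of one sitemap file.

    Raises xml.etree.ElementTree.ParseError if the XML is not well-formed,
    which doubles as a basic validity check.
    """
    root = ET.fromstring(xml_bytes)
    return len(root.findall(f"{SITEMAP_NS}url"))


def audit(xml_bytes, limit=MAX_URLS_PER_FILE, headroom=0.9):
    """Return (count, status), where status is "ok", "near_limit" or "over_limit"."""
    count = count_urls(xml_bytes)
    if count > limit:
        status = "over_limit"
    elif count > limit * headroom:
        status = "near_limit"  # close enough that a routine update could tip it over
    else:
        status = "ok"
    return count, status


if __name__ == "__main__":
    sample = (
        b'<?xml version="1.0" encoding="UTF-8"?>'
        b'<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
        b"<url><loc>https://example.com/a</loc></url>"
        b"<url><loc>https://example.com/b</loc></url>"
        b"</urlset>"
    )
    print(audit(sample))
```

In practice the bytes would come from reading each generated file in binary mode. The "near_limit" status is meant to catch files that fluctuate around the ceiling before they start producing intermittent errors.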

What should you do if your current architecture far exceeds this limit?

Start with an audit of your indexable URLs. How many are actually strategic? Eliminate zombie pages, duplicates, and unnecessary parameters. For example, a site with 200,000 URLs may only need to submit 80,000, which fits in two sitemap files.

Next, automate generation and segmentation. A hand-maintained static sitemap quickly becomes unmanageable once a site reaches six-figure URL counts. Integrate the logic directly into your CMS or tech stack.

❓ Frequently Asked Questions

What happens if I exceed 50,000 URLs in a sitemap?

Can a site without a sitemap be properly indexed?

How do I create a sitemap index file?

Should I segment in chunks of 50,000 URLs or by type?

Can a misconfigured sitemap harm my indexation?

Other SEO insights extracted from this same Google Search Central video · published on 20/10/2022
