Official statement

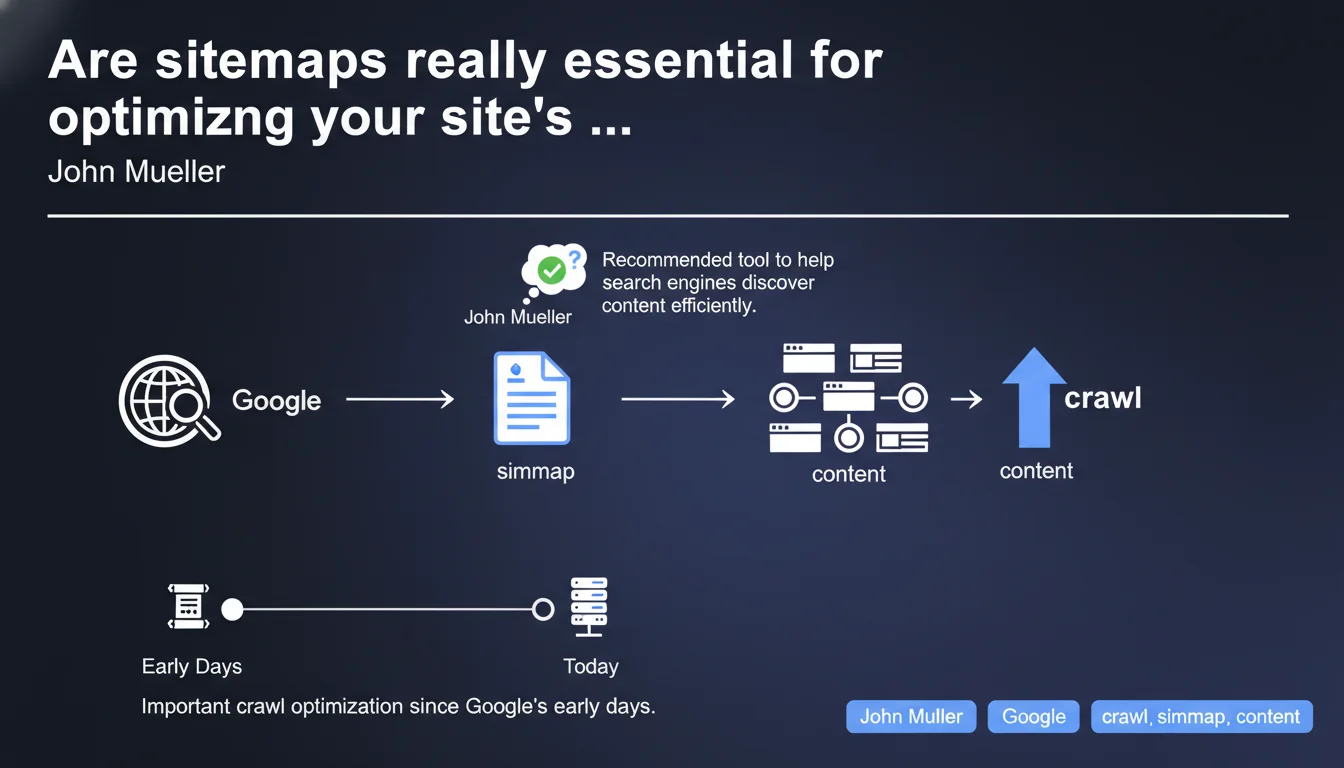

Google confirms that sitemaps remain a recommended tool for facilitating content discovery, despite sometimes being used incorrectly. John Mueller emphasizes their historical importance while clarifying that they constitute a crawl optimization, not a technical requirement. The key issue is configuring them properly to gain genuine benefit.

What you need to understand

Why is Google still pushing sitemaps in 2025?

Google has supported XML sitemaps since 2005, and they remain one of the few crawl optimizations the search engine officially recommends. Unlike practices that fall into myth or experimentation territory, sitemaps are an explicitly validated lever.

This statement comes at a time when some SEOs question their utility — especially for small, well-structured sites. Google is reminding us here that even if the tool can be misused, its core principle remains valid and relevant for facilitating content discovery, particularly on medium to large sites.

What does Google mean by "incorrect use" of sitemaps?

Mueller doesn't detail the common errors, but the problematic practices are easy to identify in the field. The main pitfalls are sitemaps bloated with non-indexable URLs or duplicates, orphaned pages listed with no internal links pointing to them, and stale sitemaps that are never updated.

A poorly configured sitemap not only cancels out its own benefit but also sends confusing signals to Google about your site's actual structure. The engine then crawls unnecessary resources, wasting crawl budget instead of focusing it on the pages that matter.

Do sitemaps remain relevant for all types of sites?

For a 20-page blog with clean internal linking, the sitemap adds little value: Google will naturally discover the content through internal and external links. However, for an e-commerce site with thousands of products, a news site with frequent publishing, or any site with deeply nested pages, the sitemap becomes far more valuable, even though it is never a strict requirement.

It also serves as a safety net for recent or isolated content that natural crawling might take time to reach. It's a question of discovery speed and crawl resource prioritization.

- Sitemaps remain an official optimization recommended by Google

- Their usefulness depends heavily on site size and structure

- Poor configuration can harm crawl rather than improve it

- They primarily serve to accelerate content discovery and prioritize important URLs

SEO Expert opinion

Is this statement consistent with field observations?

Absolutely. Sitemaps continue to play a measurable role on medium and large-scale sites. During crawl audits, we regularly observe that URLs listed in a well-configured XML sitemap are crawled faster than those discovered solely through internal links — especially if they're deep in the site hierarchy.

However — and this is where Mueller remains vague — the actual impact varies enormously depending on context. On an already well-crawled site with solid linking structure, adding a sitemap won't produce noticeable changes. On a site with structural issues, the sitemap becomes a temporary fix, but doesn't address the underlying problem.

What nuances should we add to this recommendation?

Google doesn't specify the quality criteria for an effective sitemap. Yet many are auto-generated without validation, producing files full of errors: noindex URLs, 301 redirects, 404 codes, pages blocked by robots.txt.

A polluted sitemap becomes counterproductive. Google wastes time crawling unnecessary URLs, which reduces the budget available for truly important content. [To verify]: Mueller provides no concrete metrics to assess whether a sitemap is well-configured — Google Search Console data would be needed to validate actual effectiveness.

In what cases does this rule not apply?

For very small sites (under 50 pages) with flat structure and good internal linking, the sitemap adds marginal value. Google will naturally crawl all content without issue.

Similarly, if a site faces deep crawl problems — such as non-existent internal linking, thousands of orphaned pages, or a crawl budget saturated by unnecessary facets — the sitemap will only mask the symptom. You must first fix site architecture before expecting meaningful sitemap effects.

Practical impact and recommendations

What should you concretely do to optimize your sitemap?

First, clean it up. An effective sitemap contains only indexable URLs: HTTP 200 codes, no noindex tags, no redirects, accessible to crawlers. Each URL must be strategically relevant for SEO — no technical pages, unnecessary filters, or duplicate content.
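
The cleanup rule above can be sketched as a small filter over a crawl export. This is a minimal illustration, not a reference implementation: the `CrawledPage` record and its field names are hypothetical, and the canonical check is an added assumption standing in for "duplicate content".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CrawledPage:
    """One row from a site crawl export (hypothetical field names)."""
    url: str
    status: int                      # HTTP status code observed by the crawler
    noindex: bool = False            # meta robots or X-Robots-Tag contains noindex
    blocked_by_robots: bool = False  # URL is disallowed in robots.txt
    canonical: Optional[str] = None  # canonical URL declared by the page, if any


def exclusion_reason(page: CrawledPage) -> Optional[str]:
    """Return why a page should stay out of the sitemap, or None if it qualifies."""
    if 300 <= page.status < 400:
        return "redirect"
    if page.status != 200:
        return f"http {page.status}"
    if page.noindex:
        return "noindex"
    if page.blocked_by_robots:
        return "blocked by robots.txt"
    # Assumption: a page pointing its canonical elsewhere is treated as a duplicate.
    if page.canonical and page.canonical != page.url:
        return "canonicalized elsewhere"
    return None


def split_pages(pages):
    """Separate sitemap-eligible URLs from rejected ones (with reasons)."""
    keep, rejected = [], {}
    for page in pages:
        reason = exclusion_reason(page)
        if reason is None:
            keep.append(page.url)
        else:
            rejected[page.url] = reason
    return keep, rejected
```

Keeping the rejection reason alongside each URL makes it easier to see whether the sitemap generator or the site itself needs fixing.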

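Next, structure it. For large sites, segmenting sitemaps by content type (products, categories, blog) allows better control of crawl priorities. Using the <lastmod> tag to signal recent updates helps Google prioritize fresh pages, provided the dates are accurate: Google states that it relies on <lastmod> only when the values are consistently and verifiably accurate.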
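
As a rough sketch of this segmentation, the snippet below groups pages by content type, writes a <lastmod> value for each URL, and references every file from a sitemap index. The file naming scheme, the `base_url` parameter, and the input tuple shape are assumptions made for illustration; the 50,000-URL cap per file comes from the sitemap protocol.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file limit in the sitemap protocol


def build_urlset(entries):
    """Build one <urlset> document from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in entries:
        node = ET.SubElement(urlset, "url")
        ET.SubElement(node, "loc").text = url
        ET.SubElement(node, "lastmod").text = last_modified.isoformat()
    return ET.tostring(urlset, encoding="unicode")


def build_segmented_sitemaps(pages, base_url):
    """Return {filename: xml} for per-type sitemaps plus a sitemap index.

    `pages` is an iterable of (content_type, url, last_modified_date) tuples.
    """
    by_type = defaultdict(list)
    for content_type, url, last_modified in pages:
        by_type[content_type].append((url, last_modified))

    files = {}
    for content_type, entries in sorted(by_type.items()):
        # Split oversized segments so no file exceeds the protocol limit.
        starts = range(0, len(entries), MAX_URLS_PER_FILE)
        for part, start in enumerate(starts, 1):
            chunk = entries[start:start + MAX_URLS_PER_FILE]
            files[f"sitemap-{content_type}-{part}.xml"] = build_urlset(chunk)

    # The index lists every segment file by its absolute URL.
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for name in files:
        node = ET.SubElement(index, "sitemap")
        ET.SubElement(node, "loc").text = f"{base_url}/{name}"
    files["sitemap-index.xml"] = ET.tostring(index, encoding="unicode")
    return files
```

The returned strings omit the XML declaration; a real generator would typically add it when writing each file to disk, and would take the last-modified date from the CMS or database rather than from the generation time.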

Finally, monitor it. Google Search Console provides detailed reports on sitemap status: URLs submitted vs URLs indexed, detected errors, warnings. A significant gap between submitted and indexed URLs signals a problem — either content quality or technical configuration.

What errors should you absolutely avoid?

Never include noindex URLs in a sitemap. It's a contradictory signal for Google: you're requesting indexation while blocking it. The same goes for pages blocked by robots.txt and for URLs that return 301/302 redirects.

Also avoid static sitemaps that are never updated. A sitemap listing removed or outdated URLs sends Google to dead ends and degrades its trust in your file. Automating generation and updates is essential for dynamic sites.
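
One way to catch the robots.txt contradiction before publishing is to test each sitemap URL against the site's rules. The sketch below uses Python's standard `urllib.robotparser`; the function name is hypothetical, and the standard-library parser does not reproduce Googlebot's wildcard or longest-match handling, so treat the result as a first-pass check only.

```python
from urllib.robotparser import RobotFileParser


def urls_blocked_by_robots(robots_txt, urls, user_agent="Googlebot"):
    """Return the sitemap URLs that the given robots.txt text disallows."""
    parser = RobotFileParser()
    # Parse the rules from a string so the check works without a network call.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

Any URL returned by this function should either be removed from the sitemap or unblocked in robots.txt, depending on which of the two signals reflects the real intent.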

Finally, don't confuse quantity with quality. A sitemap of 50,000 URLs where 80% don't deserve indexing is worse than a sitemap of 5,000 strategic URLs. Less, but better.

How can you verify your sitemap is correctly configured?

First step: submit the sitemap via Google Search Console and analyze the error reports. Google flags problematic URLs (404s, redirects, noindex, blocked by robots.txt). Fix these errors before resubmitting the file.

Second step: compare the number of URLs submitted with actually indexed URLs. A significant gap indicates either content quality issues or technical obstacles to indexation. Examining server logs reveals whether Google actually crawls your sitemap URLs.
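
The comparison in this second step can be approximated with a short script. It assumes access logs in Combined Log Format and matches Googlebot by user-agent string only, which can be spoofed; a stricter check would verify the requesting IP separately. The function names and the idea of expressing the gap as a ratio are illustrative choices, not something prescribed by Google.

```python
import re
from urllib.parse import urlsplit

# Combined Log Format: ... "GET /path HTTP/1.1" 200 1234 "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_paths(log_lines):
    """Collect request paths from lines whose user agent mentions Googlebot."""
    paths = set()
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            paths.add(match.group("path"))
    return paths


def uncrawled_sitemap_urls(sitemap_urls, log_lines):
    """Return sitemap URLs with no Googlebot request in the given logs."""
    crawled = googlebot_paths(log_lines)
    missing = []
    for url in sitemap_urls:
        parts = urlsplit(url)
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        if path not in crawled:
            missing.append(url)
    return missing


def indexing_gap(submitted, indexed):
    """Share of submitted URLs not reported as indexed (0.0 to 1.0)."""
    return 0.0 if submitted == 0 else (submitted - indexed) / submitted
```

For example, 1,000 submitted URLs with 750 indexed gives a gap of 0.25; what counts as "significant" depends on the site and is left to the auditor's judgment.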

Third step: regularly audit the XML file for inconsistencies — orphaned URLs without internal links, low-quality pages, duplicates. A clean sitemap reflects healthy architecture.
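
A minimal version of this audit, assuming the sitemap XML is already loaded as text and that a list of internally linked URLs is available from a separate site crawl (both inputs are assumptions), could look like this:

```python
import xml.etree.ElementTree as ET
from collections import Counter

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_locs(xml_text):
    """Extract every <loc> value from a <urlset> sitemap document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS) if loc.text]


def audit_sitemap(xml_text, internally_linked_urls):
    """Report duplicate entries and URLs that no internal link points to."""
    counts = Counter(sitemap_locs(xml_text))
    linked = set(internally_linked_urls)
    return {
        "duplicates": sorted(url for url, n in counts.items() if n > 1),
        "orphans": sorted(url for url in counts if url not in linked),
    }
```

Detecting low-quality pages is not covered here, since that judgment depends on editorial criteria rather than on the XML file itself.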

- List only indexable URLs (HTTP 200, no noindex, accessible to crawlers)

- Segment sitemaps by content type on large sites

- Use the <lastmod> tag to signal recent updates

- Automate sitemap generation and updates

- Monitor Google Search Console reports for errors

- Analyze the gap between submitted and indexed URLs

- Exclude URLs with low SEO value (filters, technical pages, duplicates)

❓ Frequently Asked Questions

Is a sitemap required to be indexed by Google?

What is the maximum size of an XML sitemap?

Should every page of a site be included in the sitemap?

How often should a sitemap be updated?

What should you do if Google doesn't index the URLs in your sitemap?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 08/08/2024
