Official statement

Other statements from this video 11 ▾

- □ Does intensive crawling really guarantee a high-quality website?

- □ Does forcing Google to crawl more pages actually boost your search rankings?

- □ Why does Google crawl some sites more frequently than others?

- □ Is Google Really Pushing the If-Modified-Since Header to Cut Crawl Costs?

- □ Do URL parameters really create an infinite crawl space for Google?

- □ Why do hashtags and URL anchors complicate Google's crawling process?

- □ Is Google really hiding the secret to faster indexing in your Crawl Stats?

- □ Is a slow server response time killing your crawl budget?

- □ Does Googlebot really crawl links sequentially like a user navigating from page to page?

- □ Should you really optimize crawl budget when Google has unlimited resources?

- □ Are sitemaps really essential for optimizing your site's crawl performance?

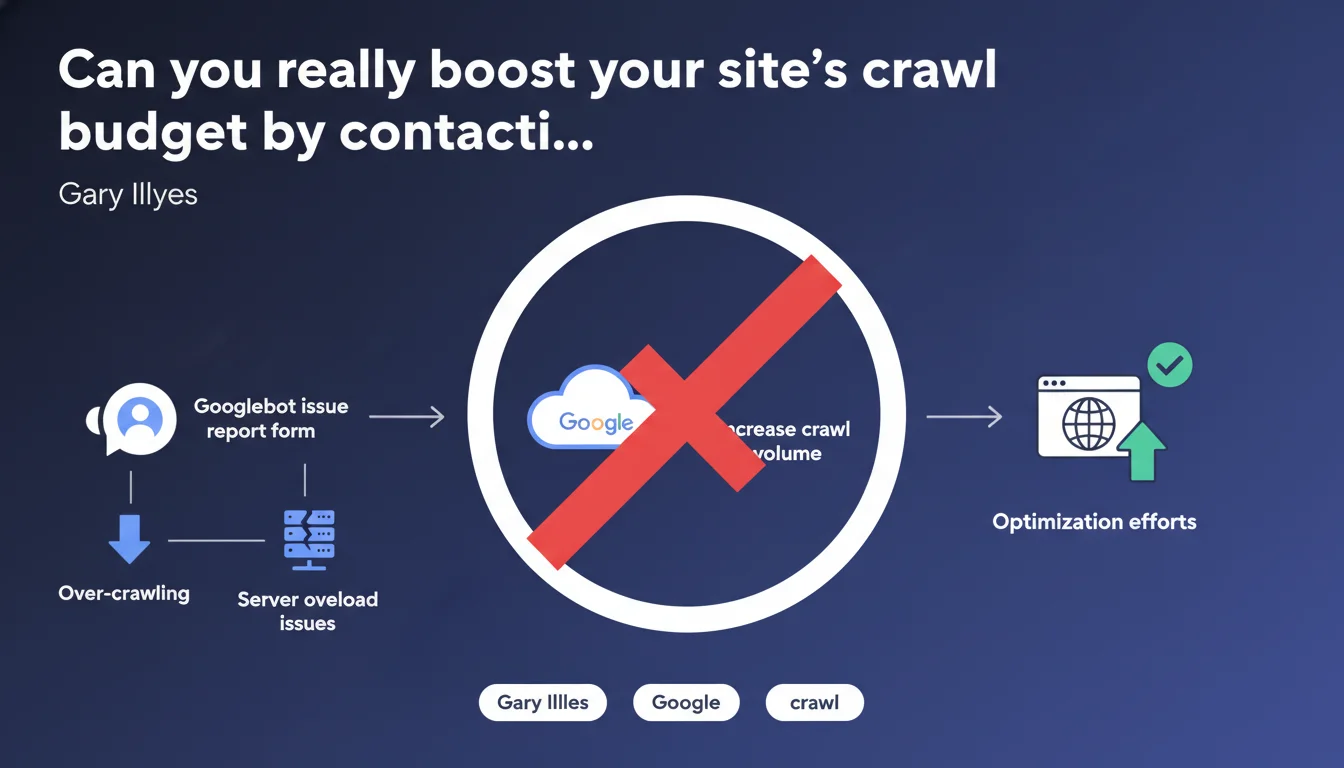

Google confirms that the Googlebot issue report form is not designed to increase crawl volume. This channel is exclusively reserved for reporting over-crawling or server overload issues. Any request to accelerate site exploration through this form will be ignored.

What you need to understand

What is the real purpose of the Googlebot report form?

The Googlebot issue report form exists to flag technical malfunctions — specifically when the bot crawls too aggressively and causes server slowdowns. It's a defensive tool, not an offensive one.

Concretely, this channel serves to throttle Googlebot when it becomes too resource-hungry, not to ask it to speed up. The distinction matters: Google offers no direct way for webmasters to negotiate a higher crawl budget through a form.

Why won't Google allow you to increase crawl on demand?

Allowing webmasters to manually boost their crawl budget would create a systemic imbalance. Crawl resources are finite — if everyone could request more, nobody would actually get more.

Google adjusts crawl budget based on algorithmic criteria: page popularity, update frequency, site technical health. Leaving this calculation in the hands of publishers would amount to surrendering control over resource distribution.

What are the practical implications of this limitation?

If you notice insufficient crawling, there's no way to call Mountain View and accelerate things. The only option: build solid technical foundations to organically earn a higher budget.

- Crawl budget isn't negotiated, it's earned through technical excellence and content freshness

- The Googlebot form is a brake, not an accelerator — save it for over-crawling emergencies

- Any fast indexing strategy must go through internal optimization, not through a request to Google

- The Indexing API remains the only official exception for specific content types (job postings, live videos)

SEO Expert opinion

Does this statement really reflect what happens in practice?

Fundamentally, yes — nobody in the industry has ever managed to sustainably increase their crawl budget by filling out this form. Field experience points in the same direction: Google doesn't process acceleration requests.

But let's be honest: the form's very existence fuels confusion. Many webmasters discover this channel and logically imagine they could use it both ways. Illyes' clarification was therefore necessary, even though it confirms what practitioners already knew.

What gray areas remain despite this statement?

Google remains vague about the exact criteria that determine crawl budget allocation. We know that server speed, page quality, and popularity play a role — but the precise weights? A mystery. [To verify]: the actual impact of certain signals like content refresh rate or crawl depth.

Another unclear point: what happens when you report legitimate over-crawling? Does Google reduce immediately, or does it apply an observation period? Processing delays vary based on reports — sometimes a few days, sometimes several weeks.

In what cases might this rule appear to be contradicted?

The Indexing API constitutes an official exception — it allows you to push certain content (job listings, livestreams) for rapid indexing. But this channel is reserved for specific content types, not open to everything.

Some publishers also report sudden crawl increases after fixing massive server errors or restructuring their site architecture. This isn't a response to a request, but a logical consequence: by making the site more crawlable, you naturally deserve more budget.

Practical impact and recommendations

What should you do if your site lacks crawl budget?

Forget the Googlebot form for solving this problem. Focus on technical optimization: reduce server response times, eliminate unnecessary pages from crawling via robots.txt, consolidate URLs by canonicalizing variations.

Monitor your server logs to identify crawl patterns — which sections are ignored, which ones monopolize the budget. Then redirect Googlebot toward your strategic pages by improving their accessibility and internal linking.

What mistakes should you absolutely avoid?

Don't waste time filling out the Googlebot report form to request more crawl. Not only is it ineffective, but it clogs a channel meant for genuine technical emergencies.

Also avoid submitting repeatedly through Search Console — forcing indexation URL by URL doesn't solve a structural crawl budget problem. If Google isn't exploring enough, it's because the site doesn't (yet) justify more resources.

How can you verify that your strategy is working?

Regularly analyze the crawl statistics report in Search Console. A gradual increase in pages crawled per day indicates you're moving in the right direction.

- Audit your server logs to map Googlebot's actual behavior

- Eliminate zombie pages (thin content, duplicates, unnecessary parameters) that dilute budget

- Optimize server response times — a fast site deserves more crawl

- Strengthen internal linking toward strategic, under-explored pages

- Use your robots.txt file to block sections without SEO value

- Monitor 5xx errors and timeouts — they heavily handicap crawl budget

- Don't count on the Googlebot form to accelerate anything

❓ Frequently Asked Questions

Peut-on utiliser l'API Indexing pour augmenter le crawl de n'importe quel contenu ?

Combien de temps faut-il pour qu'un signalement de sur-crawl soit pris en compte ?

Si je bloque des sections via robots.txt, le budget économisé est-il redistribué ailleurs sur mon site ?

Les soumissions d'URLs via Search Console consomment-elles du crawl budget ?

Un site avec un fort trafic a-t-il automatiquement un crawl budget plus élevé ?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 08/08/2024

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.