Official statement

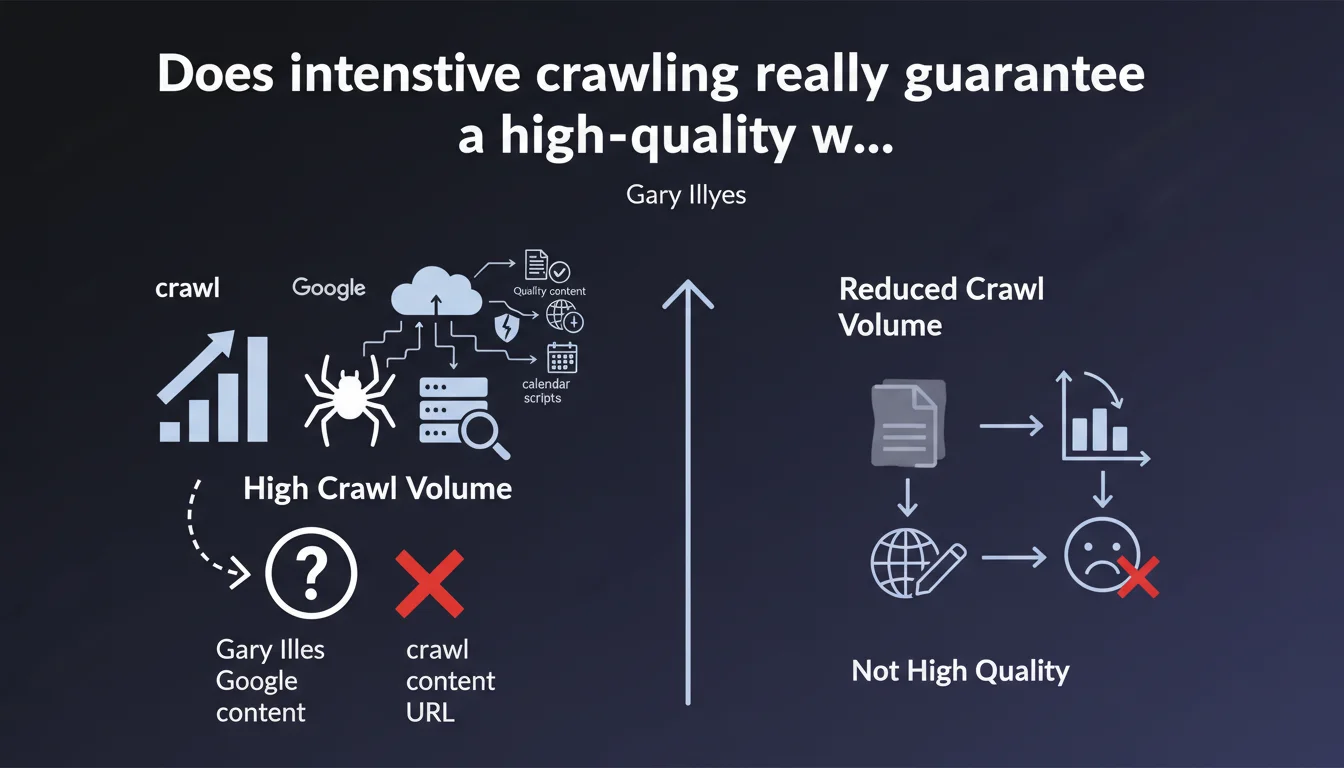

Google crawls a site more frequently for various reasons — quality content, but also hacks, new URLs, or automated scripts. A high crawl volume is therefore not a reliable signal of the quality perceived by Google. Conversely, reduced crawling can reveal weak content or a static site without recent modifications.

What you need to understand

Why does Google crawl some sites more than others?

Crawl volume depends on multiple technical and editorial factors. Google allocates crawl resources based on content freshness, update frequency, site popularity, and server capacity.

But be careful — intensive crawling can also result from negative events: a malware infection, a script generating infinite URLs (calendar, product facets), or poorly optimized architecture that forces Googlebot to explore thousands of useless pages.
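To make the "infinite URLs" point concrete, here is a minimal sketch that counts how many distinct URLs a single category page can expose once filters are combined. The facet names, their values, and the `example.com` URL are invented for illustration; real sites often have far more.

```python
from itertools import product
from urllib.parse import urlencode

# Hypothetical facets for a single category page (illustrative values only).
FACETS = {
    "color": ["red", "blue", "green", "black"],
    "size": ["s", "m", "l", "xl"],
    "sort": ["price", "newest", "rating"],
}

def facet_urls(base_url, facets):
    """Yield every URL obtained by choosing at most one value per facet."""
    names = list(facets)
    # None means "facet not applied", so each facet has len(values) + 1 states.
    choices = [[None] + list(facets[name]) for name in names]
    for combo in product(*choices):
        params = [(n, v) for n, v in zip(names, combo) if v is not None]
        yield base_url + ("?" + urlencode(params) if params else "")

urls = list(facet_urls("https://example.com/shoes", FACETS))
print(len(urls))  # 5 * 5 * 4 = 100 crawlable variants of one page
```

Three small facets already turn one page into 100 URLs. Adding a fourth facet, or allowing several values per facet, multiplies the count again, which is how a crawl spike can appear without any new content.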

Does high crawl volume mean my site is well-rated by Google?

No. This is precisely what Gary Illyes wants to clarify. Crawl volume is not an indicator of quality in the eyes of the algorithm. Google can crawl a compromised or misconfigured site massively without judging it relevant to users.

Conversely, a stable site with few modifications may see its crawl decrease — which does not necessarily reflect a penalty, but simply the absence of new content to index.

What does reduced crawl really reveal?

Low crawl volume can indicate low-quality content, orphaned pages, weak internal linking, or a site that never changes. Google economizes its resources: if nothing changes, there is little reason to recrawl.

But reduced crawl can also be normal for a 10-page brochure site updated quarterly. It all depends on the business context.

- Crawl volume ≠ quality perceived by Google

- High crawl volume can result from a hack, infinite URLs, or an automated calendar

- Low crawl volume may signal outdated or rarely modified content

- Business context and site architecture are decisive

- Google optimizes crawl resources based on freshness and popularity

SEO Expert opinion

Does this statement contradict field-observed practices?

Not really. In the field, we indeed observe that infected or misconfigured sites generate massive crawl spikes — without improving their visibility. Server logs show that Googlebot can explore thousands of unnecessary faceted or paginated pages.

On the other hand, the statement remains evasive on one crucial point: what is the optimal crawl level for a given site? Gary Illyes provides no figures and no diagnostic method. Without concrete benchmarks, it is difficult to act on the statement.

What nuances should be added to this claim?

The statement is correct in its logic, but it lacks granularity. A 50,000-product e-commerce site does not have the same challenges as a 200-article blog. Crawl budget remains a strategic lever for large sites — even if Google regularly downplays its importance.

Another nuance: high crawl can be an indirect signal of quality if combined with other indicators (click-through rate, session duration, natural backlinks). Isolated, it means nothing. Combined, it can strengthen a diagnosis.

In what cases does this rule not apply?

For news sites or UGC (User Generated Content) platforms with high volume, intensive crawl often correlates with strong editorial activity — thus indirectly with quality. Google must keep pace with publishing frequency.

But even there, a crawl spike can reveal a problem: thousands of automatically generated spam profiles, indexed toxic comments, or a poorly secured API creating random URLs. Server log diagnosis becomes essential.

Practical impact and recommendations

What should you do concretely to optimize your crawl?

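First, analyze server logs to understand what Googlebot actually explores. Identify URLs crawled at high frequency that add no value (filters, session IDs, tracking parameters) and block them via robots.txt. A noindex directive keeps a page out of the index but does not stop Googlebot from crawling it, and Googlebot cannot see a noindex on a URL that robots.txt blocks, so pick one mechanism per URL.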
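The log analysis described above can be sketched as follows. This is a minimal example that assumes access logs in the common "combined" format. The sample lines, IP address, and paths are made up, and matching on the user-agent string alone is a simplification.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Matches the request, status and user agent of a combined-format log line.
LINE_RE = re.compile(
    r'"(?:GET|HEAD) (?P<url>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_hits(lines):
    """Count Googlebot hits per path, split into clean and parameterized URLs."""
    clean, parameterized = Counter(), Counter()
    for line in lines:
        match = LINE_RE.search(line)
        # User-agent matching only; a real audit should also verify the IP,
        # because the Googlebot string is easy to spoof.
        if not match or "Googlebot" not in match["agent"]:
            continue
        parts = urlsplit(match["url"])
        (parameterized if parts.query else clean)[parts.path] += 1
    return clean, parameterized

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

def sample_line(url, agent):
    """Build one illustrative combined-format log line."""
    return (f'66.249.66.1 - - [08/Aug/2024:10:00:00 +0000] '
            f'"GET {url} HTTP/1.1" 200 5120 "-" "{agent}"')

SAMPLE = [
    sample_line("/shoes", GOOGLEBOT_UA),
    sample_line("/shoes?color=red&sessionid=abc", GOOGLEBOT_UA),
    sample_line("/shoes?color=blue", GOOGLEBOT_UA),
    sample_line("/shoes", "Mozilla/5.0 (X11; Linux x86_64) Firefox/128.0"),
]

clean, parameterized = googlebot_hits(SAMPLE)
print("clean:", clean.most_common(5))
print("parameterized:", parameterized.most_common(5))
```

A path that ranks high in the parameterized counter but low in the clean one is a candidate for crawl waste and worth a closer look before deciding what to block.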

Next, prioritize strategic pages in your XML sitemap. Google does not guarantee it will crawl everything, but you can guide its resources to what matters: active product pages, recent articles, main categories.
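As an illustration, a sitemap restricted to strategic pages can be generated with Python's standard library. This is a sketch, not a complete generator: the URLs and dates are placeholders, and `build_sitemap` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(entries):
    """Return sitemap XML as a string from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. 2024-08-08
    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")

# Placeholder entries: only pages worth crawling are listed.
STRATEGIC_PAGES = [
    ("https://example.com/", "2024-08-08"),
    ("https://example.com/shoes", "2024-08-07"),
    ("https://example.com/blog/crawl-budget", "2024-08-01"),
]

sitemap_xml = build_sitemap(STRATEGIC_PAGES)
print(sitemap_xml)
```

The sketch deliberately leaves out filtered, session, and tracking URLs. Keeping `lastmod` accurate matters more than listing every page, since the point is to steer crawling toward what changed.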

Finally, monitor loading speed and server capacity. A slow site hinders crawling — Google slows down to avoid overloading your resources. Optimize TTFB (Time To First Byte) and enable Gzip or Brotli compression.

What mistakes must you absolutely avoid?

Do not block critical resources (CSS, JS, images) in robots.txt, even by accident. Google needs these files to render the page; if they are blocked, it may evaluate a broken version of your mobile layout, which can hurt indexing.
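One way to catch this mistake before deploying is to test critical resource URLs against the robots.txt rules. The sketch below uses Python's standard `urllib.robotparser`; the rules and URLs are invented. Note that this parser does plain prefix matching and does not implement the `*` and `$` wildcard patterns that Google supports, so it is only a first-pass check for simple rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt in which /assets/ was blocked by mistake.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /assets/
"""

# Hypothetical resources the pages need in order to render.
CRITICAL_RESOURCES = [
    "https://example.com/assets/app.css",
    "https://example.com/assets/app.js",
    "https://example.com/images/logo.png",
]

def blocked_resources(robots_txt, urls, agent="Googlebot"):
    """Return the URLs that the given robots.txt forbids for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]

blocked = blocked_resources(ROBOTS_TXT, CRITICAL_RESOURCES)
print(blocked)
```

Here the stylesheet and the script are reported as blocked while the logo is not, which points to the `Disallow: /assets/` line as the rule to fix.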

Avoid redirect chains (A → B → C → D). Each hop consumes crawl budget, and long chains risk Googlebot abandoning the chain before it reaches the final URL. Redirect A directly to D.
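Collapsing a chain so that every source points straight at its final destination can be sketched like this. The redirect map is a hypothetical input, for example one exported from your server configuration; `flatten_redirects` is an illustrative helper, not part of any tool.

```python
def flatten_redirects(redirects):
    """Map every redirecting URL straight to its final destination.

    `redirects` is a dict of source path -> target path.
    A redirect loop raises ValueError instead of running forever.
    """
    flattened = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until the target no longer redirects anywhere.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flattened[source] = target
    return flattened

CHAIN = {"/a": "/b", "/b": "/c", "/c": "/d"}
print(flatten_redirects(CHAIN))  # {'/a': '/d', '/b': '/d', '/c': '/d'}
```

The output can be used to rewrite the redirect rules so that each old URL needs a single hop.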

Do not leave orphaned pages or duplicate content lying around. Google wastes time crawling unnecessary variants instead of your priority pages.

- Analyze server logs to identify crawl waste

- Block unnecessary URLs (filters, tracking, sessions) via robots.txt

- Prioritize strategic pages in the XML sitemap

- Optimize server speed (TTFB, compression, cache)

- Fix redirect chains

- Eliminate duplicate content and orphaned pages

- Allow crawl of critical resources (CSS, JS)

How do you verify that your site is being crawled correctly?

Log into Google Search Console and check the Crawl stats report (under Settings). Look at trends: stable crawling is not alarming if your site changes little. A sudden drop deserves investigation (server errors, an accidentally modified robots.txt).
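If you export daily crawl counts (from Search Console or from your own logs), sudden changes can be flagged automatically. The sketch below compares each day with the median of the preceding week. The 7-day window and the factor of 3 are arbitrary assumptions, not thresholds published by Google, and the sample numbers are invented.

```python
from statistics import median

def crawl_anomalies(daily_hits, window=7, factor=3.0):
    """Flag days whose hit count deviates sharply from the trailing median.

    `daily_hits` is a chronological list of (date_string, hit_count) pairs.
    Returns (date, kind, hits, baseline) tuples, kind being "spike" or "drop".
    """
    anomalies = []
    for i in range(window, len(daily_hits)):
        day, hits = daily_hits[i]
        baseline = median(count for _, count in daily_hits[i - window:i])
        if baseline == 0:
            continue  # no meaningful baseline to compare against
        if hits >= baseline * factor:
            anomalies.append((day, "spike", hits, baseline))
        elif hits <= baseline / factor:
            anomalies.append((day, "drop", hits, baseline))
    return anomalies

# Invented daily Googlebot request counts.
DAILY_HITS = [
    ("2024-08-01", 1000), ("2024-08-02", 980), ("2024-08-03", 1020),
    ("2024-08-04", 1010), ("2024-08-05", 990), ("2024-08-06", 1005),
    ("2024-08-07", 995), ("2024-08-08", 1015), ("2024-08-09", 5200),
    ("2024-08-10", 1000), ("2024-08-11", 210),
]

for day, kind, hits, baseline in crawl_anomalies(DAILY_HITS):
    print(f"{day}: {kind} ({hits} hits vs. median {baseline})")
```

Using the median rather than the mean keeps a single spike from distorting the baseline for the days that follow. A flagged day is only a prompt to look at the logs, not a diagnosis.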

Compare the volume of crawled pages to the volume of indexed pages. If Google crawls 10,000 pages but indexes only 500, you have a quality or duplication problem, not a crawl problem.
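The comparison is a simple ratio. As a worked example using the figures above (`index_ratio` is a hypothetical helper name):

```python
def index_ratio(crawled_pages, indexed_pages):
    """Share of crawled pages that ended up indexed, as a fraction of 1."""
    if crawled_pages <= 0:
        raise ValueError("crawled_pages must be positive")
    return indexed_pages / crawled_pages

ratio = index_ratio(10_000, 500)
print(f"{ratio:.0%}")  # 5%
```

A ratio this low means Googlebot already reaches the pages, so crawling more would not help; the effort belongs in content quality and deduplication.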

❓ Frequently Asked Questions

Does high crawl volume mean my site ranks well?

My site has very low crawl volume. Should I be worried?

How can I influence Google's crawl volume?

Is crawl budget still relevant for small sites?

Can a sudden crawl spike indicate a security problem?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 08/08/2024
