Official statement

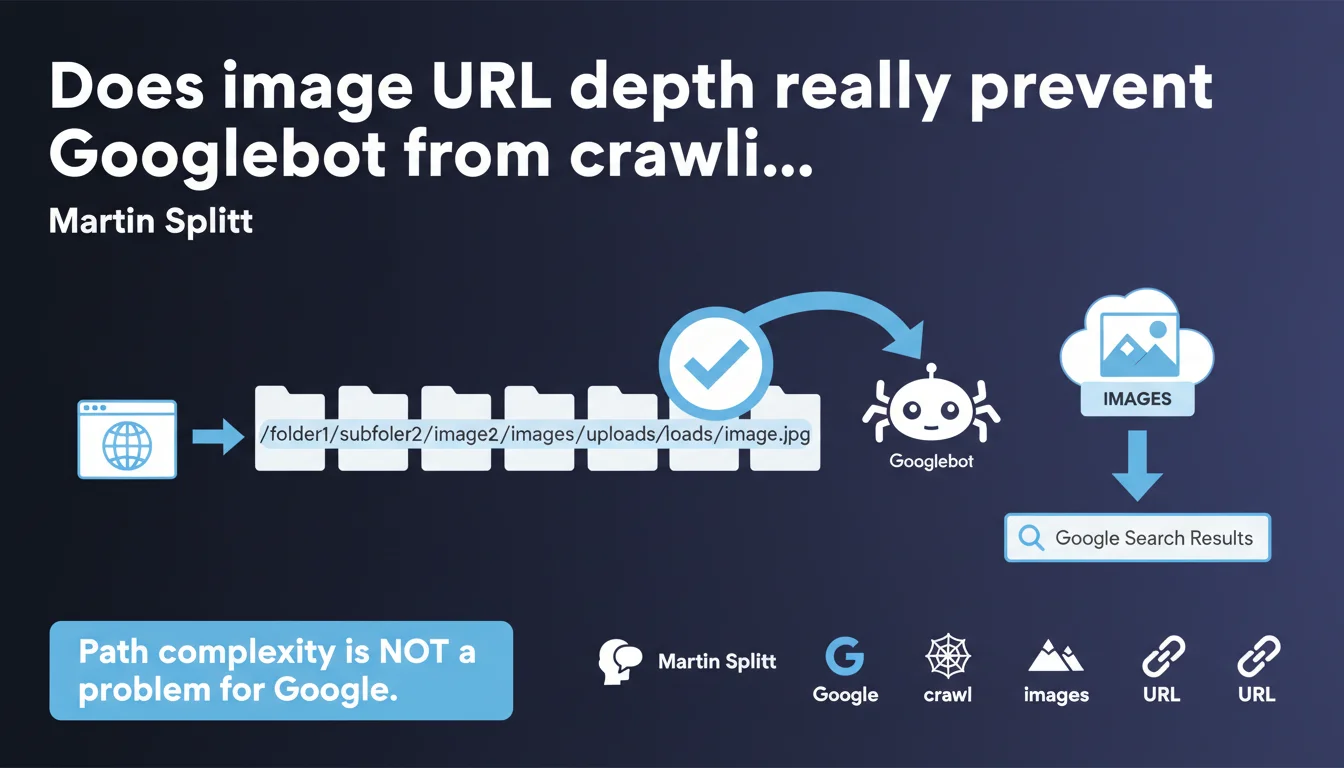

According to Martin Splitt, the number of levels in an image URL does not prevent Googlebot from crawling and indexing that image. Path complexity is not a limiting factor for Google. Good news for complex e-commerce architectures — bad news for those looking for a scapegoat for their indexation problems.

What you need to understand

What exactly do we mean by "URL depth"?

URL depth refers to the number of levels (or segments) in the path of a resource. For example, /images/products/category/subcategory/brand/image.jpg has six segments: five directories plus the file name. Some e-commerce or media sites generate extremely deep image URLs, sometimes 8 to 10 levels.
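To make the definition concrete, here is a minimal Python sketch that counts path segments. The function name `url_depth` is a hypothetical helper introduced for illustration, and it assumes that "depth" means every non-empty path segment, file name included.

```python
from urllib.parse import urlsplit


def url_depth(url: str) -> int:
    """Count the non-empty path segments of a URL, file name included.

    Assumption: "depth" is the number of path segments; the query string
    and fragment are ignored.
    """
    path = urlsplit(url).path
    return len([segment for segment in path.split("/") if segment])


print(url_depth("/images/products/category/subcategory/brand/image.jpg"))  # 6
print(url_depth("https://example.com/image.jpg"))  # 1
```

Counting this way keeps depth separate from length: a single-segment URL with a very long file name is long but not deep.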

This structural complexity has long worried SEOs, who feared it would negatively impact crawl budget or indexation. The conventional wisdom: the deeper it is, the less efficiently Googlebot accesses it.

Why does this question keep coming up?

Because Google has historically communicated about the importance of URL simplicity for text content. Many have extrapolated this advice to images. Furthermore, CMSs and e-commerce platforms sometimes generate absurdly long image URLs, creating confusion between length and depth.

Add to that empirical observations: some deeply nested images don't appear in Google Images. But correlation is not causation — other factors come into play.

What does Martin Splitt actually say?

Splitt asserts that path complexity is not a problem for Googlebot. In other words: if an image is located at /a/b/c/d/e/f/g/image.jpg, Google can crawl and index it exactly the same as if it were at /image.jpg.

Note: he's talking about the technical capability of crawling, not indexation priority. A crucial nuance we'll explore further.

- URL depth (number of levels) does not technically prevent image crawling

- Googlebot can access complex URLs without structural blocking

- This statement does not mean all images will be indexed

- Other factors determine indexation priority: quality, context, overall crawl budget

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. On one hand, we do observe that Google crawls images located very deep in the directory structure. Server logs confirm it: Googlebot doesn't shy away from /wp-content/uploads/2023/05/category/subcat/image.jpg.

On the other hand, sites with poorly optimized image architectures do suffer from indexation problems. But the culprit isn't depth itself — it's rather the lack of internal popularity, absence of semantic context, or oversized files. Depth becomes a marker of these problems, not their direct cause.

What nuances absolutely must be clarified?

Splitt is talking about crawling capability, not an indexation guarantee. Googlebot can crawl a deep image, but will it? And more importantly, will it index it? This remains to be verified, because Google is vague about its exact prioritization criteria.

On a site with 100,000 images, Google won't necessarily index everything, because crawl budget is limited. Deep images, often orphaned or poorly referenced internally, naturally fall to the back of the queue. So technically accessible ≠ strategically indexable.

In what cases does this rule change nothing for you?

If your business-critical images (product sheets, differentiating visuals) are buried eight levels deep, you have an architecture problem — regardless of crawling. Users won't find them, internal linking is non-existent, semantic context is weak.

Googlebot could technically crawl them. But why would it prioritize them? You may have 1,000 other more accessible, better contextualized resources that deserve crawl budget. In short, don't take this statement as a green light to let your URLs spiral out of control.

Practical impact and recommendations

Should you still worry about image URL depth?

Less than before, but don't throw the baby out with the bathwater. Depth itself is no longer a technical brake on crawling. But it often remains correlated with other defects: poor internal linking, missing relevant alt attributes, low page authority.

Focus on what really matters: internal accessibility, strong semantic context, optimized title and alt tags. If your architecture naturally generates deep URLs but everything else is perfect, don't waste time refactoring.

What mistakes should you avoid despite this statement?

First mistake: using this info as an excuse to change nothing about a messy architecture. If your business images are at /assets/tmp/cache/2023/12/05/product-xyz-variant-42.jpg, you have bigger problems than depth.

Second mistake: ignoring internal linking to images. Google can technically crawl a deep image, but it needs to discover it. Without internal links, without an optimized XML sitemap, even a flat URL remains invisible.

- Audit internal linking to your strategic images — absolute priority

- Verify that critical images appear in your dedicated XML sitemap

- Optimize alt tags, title tags, and text context around images

- Analyze server logs to identify crawled vs. ignored images

- Only refactor URL architecture if other indicators justify it (UX, maintenance, performance)

- Test your image presence in Google Images via site:yourdomain.com and the URL Inspection Tool
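The sitemap item in the checklist above could be sketched as follows. This is a minimal example rather than a complete generator: it assumes Google's image sitemap extension namespace, the function name `build_image_sitemap` is hypothetical, and the example.com URLs are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"


def build_image_sitemap(pages: dict[str, list[str]]) -> str:
    """Return sitemap XML listing each page together with its image URLs.

    `pages` maps a page URL to the image URLs embedded on that page.
    """
    # Use the sitemap namespace as the default and "image:" for the extension.
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("image", IMAGE_NS)

    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url_el = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url_el, f"{{{SITEMAP_NS}}}loc").text = page_url
        for image_url in image_urls:
            image_el = ET.SubElement(url_el, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image_el, f"{{{IMAGE_NS}}}loc").text = image_url
    return ET.tostring(urlset, encoding="unicode")


# Placeholder data: one product page with a deeply nested image.
print(build_image_sitemap({
    "https://example.com/products/chair": [
        "https://example.com/images/products/category/subcategory/brand/image.jpg",
    ],
}))
```

Listing a deep image next to the page that embeds it gives Googlebot a discovery path that does not depend on internal links alone.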

How do you verify your images are being properly crawled?

In Search Console, open the Crawl Stats report and look at the breakdown of crawl requests by file type, which includes images. Cross-reference with your server logs to see which URLs Googlebot actually requests. If important images are missing, look for the cause elsewhere than depth.
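The log cross-check can be approximated with a short script. The sketch below assumes access logs in the Apache/Nginx Combined Log Format and identifies Googlebot by user-agent substring only, which can be spoofed; the function names, the extension list, and the sample log lines are all hypothetical.

```python
import re
from urllib.parse import urlsplit

# Assumption: Combined Log Format, where the user agent is the last quoted field.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)
IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png", ".gif", ".webp", ".avif", ".svg")


def googlebot_image_paths(log_lines):
    """Return the set of image paths requested by a Googlebot user agent."""
    crawled = set()
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if not match or "Googlebot" not in match["agent"]:
            continue
        path = urlsplit(match["path"]).path  # drop any query string
        if path.lower().endswith(IMAGE_EXTENSIONS):
            crawled.add(path)
    return crawled


def never_crawled(important_paths, log_lines):
    """List the important image paths that no Googlebot request touched."""
    return sorted(set(important_paths) - googlebot_image_paths(log_lines))


# Made-up sample lines, for illustration only.
sample = [
    '66.249.66.1 - - [10/May/2023:13:55:36 +0000] "GET /a/b/c/hero.jpg HTTP/1.1" '
    '200 2326 "-" "Googlebot-Image/1.0"',
    '203.0.113.9 - - [10/May/2023:13:56:00 +0000] "GET /a/b/c/other.jpg HTTP/1.1" '
    '200 512 "-" "Mozilla/5.0"',
]
print(never_crawled(["/a/b/c/hero.jpg", "/a/b/c/other.jpg"], sample))
```

Matching on the user-agent string is a shortcut; a stricter audit would also verify that the requesting IP address really belongs to Google.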

Set up regular monitoring of image crawl budget: how many requests is Googlebot making to your visual assets, and how is that number trending? These checks are technical and require cross-referencing data from logs, analytics, and Search Console; working with a specialized SEO agency can accelerate diagnosis and ensure a cohesive overall approach.
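One rough way to follow that trend is to count Googlebot image requests per day from the access logs. The sketch below again assumes the Combined Log Format and user-agent matching; the function name and sample lines are hypothetical, and the month abbreviation in the timestamp is parsed with the default (English) locale.

```python
import re
from collections import Counter
from datetime import datetime
from urllib.parse import urlsplit

# Assumption: Combined Log Format with a [day/Mon/year:time zone] timestamp.
LOG_LINE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} .*"(?P<agent>[^"]*)"$'
)
IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png", ".gif", ".webp", ".avif", ".svg")


def daily_googlebot_image_hits(log_lines):
    """Count Googlebot image requests per calendar day (ISO date -> count)."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if not match or "Googlebot" not in match["agent"]:
            continue
        if not urlsplit(match["path"]).path.lower().endswith(IMAGE_EXTENSIONS):
            continue
        day = datetime.strptime(match["ts"], "%d/%b/%Y:%H:%M:%S %z").date()
        hits[day.isoformat()] += 1
    return dict(sorted(hits.items()))


# Made-up sample lines, for illustration only.
sample = [
    '66.249.66.1 - - [10/May/2023:13:55:36 +0000] "GET /a/b/hero.jpg HTTP/1.1" '
    '200 2326 "-" "Googlebot-Image/1.0"',
    '66.249.66.1 - - [11/May/2023:08:01:12 +0000] "GET /a/b/hero.jpg HTTP/1.1" '
    '200 2326 "-" "Googlebot-Image/1.0"',
]
print(daily_googlebot_image_hits(sample))
```

A sustained drop in these daily counts is a prompt to check internal linking and sitemaps before blaming URL structure.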

❓ Frequently Asked Questions

Does URL depth affect how images rank in Google Images?

Should I change my image URLs if they are very deep?

Why are some of my deep images not indexed, then?

Is the XML image sitemap still relevant given this statement?

What is the difference between URL depth and URL length for images?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 04/05/2023
