Official statement

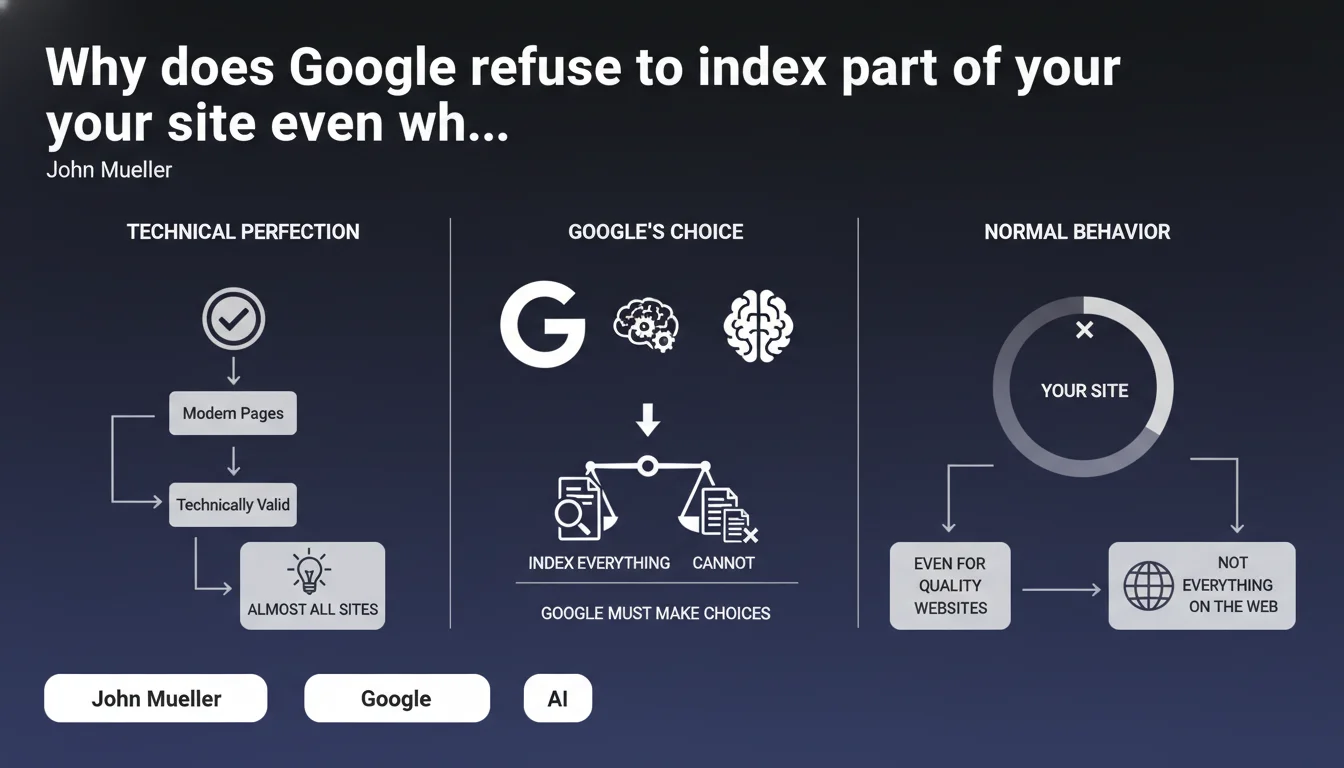

Google does not index the entire web, or even all the content on your site, even when everything is technically valid. This is a deliberate selection strategy: the search engine makes choices based on relevance, quality, and available resources. Having unindexed pages is not necessarily a red flag, even for authoritative websites.

What you need to understand

Does Google really apply such a drastic selection policy?

Yes, and it's a structural reality. Google does not operate on a principle of exhaustiveness but of efficiency. The engine has finite resources — crawl time, computing power, storage — and must prioritize what deserves to be indexed.

In practice, a technically flawless page (clean tags, fast loading time, mobile-friendly) is not guaranteed a place in the index. Google evaluates the perceived added value: does this page bring something unique? Is it likely to answer real search queries? If the answer is no, it can stay out of the index.

What determines whether a page will not be indexed?

Google does not communicate a precise grid, but several factors come into play. The crawl budget allocated to your site plays a major role: if Google estimates that certain sections have little interest, it won't waste resources on them.

Content duplication, even partial, is a classic barrier. Pages that are too similar to each other, product filters generating infinite variations, archives without editorial value: all are candidates for exclusion. There are also more subtle cases, such as orphaned pages or URLs buried many clicks deep with few internal links pointing to them.

Is it serious if part of my site is not indexed?

It depends. Not all pages deserve to be indexed, and that's where Mueller brings an essential nuance. If your legal notices, terms and conditions or order confirmation pages are not in the index, it's not a problem — it's actually desirable.

The real issue appears when strategic pages (category pages, flagship product pages, pillar articles) are not indexed. That is a warning sign that deserves investigation: crawl issue, content perceived as weak, internal cannibalization, or a misconfigured robots.txt or noindex.

- Google makes choices: not all valid pages are indexed by default.

- The crawl budget is a limited resource — Google prioritizes what has perceived value.

- Having unindexed pages is not automatically a problem, especially for utility or redundant content.

- Monitor the indexation of strategic pages: if they're excluded, that's where you need to act.

SEO Expert opinion

Does this statement really reflect what we observe in the field?

Yes, and it's been a constant for years. Selective indexation is not new, but Google rarely communicates about it so frankly. Mueller sets a clear framework here: stop panicking if 100% of your URLs are not indexed.

That said, this statement remains deliberately vague about selection criteria. Google doesn't explicitly say what makes a page "indexable" or not. We know that duplicate content, weak editorial quality, and lack of relevance signals play a role, but the exact thresholds? Unknown. [To verify]: impossible to know whether Google applies uniform quality thresholds or whether they vary by sector.

What nuances should be added to this claim?

The phrase "it's normal that certain parts of a site are not indexed" can be interpreted as a blank check to do nothing. Wrong. Normal doesn't mean desirable or optimal. If your strategic pages are not indexed, it's a signal of structural inefficiency.

Another point: Mueller says that "almost all modern pages are technically valid." This is false, or at least an excessive generalization. In practice, many sites, even well-designed ones, have technical issues that slow down indexation: redirect chains, slow server response times, poorly handled JavaScript. [To verify]: this claim underestimates the reality in the field, where technical problems remain commonplace.

In what cases does this rule not apply?

If you have a small to medium-sized site (say, fewer than 1,000 pages) with unique, well-structured content, indexation should be nearly total. If it's not, it's probably a technical or editorial problem — not an arbitrary Google decision.

Conversely, on massive sites (e-commerce with tens of thousands of products, media with substantial archives), partial indexation is inevitable. But again, the question is: are the right pages indexed? If Google overlooks in-stock products in favor of obsolete product pages, that's not normal; that's a malfunction.

Practical impact and recommendations

How do you know if the right pages are indexed?

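First step: identify strategic pages. List your main categories, your pillar articles, your priority product pages. Check their indexation status in Google Search Console (the "Coverage" report, since renamed "Page indexing", or the URL Inspection tool for a single URL) or with a site:yourdomain.com/specific-url query, keeping in mind that the site: operator gives only a rough indication.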

Next, cross-reference with your analytics data. If a page generates organic traffic, it's indexed and considered relevant. If it generates nothing but should (quality content, targeted keywords, correct internal linking), it's a warning sign. Dig deeper: crawl issue, content too weak, cannibalization by another page?
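The cross-referencing described above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive workflow: the function name `triage`, the bucket names, and the input shapes (a list of strategic URLs, a list of URLs you have confirmed as indexed, and a dict of organic clicks per URL exported from your analytics tool) are all assumptions made for the example.

```python
def triage(strategic_urls, indexed_urls, clicks_by_url):
    """Sort strategic URLs into three buckets (hypothetical names).

    strategic_urls: URLs you consider priorities.
    indexed_urls:   URLs you have confirmed as indexed (e.g. from an export).
    clicks_by_url:  organic clicks per URL from your analytics data.
    """
    indexed = set(indexed_urls)
    report = {"healthy": [], "indexed_no_traffic": [], "not_indexed": []}
    for url in strategic_urls:
        if clicks_by_url.get(url, 0) > 0:
            # Organic traffic implies the page is indexed.
            report["healthy"].append(url)
        elif url in indexed:
            # Indexed but invisible: weak content or cannibalization?
            report["indexed_no_traffic"].append(url)
        else:
            # Strategic page missing from the index: investigate first.
            report["not_indexed"].append(url)
    return report


if __name__ == "__main__":
    result = triage(
        strategic_urls=["/shoes", "/guide", "/new-range"],
        indexed_urls=["/shoes", "/guide"],
        clicks_by_url={"/shoes": 120},
    )
    for bucket, urls in result.items():
        print(bucket, urls)
```

The "not_indexed" bucket is the one to investigate first; "indexed_no_traffic" pages are indexed but may suffer from weak content or cannibalization.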

What mistakes should you avoid to maximize indexation of priority pages?

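Don't dilute crawl budget on pages without value. Use robots.txt to block useless sections (infinite product filters, internal search pages, session URLs). Use noindex for utility pages (terms and conditions, legal notices, confirmation pages). Note that the two do not combine: Google must be able to crawl a page to see its noindex directive, so do not also block noindex pages in robots.txt.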
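Before deploying robots.txt rules, it can help to check locally which paths they would block. The sketch below uses Python's standard `urllib.robotparser`; the rules and paths (`/search`, `/filter/`, `/session/`) are hypothetical examples, not a recommended configuration for any particular site. The standard-library parser does simple prefix matching and does not implement the `*` and `$` wildcards that Google supports, so the example sticks to plain path prefixes.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: adapt the paths to your own URL structure.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /filter/
Disallow: /session/
"""


def blocked_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return the paths that the given rules forbid for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(user_agent, p)]


if __name__ == "__main__":
    candidates = [
        "/search?q=shoes",    # internal search page
        "/filter/color-red",  # product filter variation
        "/category/shoes",    # strategic page that must stay crawlable
    ]
    print(blocked_paths(ROBOTS_TXT, candidates))
```

Running the check against your list of strategic URLs is a quick way to catch a rule that accidentally blocks a page you want indexed.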

Avoid content duplication, even partial. If you have product variants (color, size), consolidate them on a single product page with selectors, or use canonicals to consolidate signals. Don't create low-value pages just to "build volume"; Google will most likely ignore them anyway.
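To see how many URLs collapse onto the same product once variant parameters are ignored, a small normalization helper is enough. The sketch below assumes that variants are expressed as query parameters named `color`, `size`, and `sessionid`; those names, and the functions `canonical_url` and `group_by_canonical`, are illustrative assumptions to adapt to your own site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed parameter names that only create variants of the same page.
VARIANT_PARAMS = {"color", "size", "sessionid"}


def canonical_url(url):
    """Drop variant parameters and the fragment, and sort what remains."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in VARIANT_PARAMS
    )
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


def group_by_canonical(urls):
    """Map each canonical URL to the list of variants pointing at it."""
    groups = {}
    for url in urls:
        groups.setdefault(canonical_url(url), []).append(url)
    return groups


if __name__ == "__main__":
    variants = [
        "https://example.com/shoes/runner?color=red&size=42",
        "https://example.com/shoes/runner?size=43",
        "https://example.com/shoes/runner",
    ]
    for canonical, members in group_by_canonical(variants).items():
        print(canonical, len(members))
```

Each group with more than one member is a candidate for a single canonical target, declared with a `<link rel="canonical">` element on the variant pages.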

Strengthen internal linking toward your strategic pages. An orphaned page, or one reachable only after five clicks, has little chance of being crawled regularly, let alone indexed. Keep your priority pages no more than 2-3 clicks from the homepage, and create contextual links from your editorial content.
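Click depth is straightforward to measure once you have an internal link graph, for instance from a crawl export. The sketch below runs a breadth-first search from the homepage; the graph format (a dict mapping each page to the pages it links to) and the function names `click_depths` and `audit` are assumptions for the example, and the default limit of 3 reflects the 2-3 click guideline above.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search: minimum number of clicks from home to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit(links, home, priority_pages, max_depth=3):
    """Return (orphaned, too_deep) lists for the given priority pages."""
    depths = click_depths(links, home)
    orphaned = [p for p in priority_pages if p not in depths]
    too_deep = [p for p in priority_pages if depths.get(p, 0) > max_depth]
    return orphaned, too_deep


if __name__ == "__main__":
    # Hypothetical link graph: page -> pages it links to.
    graph = {
        "/": ["/cat"],
        "/cat": ["/cat/p1"],
        "/cat/p1": ["/a"],
        "/a": ["/b"],
        "/b": ["/deep"],
    }
    print(audit(graph, "/", ["/cat", "/deep", "/orphan"]))
```

Pages reported as orphaned are unreachable through internal links from the homepage; pages reported as too deep need additional links from shallower pages.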

What should you do concretely to optimize indexation?

- Audit your site with Google Search Console: identify excluded pages and the reasons given ("Crawled - currently not indexed," "Discovered - currently not indexed," etc.).

- Prioritize strategic pages: verify they are properly indexed and crawled regularly.

- Block useless sections via robots.txt (filters, internal search, temporary URLs).

- Use noindex on utility pages (terms and conditions, legal notices, confirmation pages).

- Consolidate duplicate content: canonical tags, product variant grouping.

- Strengthen internal linking toward priority pages (no more than 2-3 clicks from the homepage).

- Improve editorial quality of strategic pages: unique, structured content answering a clear search intent.

- Monitor indexation evolution over time: a sudden drop can signal a technical issue or penalty.
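The last item in the checklist, monitoring indexation over time, can be approximated with a simple check on a series of indexed-page counts that you record yourself, for example weekly. This is a minimal sketch: the function name `sudden_drops` and the 20% threshold are arbitrary assumptions, not values recommended by Google.

```python
def sudden_drops(indexed_counts, threshold=0.2):
    """Flag points where the count fell by more than `threshold` (a fraction).

    indexed_counts: chronological list of indexed-page counts.
    Returns a list of (position, previous_count, current_count) tuples.
    """
    drops = []
    for i in range(1, len(indexed_counts)):
        previous, current = indexed_counts[i - 1], indexed_counts[i]
        if previous > 0 and (previous - current) / previous > threshold:
            drops.append((i, previous, current))
    return drops


if __name__ == "__main__":
    weekly_counts = [1000, 990, 700, 710]  # hypothetical weekly readings
    print(sudden_drops(weekly_counts))
```

A flagged drop is only a prompt to investigate; the cause still has to be found in the exclusion reasons reported by Search Console.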

❓ Frequently Asked Questions

Is it serious if 30% of my pages are not indexed?

How can I force Google to index a specific page?

Is crawl budget really a limiting factor for small sites?

Can a technically perfect page fail to be indexed?

Should I use noindex or robots.txt to block useless pages?

🎥 From the same video 16

Other SEO insights extracted from this same Google Search Central video · published on 30/01/2022
