Official statement

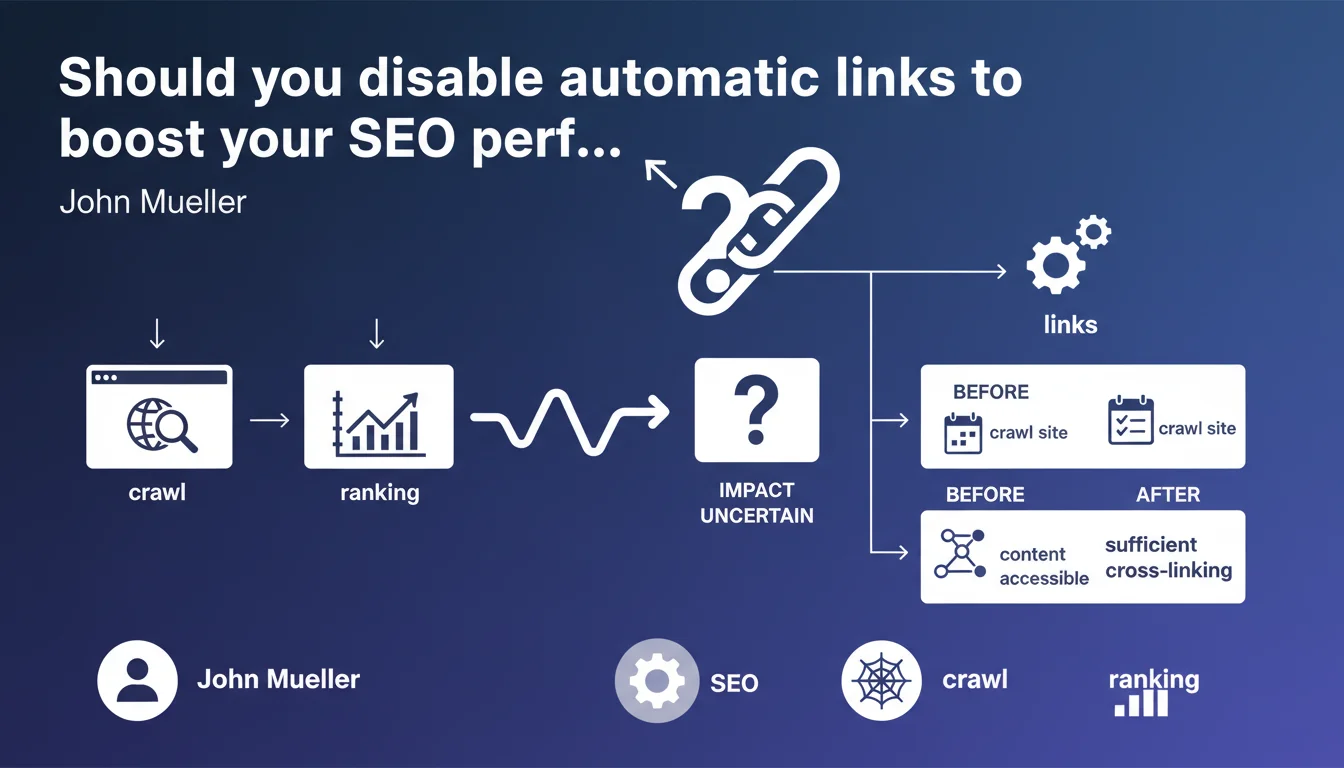

Massively removing automatically generated internal links will likely impact your rankings — but nobody can predict which way. Google recommends crawling your site before and after to verify everything stays accessible and your internal linking structure remains solid. Bottom line: test, measure, but you're essentially flying blind.

What you need to understand

What does Google mean by "automatic links"?

These are links generated automatically by a CMS, plugin, or script: navigation menus, breadcrumbs, contextual links, related posts, tag clouds, footers. Basically, everything that builds itself without manual intervention. These links often account for 90% of a site's internal linking.

The problem? They're often redundant, generic, and semantically irrelevant. But they also serve a critical function: ensuring every page stays accessible and receives a minimum amount of link juice.

Why can't Google predict the impact?

Because Google cannot know in advance what proportion of your internal linking you're going to cut, or whether what remains will be sufficient to maintain authority for your deeper pages. If you remove a bloated footer but keep solid contextual linking, the effect is probably positive.

If you strip out all automatic links without putting anything in place, some pages risk becoming orphaned or nearly orphaned. Result: crawl drop, loss of internal PageRank, ranking decline. That's why Google recommends crawling before and after — not to give you an answer, but to limit the damage.

What does "sufficient cross-linking" actually mean?

Google stays vague, as always. You could interpret it as: every page should be reachable within 3-4 clicks from the homepage, and should receive relevant contextual links from other content. Not just a link from the sitemap or a hidden menu.

Concretely, this means a well-thought-out manual or semi-automatic linking structure must exist before you cut automatic links. Otherwise, you're playing Russian roulette with your rankings.
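The "3-4 clicks from the homepage" reading can be checked with a breadth-first search over the internal link graph. The sketch below is only an illustration: the `links` dictionary, the URLs, and the `max_clicks=4` threshold are assumptions, not values given by Google.

```python
from collections import deque

def click_depths(links, home):
    """Return {url: minimum number of clicks from home} using breadth-first search."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def too_deep(links, home, all_pages, max_clicks=4):
    """List known pages that are unreachable or deeper than max_clicks."""
    depths = click_depths(links, home)
    return sorted(p for p in all_pages if depths.get(p, float("inf")) > max_clicks)

# Hypothetical toy site: each key links to the pages in its list.
links = {
    "/": ["/blog", "/shop"],
    "/blog": ["/blog/a"],
    "/blog/a": ["/blog/b"],
    "/blog/b": ["/blog/c"],
    "/blog/c": ["/blog/d"],
}
all_pages = {"/", "/blog", "/shop", "/blog/a", "/blog/b",
             "/blog/c", "/blog/d", "/old-landing"}

flagged = too_deep(links, "/", all_pages)
print(flagged)
```

Here `/blog/d` sits five clicks deep and `/old-landing` receives no link at all, so both are flagged. In practice, `all_pages` would come from a source that does not depend on links, such as your sitemap or CMS export.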

- Automatic links: often generic, but ensure accessibility and juice distribution

- Unpredictable impact: depends on what remains after removal and the quality of alternative linking

- Google's recommendation: crawl before and after, verify accessibility and cross-linking

- Main risk: creating orphan pages or cutting off internal PageRank distribution

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. I've seen sites gain 30% organic traffic after ditching a bloated footer and toxic tag clouds. I've seen others lose just as much because they stripped out all automatic links without putting anything in their place.

The key point is that Google values the quality and relevance of internal links, not quantity. One well-placed contextual link in a paragraph beats 10 footer links. But if your contextual links don't exist or are insufficient, the footer is still your safety net.

What nuances should be added?

Google doesn't specify which types of automatic links cause problems. [To verify]: does a clean breadcrumb count as a problematic automatic link? Probably not. Does a "Related Posts" block generated by a plugin but semantically relevant cause issues? Unlikely.

The real target is links with no clear semantic value: 150-link footers, identical sidebars across the entire site, tag clouds, "back to top" links counted as internal links. But Google deliberately stays vague.

When doesn't this rule apply?

If your automatic linking is well-designed, contextualized, and limited, touching it is probably a waste of time. A clean breadcrumb, a structured main menu, contextual links generated by a good related posts plugin — nothing wrong there.

The problem mainly arises on sites that stack plugins, multiply identical link blocks across all pages, and bury useful content in noise. There, cutting can help. But you need to know what to cut.

Practical impact and recommendations

What should you do concretely before touching automatic links?

First, crawl your site with Screaming Frog, OnCrawl, or Botify. Identify pages that only receive links through automatic blocks (footer, sidebar). These are your at-risk pages.
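Once you have a list of internal links from your crawl, finding the at-risk pages is a grouping exercise. The sketch below assumes each link is available as a `(source, target, position)` tuple, where `position` says which block of the page holds the link. That tuple layout and the block names are hypothetical, so adapt them to whatever your crawler actually exports.

```python
from collections import defaultdict

# Assumption: these labels mark automatically generated link blocks.
AUTOMATIC_BLOCKS = {"footer", "sidebar"}

def at_risk_pages(inlinks, automatic=AUTOMATIC_BLOCKS):
    """Return pages whose inbound links all come from automatic blocks."""
    positions = defaultdict(set)
    for source, target, position in inlinks:
        if source != target:  # a page linking to itself does not help it
            positions[target].add(position)
    # A page is at risk when every position it is linked from is automatic.
    return sorted(t for t, seen in positions.items() if seen <= automatic)

# Hypothetical crawl extract.
inlinks = [
    ("/", "/pricing", "content"),
    ("/blog/a", "/pricing", "footer"),
    ("/", "/legal", "footer"),
    ("/blog/a", "/legal", "footer"),
    ("/", "/tag/seo", "sidebar"),
    ("/blog/a", "/tag/seo", "footer"),
    ("/", "/blog/a", "content"),
]

print(at_risk_pages(inlinks))
```

`/pricing` is safe because at least one link to it sits in the content. `/legal` and `/tag/seo` would lose every inbound link if the footer and sidebar disappeared.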

Next, analyze internal PageRank distribution. Which pages receive the most juice? Which pages receive too little despite their strategic importance? If your footer distributes 70% of internal juice, removing it without a backup plan is suicidal.
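To get a rough feel for how juice moves, you can run the textbook PageRank iteration on your own link graph, once with the footer links and once without. This is a simplified model with the commonly cited damping factor of 0.85, not Google's actual calculation, and the two toy graphs are invented for illustration.

```python
def internal_pagerank(links, damping=0.85, iterations=50):
    """Textbook PageRank by power iteration on a {page: [targets]} graph."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Page with no outgoing links: spread its rank evenly.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank

# Hypothetical site where "/deep" is linked only from a sitewide footer.
with_footer = {
    "/": ["/blog", "/deep"],
    "/blog": ["/", "/deep"],
    "/deep": ["/"],
}
without_footer = {
    "/": ["/blog"],
    "/blog": ["/"],
    "/deep": ["/"],
}

before = internal_pagerank(with_footer)
after = internal_pagerank(without_footer)
for page in sorted(before):
    print(page, round(before[page], 3), "->", round(after[page], 3))
```

In this toy case `/deep` falls back to the minimum score once the footer is gone, which is the kind of collapse the crawl comparison is meant to catch before it happens on the live site.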

Which mistakes must you absolutely avoid?

Never remove automatic links without first putting alternative linking in place. That means: manual or semi-automatic contextual links, semantic silos, relevant recommendations at the end of articles.

Another classic mistake: removing all links at once and waiting to see what happens. Test in phases. Remove one type of link (e.g., tag clouds), measure impact for 2-3 weeks, adjust.

How do you verify the change doesn't break anything?

Compare crawls before and after. Verify that all strategic pages remain accessible within 3-4 clicks from the homepage. Monitor orphan pages (pages that no other page links to).
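The before/after comparison can be reduced to three questions: which pages disappeared from the crawl, which ones moved deeper, and which strategic pages now break the click-depth limit. The sketch below assumes each crawl is summarized as a `{url: click depth}` dictionary. The function name, the sample URLs, and the `max_clicks=4` limit are illustrative assumptions.

```python
def compare_crawls(before, after, strategic, max_clicks=4):
    """Compare two {url: click depth} crawl summaries."""
    # Pages a link-following crawl no longer reaches (orphaned or cut off).
    lost = sorted(set(before) - set(after))
    # Pages still reachable but further from the homepage than before.
    deeper = sorted(u for u in before.keys() & after.keys()
                    if after[u] > before[u])
    # Strategic pages that are missing or beyond the click-depth limit.
    strategic_issues = sorted(
        u for u in strategic if after.get(u, float("inf")) > max_clicks
    )
    return {"lost": lost, "deeper": deeper, "strategic_issues": strategic_issues}

# Hypothetical crawl summaries.
before = {"/": 0, "/shop": 1, "/shop/x": 2, "/guide": 1, "/tag/seo": 1}
after = {"/": 0, "/shop": 1, "/shop/x": 5, "/guide": 1}
strategic = {"/shop/x", "/guide", "/tag/seo"}

report = compare_crawls(before, after, strategic)
print(report)
```

An empty `lost` list and an empty `strategic_issues` list are the minimum to expect before moving on to the next removal phase.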

Track your rankings and organic traffic for at least 4 weeks after the change. If you observe a significant drop on critical pages, partially reactivate automatic links or strengthen contextual linking on those pages.
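What counts as a "significant drop" is a judgment call. One simple way to make it explicit is to compare organic clicks per page over equal periods before and after the change and flag anything below a chosen threshold. The 20% threshold and the figures below are assumptions for illustration, not a recommendation from Google.

```python
def flag_drops(clicks_before, clicks_after, threshold=-0.20):
    """Return {url: relative change} for pages at or below the threshold."""
    flagged = {}
    for url, before in clicks_before.items():
        if before == 0:
            continue  # relative change is undefined without a baseline
        change = (clicks_after.get(url, 0) - before) / before
        if change <= threshold:
            flagged[url] = round(change, 2)
    return flagged

# Hypothetical organic clicks over the 4 weeks before and after the change.
clicks_before = {"/pricing": 400, "/guide": 1000, "/blog/a": 50}
clicks_after = {"/pricing": 280, "/guide": 950, "/blog/a": 60}

drops = flag_drops(clicks_before, clicks_after)
print(drops)
```

Pages that show up here are the first candidates for reactivating automatic links or adding contextual ones. Seasonality and algorithm updates can produce similar numbers, so treat the output as a prompt to investigate, not as proof.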

- Crawl your site before any modification (Screaming Frog, OnCrawl, Botify)

- Identify pages dependent on automatic links

- Analyze internal PageRank distribution

- Set up alternative linking (contextual links, semantic silos) before cutting

- Test in phases: one link type at a time

- Re-crawl after each phase and compare

- Verify no strategic pages become orphaned or inaccessible

- Monitor rankings and traffic for at least 4 weeks

❓ Frequently Asked Questions

Are breadcrumbs considered problematic automatic links?

How many internal links per page is considered too many?

Should you remove all footer links to improve SEO?

Which tool should you use to crawl and compare before and after?

How long does it take to see the impact of removing automatic links?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 05/03/2022
