Official statement

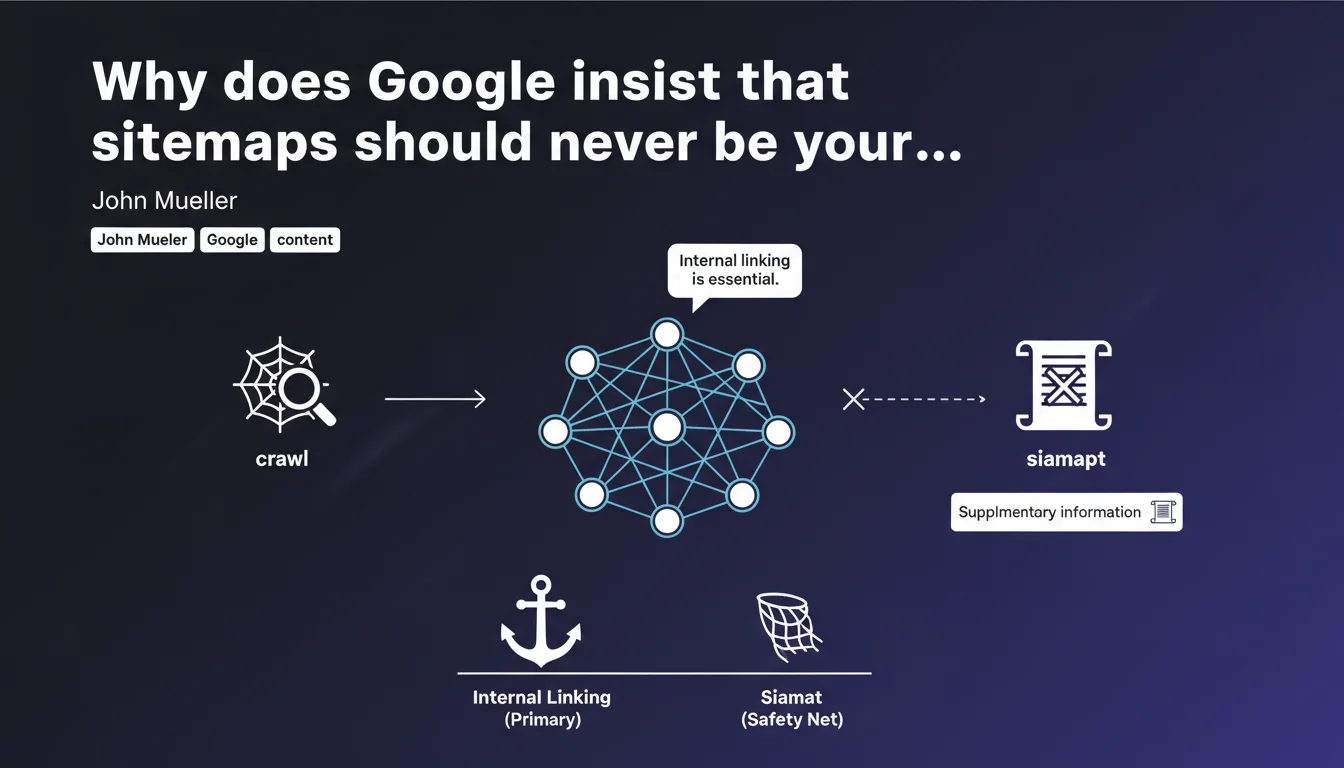

Google states that sitemaps should only complement natural content discovery, not replace it. If your crawl depends on the sitemap to discover pages, your internal architecture is failing. The message: fix your internal linking before relying on an XML crutch.

What you need to understand

What exactly does Google mean by "natural discovery"?

When John Mueller talks about discovery without a sitemap, he is referring to organic crawling: Googlebot following internal links from page to page. If a URL is reachable only through the XML sitemap and not through a crawlable HTML link, that is a red flag.

This reveals either deficient internal linking or orphaned pages. Google wants to be able to reconstruct your entire site architecture starting from the homepage, without technical crutches.

Has the sitemap become useless then?

No. It remains a metadata tool that carries lastmod, priority and changefreq, although Google's documentation states that it ignores the last two. It speeds up discovery of new pages and lets you flag deep or recent content.

But it never compensates for a structural problem. If your strategic pages are only crawlable by consulting the sitemap, you have an architecture issue — and Google knows it.
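To make the "flat list of metadata" point concrete, here is a minimal sketch that reads a sitemap and extracts the two fields that matter in practice, loc and lastmod. The sample document and its example.com URLs are invented for illustration; only the namespace comes from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content, used only to keep the sketch self-contained.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/</loc>
    <lastmod>2022-03-01</lastmod>
    <priority>1.0</priority>
  </url>
  <url>
    <loc>https://example.com/guides/internal-linking</loc>
    <lastmod>2022-02-14</lastmod>
    <changefreq>monthly</changefreq>
  </url>
  <url>
    <loc>https://example.com/old-page</loc>
  </url>
</urlset>"""


def read_sitemap(xml_text):
    """Return a list of (loc, lastmod) tuples; lastmod is None when absent."""
    root = ET.fromstring(xml_text)
    entries = []
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", default="", namespaces=NS).strip()
        lastmod = url.findtext("sm:lastmod", default=None, namespaces=NS)
        entries.append((loc, lastmod))
    return entries


entries = read_sitemap(SAMPLE_SITEMAP)
for loc, lastmod in entries:
    print(loc, lastmod or "(no lastmod)")
```

Nothing in this output says how the pages relate to each other, which is exactly the information that internal links provide and a sitemap cannot.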

Why this insistence on internal linking?

Because internal linking distributes PageRank and structures semantic understanding of the site. A sitemap does neither: it's a flat list of URLs, without relational context.

Google favors sites whose logical structure is apparent from the links themselves. Good internal linking also improves user experience: a human visitor should be able to navigate the site intuitively.
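The authority argument can be illustrated with the textbook, simplified PageRank iteration. This is a sketch of the classic published formula on an invented four-page graph, not a description of how Google ranks pages today; the page names and the 0.85 damping factor are assumptions chosen for the example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration.

    links maps each page to the list of pages it links to.
    Every link target must also appear as a key.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts with the same small baseline share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, targets in links.items():
            if targets:
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page without outgoing links spreads its rank evenly.
                for other in pages:
                    new_rank[other] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical site: "orphan" links out but receives no internal link.
site = {
    "home": ["a", "b"],
    "a": ["home", "b"],
    "b": ["home"],
    "orphan": ["home"],
}

ranks = pagerank(site)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page:8s} {score:.4f}")
```

In this model the orphaned page never rises above the baseline share, because no page passes anything to it. Listing it in a sitemap would not change the graph.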

- The XML sitemap is a complement, not a workaround

- All important pages must be accessible via at least one crawlable internal link

- Orphaned pages only in the sitemap are a symptom, not a strategy

- Internal linking distributes authority and structures semantics — the sitemap does not

- Googlebot discovers most URLs by following links rather than by reading the sitemap, so every crawl effectively tests your architecture

SEO Expert opinion

Is this statement consistent with field observations?

Yes — and it's actually observable in crawl logs. Googlebot doesn't systematically consult the sitemap before each crawl session. It follows links. Sites with solid internal linking see their new pages indexed faster, even if the sitemap takes a few hours to be refetched.

On the other hand, e-commerce sites with thousands of non-linked product pages see significant indexation delays, even with a perfectly structured sitemap. The sitemap speeds things up, but guarantees nothing if linking is absent.

What nuances should we add to this rule?

Let's be honest: on sites with hundreds of thousands of URLs, it's impossible to link everything effectively. Facets, archives, seasonal content — some content is legitimately less accessible.

In these cases, the sitemap remains a relevant discovery tool for deep or temporary content. But this should remain the exception. If 80% of your strategic pages are only findable via the sitemap, you have a major architectural problem.

[To verify]: Google claims that the sitemap "must provide supplementary information", but remains vague about exactly which information. Attributes like priority and changefreq are officially ignored, so which metadata is actually used? Probably the lastmod date, and only when it is reliably accurate. The rest is folklore.

In which cases does this rule not fully apply?

Sites with dynamically generated content or infinite paginated archives pose problems. A blog with 10 years of articles can legitimately have very deep pages, accessible only through heavy pagination or internal search.

In this case, the sitemap becomes an acceptable safety net — but you still need thematic entry points (categories, tags, related content) to facilitate natural navigation.

Practical impact and recommendations

What should you concretely do to comply with this logic?

Audit your internal linking architecture. Use Screaming Frog, Oncrawl, or your server logs to identify orphaned pages — those present in the sitemap but inaccessible through internal crawl.

Then fix them. Add contextual links from relevant pages, integrate these URLs into your category menus, and create hub content that aggregates them. The goal: every strategic page should be reachable within 3 to 4 clicks of the homepage.
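Click depth is simply the shortest path from the homepage in the internal link graph, so it can be computed with a breadth-first search. The sketch below assumes you already have the link graph as a dictionary (for example, built from a crawler export); the paths and the threshold of 4 are illustrative.

```python
from collections import deque

MAX_DEPTH = 4  # assumed threshold, matching the 3 to 4 click guideline


def click_depths(links, home):
    """Return {page: minimum number of clicks from home} via breadth-first search."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
links = {
    "/": ["/category", "/about"],
    "/category": ["/category/page-2"],
    "/category/page-2": ["/category/page-3"],
    "/category/page-3": ["/category/page-4"],
    "/category/page-4": ["/product-x"],
    "/about": [],
    "/orphan": ["/"],
}

depths = click_depths(links, "/")
too_deep = sorted(page for page, depth in depths.items() if depth > MAX_DEPTH)
unreachable = sorted(set(links) - set(depths))

print("Too deep:", too_deep)
print("Unreachable from the homepage:", unreachable)
```

Pages in the first list need shorter paths (hub pages, contextual links); pages in the second list are orphans that no amount of pagination will surface.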

What mistakes should you absolutely avoid?

Never rely on the sitemap to "force" indexation of poorly linked pages. Google guarantees nothing. If a page has no internal links, even a perfect sitemap isn't enough to prioritize it in the crawl budget.

Another common mistake: creating gigantic sitemaps with thousands of low-quality URLs. That dilutes the signal. Better to have a targeted sitemap focusing on strategic pages, complemented by effective internal linking.

How can you verify that your site respects this recommendation?

Crawl your site with a standard tool (Screaming Frog, Sitebulb) starting from the homepage, without providing it the sitemap. Compare the discovered URLs with those in your XML sitemap.

If you find a significant gap — hundreds of pages only in the sitemap — you have a linking problem. Prioritize strategic pages and add relevant internal links.
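The comparison described above is a set difference. Here is a minimal sketch, assuming both URL lists have already been exported (one from the sitemap, one from a homepage-started crawl); the URLs are invented and the normalization rules are a simplifying assumption that you may need to adapt to your own canonicalization.

```python
from urllib.parse import urlsplit, urlunsplit


def normalize(url):
    """Lowercase scheme and host, drop the fragment and any trailing slash."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, parts.query, "")
    )


# Hypothetical exports.
sitemap_urls = [
    "https://example.com/",
    "https://example.com/guides/internal-linking/",
    "https://example.com/products/blue-widget",
    "https://example.com/products/red-widget",
]
crawled_urls = [
    "https://Example.com",
    "https://example.com/guides/internal-linking",
    "https://example.com/products/blue-widget#reviews",
    "https://example.com/about",
]

in_sitemap = {normalize(url) for url in sitemap_urls}
in_crawl = {normalize(url) for url in crawled_urls}

# Listed in the sitemap but never reached by following links: orphan candidates.
sitemap_only = sorted(in_sitemap - in_crawl)
# Reached by links but missing from the sitemap: usually harmless, worth a look.
crawl_only = sorted(in_crawl - in_sitemap)

print("Only in sitemap:", sitemap_only)
print("Only in crawl:", crawl_only)
```

Normalizing first matters: without it, trailing slashes and fragments produce false orphans that hide the real ones.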

- Crawl the site without the sitemap to identify orphaned pages

- Analyze server logs to see if Googlebot accesses pages without consulting the sitemap

- Add contextual internal links to important poorly-linked pages

- Reduce click depth of strategic pages (max 3-4 clicks from homepage)

- Clean up the sitemap: keep only quality and up-to-date URLs

- Monitor indexation delays before/after internal linking optimization
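For the log analysis step in the checklist above, a rough sketch follows. It assumes access logs in the common "combined" format and identifies Googlebot by its user-agent string only; user agents can be spoofed, so a production audit should also verify the requesting IPs (Google documents a reverse DNS check for this). The log lines and paths are invented.

```python
import re
from collections import Counter

# Matches the request, status and user-agent parts of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Hypothetical log excerpt.
sample_log = [
    '66.249.66.1 - - [05/Mar/2022:10:00:00 +0000] "GET /sitemap.xml HTTP/1.1" 200 812 "-" "' + GOOGLEBOT_UA + '"',
    '66.249.66.1 - - [05/Mar/2022:10:00:05 +0000] "GET /guides/internal-linking HTTP/1.1" 200 5123 "-" "' + GOOGLEBOT_UA + '"',
    '66.249.66.1 - - [05/Mar/2022:11:30:00 +0000] "GET /guides/internal-linking?ref=nav HTTP/1.1" 200 5123 "-" "' + GOOGLEBOT_UA + '"',
    '203.0.113.7 - - [05/Mar/2022:12:00:00 +0000] "GET /old-page HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (X11; Linux x86_64) Firefox/97.0"',
]


def googlebot_hits(lines):
    """Count requests per path for lines whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("ua"):
            path = match.group("path").split("?")[0]  # ignore query strings
            hits[path] += 1
    return hits


hits = googlebot_hits(sample_log)

# Paths listed in the sitemap (hypothetical); compare them with what was crawled.
sitemap_paths = {"/guides/internal-linking", "/old-page"}
never_crawled = sorted(sitemap_paths - set(hits))

print("Googlebot hits:", dict(hits))
print("In sitemap but never requested by Googlebot:", never_crawled)
```

Running the same comparison before and after an internal linking change gives a simple way to monitor whether previously ignored pages start being requested.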

❓ Frequently Asked Questions

Can I remove my sitemap if my internal linking is good?

How do I identify orphaned pages that appear only in my sitemap?

What click depth is acceptable for a page to be crawled properly?

Does Google take the priority and changefreq attributes in the sitemap into account?

Does an e-commerce site with thousands of products need to link all of them internally?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 05/03/2022
