Official statement

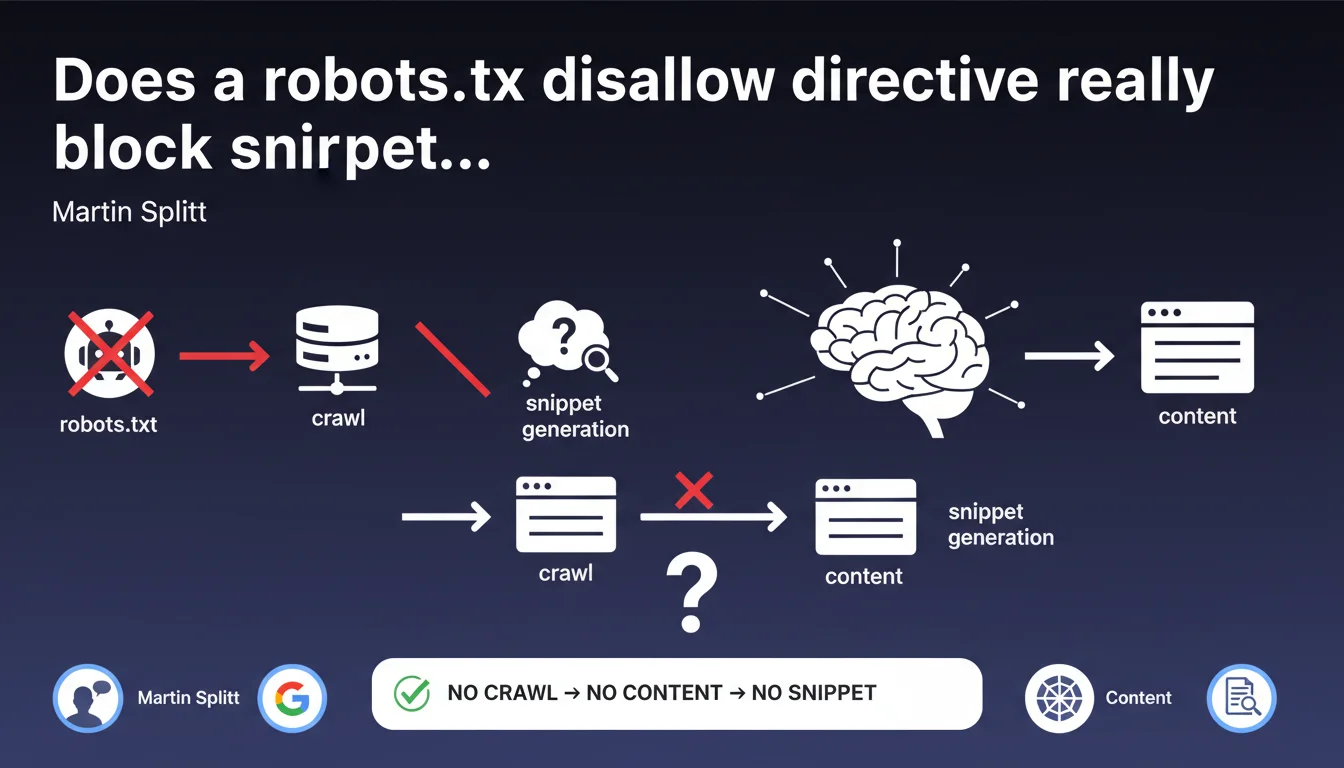

Martin Splitt confirms that a blocking robots.txt prevents Google from generating snippets in search results. Without access to the content through crawling, Google has no data to compose a description. The result therefore appears without an excerpt, which drastically degrades CTR.

What you need to understand

Why does a disallow in robots.txt block snippets?

When a disallow directive appears in robots.txt, Googlebot cannot crawl the affected pages. Without crawling, there's no reading of HTML content, meta description tags, or visible text.

The search engine is therefore unable to compose a snippet — that excerpt which displays under the title in search results. The page can be indexed through external links, but it appears bare: title only, without description.
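
As a rough illustration of the crawl check described above, the following sketch uses Python's standard `urllib.robotparser` to test whether a given user agent may fetch a URL. The robots.txt content and the example.com URLs are hypothetical. The standard-library parser only does simple prefix matching, so it approximates Googlebot's behaviour rather than reproducing it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the robots.txt rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# A disallowed URL cannot be crawled, so its content is never read.
print(is_crawlable(ROBOTS_TXT, "https://example.com/private/report.html"))  # False
print(is_crawlable(ROBOTS_TXT, "https://example.com/blog/post.html"))       # True
```

A URL for which this returns False is one whose HTML, meta description, and visible text a compliant crawler will not read.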

What's the difference between indexation and snippets?

A page blocked in robots.txt can still be indexed if Google discovers it through backlinks. This is a crucial nuance that many practitioners overlook.

However, without access to the content, Google cannot generate a snippet. It then displays a generic message such as "No information is available for this page".

What are the concrete impacts on visibility?

A result without a snippet usually means a sharply lower CTR. The user gets no preview of the page's content and nothing to judge its relevance by.

Competitors with well-written snippets tend to capture more clicks, even at an equivalent ranking position, and organic traffic suffers as a result.

- Robots.txt disallow prevents crawling, and therefore content reading

- Without crawled content, no snippet is generated in search results

- The page can be indexed through external links, but remains "empty"

- CTR drops because there is no informative excerpt

- This situation applies to all pages, not just new ones

SEO Expert opinion

Does this statement align with real-world observations?

Yes, and it's even a classic finding in SEO audits. We regularly find sites with a misconfigured robots.txt that blocks entire sections, sometimes by mistake after a migration.

The affected pages appear in the index, but without a snippet — a typical sign of a crawlability issue. Google Search Console even flags these URLs as "Indexed, though blocked by robots.txt".

What nuances should be added?

Martin Splitt's statement is factual but incomplete. He doesn't clarify that Google can still index a URL blocked in robots.txt if it receives backlinks — this is a frequent source of confusion among beginners.

Another point: even if content is crawlable, a noindex in X-Robots-Tag or meta tag prevents indexation altogether. So no presence in search results, snippet or not. The two mechanisms (robots.txt and noindex) complement each other but don't substitute for one another. [To verify]: Google has never publicly clarified whether certain types of content (images, embedded videos) can generate partial snippets despite a partial disallow.

What's the most common pitfall encountered?

The classic case: a site blocks /wp-admin/ and /wp-includes/ in robots.txt — normal — but accidentally adds a line Disallow: /wp-content/ that blocks access to CSS, JS, and image resources.

Google can no longer properly render pages, which impacts indexation on mobile (incomplete rendering) and degrades perceived experience. The snippet may be generated, but truncated or incoherent.
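
To make the WordPress pitfall concrete, here is a minimal sketch that lists which URLs a robots.txt blocks for a given user agent. The rules mirror the example in the text. The asset URLs and the `blocked_resources` helper are hypothetical, and the standard-library parser is only an approximation of how Googlebot evaluates rules.

```python
from urllib.robotparser import RobotFileParser

# robots.txt from the pitfall described above (illustrative).
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-includes/
Disallow: /wp-content/
"""

# Hypothetical URLs a page might depend on.
ASSET_URLS = [
    "https://example.com/wp-content/themes/demo/style.css",
    "https://example.com/wp-content/uploads/hero.jpg",
    "https://example.com/blog/hello-world/",
]


def blocked_resources(robots_txt, urls, user_agent="Googlebot"):
    """Return the subset of urls that the rules forbid user_agent to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


for url in blocked_resources(ROBOTS_TXT, ASSET_URLS):
    print("blocked:", url)
```

Here the stylesheet and the image are reported as blocked while the blog post itself stays crawlable, which is the situation where rendering breaks even though the page can be fetched.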

Practical impact and recommendations

What should you check first on your site?

First step: audit your robots.txt. Download it directly (mysite.com/robots.txt) and scrutinize each directive. Look for overly broad disallows, misplaced wildcards, critical sections blocked by mistake.
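
One way to start that line-by-line review is a small script that surfaces the rules most worth a second look. The sketch below is a heuristic, not an official check: it flags `Disallow: /` and any disallow containing a wildcard. The function name and the sample file are hypothetical.

```python
def flag_broad_disallows(robots_txt: str) -> list[str]:
    """Return Disallow lines that block the whole site or use a wildcard.

    Heuristic only: a flagged rule is a candidate for manual review,
    not necessarily a mistake.
    """
    flagged = []
    for raw_line in robots_txt.splitlines():
        # Drop comments and surrounding whitespace.
        line = raw_line.split("#", 1)[0].strip()
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        if field.lower() != "disallow":
            continue
        if value == "/" or "*" in value:
            flagged.append(line)
    return flagged


# Hypothetical robots.txt, for illustration only.
SAMPLE = """\
User-agent: *
Disallow: /
Disallow: /wp-admin/
Disallow: /*?
Allow: /blog/
"""

for rule in flag_broad_disallows(SAMPLE):
    print("review:", rule)
```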

Then cross-reference with Google Search Console: the "Page indexing" report (formerly "Coverage") flags URLs indexed despite robots.txt blocking. If you find any, it means Google has discovered them through links, but they appear without a snippet.

How do you fix a robots.txt that blocks snippets?

Remove or refine the Disallow directive in question. If you want to prevent indexation, use a <meta name="robots" content="noindex"> tag or an X-Robots-Tag: noindex HTTP header instead, and leave the page crawlable so that Google can read it.

After modifying robots.txt, request indexing through the URL Inspection tool in Google Search Console. Once Google recrawls the page and reads its content, it can generate the snippet. This can take a few days or more depending on crawl budget.
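
The two noindex mechanisms mentioned above can be checked with a short script. This is a simplified sketch using only the standard library: `has_noindex` is a hypothetical helper that takes response headers and an HTML string you have already fetched. It does not handle user-agent-scoped header values (such as `googlebot: noindex`) or the `none` directive.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name.lower(): (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            content = attributes.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(headers: dict, html: str) -> bool:
    """Return True if a noindex directive is present in the header or the HTML."""
    header_value = ""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            header_value = value
    header_directives = [d.strip().lower() for d in header_value.split(",")]

    parser = _RobotsMetaParser()
    parser.feed(html)
    return "noindex" in header_directives or "noindex" in parser.directives


print(has_noindex({"X-Robots-Tag": "noindex, nofollow"}, "<html></html>"))
print(has_noindex({}, '<head><meta name="robots" content="noindex"></head>'))
```

Either signal is only effective if the crawler is allowed to fetch the page in the first place.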

What mistakes must you absolutely avoid?

Never combine a robots.txt disallow and a noindex on the same URL. Because robots.txt blocks crawling, Google cannot read the noindex tag, so the page can remain indexed without a snippet. This is counterproductive.
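
The interaction between the two signals can be summarised as a small decision table. The sketch below simply encodes the outcomes described in this article. The `diagnose` function and its wording are illustrative, not an official Google classification.

```python
def diagnose(blocked_by_robots: bool, noindex_present: bool) -> str:
    """Summarise the expected outcome for a URL, as described in the article."""
    if blocked_by_robots and noindex_present:
        # The crawler never fetches the page, so the noindex is never seen.
        return "conflict: noindex cannot be read while crawling is blocked"
    if blocked_by_robots:
        return "may be indexed through links, without a snippet"
    if noindex_present:
        return "crawlable, excluded from the index"
    return "crawlable and indexable, snippet possible"


for blocked in (True, False):
    for noindex in (True, False):
        print(blocked, noindex, "->", diagnose(blocked, noindex))
```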

Another pitfall: blocking URL parameters (e.g. Disallow: /*?) without thinking it through. Some parameters only serve tracking, but others generate unique content, so you risk cutting off access to useful pages.
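
To see how far a rule like Disallow: /*? reaches, it can help to test it against real URLs before deploying it. The sketch below implements the commonly documented wildcard semantics (`*` matches any sequence of characters, a trailing `$` anchors the end of the URL) with a regular expression. `rule_matches` is a hypothetical helper, the URLs are made up, and edge cases such as percent-encoding are ignored.

```python
import re
from urllib.parse import urlsplit


def rule_matches(pattern: str, url: str) -> bool:
    """Return True if a robots.txt path pattern matches the URL's path and query."""
    parts = urlsplit(url)
    target = parts.path or "/"
    if parts.query:
        target += "?" + parts.query

    # A trailing "$" anchors the pattern to the end of the URL.
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]

    # Escape literal pieces and turn each "*" into "match anything".
    regex = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    if anchored:
        regex += "$"
    return re.match(regex, target) is not None


# Hypothetical URLs: both parameterised pages are caught by the same rule.
for url in (
    "https://example.com/products?color=red",
    "https://example.com/products?utm_source=newsletter",
    "https://example.com/products",
):
    print(url, rule_matches("/*?", url))
```

The rule catches the tracking URL and the filtered product page alike, which is why a blanket parameter block deserves a review of which parameters actually produce distinct content.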

- Download and analyze the robots.txt file line by line

- Check in Google Search Console for URLs "Indexed, though blocked by robots.txt"

- Remove disallows from content intended for search results

- Use meta noindex or X-Robots-Tag to prevent indexation, not robots.txt

- Test robots.txt with the Google Search Console tool before any changes

- Never block CSS, JS, images in robots.txt — it breaks rendering

- Request reindexing after correction to speed up recrawling

❓ Frequently Asked Questions

Can a page blocked in robots.txt still appear in Google?

What is the difference between a robots.txt disallow and a meta noindex?

How long does it take Google to generate a snippet after the robots.txt is fixed?

Can you block certain sections in robots.txt without losing snippets elsewhere?

Should you block CSS and JS resources in robots.txt to save crawl budget?

🎥 From the same video 10

Other SEO insights extracted from this same Google Search Central video · published on 10/01/2023
