Official statement

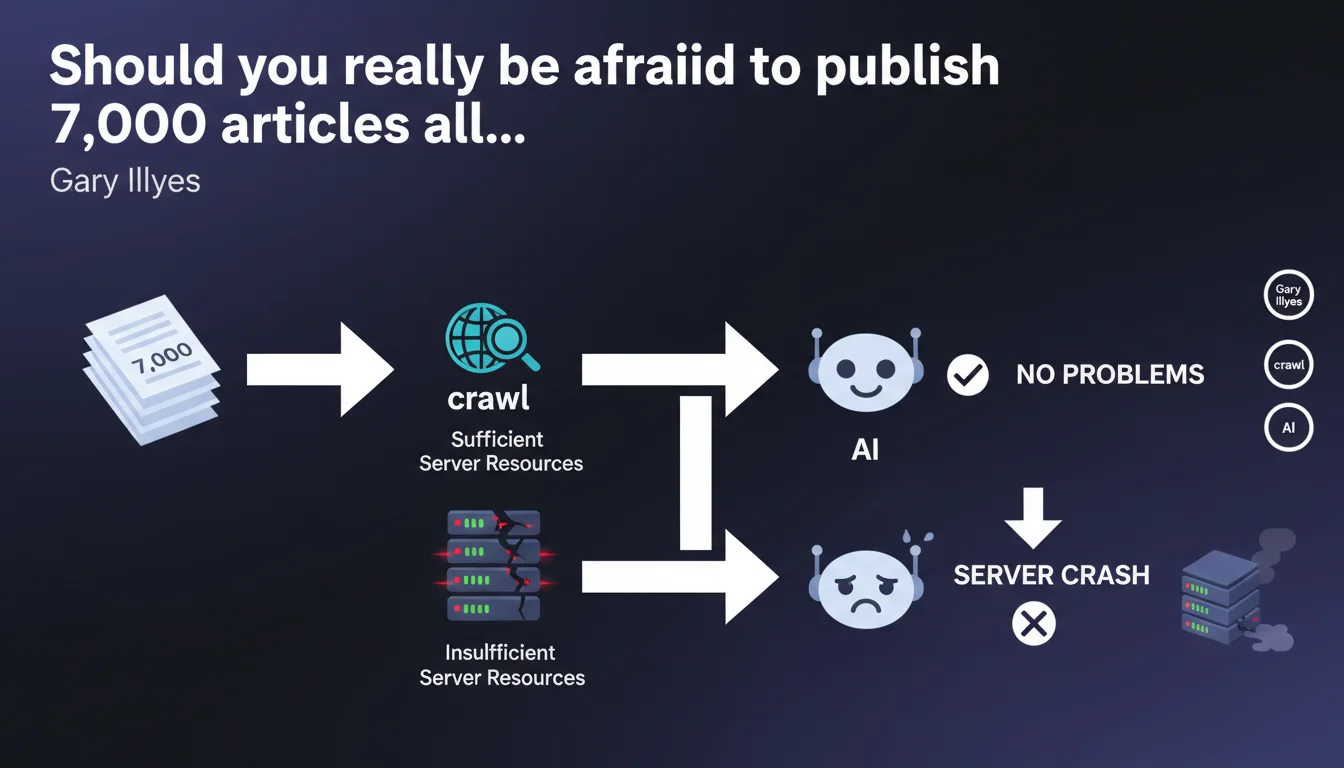

Gary Illyes confirms that a massive content launch (7,000 articles, for example) poses no indexation problem, provided your server can handle crawl traffic multiplied by 1,000. Otherwise, your infrastructure risks collapsing under the load. The bottleneck isn't at Google's end, but in your server resources.

What you need to understand

Why does Google talk about crawl multiplied by 1,000?

Google doesn't just crawl each URL once during a massive launch. The algorithm tests content freshness, verifies linked resources (CSS, JS, images), explores internal linking, and revisits pages to detect modifications.

A site publishing 7,000 new pages can easily generate tens of thousands of server requests within a few hours. If your infrastructure isn't designed to absorb this sudden spike, response times balloon and Googlebot automatically slows down, or worse, your server crashes.
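To make the "tens of thousands of requests" figure concrete, here is a back-of-the-envelope sketch. The number of linked resources per page, the number of revisits, and the six-hour window are illustrative assumptions, not figures published by Google. Google also caches many static resources, so treat the result as a rough upper bound.

```python
def estimate_crawl_requests(new_pages: int,
                            resources_per_page: int,
                            revisits_per_page: int) -> int:
    """Rough upper bound on requests triggered by a bulk launch.

    Each page is fetched once plus `revisits_per_page` times, and each
    fetch may also pull `resources_per_page` linked assets (CSS, JS, images).
    All three inputs are illustrative assumptions, not Google figures.
    """
    fetches_per_page = 1 + revisits_per_page
    return new_pages * fetches_per_page * (1 + resources_per_page)


def average_rate(total_requests: int, window_hours: float) -> float:
    """Average requests per second if the load is spread evenly over the window."""
    return total_requests / (window_hours * 3600)


total = estimate_crawl_requests(new_pages=7_000,
                                resources_per_page=4,
                                revisits_per_page=1)
print(total)                                          # 70000
print(round(average_rate(total, window_hours=6), 2))  # 3.24
```

The average rate looks modest, but crawl traffic is rarely spread evenly: what matters for sizing is the peak, which can be many times higher than the average.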

Does this mean Google has no limits on its end?

No. Google has a crawl budget allocated to each site based on its popularity, authority, and content quality. What Gary Illyes clarifies here is that this budget won't be the limiting factor in a massive launch.

The real problem lies on the server side: if you can't serve pages quickly enough, Googlebot will space out its requests to avoid saturating you. Result: your indexation will take weeks instead of days.

What criteria should I use to determine if my server will handle the load?

You need to measure several indicators: average response time (TTFB), concurrency (the number of requests the server can process simultaneously), available CPU/RAM resources, and the 5xx error rate under load. A properly sized server should maintain a TTFB under 200 ms even at 500 requests/second.
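As a minimal sketch of how these indicators could be computed from load-test results, the snippet below aggregates per-request samples into an average TTFB, a 95th-percentile TTFB, and a 5xx error rate. The `Sample` structure and the function names are hypothetical. The 200 ms threshold comes from the text above, while the zero-tolerance default for 5xx errors is an assumption you may want to relax.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Sample:
    ttfb_ms: float  # time to first byte for one request, in milliseconds
    status: int     # HTTP status code returned by the server


def summarize(samples: list[Sample]) -> dict[str, float]:
    """Aggregate load-test samples into TTFB and 5xx indicators."""
    if not samples:
        raise ValueError("no samples to summarize")
    ttfbs = sorted(s.ttfb_ms for s in samples)
    # Nearest-rank percentile: rank = ceil(0.95 * n), computed with integers.
    rank = -(-95 * len(ttfbs) // 100)
    errors = sum(1 for s in samples if 500 <= s.status <= 599)
    return {
        "avg_ttfb_ms": mean(ttfbs),
        "p95_ttfb_ms": ttfbs[rank - 1],
        "error_5xx_rate": errors / len(samples),
    }


def is_ready(summary: dict[str, float],
             max_ttfb_ms: float = 200.0,
             max_error_rate: float = 0.0) -> bool:
    """True when average TTFB and 5xx rate both stay within the thresholds."""
    return (summary["avg_ttfb_ms"] <= max_ttfb_ms
            and summary["error_5xx_rate"] <= max_error_rate)
```

Looking at the 95th percentile alongside the average is a deliberate choice: a handful of very slow responses can hide behind a healthy-looking mean.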

Budget shared hosting or a small VPS will collapse. A CDN, aggressive caching, and a scalable architecture (auto-scaling cloud, high-performance dedicated servers) are essential.

- A massive content launch generates crawl traffic multiplied by 1,000 or more

- Google's crawl budget is not the limiting factor; your server is

- An undersized server slows down indexation or crashes completely

- TTFB, concurrent capacity, and 5xx error rate are the key metrics

- A CDN and scalable infrastructure are mandatory to absorb the spike

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. I've seen sites with quality content remain stuck for weeks after a massive launch, not because Google refused to index, but because the server couldn't keep up. Logs clearly show Googlebot spacing out requests when response times exceed 500ms.

Conversely, sites with solid architecture (Cloudflare + dedicated servers + optimized cache) saw 10,000 pages indexed in under 72 hours. The difference doesn't come from crawl budget but from the ability to serve content quickly.

What nuances should we add to this statement?

Gary Illyes intentionally simplifies. The notion of "crawl multiplied by 1,000" remains vague and still needs to be verified: is this an observed average or a theoretical maximum? No precise data is provided.

Furthermore, this recommendation assumes that the launched content is of sufficient quality to deserve indexation. If Google detects spam or thin content, indexation will be limited even with a rock-solid server. Server power never compensates for editorial mediocrity.

In what cases does this rule not apply?

On brand new sites with zero authority, the initial crawl budget is so low that a massive launch makes little sense. Google will crawl roughly 50 pages per day regardless, so whether you publish 100 or 10,000 makes no difference.

Another exception: sites with algorithmic penalties or a history of duplicate content. Even with a NASA-grade server, indexation will remain deliberately slowed by Google to limit index pollution.

Practical impact and recommendations

What should you do concretely before a massive launch?

First and foremost, test your infrastructure under load. Use tools like Apache Bench, JMeter, or k6 (formerly LoadImpact) to simulate 500-1,000 requests/second and observe how your server reacts. If TTFB exceeds 300ms or 503 errors appear, you're not ready.
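Dedicated load-testing tools remain the right choice for simulating hundreds of requests per second. Purely to illustrate the principle, here is a small standard-library sketch that fires concurrent requests and records the status code and elapsed time of each. The function names are hypothetical, and the elapsed time (measured until the response headers arrive) is only a rough proxy for TTFB.

```python
import time
import urllib.error
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Fetch one URL and return its HTTP status code (0 on network failure)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as exc:
        return exc.code  # 4xx/5xx responses are raised as exceptions
    except OSError:
        return 0         # connection refused, DNS failure, timeout


def timed_fetch(url: str, fetch: Callable[[str], int]) -> tuple[int, float]:
    """Return (status, elapsed milliseconds) for a single request."""
    start = time.perf_counter()
    status = fetch(url)
    return status, (time.perf_counter() - start) * 1000.0


def run_load_test(urls: list[str],
                  concurrency: int = 50,
                  fetch: Callable[[str], int] = fetch_status) -> list[tuple[int, float]]:
    """Request every URL using `concurrency` worker threads, preserving input order."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(lambda u: timed_fetch(u, fetch), urls))


def failure_count(results: list[tuple[int, float]]) -> int:
    """Count network failures (status 0) and server errors (5xx)."""
    return sum(1 for status, _ in results if status == 0 or status >= 500)
```

Point it only at a staging copy of your own site, for example a list of a few thousand new URLs with `concurrency=100`. Any non-zero `failure_count`, or elapsed times drifting past the 300ms mark mentioned above, suggests the server is not ready. The `fetch` parameter is injectable so the harness can be exercised without touching the network.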

Deploy a high-performance CDN (Cloudflare, Fastly, Akamai) and configure aggressive caching. Static resources (images, CSS, JS) should never hit your origin server. Enable HTML caching for anonymous pages if your CMS allows it.

Prepare a clean, segmented XML sitemap containing only the new URLs. Submit it via Search Console just before launch to signal to Google that a large volume is coming. Then monitor server logs in real time to detect any anomalies.
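One possible way to segment the sitemap is sketched below: split the new URLs into fixed-size chunks and emit one sitemap file per chunk. The chunk size of 1,000 and the `example.com` URL pattern are assumptions for illustration. The only hard constraint is the sitemaps.org limit of 50,000 URLs per file.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file limit defined by the sitemaps.org protocol


def chunk(urls: list[str], size: int) -> list[list[str]]:
    """Split the URL list into consecutive segments of at most `size` entries."""
    if not 1 <= size <= MAX_URLS_PER_FILE:
        raise ValueError("size must be between 1 and 50,000")
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap(urls: list[str]) -> bytes:
    """Serialize one segment as a UTF-8 encoded <urlset> document."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Hypothetical URL pattern for the 7,000 new articles.
new_urls = [f"https://example.com/articles/{i}" for i in range(7_000)]
segments = chunk(new_urls, size=1_000)
print(len(segments))  # 7
```

Each segment can then be written to its own file (for example `sitemap-launch-1.xml`, a hypothetical name) and submitted separately, which makes it easier to see in Search Console which batch is lagging.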

What mistakes should you avoid at all costs?

Never launch 7,000 pages without first having audited content quality. Google won't index blindly: if pages are thin, duplicated, or stuffed with keywords, you're wasting server resources for nothing.

Also avoid publishing everything at once on a Friday evening or just before a holiday weekend. If a technical issue occurs (crawl crashing your server, indexation blocked), you'll have no one to react quickly. Plan the launch for a Tuesday or Wednesday morning.

Don't neglect post-launch monitoring. Set up alerts on critical metrics: CPU spikes, response time, 5xx error rate, and indexation rate in Search Console. Gradual degradation can go unnoticed without monitoring tools.
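As a rough sketch of what such an alert could look like, the snippet below scans access-log lines, keeps only requests whose user agent mentions Googlebot, and reports the peak requests per minute and the 5xx error rate. It assumes the common "combined" log format and a hypothetical 1% alert threshold. Matching on the user-agent string is naive, since it can be spoofed, and the default combined format carries no response time, so TTFB has to come from another source.

```python
import re
from collections import Counter
from typing import Iterable

# Combined log format; the first 17 timestamp characters identify the minute,
# e.g. "10/Oct/2023:13:55".
LOG_PATTERN = re.compile(
    r'\S+ \S+ \S+ \[(?P<minute>[^\]]{17})[^\]]*\] '
    r'"[^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_stats(lines: Iterable[str]) -> dict[str, float]:
    """Summarize Googlebot traffic found in access-log lines."""
    per_minute: Counter[str] = Counter()
    total = 0
    errors = 0
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match is None or "Googlebot" not in match["agent"]:
            continue  # unparseable line or a different client
        total += 1
        per_minute[match["minute"]] += 1
        if match["status"].startswith("5"):
            errors += 1
    return {
        "total": total,
        "peak_per_minute": max(per_minute.values(), default=0),
        "error_5xx_rate": errors / total if total else 0.0,
    }


def should_alert(stats: dict[str, float], max_error_rate: float = 0.01) -> bool:
    """True when the share of 5xx responses served to Googlebot exceeds the threshold."""
    return stats["error_5xx_rate"] > max_error_rate
```

Running this over the last few minutes of logs on a schedule gives a simple early warning. A rising 5xx rate combined with a falling requests-per-minute peak is consistent with Googlebot backing off.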

How can I verify that my infrastructure is suitable?

Check your Core Web Vitals under real-world load. An LCP that balloons to 4 seconds while a crawl is underway indicates an undersized server. Also consult the "Crawl stats" report in Search Console: an average response time above 500ms is a warning sign.

Test the horizontal scalability of your stack. If you're on a single VPS, consider migrating to cloud architecture (AWS, GCP, Azure) with auto-scaling. A server that can double its capacity in 5 minutes absorbs unexpected spikes much better.

- Test infrastructure with 500-1,000 simulated requests/second

- Deploy a high-performance CDN and enable aggressive HTML caching

- Segment XML sitemap and submit it just before launch

- Audit content quality before massive publication

- Schedule launch on a weekday with available team

- Install real-time monitoring on CPU, TTFB, 5xx errors

- Verify Core Web Vitals and response time in Search Console

- Plan for horizontal scalability (auto-scaling cloud)

❓ Frequently Asked Questions

When is the right time to launch 7,000 articles at once?

Is a CDN enough to absorb a massive crawl?

How long does it take to index 7,000 pages in the best case?

Should you manually increase the crawl budget before a massive launch?

What should you do if the server crashes during the massive crawl?

🎥 From the same video 10

Other SEO insights extracted from this same Google Search Central video · published on 05/04/2023
