Official statement

Other statements from this video (10)

- Why is robots.txt (almost always) enough to block indexing of a staging site?

- Is password protection really the solution for blocking indexing of a staging site?

- Does the noindex tag really block all indexing, without exception?

- Are orphan pages really invisible to Google?

- Can Google really discover all your subdomains?

- Should you really be afraid of publishing 7,000 articles at once?

- Does content quality really block mass indexing?

- Does a clean domain name really improve brand recall?

- Are IP allowlists really enough to protect your staging sites from Google's crawl?

- Do you really need SEO for a tool-type (feature-driven) site?

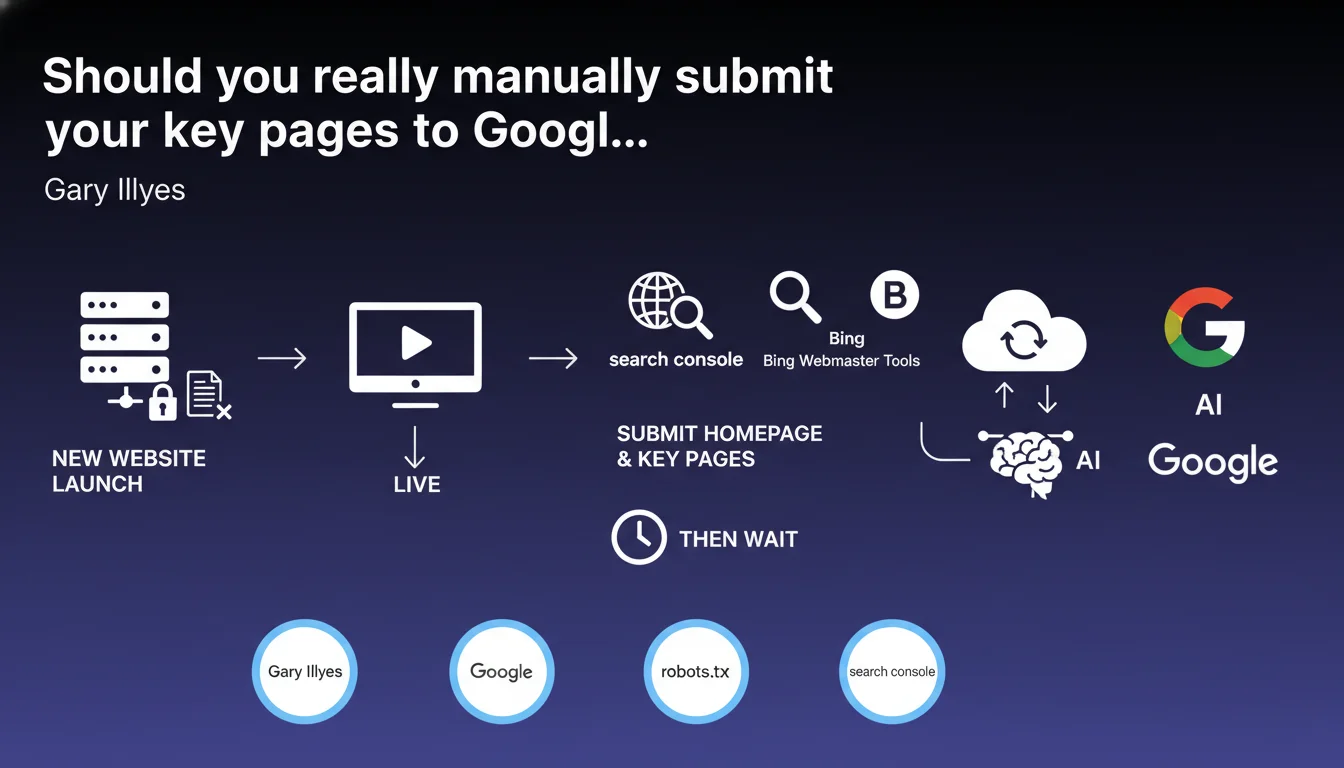

Google recommends manually submitting your homepage and a few strategic pages via Search Console after removing the robots.txt block, then waiting. This minimalist approach aims to avoid overloading the crawler while prioritizing critical URLs. Contrary to what you might think, bombarding Google with indexing requests doesn't speed up the process.

What you need to understand

Why does Google insist on this minimalist approach?

Gary Illyes' central idea is to avoid saturating Googlebot from the start. A new site has virtually no crawl budget. If you submit 500 URLs at once, you force Google to make arbitrary choices rather than letting it naturally discover your priority architecture.

By submitting only the homepage and a few key pages, you let the crawler follow your internal linking to identify important content itself. It's an indirect test of your structure: if Google can't find your critical pages starting from the homepage, your site architecture has a problem.
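The "can Google reach my critical pages from the homepage?" test can be approximated offline before launch. A minimal sketch, assuming you already have the internal link graph as a dictionary of page to linked pages (for example, exported from a crawler of your choice); the page paths are hypothetical. It runs a breadth-first search from the homepage, returns the click depth of every reachable page, and lists the strategic pages it never reaches.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage: minimum number of clicks to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def unreachable(links: dict[str, list[str]], home: str, strategic: list[str]) -> list[str]:
    """Strategic pages that cannot be reached from the homepage by following links."""
    depths = click_depths(links, home)
    return [url for url in strategic if url not in depths]


# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/shoes", "/contact"],
    "/shoes": ["/shoes/runner-x", "/"],
    "/contact": ["/"],
}
print(click_depths(site, "/"))  # {'/': 0, '/shoes': 1, '/contact': 1, '/shoes/runner-x': 2}
print(unreachable(site, "/", ["/shoes", "/services"]))  # ['/services']
```

Any strategic page returned by `unreachable`, or sitting many clicks deep, is the kind of architecture problem the homepage-first approach is meant to expose.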

What exactly is meant by "a few important pages"?

Here, Google intentionally remains vague. We're generally talking about 5 to 10 URLs maximum: main category pages, a flagship product page if it's an e-commerce site, a contact or services page if it's a corporate website. The goal isn't to index your entire site, but to create strategic entry points for Googlebot.

These pages should be representative of your semantic structure and perfectly optimized. No thin content, no technical pages. These are your showcases — the ones that will give Google its first impression of your thematic relevance.

Why wait after submission?

"Waiting" means not harassing the inspection tool with dozens of new requests each day. Google has its own crawl rhythms, conditioned by perceived freshness, domain authority, and the quality of already-indexed content. Multiplying submissions doesn't speed anything up and can even degrade your quality perception in the eyes of algorithms.

- Submit only the homepage and 5-10 strategic pages at launch

- Let Googlebot discover the rest via internal linking

- Don't bombard Search Console with daily indexing requests

- Repeat the submission in Bing Webmaster Tools

- Before taking any action, verify that robots.txt no longer blocks crawling
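Checking that robots.txt is really unblocked can be scripted with Python's standard `urllib.robotparser`. A minimal sketch, assuming you paste in the robots.txt content and the URLs you plan to submit (both hypothetical here). Note that this parser follows the original robots.txt conventions and does not reproduce Google's wildcard and longest-match rules exactly, so for complex files confirm the result with Google's own tooling in Search Console.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt content still disallows for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


staging_rules = "User-agent: *\nDisallow: /\n"     # typical staging lockdown
launch_rules = "User-agent: *\nDisallow: /cart\n"  # hypothetical production file

pages = ["https://example.com/", "https://example.com/shoes"]
print(blocked_urls(staging_rules, pages))  # both URLs are still blocked
print(blocked_urls(launch_rules, pages))   # []
```

An empty list for your strategic URLs is the precondition for everything else in this checklist.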

SEO Expert opinion

Is this recommendation consistent with real-world observations?

Yes, but with important nuances. On new domains with no history, I've observed that mass submissions often generate chaotic crawling where Google indexes secondary pages (legal notices, terms of service) first at the expense of strategic content. In contrast, targeted submission produces more structured and coherent crawling.

That said — and Google doesn't specify this — indexing speed also depends on external factors: early backlinks, social mentions, domain authority if it's a migration. A site with quality links from day one will be crawled much faster than an isolated site, regardless of your manual submissions. [To verify]: Google has never officially confirmed the weight of initial social signals on crawling a new domain.

In what cases is this approach insufficient?

On sites with thousands of pages (e-commerce, marketplaces), waiting passively after submitting 10 URLs can mean weeks before full indexation. In these cases, combine manual submission with a carefully segmented XML sitemap in which each file reflects a clear business priority.
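A segmented sitemap is simply one sitemap file per business segment, tied together by a sitemap index. A minimal sketch using the standard library, assuming hypothetical segment names and a hypothetical `https://example.com` base URL; writing the files to disk and declaring the index in Search Console or robots.txt are left out. The 50,000-URL cap per file comes from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000  # per-file limit in the sitemaps.org protocol


def build_urlset(urls: list[str]) -> str:
    """One <urlset> file listing the given page URLs."""
    if len(urls) > MAX_URLS_PER_SITEMAP:
        raise ValueError("split this segment into several sitemap files")
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


def build_index(sitemap_urls: list[str]) -> str:
    """A <sitemapindex> pointing at each segment file."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for url in sitemap_urls:
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = url
    return ET.tostring(index, encoding="unicode")


def segmented_sitemaps(segments: dict[str, list[str]], base_url: str) -> dict[str, str]:
    """Map file name -> XML content: one file per business segment, plus an index."""
    files = {f"sitemap-{name}.xml": build_urlset(urls) for name, urls in segments.items()}
    # The index lists only the segment files built on the line above.
    files["sitemap-index.xml"] = build_index([f"{base_url}/{name}" for name in files])
    return files


# Hypothetical segments, ordered by business priority.
files = segmented_sitemaps(
    {
        "categories": ["https://example.com/shoes"],
        "products": ["https://example.com/shoes/runner-x"],
    },
    "https://example.com",
)
print(files["sitemap-index.xml"])
```

Keeping one file per segment also makes the Search Console coverage figures readable per business priority.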

Another limitation: sites with dynamically generated content or product facets. Google can get lost in parameterized URLs if your canonicalization isn't flawless from the start. Manual submission never compensates for a flawed technical architecture.
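One way to make canonicalization consistent is to compute, on your side, the single URL that `rel="canonical"` and the sitemaps should use for every parameterized variant. A minimal sketch, assuming a hypothetical list of parameters that never change the page content on this site; it describes your own normalization rules, not how Google chooses a canonical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list: parameters that never change the content on this site.
IGNORED_PARAMS = {"sessionid", "sid", "sort", "utm_source", "utm_medium", "utm_campaign"}


def canonical_url(url: str) -> str:
    """Drop ignored parameters, sort the rest, lowercase the host, drop the fragment."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path or "/", urlencode(kept), ""))


print(canonical_url("https://Example.com/shoes?sort=price&color=red&sessionid=42#reviews"))
# https://example.com/shoes?color=red
```

Sorting the remaining parameters means `?b=2&a=1` and `?a=1&b=2` collapse to the same URL, so facet order cannot create duplicates.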

Do you really need to wait without doing anything else?

"Waiting" doesn't mean staying inactive. While Google crawls, you must actively monitor: which pages are indexed first? How much time between submission and effective indexation? Are there errors in the coverage reports?

If after 7-10 days no pages beyond the homepage are indexed, that's a red flag. Whether the cause is crawl budget, content judged too thin, or missing internal linking, you need to investigate rather than keep "waiting". Prolonged inactivity on a new site can create an irreversible delay against your competition.
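The 7-10 day red flag is easy to make explicit in a monitoring script. A minimal sketch, assuming you record the submission date and the set of indexed URLs yourself (for example, from Search Console's indexing reports or URL Inspection results; no API call is shown). The default threshold is the lower end of the 7-10 day window given above, and the URLs are hypothetical.

```python
from datetime import date


def indexing_red_flag(submitted_on: date, today: date, indexed: set[str],
                      homepage: str, threshold_days: int = 7) -> bool:
    """True once `threshold_days` have passed and nothing beyond the homepage is indexed."""
    elapsed_days = (today - submitted_on).days
    beyond_home = indexed - {homepage}
    return elapsed_days >= threshold_days and not beyond_home


home = "https://example.com/"
# Eight days after submission, only the homepage is indexed: investigate.
print(indexing_red_flag(date(2023, 4, 5), date(2023, 4, 13), {home}, home))  # True
```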

Practical impact and recommendations

What exactly should you do at launch?

Start by removing any robots.txt block and checking that none of your strategic pages carries a noindex tag. Next, add and verify your property in Search Console and Bing Webmaster Tools. Once verified, use the URL Inspection tool to manually submit your homepage and at most 5-10 key pages.
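The noindex check covers two places: a robots meta tag in the HTML and an `X-Robots-Tag` HTTP header. A minimal sketch using the standard library's `html.parser`, assuming you have already fetched each page's HTML and response headers with any HTTP client (the sample markup below is hypothetical). It is deliberately conservative: a header directive prefixed with any crawler name is treated as a noindex.

```python
from html.parser import HTMLParser
from typing import Optional


class RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag: str, attrs: list[tuple[str, Optional[str]]]) -> None:
        if tag != "meta":
            return
        values = {name: (value or "") for name, value in attrs}
        if values.get("name", "").lower() in {"robots", "googlebot"}:
            self.directives.append(values.get("content", ""))


def has_noindex(html: str, headers: dict[str, str]) -> bool:
    """True if the page carries noindex (or none) in a robots meta tag or X-Robots-Tag header."""
    parser = RobotsMetaParser()
    parser.feed(html)
    sources = parser.directives + [v for k, v in headers.items() if k.lower() == "x-robots-tag"]
    for source in sources:
        for token in source.lower().split(","):
            # Header values may be prefixed with a crawler name, e.g. "googlebot: noindex".
            if token.split(":")[-1].strip() in {"noindex", "none"}:
                return True
    return False


print(has_noindex('<meta name="robots" content="noindex, follow">', {}))  # True
```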

Don't submit your entire sitemap at once. Let Google progressively discover your content via internal linking from these entry points. Configure email alerts to be notified of critical indexing errors. Wait 48-72 hours before checking server logs for the first traces of crawling.
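Finding the first traces of crawling in server logs amounts to filtering Googlebot requests and keeping the earliest hit per path. A minimal sketch, assuming the common Apache/Nginx "combined" log format and hypothetical sample lines. The user-agent string can be spoofed, so a production version should also confirm the IP with the reverse-then-forward DNS check described in Google's documentation; that step is omitted here.

```python
import re
from datetime import datetime

# Assumes the common Apache/Nginx "combined" log format.
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def first_googlebot_hits(lines: list[str]) -> dict[str, datetime]:
    """Earliest request time per path among lines whose user agent claims to be Googlebot."""
    first_seen: dict[str, datetime] = {}
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        when = datetime.strptime(match["time"], "%d/%b/%Y:%H:%M:%S %z")
        path = match["path"]
        if path not in first_seen or when < first_seen[path]:
            first_seen[path] = when
    return first_seen


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [05/Apr/2023:10:15:32 +0000] "GET / HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [05/Apr/2023:11:02:10 +0000] "GET /shoes HTTP/1.1" 200 8410 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [05/Apr/2023:09:00:00 +0000] "GET /contact HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
]
hits = first_googlebot_hits(sample_log)
for path, when in sorted(hits.items(), key=lambda item: item[1]):
    print(path, when.isoformat())  # crawl order: which pages Googlebot fetched first
```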

What errors should you absolutely avoid?

Never submit pages still under construction, with placeholder content or missing images. Google records this first quality impression and it conditions its future recrawl frequency. Another trap: submitting URLs with parameters or sessions, which pollutes the index and dilutes crawl budget from the start.
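The launch-day list can be screened for these traps before anything goes into the inspection tool. A minimal sketch, assuming a hypothetical list of candidate URLs; the limit of 10 mirrors this article's guideline and is not a Search Console quota. Placeholder content still has to be checked by eye.

```python
from urllib.parse import urlsplit

MAX_INITIAL_BATCH = 10  # this article's guideline, not a Search Console quota


def submission_problems(urls: list[str]) -> list[str]:
    """Reasons a launch-day submission list looks risky (empty list means none found)."""
    problems = []
    if len(urls) > MAX_INITIAL_BATCH:
        problems.append(f"{len(urls)} URLs: keep the first batch to {MAX_INITIAL_BATCH} or fewer")
    if len(set(urls)) != len(urls):
        problems.append("duplicate URLs in the list")
    for url in urls:
        parts = urlsplit(url)
        # Query strings and ";jsessionid=..."-style path parameters usually mean
        # tracking, sorting or session variants rather than a distinct page.
        if parts.query or ";" in parts.path:
            problems.append(f"{url}: contains parameters")
    return problems


print(submission_problems(["https://example.com/", "https://example.com/shoes?sessionid=42"]))
# ['https://example.com/shoes?sessionid=42: contains parameters']
```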

Also avoid submitting the same pages every day through the inspection tool in the hope of speeding things up. It doesn't work and can even create a negative perception, since sites that push this hard are often low-value ones. Once the initial submissions are made, trust Googlebot's natural rhythm.
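If several people handle a launch, a shared record of inspection requests prevents accidental daily re-submissions. A minimal sketch, assuming you keep that record yourself; the 7-day gap is a hypothetical value, since the article gives no figure.

```python
from datetime import date, timedelta

# Hypothetical policy value: the article does not specify a minimum gap.
MIN_RESUBMIT_GAP = timedelta(days=7)


def may_resubmit(url: str, today: date, last_submitted: dict[str, date]) -> bool:
    """Allow a new request only if the URL was never submitted or the gap has elapsed."""
    last = last_submitted.get(url)
    return last is None or today - last >= MIN_RESUBMIT_GAP


def record_submission(url: str, today: date, last_submitted: dict[str, date]) -> None:
    """Remember when this URL was last sent to the inspection tool."""
    last_submitted[url] = today


history: dict[str, date] = {}
record_submission("https://example.com/", date(2023, 4, 5), history)
print(may_resubmit("https://example.com/", date(2023, 4, 6), history))  # False: too soon
```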

- Remove all robots.txt blocking and verify that no strategic page carries a noindex tag

- Connect Search Console and Bing Webmaster Tools before any submission

- Submit only the homepage and 5-10 critical business pages via the inspection tool

- Wait 48-72 hours before checking server logs for crawl traces

- Configure Search Console alerts to monitor indexing errors

- Don't re-submit the same URLs daily — let Google work

- Complement this with an XML sitemap segmented by business priority

- Verify that your internal linking allows reaching all strategic pages from the homepage

❓ Frequently Asked Questions

How many pages exactly should you submit at launch?

Can you submit the entire XML sitemap at launch?

How long should you wait before resubmitting pages that aren't indexed?

Should you also submit in Bing Webmaster Tools?

Does this approach work for migrations of existing sites?

🎥 From the same video (10)

Other SEO insights extracted from this same Google Search Central video · published on 05/04/2023
