Official statement

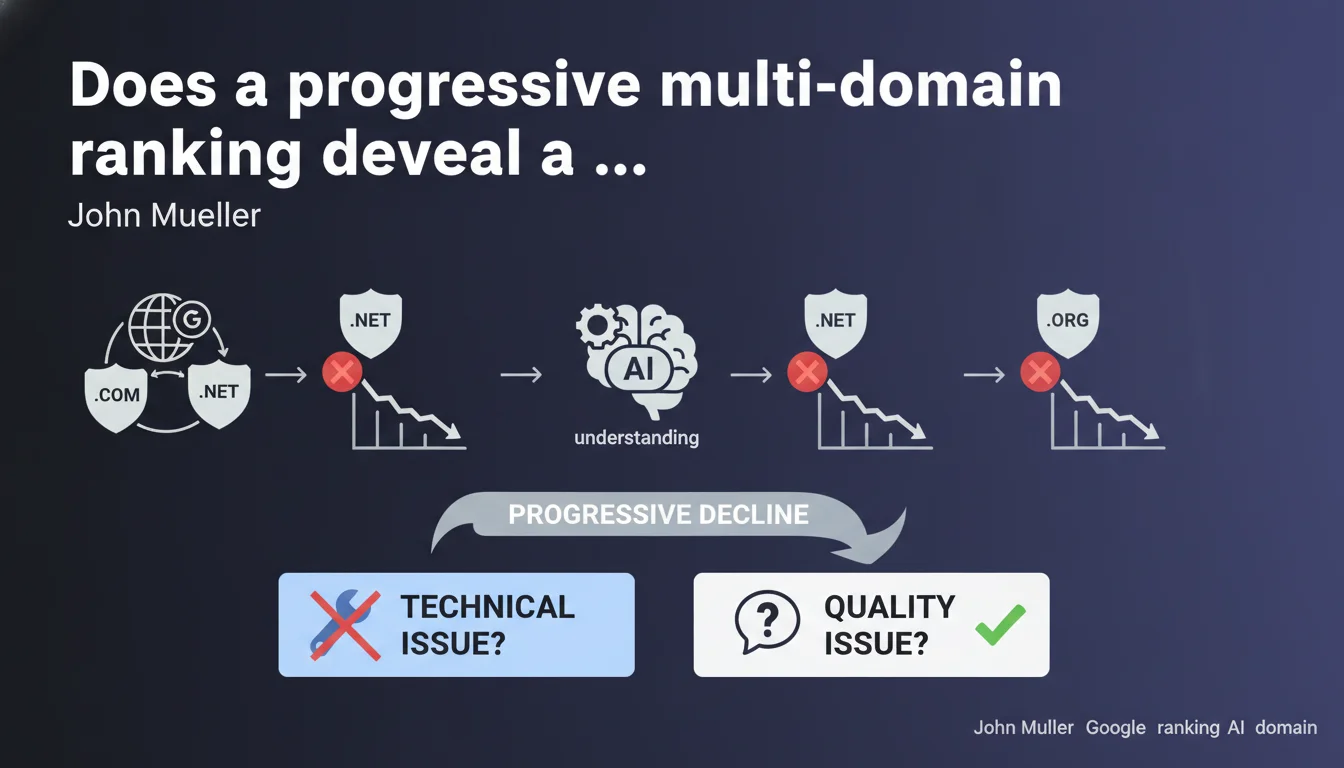

When ranking losses first appear on one TLD and then spread to other site versions, Google is signaling a fundamental content comprehension problem rather than an isolated technical issue. The search engine struggles to grasp the overall quality or relevance of your content, regardless of language or geographic version. This is a red flag requiring strategic content overhaul, not just a quick technical fix.

What you need to understand

What does a progressive decline between TLDs really mean in practice?

Picture a site existing across multiple top-level domains (.fr, .com, .de, .uk). A decline starts on .fr, then three weeks later hits .com, then .de. This pattern reveals something fundamental: Google hasn't identified a localized technical bug, but is questioning the intrinsic value of the site itself.

If the problem were technical — say, a crawl budget issue or loading speed problem — it would hit all domains simultaneously or randomly. The methodical progression between TLDs indicates that Google is progressively reevaluating its overall understanding of what the site offers, version by version.

Why does Google roll out changes in waves rather than all at once?

Google's algorithms don't process all domains at the same time. Quality updates deploy in waves, region by region, index by index. When a weak quality signal emerges, it progressively contaminates other site versions because Google starts to associate the global entity (the brand, the company) with that quality level.

This is especially visible on international sites where each TLD is initially treated as a semi-autonomous entity, then Google connects the dots and applies a consolidated evaluation. Let's be honest: if your content is mediocre in French, why would it be excellent in English with just machine translation?

How do you distinguish a quality problem from a technical one?

A technical problem strikes abruptly and uniformly: all domains lose traffic on the same day, or an entire section disappears from the index. A quality problem spreads gradually: first a few pages, then sections, then entire domains, over several weeks.

Technical issues leave clear traces in Google Search Console: mass 404 errors, crawl drops, indexation problems. Quality issues? Just progressive ranking erosion, without any obvious technical alert. That's where it gets tricky: you're hunting for a bug, but the real issue is editorial.

- Technical pattern: simultaneous decline, GSC errors, visible indexation issues

- Quality pattern: progressive multi-domain decline, no technical alert, erosion over weeks

- Key distinction: a technical fix restores traffic quickly; a quality problem requires fundamental overhaul

- GSC signals: in case of quality issues, pages remain indexed but lose positions — they're seen, just judged less relevant
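The two patterns in the list above can be told apart with a simple date comparison. The sketch below is only an illustration: the function name `classify_decline`, the two-day tolerance, and the sample dates are assumptions, not values published by Google.

```python
from datetime import date
from typing import Dict


def classify_decline(onsets: Dict[str, date], same_day_tolerance: int = 2) -> str:
    """Label a multi-TLD decline from the date each TLD started losing rankings.

    `same_day_tolerance` (in days) is an arbitrary threshold chosen for
    illustration; tune it to your own data.
    """
    if len(onsets) < 2:
        return "single-domain"
    ordered = sorted(onsets.values())
    spread_days = (ordered[-1] - ordered[0]).days
    # Declines starting within a couple of days of each other look technical;
    # declines staggered over weeks match the quality pattern.
    if spread_days <= same_day_tolerance:
        return "simultaneous"
    return "progressive"


# Hypothetical onset dates, spaced three weeks apart as in the scenario above.
example = {
    ".fr": date(2022, 3, 1),
    ".com": date(2022, 3, 22),
    ".de": date(2022, 4, 12),
}
print(classify_decline(example))  # progressive
```

A "progressive" label is only a hint to look at content quality. As discussed elsewhere in this article, some technical problems can mimic the same staggered pattern.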

SEO Expert opinion

Does Mueller's distinction between technical and quality issues really hold up?

On paper, Mueller's logic is compelling. In practice, it's more nuanced. I've seen sites with cross-domain content duplication issues experience exactly this pattern: progressive decline as Google detects duplicate versions and arbitrarily selects which to canonicalize. Technically, it's an hreflang and canonical management issue, but Google interprets it as a degraded quality signal.

Similarly, a site gradually rolling out a template redesign — say, first on .fr, then on other TLDs — can see decline spread if the redesign hurts UX or loading time. Is that technical or quality? Both, and that's precisely where Mueller's statement gets fuzzy. [To verify]: Does Google really distinguish between the two, or does it treat all UX/technical problems as degraded quality signals?

When doesn't this rule apply?

If your TLDs target radically different markets — say, .fr for fashion and .com for electronics — a decline on one likely won't affect the other. Google won't generalize a quality problem across two sites with zero shared content, even if they share the same owner.

Another case: manual penalties. If your .de gets a manual action for spam, it doesn't automatically spread to other domains. However, if Google detects a manipulation pattern (link buying, mass-generated content), it can apply a progressive algorithmic devaluation across all your properties. The line is thin.

What if Mueller's statement seems to contradict your observations?

Frankly, Google's official statements are often general guidelines, not absolute truths. If you find that a technical fix (resolving redirect chains, fixing hreflang) reverses the progressive decline, great — but that doesn't contradict Mueller.

What he's saying is that most of the time, progressive decline points to quality. That doesn't mean there are no cases where a technical problem can mimic this pattern. Test, fix, measure. And if nothing changes, it's probably that the content itself is at fault.

Practical impact and recommendations

How do you diagnose whether your decline is qualitative or technical?

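First step: Google Search Console, Coverage and Performance tabs (in current versions of Search Console, the Coverage report is labeled Page indexing). Compare decline dates across TLDs. If they're spaced days or weeks apart, you are looking at the progressive pattern, so check Core Web Vitals and indexation errors to rule out a technical cause. If everything looks green on the technical side, the problem is likely editorial.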
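To compare decline dates you first need a consistent way to decide when a decline started. Here is a minimal sketch, assuming you have exported daily clicks for one TLD from the Performance report into a plain list. The function name `decline_onset`, the 7-day window, and the 80% threshold are illustrative choices, not a standard.

```python
from statistics import mean
from typing import List, Optional


def decline_onset(clicks: List[float], window: int = 7, drop: float = 0.8) -> Optional[int]:
    """Return the index of the first day whose trailing `window`-day average
    falls below `drop` times the baseline, or None if that never happens.

    The baseline is the average of the first `window` days of the series.
    """
    if len(clicks) < 2 * window:
        return None  # not enough data for a baseline plus one full window
    baseline = mean(clicks[:window])
    for end in range(2 * window, len(clicks) + 1):
        if mean(clicks[end - window:end]) < drop * baseline:
            return end - 1
    return None


# Hypothetical series: two stable weeks at 100 clicks/day, then a drop to 60.
series = [100.0] * 14 + [60.0] * 7
print(decline_onset(series))  # 17
```

Run the same function on each TLD's series, map the returned index back to a calendar date, and compare the dates. A moving average is used so that a single bad day or a weekend dip does not register as a decline.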

Next, analyze your most-affected pages. Are they thin on content? Duplicated across domains? Stuffed with keywords without real added value? Use a tool like Screaming Frog to detect similar content across domains and verify that your hreflang tags point correctly.
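If you want a quick first pass before running a full crawl, near-duplicate text can be approximated with word shingles and Jaccard similarity. This is a generic technique, not how Screaming Frog or Google measures duplication. The function names and the shingle size of 3 are assumptions for illustration.

```python
import re
from typing import Set, Tuple


def shingles(text: str, size: int = 3) -> Set[Tuple[str, ...]]:
    """Split text into lowercase words and return the set of `size`-word sequences."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str, size: int = 3) -> float:
    """Share of word shingles that two texts have in common (0.0 to 1.0)."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 0.0  # nothing to compare
    return len(sa & sb) / len(sa | sb)


# Hypothetical page copy from two same-language domains.
page_com = "Our return policy lets you send back any item within thirty days"
page_uk = "Our return policy lets you send back any item within thirty days free of charge"
print(round(jaccard(page_com, page_uk), 2))  # 0.77
```

This only works for versions written in the same language (for example .com and .uk). Translated pages share no word sequences, so they need a manual review or a translation step before comparison.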

What actions should you prioritize?

If the diagnosis points to quality rather than a technical cause, there's no magic solution. You need to rewrite, enrich, differentiate. Start with your strategic pages, those that historically generated the most organic traffic. Add structured data, original visuals, concrete examples. As a rough rule of thumb, aim for 30-40% additional unique content, not just rephrasing.

At the same time, ensure each TLD has a localized value proposition. Simple translation isn't enough anymore. Adapt examples, currencies, cultural references. Google detects mechanically translated generic content — and gradually devalues it.

How do you prevent this problem from recurring?

Establish a recurring quality audit process. Every quarter, scrutinize your top 100 pages per TLD and verify they offer real differentiation. Monitor engagement metrics (time on page, bounce rate): if they degrade before traffic drops, that's an early warning signal.
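The early-warning idea can be sketched as a quarter-over-quarter comparison: flag pages whose engagement has dropped while traffic still looks stable. The `PageStats` fields and both thresholds below are hypothetical and should be adapted to whatever your analytics tool exports.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PageStats:
    url: str
    prev_time_on_page: float  # seconds, previous quarter
    curr_time_on_page: float  # seconds, current quarter
    prev_clicks: int
    curr_clicks: int


def early_warnings(pages: List[PageStats],
                   engagement_drop: float = 0.15,
                   traffic_drop: float = 0.05) -> List[str]:
    """Return URLs where time on page fell by at least `engagement_drop`
    while clicks have not yet fallen by `traffic_drop` or more."""
    flagged = []
    for p in pages:
        if p.prev_time_on_page <= 0 or p.prev_clicks <= 0:
            continue  # no usable baseline for this page
        engagement_change = (p.curr_time_on_page - p.prev_time_on_page) / p.prev_time_on_page
        traffic_change = (p.curr_clicks - p.prev_clicks) / p.prev_clicks
        if engagement_change <= -engagement_drop and traffic_change > -traffic_drop:
            flagged.append(p.url)
    return flagged


# Hypothetical data for three pages.
pages = [
    PageStats("/guide-a", 120.0, 90.0, 1000, 990),   # engagement down, traffic stable
    PageStats("/guide-b", 120.0, 118.0, 1000, 1010),  # nothing unusual
    PageStats("/guide-c", 120.0, 80.0, 1000, 700),   # traffic has already dropped
]
print(early_warnings(pages))  # ['/guide-a']
```

Pages like `/guide-c`, where traffic has already fallen, are deliberately excluded: they belong in the diagnosis workflow, not in the early-warning list.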

Invest in genuinely expert content, written by subject matter specialists, not generic writers or unsupervised AI. Google increasingly values E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). There is no public E-E-A-T score, but you can track it indirectly, particularly through behavioral signals.

- Compare decline dates across TLDs in GSC to identify a progressive pattern

- Audit Core Web Vitals and indexation errors to rule out technical causes

- Identify duplicated or auto-translated content across domains

- Verify hreflang and canonical tag configuration across domains

- Enrich strategic content with 30-40% additional unique material

- Truly localize each version (examples, currencies, cultural references)

- Implement quarterly editorial quality monitoring

- Track engagement metrics as leading indicators of quality problems
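For the hreflang item in the checklist above, one check that is easy to automate is reciprocity: if page X declares page Y as an alternate, page Y should declare X in return, otherwise the annotation may be ignored. The sketch below assumes you have already extracted each page's hreflang targets (for instance from a crawl export) into a dictionary. The function name and the example.* URLs are placeholders.

```python
from typing import Dict, List, Set, Tuple


def missing_return_links(hreflang: Dict[str, Set[str]]) -> List[Tuple[str, str]]:
    """Return (source, target) pairs where source lists target as an
    alternate but target does not list source back."""
    missing = []
    for source, targets in hreflang.items():
        for target in targets:
            if target == source:
                continue  # self-references are fine
            # A target that was never crawled counts as not linking back.
            if source not in hreflang.get(target, set()):
                missing.append((source, target))
    return sorted(missing)


# Hypothetical setup: the .de page forgets to reference the .com page.
declared = {
    "https://example.fr/": {"https://example.com/", "https://example.de/"},
    "https://example.com/": {"https://example.fr/", "https://example.de/"},
    "https://example.de/": {"https://example.fr/"},
}
print(missing_return_links(declared))
# [('https://example.com/', 'https://example.de/')]
```

This covers only return links. Language and region code validity and canonical conflicts still need a separate check.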

❓ Frequently Asked Questions

Can a progressive decline be caused by an isolated technical problem?

How long does it take to reverse a quality-related progressive decline?

If only one TLD is affected, is it necessarily a localized technical problem?

Can Core Updates create this progressive decline pattern?

Should you address all TLDs simultaneously or prioritize the most affected one?


Other SEO insights extracted from this same Google Search Central video · published on 08/05/2022
