Official statement

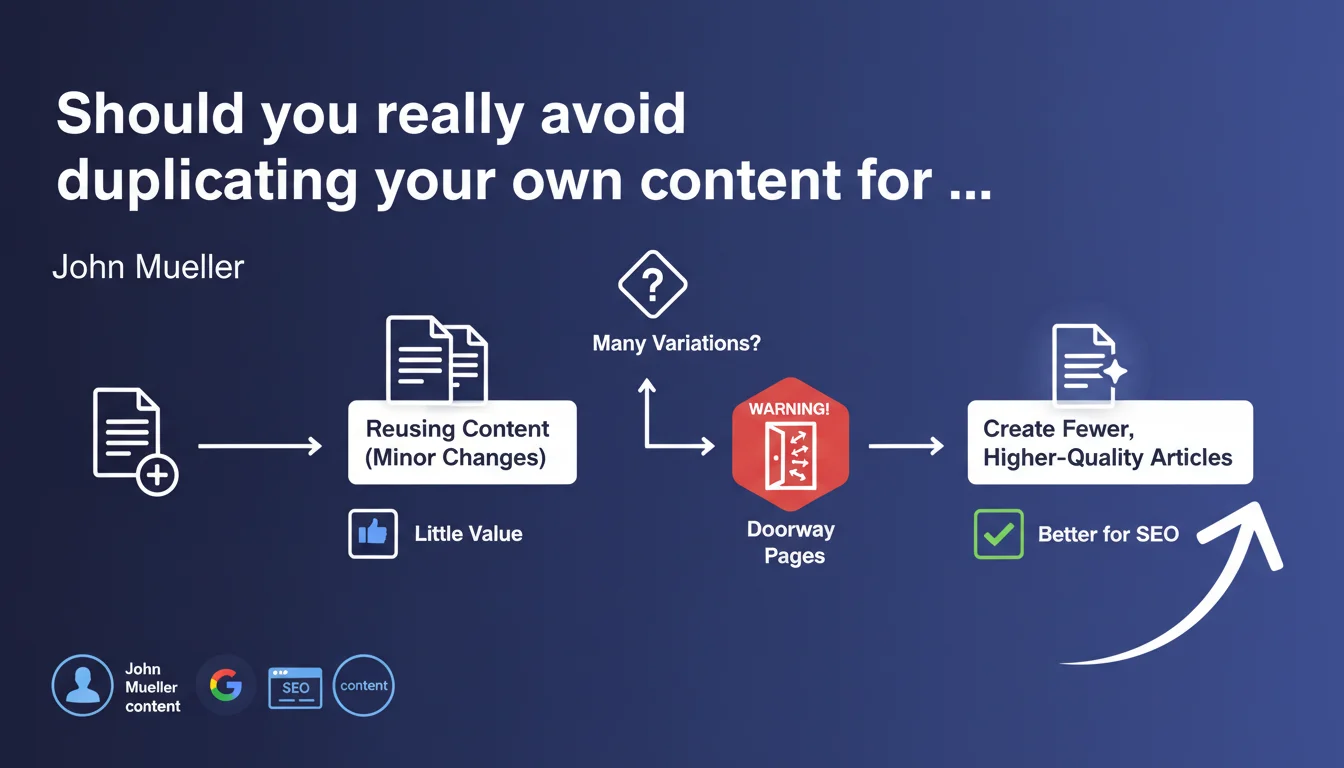

Reusing your own content with a few words changed is not against Google's rules, but it delivers almost no SEO value. It's better to focus your efforts on fewer, higher-quality articles. Warning: multiplying variations can cross into doorway page territory and trigger penalties.

What you need to understand

What exactly does Google mean by "duplicating your own content"?

We're talking about near-identical content published across multiple URLs on your own site. A typical example: the same product description with a few words changed (color, size) or a blog post spun into three versions to target three variants of the same keyword.

Google doesn't consider this plagiarism — you own the original content. But the search engine judges this approach as poor in added value: why index five pages saying the same thing?

Why does Google discourage this approach if it's not penalized?

The distinction is important. Google doesn't systematically penalize internal duplicate content, but it does filter it. In practice: only one version gets indexed and ranked, the others disappear from results or lag far behind.

The real risk? Crawl budget dilution and link equity dispersal. Your resources get spread across weak URLs instead of being concentrated on solid content.

Where's the line with doorway pages?

That's where it gets tricky. If you create many variations — for example, 50 near-identical pages each targeting a different city — Google can reclassify that as doorway pages. And then you face penalties.

The limit is fuzzy. Three variants? Probably safe. Thirty? You're playing with fire. The core question: do these pages deliver a distinct user experience or are they just gateways to capture SEO traffic?

- Duplicating your own content isn't penalized in itself, but delivers little SEO value

- Google prioritizes one URL per unique piece of content: duplicates are filtered out of the results rather than ranked separately

- Multiplying variations can cross into doorway pages and trigger manual penalties

- The boundary depends on the number of variants and their actual user value

SEO Expert opinion

Is this statement consistent with real-world practices?

Yes, and it's confirmed by daily experience. Sites that massively duplicate internal content — e-commerce with near-identical product sheets, multi-location sites with cloned pages — regularly see their URLs filtered from the index. Not a brutal penalty, but progressive invisibility.

However, the concept of "many variations" remains deliberately vague. [To verify]: Google gives no numerical threshold. Five pages? Twenty? One hundred? It is impossible to draw a clear line, and that is probably intentional, to leave room for case-by-case judgment.

What nuances should be added to this rule?

Mueller's statement implies quality over quantity, but there are real-world exceptions. Some high-authority sites do very well with slightly duplicated content — because they compensate with solid architecture and intelligent internal linking.

Another point: Mueller mentions "changing a few words," but what about structurally identical content with variable data (prices, availability, hours)? These pages are technically duplicated, but deliver genuine user value. Google generally tolerates them if the rest of the content is differentiated.

In what cases doesn't this rule really apply?

Technical sites — documentation, knowledge bases, technical sheets — often need to repeat structured information. Here, partial duplication is necessary for consistency. Google seems to accept it if the broader context differs.

Practical impact and recommendations

What should you do concretely if you've already duplicated content?

First step: audit your URLs to identify near-identical content. Use duplicate detection tools (Screaming Frog, Siteliner, or simply site: searches on Google with text snippets).
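As a rough complement to those tools, the sketch below compares page texts by word shingles and Jaccard similarity, one common way to flag near-identical content. It is only an illustration under stated assumptions: the `pages` dictionary, its URLs and texts, the shingle size, and the 0.5 threshold are all hypothetical, and nothing here reflects how Google itself measures duplication.

```python
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of word n-grams (shingles) found in a text."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.5) -> list[tuple[str, str, float]]:
    """Return URL pairs whose text similarity meets the threshold, highest first."""
    sets = {url: shingles(text) for url, text in pages.items()}
    pairs = []
    for (u1, s1), (u2, s2) in combinations(sets.items(), 2):
        score = jaccard(s1, s2)
        if score >= threshold:
            pairs.append((u1, u2, round(score, 2)))
    return sorted(pairs, key=lambda p: p[2], reverse=True)


# Hypothetical page texts, for illustration only.
pages = {
    "/shoes-red": "lightweight running shoe with breathable mesh upper and cushioned sole in red",
    "/shoes-blue": "lightweight running shoe with breathable mesh upper and cushioned sole in blue",
    "/returns": "you can return any unworn item within thirty days for a full refund",
}

for url_a, url_b, score in near_duplicates(pages):
    print(url_a, url_b, score)
```

In practice you would feed it the main body text extracted from a crawl export. Because every pair of pages is compared, this approach only suits small to medium sites.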

Next, you have four options, depending on context:

- Merge duplicated pages into one enriched version, then 301 redirect old URLs

- Genuinely differentiate each page's content (add specific information, use cases, local testimonials, etc.)

- Use the canonical tag to signal to Google which version to prioritize (but this doesn't solve the user value problem)

- Remove weak pages outright that add no value and drain crawl budget
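The merge and canonical options above can be prepared from a simple mapping of primary URLs to their duplicates. The sketch below is a minimal illustration: the `clusters` data, the example.com URLs, and both function names are hypothetical, and the actual redirect rules still have to be written in whatever syntax your server or CMS expects.

```python
from html import escape


def consolidation_plan(clusters: dict[str, list[str]]) -> dict[str, str]:
    """Map each duplicate URL to the primary URL that should replace it (301 target)."""
    redirects = {}
    for primary, duplicates in clusters.items():
        for url in duplicates:
            if url != primary:  # never redirect a page to itself
                redirects[url] = primary
    return redirects


def canonical_tag(primary_url: str) -> str:
    """Build the <link rel="canonical"> element to place in a page's <head>."""
    return f'<link rel="canonical" href="{escape(primary_url, quote=True)}">'


# Hypothetical clusters: primary URL -> near-identical variants found in the audit.
clusters = {
    "https://example.com/running-shoes": [
        "https://example.com/buy-running-shoes",
        "https://example.com/purchase-running-shoes",
    ],
}

for old_url, new_url in consolidation_plan(clusters).items():
    print(f"301: {old_url} -> {new_url}")

print(canonical_tag("https://example.com/running-shoes"))
```

Use the redirect map when you merge pages, and the canonical element only when the variants must stay online. As noted above, the canonical tag tells Google which version to prefer but does not address the user value problem.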

What mistakes must you absolutely avoid?

Don't create "cosmetic" variations just to target keyword variants. Google understands synonyms and search intent — you don't need one page for "buy running shoes" and another for "purchase running shoes".

Also avoid the internal "spinning" trap: rewriting the same content by just changing sentence structure. Google detects semantic similarity, not just identical words.

- Don't multiply pages to target minor keyword variants

- Don't rely on the canonical tag to "fix" a massive duplicate content strategy

- Don't underestimate the risk of being reclassified as doorway pages if you exceed a certain volume

How do you verify your site is compliant after cleanup?

Run a site:yourdomain.com search on Google and browse the results. If you see nearly identical pages indexed, there's still work to do. Also check your coverage reports in Google Search Console: pages excluded with a duplicate status, such as "Duplicate without user-selected canonical" or "Duplicate, Google chose different canonical than user", are a clear signal.

Monitor Googlebot's crawl activity in your server logs, and keep an eye on your Core Web Vitals separately in Search Console. Fewer unnecessary pages means a larger share of crawl requests goes to the URLs that matter.
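To see where crawl requests actually go, you can count Googlebot hits per URL in your access logs. The sketch below assumes logs in the common "combined" format, and the sample lines are invented for illustration. It matches on the user agent string only, which can be spoofed, so treat the counts as an approximation unless you also verify the requesting IPs.

```python
import re
from collections import Counter

# Captures the request path and user agent from a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Count requests per path whose user agent string mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


# Invented sample lines, for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [08/May/2022:10:00:00 +0000] "GET /shoes-red HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [08/May/2022:10:00:05 +0000] "GET /shoes-blue HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [08/May/2022:10:00:09 +0000] "GET /shoes-red HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [08/May/2022:10:01:00 +0000] "GET /shoes-red HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

for path, count in googlebot_hits(sample_log).most_common():
    print(path, count)
```

Comparing these counts before and after the cleanup shows whether requests have shifted away from the removed or redirected variants toward your primary pages.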

❓ Frequently Asked Questions

Does Google penalize internal duplicate content?

How many variations of the same page can you create without risk?

Is the canonical tag enough to manage duplicate content?

Can you republish the same article in several sections of the site?

What exactly is a doorway page in this context?

🎥 From the same video 16

Other SEO insights extracted from this same Google Search Central video · published on 08/05/2022
