Official statement

Other statements from this video (16):

- Are JavaScript Web Components really crawlable by Google?

- Does FAQ schema markup require a strict presentation format?

- Does FAQ schema markup really guarantee that FAQ snippets appear in Google?

- Do you really need to avoid duplicating your own content for SEO?

- Why does Google penalize excessive variations of the same content?

- How can you check whether Googlebot really sees your JavaScript content?

- Does WordPress really hurt SEO compared to static HTML?

- Why aren't your pages indexed even though your site is technically flawless?

- Should you really trust rel=canonical to control indexing?

- Are backlinks pointing to 404 pages really lost for SEO?

- Does the disavow tool really erase every trace of toxic links from Google's algorithms?

- Can an SSL certificate really hurt your rankings?

- Does a gradual decline across multiple domains point to a quality problem rather than a technical one?

- Do technical SEO problems really have an immediate impact on your rankings?

- Does blocking Google Translate really affect your SEO?

- Can the notranslate meta tag really block the "Translate this page" link in Google's search results?

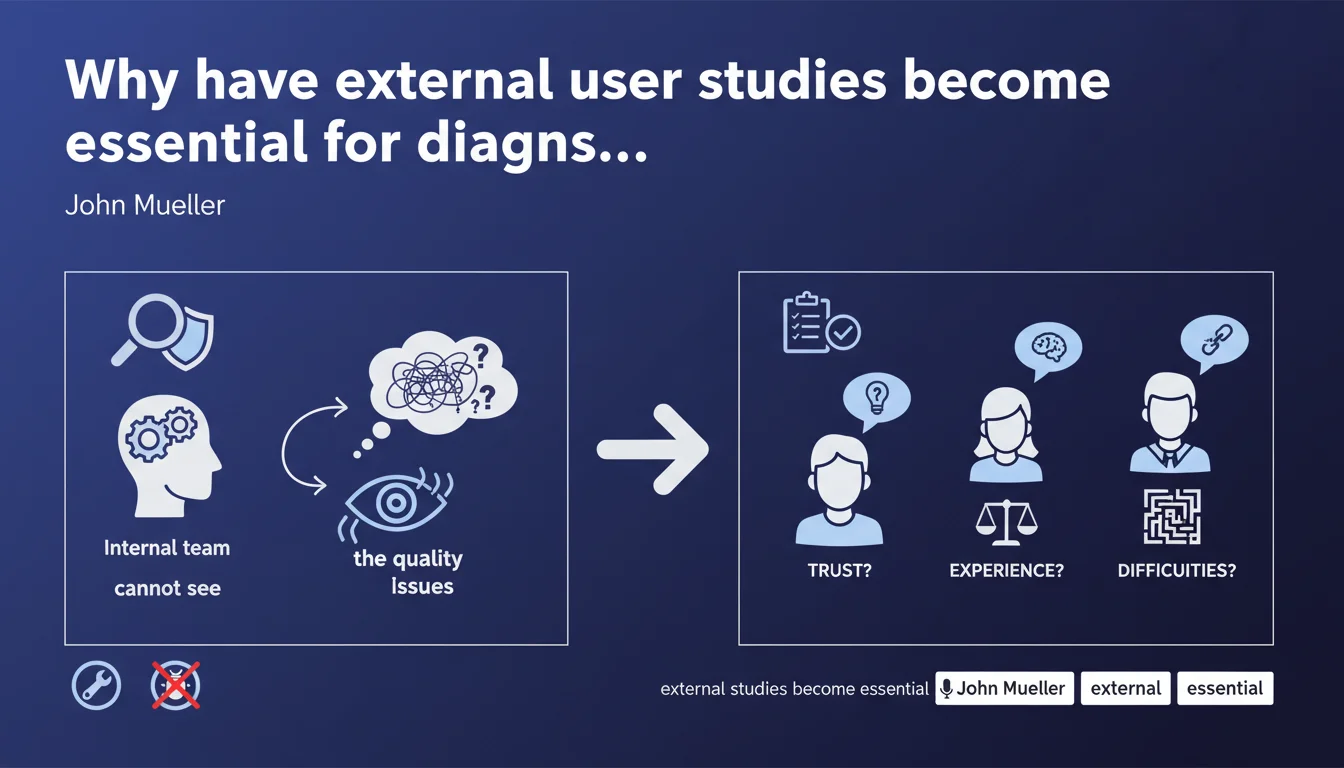

Google explicitly recommends conducting user studies with people outside your organization to diagnose quality problems. The goal: identify trust gaps, experience friction, and blockers that your internal team has become blind to. This approach becomes strategic when the Quality Rater Guidelines alone no longer explain why your site is stagnating.

What you need to understand

Why is Google pushing webmasters toward user studies?

Because self-evaluation has its limits. When you work on your own site, you gradually lose the ability to detect what's slowing down an average visitor. Cognitive biases pile up — you know where to click, you understand the jargon, you overlook interface flaws.

Many sites hit by quality-focused updates (Helpful Content, Core Updates) don't understand what went wrong, and their internal teams go in circles. Hence this recommendation: break out of your bubble and expose your site to fresh eyes.

What does "conducting user studies" specifically mean according to Google?

This is not about superficial surveys. Google names three areas to investigate: trust (does the site inspire credibility?), experience (is navigation smooth and intuitive?), and pain points (where does the user get stuck or give up?).

In practice, this means observation sessions with representative users, qualitative comparisons of two page versions (asking participants which one they trust more, and why), and semi-structured interviews. You don't need a massive budget: even 5 to 10 well-chosen people can reveal critical patterns.
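The "5 to 10 people" rule of thumb is usually traced to the problem-discovery model published by Jakob Nielsen and Tom Landauer: if a single tester runs into a given problem with probability p, then n independent testers are expected to uncover a share 1 - (1 - p)^n of the problems. The sketch below assumes p = 0.31, the average Nielsen reported across projects. Your own value will differ, and the model assumes testers behave independently and resemble your real audience.

```python
def share_of_problems_found(n_users: int, p: float = 0.31) -> float:
    """Expected share of usability problems found by n_users testers.

    Assumes (Nielsen/Landauer model) that each tester independently hits
    any given problem with probability p. The default p = 0.31 is an
    assumption borrowed from Nielsen's published average, not a constant.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    return 1.0 - (1.0 - p) ** n_users


if __name__ == "__main__":
    # Compare panel sizes under the default assumption.
    for n in (1, 3, 5, 10):
        print(f"{n:>2} users -> {share_of_problems_found(n):.0%}")
```

Under p = 0.31, five testers find roughly 84% of problems and ten find about 98%. For a rarer issue (p = 0.1), five testers find only about 41%. This is why "well-chosen" matters: a panel that doesn't match your audience lowers p for the problems your real visitors encounter.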

How is this different from the Quality Raters Guidelines?

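The QRG describe the framework Google's human quality raters use to evaluate search results. Google uses their ratings to benchmark and test its ranking systems, not to rank individual pages. But the guidelines remain abstract. Asking an external user "Does this site seem credible to you?" produces a living answer, grounded in real experience.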

The QRG describe how to assess E-E-A-T through documented criteria. User studies capture the micro-friction that the guidelines don't cover in detail: an unreadable font, an ambiguous CTA, an anxiety-inducing conversion funnel.

- Move beyond self-evaluation to counter the internal biases that blind teams

- Three key axes: trust, user experience, blocking points

- Complementary to QRG: guidelines set the direction, studies reveal invisible obstacles

- Flexible budget: 5 to 10 users are often enough to identify recurring issues

SEO Expert opinion

Is this recommendation consistent with observed algorithmic shifts?

Absolutely. Since the Helpful Content Update, Google has demoted sites that write for the algorithm rather than for humans. User studies force you to reverse the logic: start from what the visitor actually feels, not from what you think they expect.

In the field, we see that sites recovering post-penalty are those that radically rethought their UX and credibility — often after exposing their content to external beta-testers. Those that merely tweak tags or rewrite a few paragraphs stagnate.

What nuances should we add to this statement?

First nuance: Google doesn't specify what type of studies. Moderated user tests? Eye-tracking? Post-visit surveys? The methodology matters. A poor protocol (leading questions, biased panel) produces unusable results.

Second nuance: this recommendation matters most for YMYL and e-commerce sites, where trust is critical. For a niche blog with 500 visits a month, the effort can be disproportionate. [To verify]: Google doesn't indicate a traffic threshold or site type at which this approach becomes cost-effective.

In what cases does this approach show its limits?

User studies reveal UX and perception issues, not technical flaws that only Google sees. If your site has a crawl budget problem, canonicalization issues, or broken structured data, your user panel will never detect it.

Another limit: users struggle to verbalize certain E-E-A-T criteria. They might find a site "sketchy" without knowing why — yet isolating the exact cause (missing author? outdated design? invasive ads?) requires rigorous post-study analysis.

Practical impact and recommendations

What do you need to do concretely to launch effective user studies?

First, define a precise objective. "Improve quality" is too vague. Better to target: "Understand why visitors leave the product page without adding to cart" or "Identify what undermines the credibility of our blog articles".

Next, recruit a representative panel: not your colleagues, not your family. Use platforms such as UserTesting or Testapic, or recruit through relevant Facebook groups. Five to 10 people are enough for a first iteration.

Typical protocol: observe the user navigating your site (screen share), ask open-ended questions ("What's your first impression of this page?", "Would you feel comfortable buying here?"), note hesitations, misclicks, spontaneous comments.

What mistakes should you avoid when implementing?

Mistake #1: asking leading questions. "Does this site seem professional to you?" pushes toward yes. Prefer: "What strikes you most when you land on this page?"

Mistake #2: testing only the homepage. Product pages, blog articles, category pages deserve equal attention — they're often where abandonments happen.

Mistake #3: not cross-referencing user feedback with analytics data. If 3 out of 5 people say the menu is confusing, but your bounce rate is low, dig deeper: maybe visitors adapt anyway, or your panel wasn't representative.

How do you ensure insights translate into SEO gains?

User studies reveal improvement areas — but you must then prioritize. Rank problems by potential impact: a credibility flaw on a YMYL page comes before a misplaced button on a contact page.
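One way to make "rank by potential impact" explicit is a simple score that combines severity, the share of the panel affected, and whether the page is YMYL. The severity scale, the field names, and the 2x YMYL multiplier below are hypothetical choices for illustration, not anything Google publishes. Adjust them to your own site.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    description: str
    is_ymyl: bool        # page deals with health, money, safety, etc.
    severity: int        # hypothetical scale: 1 = cosmetic, 2 = friction, 3 = trust breaker or blocker
    users_affected: int  # panel members who ran into the issue
    panel_size: int

    def score(self) -> float:
        """Hypothetical impact score: severity x share of panel affected x YMYL weight."""
        frequency = self.users_affected / self.panel_size
        ymyl_weight = 2.0 if self.is_ymyl else 1.0  # assumed weighting, tune to your site
        return self.severity * frequency * ymyl_weight


def prioritize(findings: list[Finding]) -> list[Finding]:
    """Return findings sorted from highest to lowest impact score."""
    return sorted(findings, key=lambda f: f.score(), reverse=True)


if __name__ == "__main__":
    # Made-up backlog from an 8-person panel, for illustration only.
    backlog = [
        Finding("Contact button placed below the fold", False, 1, 4, 8),
        Finding("No author or credentials on a health article", True, 3, 5, 8),
        Finding("Shipping costs only shown at checkout", False, 2, 6, 8),
    ]
    for f in prioritize(backlog):
        print(f"{f.score():.2f}  {f.description}")
```

With these made-up numbers, the missing author on the health article ranks first, ahead of the shipping-cost surprise and the misplaced contact button. That matches the ordering described above.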

Document everything. Create a structured report with screenshots, verbatim quotes, and quantified findings ("7 out of 10 users didn't see the article author"). This report becomes crucial leverage for convincing leadership to invest in a redesign.
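Quantified findings like "7 out of 10 users" can be tallied straight from session notes. The sketch below assumes a hypothetical note format: one set of issue labels per participant. Using a set means a participant who hits the same issue twice is still counted once.

```python
from collections import Counter


def summarize_findings(sessions: list[set[str]]) -> list[str]:
    """Turn each participant's set of observed issues into
    'k out of n users: issue' lines, most frequent first."""
    n = len(sessions)
    # One set per participant: each person counts at most once per issue.
    counts = Counter(issue for issues in sessions for issue in issues)
    # Sort by frequency (descending), then alphabetically for a stable report.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [f"{k} out of {n} users: {issue}" for issue, k in ranked]


if __name__ == "__main__":
    notes = [
        {"did not see the article author", "found the menu confusing"},
        {"did not see the article author"},
        {"found the menu confusing"},
    ]
    for line in summarize_findings(notes):
        print(line)
```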

Finally, measure the effect of corrections. Roll out changes in waves, track Core Web Vitals evolution, time on page, conversion rate. Retest with a fresh panel 3 months later to validate hypotheses.
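To check whether a change in conversion rate after a fix is more than random noise, a common tool is the two-proportion z-test. The sketch below uses only the standard library, and the sample figures are invented. A before/after comparison is not a controlled experiment. Seasonality, algorithm updates, and shifts in traffic mix can also move the numbers, so treat a low p-value as a signal worth investigating, not proof that the UX change caused the lift.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) comparing conversion rate B against A.

    conv_* are conversions, n_* are sessions (or visitors) in each period.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both periods convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test: pooled rate is 0 or 1")
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Invented figures: 2.0% conversion before the fix, 2.6% after.
    z, p = two_proportion_z_test(200, 10_000, 260, 10_000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```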

- Recruit an external representative panel (minimum 5 to 10 people)

- Define precise objectives (trust, UX, friction points)

- Observe without influencing: open questions, no leading suggestions

- Test multiple page types (not just the homepage)

- Cross-reference user feedback with analytics data

- Prioritize corrections by their E-E-A-T and conversion impact

- Document insights in an actionable report

- Measure the effect of optimizations post-deployment

❓ Frequently Asked Questions

Do user studies replace a technical SEO audit?

How many people do you need to interview to get reliable results?

Can you run these studies in-house, or should you outsource them?

How do you know whether the problems you detect actually affect SEO?

Is this approach relevant for every type of site?


Other SEO insights extracted from this same Google Search Central video · published on 08/05/2022
