Official statement

Other statements from this video (16):

- Are JavaScript Web Components really crawlable by Google?

- Does FAQ Schema markup require a strict presentation format?

- Does FAQ Schema markup really guarantee that FAQ snippets appear in Google?

- Should you really avoid duplicating your own content for SEO?

- Why does Google penalize excessive variations of the same content?

- How can you check whether Googlebot actually sees your JavaScript content?

- Does WordPress really hurt SEO compared to static HTML?

- Why have external user studies become essential for solving quality problems?

- Should you really rely on rel=canonical to control indexing?

- Are backlinks pointing to 404 pages really lost for SEO?

- Does the disavow tool really erase every trace of toxic links from Google's algorithms?

- Can an SSL certificate really hurt your rankings?

- Does a gradual decline across multiple domains point to a quality problem rather than a technical one?

- Do technical SEO problems really have an immediate impact on your rankings?

- Does blocking Google Translate really affect your SEO?

- Can the notranslate meta tag really block the "Translate this page" link in Google's SERPs?

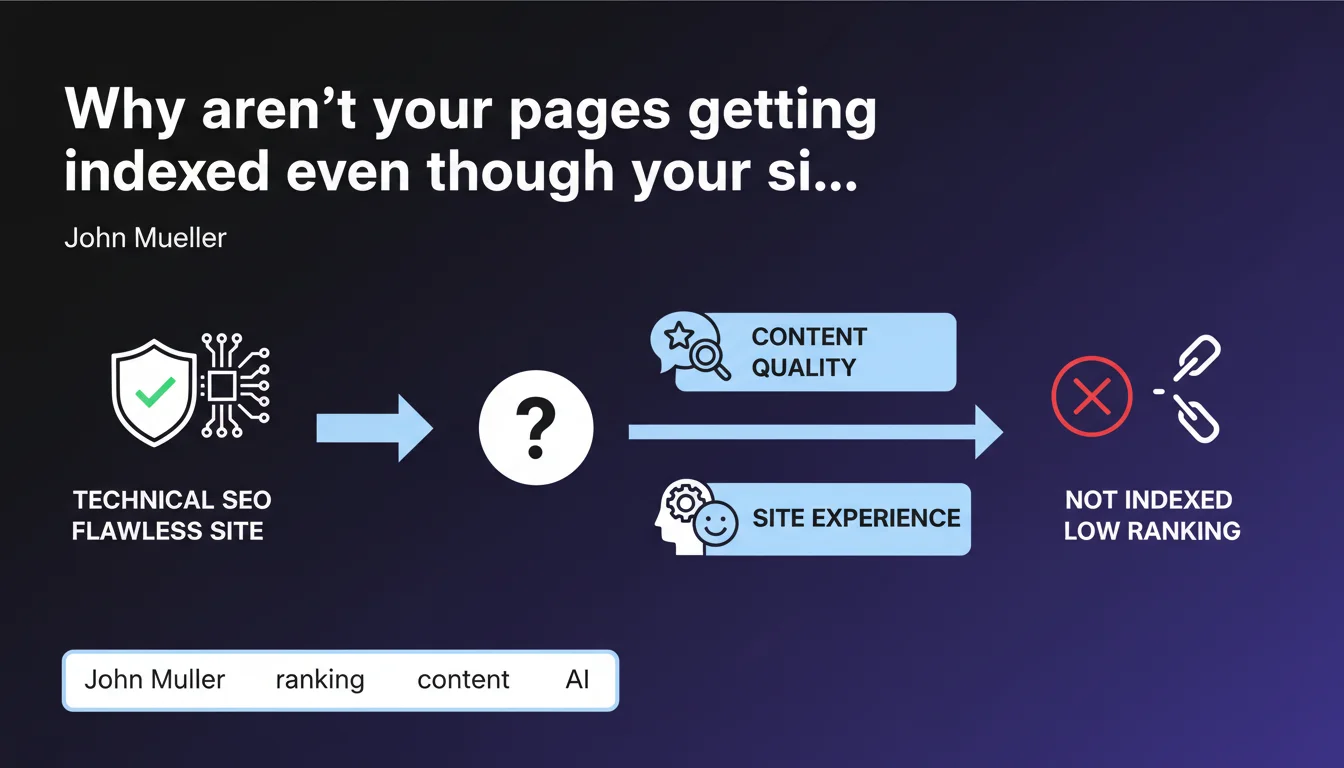

When your technical infrastructure is solid but certain pages aren't indexing or ranking well, Google points the finger at perceived content quality and overall site experience. The message is clear: stop looking for problems in robots.txt or sitemaps — it's your content that isn't meeting the bar.

What you need to understand

What does Google really mean by "perceived content quality"?

Google isn't talking about spelling mistakes or awkward phrasing here. Perceived quality refers to a page's ability to effectively answer the search intent, provide added value compared to what already exists, and engage the user.

In concrete terms, a page can be perfectly written but judged "low quality" if it simply reproduces what 50 other sites already say without adding anything new. Behavioral signals such as time on page, bounce rate, and interactions are often cited as well, but Google has not confirmed that it uses them, let alone how much they weigh.
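One rough way to check whether a page "simply reproduces" what already exists is to measure its textual overlap with competing pages. The sketch below uses word shingles and Jaccard similarity, a standard near-duplicate technique. It is an illustrative assumption, not a description of how Google scores quality, and the function names are hypothetical.

```python
import re


def shingles(text: str, size: int = 5) -> set[tuple[str, ...]]:
    """Split text into lowercase words and return every run of `size` consecutive words."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        # Too short for a full shingle: treat the whole text as one shingle
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles (0.0 = disjoint, 1.0 = identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def max_overlap(page: str, competitors: list[str], size: int = 5) -> float:
    """Highest similarity between one page and any competitor page (0.0 if none given)."""
    own = shingles(page, size)
    return max((jaccard(own, shingles(c, size)) for c in competitors), default=0.0)


mine = "Our guide explains how to reset a router in five easy steps"
rival = "This guide explains how to reset a router in five easy steps today"
print(round(max_overlap(mine, [rival], size=3), 2))  # → 0.75
```

A high score only shows that the wording is close. A low score does not prove added value, which is what Google says it evaluates.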

Has technical infrastructure become secondary?

No. What Mueller is implying is that in the majority of diagnosed cases, the problem isn't there. If your site is crawlable, canonical tags are clean, and your XML sitemap is correct, continuing to hunt for a hypothetical technical bug is a waste of time.

That said, "correct technical aspects" remains a vague notion. What counts as "correct" in Google's eyes? A load time of 2 seconds or 4? Core Web Vitals rated "needs improvement" or "good"? This statement assumes an exhaustive technical diagnosis has already been done, which in reality isn't always the case.
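Google does publish numeric bands for Core Web Vitals, assessed at the 75th percentile of real-user page loads. What it does not say is which band counts as "correct" for this statement. The sketch below rates field samples against those bands. The thresholds are Google's published values (INP replaced FID as a Core Web Vital in March 2024, after this video was published). The nearest-rank percentile and the helper names are simplifying assumptions.

```python
import math

# Google's published bands: (max value for "good", max value for "needs improvement").
# Anything above the second value is rated "poor".
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # Largest Contentful Paint, seconds
    "INP": (200, 500),   # Interaction to Next Paint, milliseconds
    "CLS": (0.1, 0.25),  # Cumulative Layout Shift, unitless
}


def percentile_75(samples: list[float]) -> float:
    """Nearest-rank 75th percentile: an approximation of how field data is summarized."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric: str, value: float) -> str:
    """Place one value into the good / needs improvement / poor bands."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


def assess(field_samples: dict[str, list[float]]) -> dict[str, str]:
    """Rate each metric at the 75th percentile of its real-user samples."""
    return {metric: rate(metric, percentile_75(values)) for metric, values in field_samples.items()}


print(assess({"LCP": [1.8, 2.1, 3.2, 5.0], "CLS": [0.02, 0.05, 0.08, 0.3]}))
```

Using field samples (Real User Monitoring or CrUX) rather than a single lab run matters here, because the bands are defined on real-user distributions.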

What does Google mean by "overall site experience"?

Overall experience goes well beyond text content. It encompasses navigation, architecture clarity, perceived speed, absence of aggressive ads, mobile readability, and editorial consistency.

A site that multiplies intrusive pop-ups, drowns users in contradictory call-to-action buttons, or has chaotic internal linking will be penalized — even if each page taken in isolation is "high quality." Google evaluates the context in which content is served, not just the content itself.

- Perceived quality ≠ writing quality: it's about added value versus the competition and alignment with search intent.

- "Correct" technical is a fuzzy concept: Google publishes Core Web Vitals thresholds, but doesn't say what level of speed, Core Web Vitals, or structure satisfies this criterion.

- Overall experience matters as much as content: navigation, architecture, mobile UX, and a friction-free experience are scrutinized.

- Behavioral signals may play a role: Google doesn't say so explicitly, but many SEOs assume bounce rate and engagement influence quality perception.

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. On the "yes" side: we do see technically impeccable sites struggle to rank when their content is generic, duplicated, or mass-produced. Google's algorithms — especially the Helpful Content Update — explicitly target weak or churned-out content.

On the "no" side: saying "the problem usually comes from quality" dismisses some more complex edge cases. Sites can suffer algorithmically from subtle technical patterns, such as poorly managed pagination, incorrectly indexed e-commerce facets, or cross-domain duplication, that standard tools don't always catch. [Needs verification]: how many sites diagnosed with "quality issues" actually had an unidentified technical problem?
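Incorrectly indexed facets often show up as one path multiplied by many query-string variants. A minimal sketch, assuming you already have a list of crawled or indexed URLs (from logs, a crawler export, or Search Console): group URLs by path and flag paths with an unusually high number of parameter combinations. The limit of 10 variants and the example domain are arbitrary assumptions to adapt per site.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlsplit


def parameter_variants(urls: list[str]) -> dict[str, set]:
    """Map scheme://host/path to the set of distinct query-parameter combinations seen."""
    groups: dict[str, set] = defaultdict(set)
    for url in urls:
        parts = urlsplit(url)
        base = f"{parts.scheme}://{parts.netloc}{parts.path}"
        # Sort parameters so ?color=red&size=9 and ?size=9&color=red count as one variant
        params = tuple(sorted(parse_qsl(parts.query, keep_blank_values=True)))
        groups[base].add(params)
    return groups


def flag_facet_explosion(urls: list[str], max_variants: int = 10) -> dict[str, int]:
    """Return paths whose number of parameter variants exceeds max_variants."""
    return {
        base: len(variants)
        for base, variants in parameter_variants(urls).items()
        if len(variants) > max_variants
    }


crawled = [f"https://shop.example/shoes?color=c{i}&sort=price" for i in range(12)]
crawled.append("https://shop.example/about")
print(flag_facet_explosion(crawled))  # → {'https://shop.example/shoes': 12}
```

A flagged path is only a lead: the next step is checking whether those variants are canonicalized, blocked, or genuinely worth indexing.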

What nuances should be added to this claim?

Mueller is describing a diagnostic process of elimination: when the technical side is fine, the problem is the content. The catch: who defines "technically fine"? Google doesn't publish an official audit checklist, and a "standard" technical audit doesn't necessarily cover every signal Google observes, such as Real User Monitoring metrics, security signals, or anti-spam filters that may be applied discreetly.

Another nuance: this statement assumes indexation and ranking follow the same logic. Yet, a page can be indexed but invisible due to a domain-level perceived E-E-A-T problem, independent of the page's intrinsic quality. Google is mixing two distinct issues here.

In what cases doesn't this rule apply?

Some industries — large-scale e-commerce, multi-author news sites, content aggregators — can encounter structural indexation problems unrelated to content quality. Poor crawl budget allocation, overly deep site architecture, or misconfigured URL parameters can block the indexation of otherwise useful pages.
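"Overly deep" architecture can be measured as click depth: the minimum number of internal links needed to reach a page from the homepage. A minimal sketch, assuming you have an internal link graph from a crawler export. The page paths are hypothetical, and the depth limit of 3 is a common rule of thumb rather than a Google threshold. A breadth-first search gives each page's shortest depth, and pages it never reaches are orphans.

```python
from collections import deque


def click_depth(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage; BFS visits each page first by its shortest path."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # first visit is the shortest click path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def audit_depth(
    links: dict[str, list[str]], home: str, all_pages: set[str], max_depth: int = 3
) -> tuple[list[str], list[str]]:
    """Return (pages deeper than max_depth, known pages unreachable from home)."""
    depth = click_depth(links, home)
    too_deep = sorted(p for p, d in depth.items() if d > max_depth)
    orphans = sorted(p for p in all_pages if p not in depth)
    return too_deep, orphans


site = {
    "/": ["/shoes"],
    "/shoes": ["/shoes?page=2"],
    "/shoes?page=2": ["/shoes?page=3"],
    "/shoes?page=3": ["/shoes/trail-runner"],
}
pages = {"/", "/shoes", "/shoes/trail-runner", "/old-landing"}
print(audit_depth(site, "/", pages))  # → (['/shoes/trail-runner'], ['/old-landing'])
```

In this toy graph the product page is only reachable through a pagination chain, which is exactly the kind of structure that can delay indexing of otherwise useful pages.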

Similarly, multilingual or multi-regional sites can suffer from issues tied to hreflang, canonical tags, or server geolocation — technical topics that Google sometimes downplays in public communications. In these contexts, the knee-jerk reaction of "it must be the content" is a dead end.
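One hreflang check that is easy to automate is reciprocity: Google's documentation requires that if page A lists page B as an alternate, B must list A back, otherwise the annotations can be ignored. A minimal sketch, assuming the annotations have already been extracted into a dictionary (the URLs and the function name are hypothetical):

```python
def missing_return_links(annotations: dict[str, dict[str, str]]) -> list[tuple[str, str]]:
    """annotations maps each page URL to its {hreflang code: alternate URL} entries.

    Returns (page, alternate) pairs where the alternate does not link back to the page.
    """
    problems = []
    for page, alternates in annotations.items():
        for alternate in alternates.values():
            if alternate == page:
                continue  # self-referencing entry, nothing to reciprocate
            linked_back = annotations.get(alternate, {}).values()
            if page not in linked_back:
                problems.append((page, alternate))
    return problems


hreflang = {
    "https://example.com/en/": {"en": "https://example.com/en/", "fr": "https://example.com/fr/"},
    "https://example.com/fr/": {"fr": "https://example.com/fr/"},  # no "en" return link
}
print(missing_return_links(hreflang))
# → [('https://example.com/en/', 'https://example.com/fr/')]
```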

Practical impact and recommendations

What should you do concretely if your pages aren't indexing?

Start by exhaustively validating the technical layer. Don't just say "it looks right": audit crawling via server logs, verify consistency of robots.txt, canonical, and meta robots directives, test real-world speed (not just Lighthouse scores), and track JavaScript errors that block rendering.
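Auditing crawling via server logs can start as simply as counting Googlebot requests per URL. A minimal sketch, assuming access logs in the common Apache/Nginx "combined" format. Filtering on the user-agent string alone is a simplification, since it can be spoofed; Google documents a reverse-then-forward DNS lookup for verifying that a request really comes from Googlebot. The helper names and sample lines are hypothetical.

```python
import re
from collections import Counter

# "combined" log format: ip ident user [time] "request" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines: list[str]) -> tuple[Counter, Counter]:
    """Count requests per path and per status code for lines whose user-agent mentions Googlebot."""
    paths, statuses = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue  # unparseable line or non-Googlebot visitor
        paths[match["path"]] += 1
        statuses[match["status"]] += 1
    return paths, statuses


def never_crawled(lines: list[str], target_paths: list[str]) -> list[str]:
    """Target pages (e.g. those that won't index) with zero Googlebot requests in the logs."""
    paths, _ = googlebot_hits(lines)
    return sorted(p for p in target_paths if paths[p] == 0)


sample = [
    '66.249.66.1 - - [08/May/2022:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [08/May/2022:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
print(never_crawled(sample, ["/guide", "/pricing"]))  # → ['/pricing']
```

If a page that won't index never appears in the Googlebot counts, the problem is upstream of content quality (discovery, linking, or crawl allocation).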

If truly nothing surfaces, only then pivot to content. Analyze your competitors' indexed pages: what do they offer that you don't? What formats are they using (video, infographics, data-backed insights)? Does your content address a clear search intent or does it try to be everything to everyone?
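Part of "what do they offer that you don't?" can be approximated by comparing the subtopics competitors cover. A crude sketch, assuming you have saved the HTML of your page and of a few well-ranking competitor pages: extract `<h2>`/`<h3>` headings and list those that appear on at least two competitor pages but not on yours. Exact heading matching is a strong simplification (real comparisons usually need manual or semantic grouping), and the helper names are hypothetical.

```python
from collections import Counter
from html.parser import HTMLParser


class HeadingParser(HTMLParser):
    """Collects the normalized text of every <h2> and <h3> element."""

    def __init__(self) -> None:
        super().__init__()
        self.headings: list[str] = []
        self._inside = False
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._inside = True
            self._buffer = []

    def handle_endtag(self, tag):
        if tag in ("h2", "h3") and self._inside:
            # Collapse whitespace and lowercase so "Setup  Guide" matches "setup guide"
            text = " ".join("".join(self._buffer).split()).lower()
            if text:
                self.headings.append(text)
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self._buffer.append(data)


def headings(html: str) -> set[str]:
    parser = HeadingParser()
    parser.feed(html)
    return set(parser.headings)


def topic_gaps(own_html: str, competitor_html: list[str], min_pages: int = 2) -> list[str]:
    """Headings found on at least `min_pages` competitor pages but missing from yours."""
    counts = Counter()
    for html in competitor_html:
        counts.update(headings(html))  # a set, so each page counts a heading once
    own = headings(own_html)
    return sorted(h for h, n in counts.items() if n >= min_pages and h not in own)


mine = "<h2>Pricing</h2>"
competitors = [
    "<h2>Pricing</h2><h2>Setup guide</h2>",
    "<h3>Setup  Guide</h3><h2>FAQ</h2>",
]
print(topic_gaps(mine, competitors))  # → ['setup guide']
```

The output is a list of questions to investigate, not a list of sections to copy: matching competitors' outlines without adding anything is the very pattern the statement warns about.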

What errors should you avoid in diagnosis?

Don't fall into the "content-as-alibi" trap: adding 500 words of filler to a page that had 300 won't help if those 500 words add no value. Google doesn't count words; it evaluates relevance and depth of treatment.

Also avoid neglecting UX signals: a page can be excellent in substance but penalized if it's drowning in ads, if its CLS is catastrophic, or if internal linking doesn't showcase it properly. Overall experience includes how you present the content.

- Audit server logs to confirm Google is actually crawling the pages in question

- Verify consistency of indexation directives (robots.txt, meta robots, canonical, sitemap)

- Test JavaScript rendering as Google sees it with the URL Inspection tool in Search Console

- Analyze Core Web Vitals under real conditions (Real User Monitoring), not just lab tests

- Compare your page content with well-ranking competitors: what do they offer that you don't?

- Evaluate overall experience: navigation, internal linking, ad intrusiveness, mobile readability

- Identify domain-level E-E-A-T signals: external mentions, identified authors, editorial consistency
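The directive-consistency item in the checklist above can be partly automated. A minimal sketch using only Python's standard library: `urllib.robotparser` evaluates robots.txt for the Googlebot user-agent, and a small HTML parser reads meta robots and the canonical link. The `check_page` helper and its messages are hypothetical. Python's parser uses simple prefix matching and does not reproduce every detail of Google's robots.txt handling (such as `*` and `$` wildcards), and comparing canonicals by exact string is a simplification: relative URLs or trailing-slash differences would need normalizing first.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class DirectiveParser(HTMLParser):
    """Collects meta robots tokens and the rel=canonical href from a page."""

    def __init__(self) -> None:
        super().__init__()
        self.robots_tokens: list[str] = []
        self.canonical = None  # href of <link rel="canonical">, if any

    def handle_starttag(self, tag, attrs):
        values = {name: (value or "") for name, value in attrs}
        if tag == "meta" and values.get("name", "").lower() in ("robots", "googlebot"):
            tokens = values.get("content", "").split(",")
            self.robots_tokens.extend(t.strip().lower() for t in tokens)
        elif tag == "link" and "canonical" in values.get("rel", "").lower().split():
            self.canonical = values.get("href")


def check_page(url: str, html: str, robots_txt: str, in_sitemap: bool = True) -> list[str]:
    """List inconsistencies between robots.txt, meta robots, canonical and sitemap for one URL."""
    issues = []
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    if not robots.can_fetch("Googlebot", url):
        issues.append("blocked by robots.txt")
    parser = DirectiveParser()
    parser.feed(html)
    if {"noindex", "none"} & set(parser.robots_tokens):
        issues.append("meta robots noindex")
    if parser.canonical and parser.canonical != url:
        issues.append(f"canonical points elsewhere: {parser.canonical}")
    if in_sitemap and issues:
        issues.append("listed in the sitemap despite the signals above")
    return issues


robots_txt = "User-agent: *\nDisallow: /private/"
messy = (
    '<head><meta name="robots" content="noindex, follow">'
    '<link rel="canonical" href="https://example.com/other"></head>'
)
print(check_page("https://example.com/private/report", messy, robots_txt))
```

Note that a URL blocked by robots.txt is never fetched, so Googlebot cannot even see its noindex tag: conflicting signals like these are worth resolving before concluding that content is the problem.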

How can you ensure your diagnosis is complete?

A thorough SEO diagnosis requires crossing multiple analysis layers — technical, semantic, UX, authority — and not stopping at the first identified symptom. Many indexation problems actually result from a cumulative effect of micro-defects that, in isolation, seem minor.

❓ Frequently Asked Questions

Can a technically perfect page really fail to be indexed because of its quality?

How can I tell whether my problem is technical or content-related?

What does Google mean by "overall site experience"?

Are Core Web Vitals enough to validate the technical side?

Should you neglect the technical side and focus solely on content?


Other SEO insights extracted from this same Google Search Central video · published on 08/05/2022
