Official statement

Other statements from this video 16 ▾

- □ Les Web Components JavaScript sont-ils vraiment crawlables par Google ?

- □ Le balisage FAQ Schema impose-t-il un format strict de présentation ?

- □ Le balisage FAQ Schema garantit-il vraiment l'affichage des FAQ snippets dans Google ?

- □ Faut-il vraiment éviter de dupliquer son propre contenu pour le SEO ?

- □ Pourquoi Google pénalise-t-il les variations excessives d'un même contenu ?

- □ Comment vérifier si Googlebot voit vraiment votre contenu JavaScript ?

- □ WordPress pénalise-t-il vraiment le référencement par rapport au HTML statique ?

- □ Pourquoi vos pages ne sont-elles pas indexées malgré un site techniquement irréprochable ?

- □ Pourquoi les études utilisateurs externes sont-elles devenues incontournables pour résoudre les problèmes de qualité ?

- □ Faut-il vraiment faire confiance au rel=canonical pour contrôler l'indexation ?

- □ Les backlinks vers des 404 sont-ils vraiment perdus pour le SEO ?

- □ Le disavow tool efface-t-il vraiment toute trace des liens toxiques dans les algorithmes Google ?

- □ Un certificat SSL peut-il vraiment pénaliser votre référencement ?

- □ Une baisse progressive multi-domaines révèle-t-elle un problème de qualité plutôt que technique ?

- □ Bloquer Google Translate impacte-t-il vraiment votre référencement ?

- □ La balise meta notranslate peut-elle vraiment bloquer le lien « Traduire cette page » dans les SERP Google ?

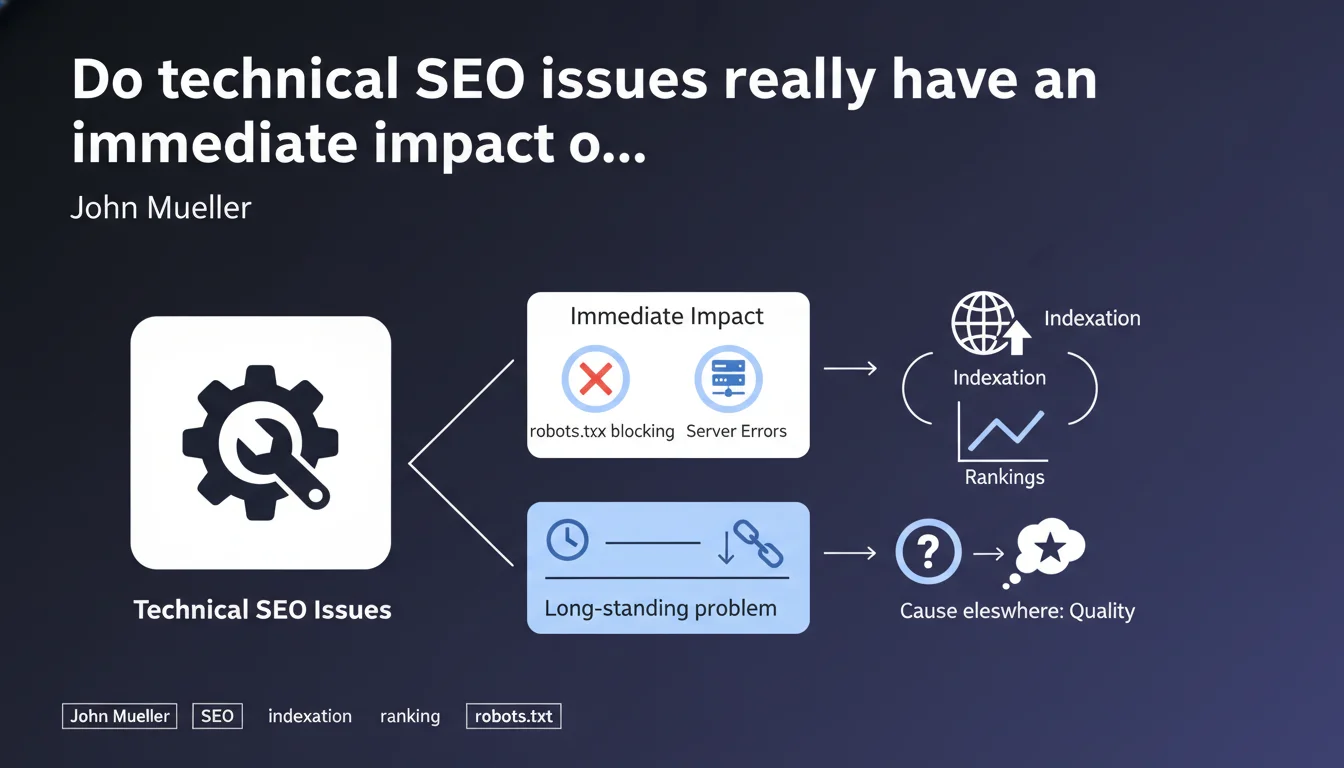

Real technical blockers (robots.txt, server errors) impact indexation instantly. If a technical issue has existed for months without visible consequences and traffic suddenly drops, look toward content quality rather than technical factors. Google clearly distinguishes between resource access problems and relevance problems.

What you need to understand

What are the "real" technical issues according to Google?

Mueller points the finger at access blockers and critical server errors. We're talking about a misconfigured robots.txt that prevents Googlebot from accessing entire sections, repeated HTTP 500 errors, or chronic server timeouts.

These issues physically prevent Google from crawling and indexing your pages. The effect is mechanical and immediate — no crawl, no indexation, no visibility. It's binary.
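To make the robots.txt case concrete, here is a minimal sketch that checks whether a given robots.txt would block a URL for Googlebot, using Python's standard `urllib.robotparser`. The robots.txt content, the URLs, and the helper name `is_blocked` are hypothetical. The standard-library parser also does not replicate every detail of Google's own matching (wildcard patterns, for instance), so treat the result as a first check rather than a verdict.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the robots.txt content disallows `url` for `user_agent`."""
    parser = RobotFileParser()
    # parse() takes the file as a list of lines, so no network access is needed.
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that accidentally blocks an entire section.
ROBOTS = """\
User-agent: *
Disallow: /blog/
"""

print(is_blocked(ROBOTS, "https://example.com/blog/post-1"))  # True
print(is_blocked(ROBOTS, "https://example.com/about"))        # False
```

Running this against the robots.txt from the last few deployments is a quick way to see whether a rule change coincides with the drop.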

Why this distinction between technical and quality?

Many SEOs look for technical culprits when traffic drops. It's reassuring — a technical issue can be fixed, unlike content that has become outdated or surpassed by competitors.

Mueller reminds us here that if your site has been functioning correctly for months and Google crawls it without issue, a sudden drop is probably not technical. Quality algorithms (Helpful Content, Core Updates) then intervene, and that's a completely different battle.

What is the timeframe of a real technical issue?

The impact is almost instantaneous. A blocking robots.txt deployed by mistake? Your pages start dropping out of the index within a few days at most. A server that regularly crashes? Google quickly slows down its crawling.

Conversely, if your supposed technical issue has existed for six months without consequence and a drop occurs today, you need to look elsewhere. Correlation is not causation.

- Immediate impact: blocking robots.txt, massive 5xx errors, DNS down, expired SSL certificate

- Progressive impact: moderate server slowness, soft 404, poorly managed pagination

- No direct impact on crawling or indexation: content quality, light internal duplication, non-optimized but valid HTML structure

- An old problem without visible effect is probably not the cause of a recent decline
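The timing argument can be written down as a simple rule of thumb. The sketch below is only an illustration: the function name, the dates, and the 14-day window are assumptions, not thresholds stated by Google or Mueller.

```python
from datetime import date


def plausible_technical_cause(issue_start: date, drop_date: date,
                              max_lag_days: int = 14) -> bool:
    """Return True if the issue began shortly before the traffic drop.

    max_lag_days is an assumed window: a real blocker should show
    its effect within days, not months.
    """
    lag = (drop_date - issue_start).days
    return 0 <= lag <= max_lag_days


drop = date(2022, 3, 10)  # hypothetical date of the traffic drop

# A robots.txt change deployed three days before the drop: worth investigating.
print(plausible_technical_cause(date(2022, 3, 7), drop))   # True

# An issue that has existed for about six months: look elsewhere.
print(plausible_technical_cause(date(2021, 9, 1), drop))   # False
```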

SEO Expert opinion

Does this assertion hold up to field observations?

Yes, and it's actually one of the rare points where Google is perfectly aligned with what we observe. Technical blockers generate immediate alerts in Search Console. Indexation errors appear within 48-72 hours.

However — and this is where things get tricky — Mueller oversimplifies to an extreme. He opposes "technical" and "quality" as two separate universes. Reality? Many issues live in a gray zone.

What nuances should be added to this statement?

Take client-side rendered JavaScript. Technically, Google can crawl it. But if rendering is slow or unstable, indexation becomes chaotic. Is it technical? Is it quality? Both, my friend.

The same goes for keyword cannibalization: an architectural problem (therefore technical) that generates semantic confusion (therefore quality). Or take degraded Core Web Vitals: technically measurable, but the impact on ranking remains fuzzy and progressive, not binary.

[To verify]: Google never precisely defines where "technical" ends and where "quality" begins. This blurry boundary allows all interpretations.

In what cases does this rule not fully apply?

Site migrations are a textbook case. Technically, everything can work (301 redirects in place, robots.txt ok, fast server), but Google can take weeks to reevaluate authority and relevance in the new context.

Crawl budget issues on very large sites (millions of pages) also behave differently. Google progressively reduces its crawling, so the impact is not immediate but cumulative.

Practical impact and recommendations

How do you distinguish a real technical issue from a quality problem?

Analyze the timeframe. A major technical issue leaves immediate traces in Search Console: sharp drop in indexed pages, spike in server errors, explosion of crawl errors.

If your traffic curve plummets but Search Console remains silent on indexation errors, you're probably facing an algorithmic problem related to content quality or relevance.
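One way to check for the "sharp drop in indexed pages" signature is to scan a daily series of indexed-page counts for abrupt falls. This is a minimal sketch: the numbers are made up, and the 20% threshold is an arbitrary assumption to tune for your own site.

```python
def sharp_drops(counts: list[int], threshold: float = 0.2) -> list[int]:
    """Return indices where the count fell by more than `threshold`
    (as a fraction) compared with the previous data point."""
    flagged = []
    for i in range(1, len(counts)):
        prev, curr = counts[i - 1], counts[i]
        if prev > 0 and (prev - curr) / prev > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily counts of indexed pages, e.g. copied from Search Console.
indexed_pages = [1000, 1005, 998, 600, 590]
print(sharp_drops(indexed_pages))  # [3]
```

An empty result alongside a falling traffic curve fits the pattern described above: the pages are still indexed, they simply rank lower.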

What should you check first when traffic drops?

Start by eliminating obvious technical causes. Verify that Google can access your pages, that the server responds correctly, that the robots.txt hasn't been accidentally modified.

If everything is green on the technical side, pivot immediately to qualitative analysis: competitor positioning, content freshness, alignment with search intent, E-E-A-T signals.

What tools should you use for quick diagnosis?

- Search Console: Coverage report (now called Page indexing) for indexation errors, Crawl stats report for crawl health

- Screaming Frog or Oncrawl: comprehensive audit of HTTP codes, response times, redirect chains

- Server logs: verify that Googlebot can access critical resources (JS, CSS, images)

- PageSpeed Insights: Core Web Vitals and rendering issues

- SERP comparison before/after: identify competitors who've taken your positions

- Content analysis: freshness, depth, differentiation vs competition
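For the server-log check in the list above, a short script can summarize which status codes Googlebot actually received. The sketch below assumes logs in the common "combined" format; the sample lines are invented, and matching on the user-agent string alone is an approximation, since that string can be spoofed (Google documents reverse DNS lookups as the way to verify genuine Googlebot traffic).

```python
import re
from collections import Counter

# Extracts the request path, status code, and user agent from a combined-format line.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_status_counts(lines):
    """Count responses served to Googlebot, grouped by status class (2xx, 5xx, ...)."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if match and "Googlebot" in match.group("agent"):
            counts[match.group("status")[0] + "xx"] += 1
    return counts


# Invented sample lines for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE = [
    f'66.249.66.1 - - [10/Mar/2022:10:00:00 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2022:10:00:05 +0000] "GET /static/app.js HTTP/1.1" 503 0 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Mar/2022:10:00:09 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

print(dict(googlebot_status_counts(SAMPLE)))  # {'2xx': 1, '5xx': 1}
```

A rising share of 5xx responses to Googlebot is the kind of immediate, visible trace the article associates with a real technical blocker.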

❓ Frequently Asked Questions

How long does it take for a robots.txt problem to affect indexation?

Can a one-off 500 error cause a lasting drop in my traffic?

If my site is slow but accessible, is that a technical issue in Mueller's sense?

How can I tell whether my traffic drop comes from an algorithm update or from a technical issue?

Is duplicate content a technical problem or a quality problem?

Source: Google Search Central video, published on 08/05/2022