Official statement

Other statements from this video (12)

- Does Google really index all of your site's content?

- Why does Googlebot ignore your JavaScript links if you don't use <a> tags?

- Has Google really abandoned the idea of an overall SEO score?

- Can you link to HTTP sites without SEO risk?

- Do you really need to write "naturally" to rank on Google?

- Should you really delete your link disavow file?

- Should you really avoid implementing Schema markup via Google Tag Manager?

- Robots.txt vs meta robots: why can blocking crawling hurt deindexing?

- Can you list the same URL in several sitemap files without SEO risk?

- How do you get an iframe's content indexed without indexing the source page?

- HSTS and the preload list: a red herring for SEO?

- Why doesn't a descriptive domain name guarantee your ranking for that query?

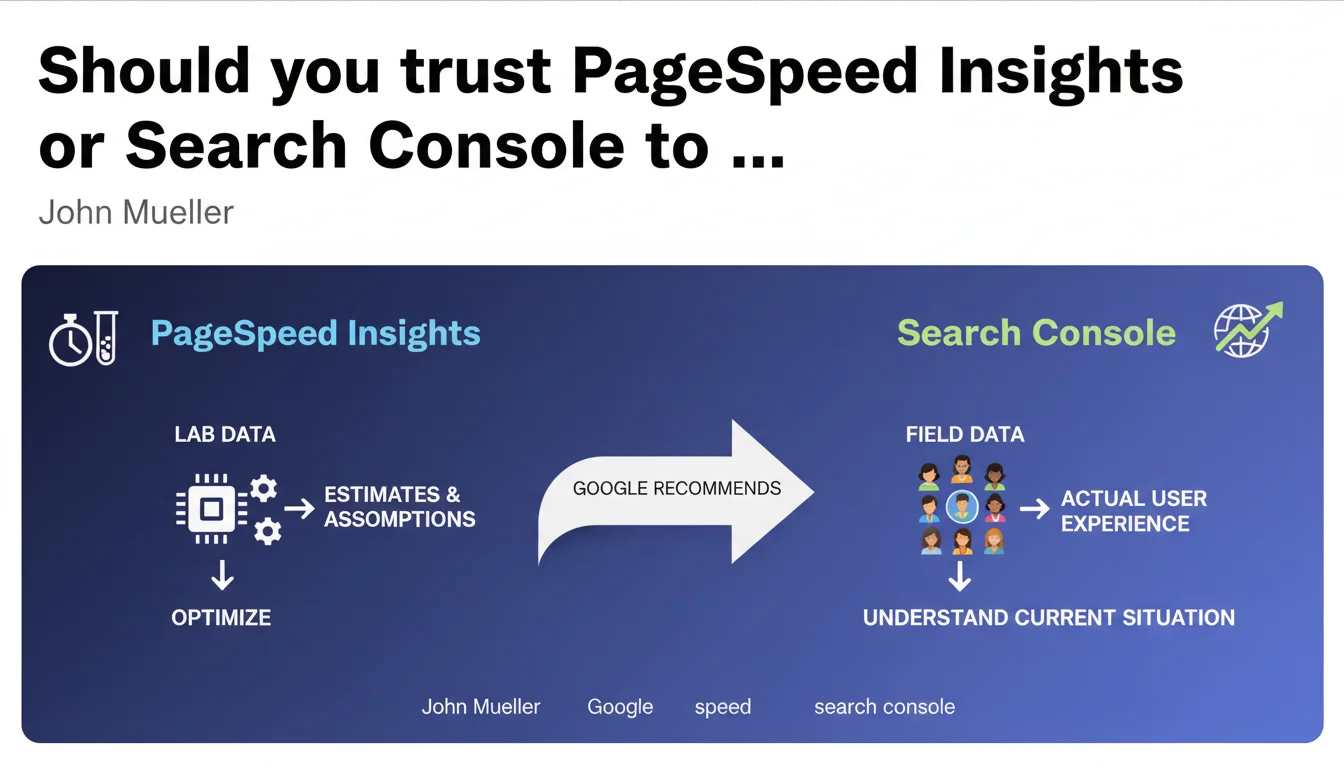

Google distinguishes two types of speed data. The first is PageSpeed Insights lab data, which are estimates produced under simulated conditions. The second is Search Console field data, which reflects real user experience. Official recommendation: rely on Search Console to diagnose your current situation, and use lab data to guide optimization.

What you need to understand

Why does this distinction between lab data and field data actually matter?

PageSpeed Insights' lab test, run by Lighthouse, loads the page in a controlled environment: a throttled connection, an emulated reference device, and an empty cache. It's a theoretical estimate that isolates your site's technical factors. Search Console's field data, on the other hand, aggregates what your real Chrome visitors actually experience: fluctuating 4G connections, saturated Wi-Fi, aging devices.

The problem? These two indicators can diverge dramatically. A site can score 95 in the lab and still fail Core Web Vitals in real conditions because most of its visitors come from low-bandwidth areas. Field data has no 0-100 score: each metric is simply rated good, needs improvement, or poor. The opposite also happens. A mediocre lab score can mask a decent field experience if your audience has solid infrastructure.

Which metric does Google actually use for ranking?

The Core Web Vitals that feed into ranking come from the Chrome User Experience Report (CrUX), in other words, field data. This is what Search Console shows you in its Core Web Vitals report. PageSpeed Insights also displays this CrUX data when it exists, but its optimization recommendations come from the Lighthouse lab analysis.
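Both kinds of data arrive in the same PageSpeed Insights API response, so it is easy to mix them up when scripting. The sketch below keeps them separate. It assumes the PSI v5 JSON shape: CrUX field data sits under `loadingExperience.metrics`, and the Lighthouse lab score sits under `lighthouseResult.categories.performance.score` on a 0-1 scale. The function name and the trimmed sample response are hypothetical and for illustration only.

```python
from typing import Any, Optional


def split_psi_response(resp: dict[str, Any]) -> dict[str, Optional[float]]:
    """Separate field (CrUX) and lab (Lighthouse) figures in a PSI v5 JSON response."""
    # Field data: the CrUX 75th-percentile LCP for this URL, if CrUX has any.
    field = resp.get("loadingExperience", {}).get("metrics", {})
    lcp_p75 = field.get("LARGEST_CONTENTFUL_PAINT_MS", {}).get("percentile")

    # Lab data: the Lighthouse performance score on a 0-1 scale (the UI shows it x100).
    perf = resp.get("lighthouseResult", {}).get("categories", {}).get("performance", {})
    score = perf.get("score")

    return {
        "field_lcp_p75_ms": lcp_p75,
        "lab_performance_score": None if score is None else round(score * 100),
    }


# Hypothetical, trimmed-down response for illustration only.
sample = {
    "loadingExperience": {
        "metrics": {"LARGEST_CONTENTFUL_PAINT_MS": {"percentile": 3100, "category": "AVERAGE"}}
    },
    "lighthouseResult": {"categories": {"performance": {"score": 0.95}}},
}
print(split_psi_response(sample))  # {'field_lcp_p75_ms': 3100, 'lab_performance_score': 95}
```

Keeping the two numbers under different keys makes it harder to report a lab score as if it were the ranking-relevant field measurement.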

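Concretely: if a URL doesn't get enough traffic to have its own CrUX data, it can't be evaluated on its own Core Web Vitals. Google may fall back on data aggregated across similar pages or across the whole origin. If none of that exists, there is simply no Core Web Vitals signal to evaluate. Either way, the lab score has no direct impact on your ranking, even though it remains useful for detecting technical flaws.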
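When you check a page, you need to know which level of field data you are actually looking at. Below is a minimal sketch under the same PSI v5 assumptions:

- `loadingExperience` holds URL-level CrUX data.
- `originLoadingExperience` holds origin-level data.
- The `origin_fallback` flag is checked defensively. Treat its presence and exact meaning as an assumption.

The function name is hypothetical. This only tells you what PSI reports. How Google's ranking systems use grouped or origin data is not fully documented.

```python
from typing import Any


def field_data_level(resp: dict[str, Any]) -> str:
    """Report which CrUX level a PSI v5 response carries: 'url', 'origin' or 'none'."""
    url_level = resp.get("loadingExperience", {})
    if url_level.get("metrics"):
        # Assumed flag: if PSI marks the URL block as an origin fallback, it is origin data.
        return "origin" if url_level.get("origin_fallback") else "url"
    if resp.get("originLoadingExperience", {}).get("metrics"):
        return "origin"
    # No field data at all: only the lab analysis is available for this page.
    return "none"


print(field_data_level({"originLoadingExperience": {"metrics": {"LCP": {}}}}))  # origin
```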

How should you interpret the absence of an "absolutely correct number"?

Mueller insists that there is no absolutely correct number. An LCP of 2.4 seconds does not necessarily rank better than one of 2.6 seconds, even though the two sit on either side of the 2.5-second "good" threshold. The official bands (good / needs improvement / poor) are benchmarks, not hard barriers. Speed is also weighed alongside many other ranking signals; Google has long spoken of more than 200.
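To make the bands concrete, here is a small classifier that uses Google's published Core Web Vitals thresholds, applied to the 75th-percentile value of each metric. The names `THRESHOLDS` and `classify` are hypothetical. The sketch shows how a 200 ms difference can flip the label. It does not model any ranking effect.

```python
# Published Core Web Vitals thresholds, applied to the 75th-percentile value.
# Each entry is (good_max, needs_improvement_max); anything above the second bound is "poor".
THRESHOLDS = {
    "LCP": (2500, 4000),  # ms
    "INP": (200, 500),    # ms; replaced FID as a Core Web Vital in March 2024
    "FID": (100, 300),    # ms; the responsiveness metric when this video was published
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}


def classify(metric: str, p75: float) -> str:
    """Map a metric's 75th-percentile value to Google's three-band label."""
    good_max, ni_max = THRESHOLDS[metric]
    if p75 <= good_max:
        return "good"
    if p75 <= ni_max:
        return "needs improvement"
    return "poor"


print(classify("LCP", 2400), "|", classify("LCP", 2600))  # good | needs improvement
```

The label changes at 2.5 s, but, as Mueller notes, that boundary is a benchmark rather than a ranking cliff.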

This nuance is rarely understood: two sites with identical metrics can experience different ranking impacts depending on their competitive context and content relevance.

- Lab data: reproducible, controlled, ideal for testing optimization hypotheses before deployment

- Field data: reflect real user experience, serve as the basis for Core Web Vitals calculation for ranking

- Performance thresholds are not absolutes but relative indicators to each site's context

- A URL without its own CrUX data (insufficient traffic) is not evaluated on its own Core Web Vitals in the algorithm

SEO Expert opinion

Does this distinction truly reflect real-world practice?

Yes, and Google rarely explains this point so clearly. In 80% of the audits I conduct, the lab/field gap comes down to three factors:

- audience geography
- mobile connection quality
- third-party scripts that behave differently in a controlled test, for example ones loaded only after consent or user interaction

CrUX data captures these variables; Lighthouse does not.

But be careful: Search Console aggregates over 28 rolling days. If you deploy a major optimization, you won't see the full impact for about a month. Lab data, on the other hand, reflects improvement immediately. This is why Mueller emphasizes the complementarity.
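The one-month delay is simple arithmetic on the rolling window. The minimal sketch below assumes traffic is spread evenly across days, which real sites rarely do. It computes what share of the 28-day window comes from page loads after a deployment. The function name is hypothetical.

```python
def post_change_share(days_since_deploy: int, window_days: int = 28) -> float:
    """Fraction of a rolling window's page loads that happened after a deployment.

    Assumes an even spread of traffic across days.
    """
    days = min(max(days_since_deploy, 0), window_days)
    return days / window_days


for d in (7, 14, 21, 28):
    print(d, post_change_share(d))
# 7 0.25
# 14 0.5
# 21 0.75
# 28 1.0
```

After one week, three quarters of the data still describes the old pages. That is why the field report lags behind a lab test that reflects the change on the next run.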

What limitations must you keep in mind?

First limitation: Google has never published the exact traffic threshold a URL needs to appear in CrUX. Empirical observations suggest several hundred Chrome visitors per month, but no official figure exists. Result: thousands of sites optimize their speed without knowing whether they reach this threshold.

Second limitation: field data is not an average. Each metric is summarized at the 75th percentile of page loads. Suppose 70% of your visitors have an excellent experience but 30% have a catastrophic one, for example on satellite connections. The 75th percentile then falls in the catastrophic group, so you end up in the red even though most of your audience is satisfied. Search Console reduces this to a single status. To break the distribution down by country or connection type, you need to query CrUX in BigQuery.
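The 70/30 scenario can be checked by walking a histogram. The CrUX API returns each metric as bins of the form `{start, end, density}`, and this sketch finds the bin that holds the 75th percentile. The histogram below is hypothetical, and the function name is an illustration only. The real API also returns the p75 value directly.

```python
from typing import Any


def p75_bin(histogram: list[dict[str, Any]], percentile: float = 0.75) -> dict[str, Any]:
    """Return the histogram bin that contains the given percentile of page loads."""
    cumulative = 0.0
    for bin_ in histogram:
        cumulative += bin_["density"]
        if cumulative >= percentile:
            return bin_
    # Published densities are rounded and may sum to slightly less than 1.
    return histogram[-1]


# Hypothetical LCP histogram: 70% of loads under 2.5 s, 30% over 4 s.
split_audience = [
    {"start": 0, "end": 2500, "density": 0.70},
    {"start": 2500, "end": 4000, "density": 0.00},
    {"start": 4000, "density": 0.30},
]
print(p75_bin(split_audience))  # {'start': 4000, 'density': 0.3}
```

Even though most loads are fast, the 75th percentile lands in the "poor" bin. If the split had been 80% fast, the same walk would stop in the first bin.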

Third point, and this is where Google's discourse becomes evasive: how much does speed actually weigh in ranking? Mueller calls it one signal among many but declines to quantify it. A/B tests I've conducted on e-commerce sites show that improving LCP from 4s to 2s rarely yields more than a 5% gain in organic positions if the content remains mediocre. Speed is a differentiator between otherwise comparable pages, not a magic elevator.

Practical impact and recommendations

What methodology should you adopt concretely?

Step 1: Open the Core Web Vitals report in Search Console. If you have data, focus on URLs flagged "Needs improvement" and "Poor", since these are the ones potentially hurting your ranking. Skip PageSpeed Insights for the initial diagnosis, or you risk fixing theoretical problems that don't affect your real visitors.
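If you want to script the same field-data check across a list of URLs, the public CrUX API serves the data behind Search Console's report. This sketch only builds the JSON body for its `records:queryRecord` endpoint. Sending it is left out: you would POST it with an API key as the `key` query parameter, for example using `urllib.request`. The function name is hypothetical. A 404 response means CrUX has no record for that URL and form factor. Replacing `"url"` with `"origin"` queries origin-level data instead.

```python
import json

# Public CrUX API endpoint; requests are POSTed with an API key as the `key` query parameter.
CRUX_ENDPOINT = "https://chromeuxreport.googleapis.com/v1/records:queryRecord"


def crux_request_body(url: str, form_factor: str = "PHONE") -> str:
    """Build the JSON body asking CrUX for one URL's Core Web Vitals."""
    body = {
        "url": url,
        "formFactor": form_factor,  # "PHONE", "DESKTOP" or "TABLET"
        "metrics": [
            "largest_contentful_paint",
            "interaction_to_next_paint",
            "cumulative_layout_shift",
        ],
    }
    return json.dumps(body)


print(crux_request_body("https://example.com/product/123"))
```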

Step 2: Once the problematic URLs are identified, switch to PageSpeed Insights and run the lab analysis. Lighthouse recommendations give precise optimization leads, such as compressing images, removing unused JavaScript, and improving caching. Test these changes in staging before deployment.
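The lab run for each flagged URL can also be requested programmatically through the PageSpeed Insights v5 API. This sketch builds the request URL using the `url`, `strategy` and `category` query parameters. An API key (`key` parameter) is advisable for repeated use. The function name is hypothetical.

```python
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_url(page_url: str, strategy: str = "mobile") -> str:
    """Build a PageSpeed Insights v5 request URL for one page."""
    params = {
        "url": page_url,
        "strategy": strategy,       # "mobile" or "desktop"
        "category": "performance",  # limit Lighthouse to the performance category
    }
    return f"{PSI_ENDPOINT}?{urlencode(params)}"


print(psi_url("https://example.com/"))
# https://www.googleapis.com/pagespeedonline/v5/runPagespeed?url=https%3A%2F%2Fexample.com%2F&strategy=mobile&category=performance
```

Running it on the same URL before and after a staging change gives a like-for-like lab comparison.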

Step 3: After deployment, wait 4 to 6 weeks before judging the impact, because CrUX data covers a rolling 28-day window and refreshes slowly. Track progress in Search Console, not PageSpeed Insights. The lab score will improve immediately, but it won't predict the ranking impact.
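A tiny date helper can turn the wait into calendar reminders. It assumes the 28-day window and the 4-to-6-week range given in Step 3. The function name and the deployment date are hypothetical.

```python
from datetime import date, timedelta


def review_dates(deployed: date, window_days: int = 28) -> dict[str, date]:
    """Dates to revisit Search Console after a speed deployment."""
    return {
        # First day whose trailing window contains only post-deployment loads.
        "clean_window": deployed + timedelta(days=window_days),
        # Upper end of the 4-to-6-week wait.
        "review_by": deployed + timedelta(weeks=6),
    }


print(review_dates(date(2024, 1, 1)))
# {'clean_window': datetime.date(2024, 1, 29), 'review_by': datetime.date(2024, 2, 12)}
```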

What mistakes should you absolutely avoid?

Mistake #1: optimizing for lab score at the expense of real experience. I've seen sites remove Google Analytics, ad pixels, or chatbots to gain 10 Lighthouse points. Result: perfect score, conversions in free fall. Lab data is a guide, not a goal.

Mistake #2: ignoring segmentation. If 60% of your traffic is mobile from South Asia and 40% is desktop from Europe, you have two distinct problems. Search Console separates mobile and desktop but not countries or connection types. Query CrUX in BigQuery to isolate critical segments.
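The same segmentation logic applies to your own RUM data. This sketch groups page loads by (country, device) and computes a 75th-percentile LCP per group. It uses the simple nearest-rank method, which is not CrUX's exact histogram-based method. The record fields (`country`, `device`, `lcp_ms`), the function names and the sample values are all hypothetical.

```python
import math
from collections import defaultdict
from typing import Any


def p75(values: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def p75_by_segment(records: list[dict[str, Any]]) -> dict[tuple[str, str], float]:
    """Group page-load records by (country, device) and compute LCP p75 per group."""
    groups: dict[tuple[str, str], list[float]] = defaultdict(list)
    for r in records:
        groups[(r["country"], r["device"])].append(r["lcp_ms"])
    return {segment: p75(values) for segment, values in groups.items()}


# Hypothetical page loads: one slow mobile segment and one fast desktop segment.
records = (
    [{"country": "IN", "device": "mobile", "lcp_ms": v} for v in (1800, 3000, 4200, 5000)]
    + [{"country": "FR", "device": "desktop", "lcp_ms": v} for v in (900, 1200, 1500, 2000)]
)
print(p75([r["lcp_ms"] for r in records]))  # 3000
print(p75_by_segment(records))  # {('IN', 'mobile'): 4200, ('FR', 'desktop'): 1500}
```

The pooled value (3000 ms, "needs improvement") hides a segment that is clearly "poor" (4200 ms) and another that is comfortably "good".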

Mistake #3: underestimating third-party resource impact. Ad scripts, social widgets, and tracking solutions often escape your control but tank your metrics. Use a tag manager to load these resources asynchronously and conditionally.

How do you verify that optimizations are working?

- Check the Core Web Vitals report in Search Console every week and export it to spot trends

- Compare lab scores before/after deployment to validate that technical fixes were properly applied

- Monitor business metrics (bounce rate, conversions) alongside Core Web Vitals — a technical improvement that degrades business is a failure

- If you lack CrUX data, use Real User Monitoring (RUM) tools like SpeedCurve or Cloudflare Web Analytics to capture your own field data

- Document each change and its impact — build an internal repository to capitalize on your learnings
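The monitoring and documentation points above can be combined in one change log that records speed and business figures side by side. The hypothetical `ChangeRecord` below flags a change as a failure when pages got faster but conversions dropped. The comparison is deliberately strict; a real log would allow for normal week-to-week noise. All field names and sample values are illustrative.

```python
from dataclasses import dataclass


@dataclass
class ChangeRecord:
    """One entry in a hypothetical internal log of speed changes and their effects."""
    description: str
    lcp_p75_before_ms: float
    lcp_p75_after_ms: float
    conversion_rate_before: float
    conversion_rate_after: float

    def verdict(self) -> str:
        faster = self.lcp_p75_after_ms < self.lcp_p75_before_ms
        # Strict comparison; in practice, allow for normal fluctuation.
        converts_less = self.conversion_rate_after < self.conversion_rate_before
        if faster and converts_less:
            return "failure: faster, but business metrics degraded"
        if faster:
            return "success"
        return "no speed gain"


log = [
    ChangeRecord("Removed chat widget", 3200, 2400, 0.031, 0.024),
    ChangeRecord("Compressed hero images", 3200, 2600, 0.031, 0.031),
]
for entry in log:
    print(f"{entry.description}: {entry.verdict()}")
```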

❓ Frequently Asked Questions

Does PageSpeed Insights data directly influence my Google ranking?

What happens if my site doesn't have enough traffic to generate CrUX data?

Why is my PageSpeed Insights score excellent while Search Console reports problems?

How long does it take for a speed optimization to show up in Search Console?

Should you aim for a score of 100 on PageSpeed Insights?

Other SEO insights extracted from this same Google Search Central video · published on 04/07/2022
