Official statement

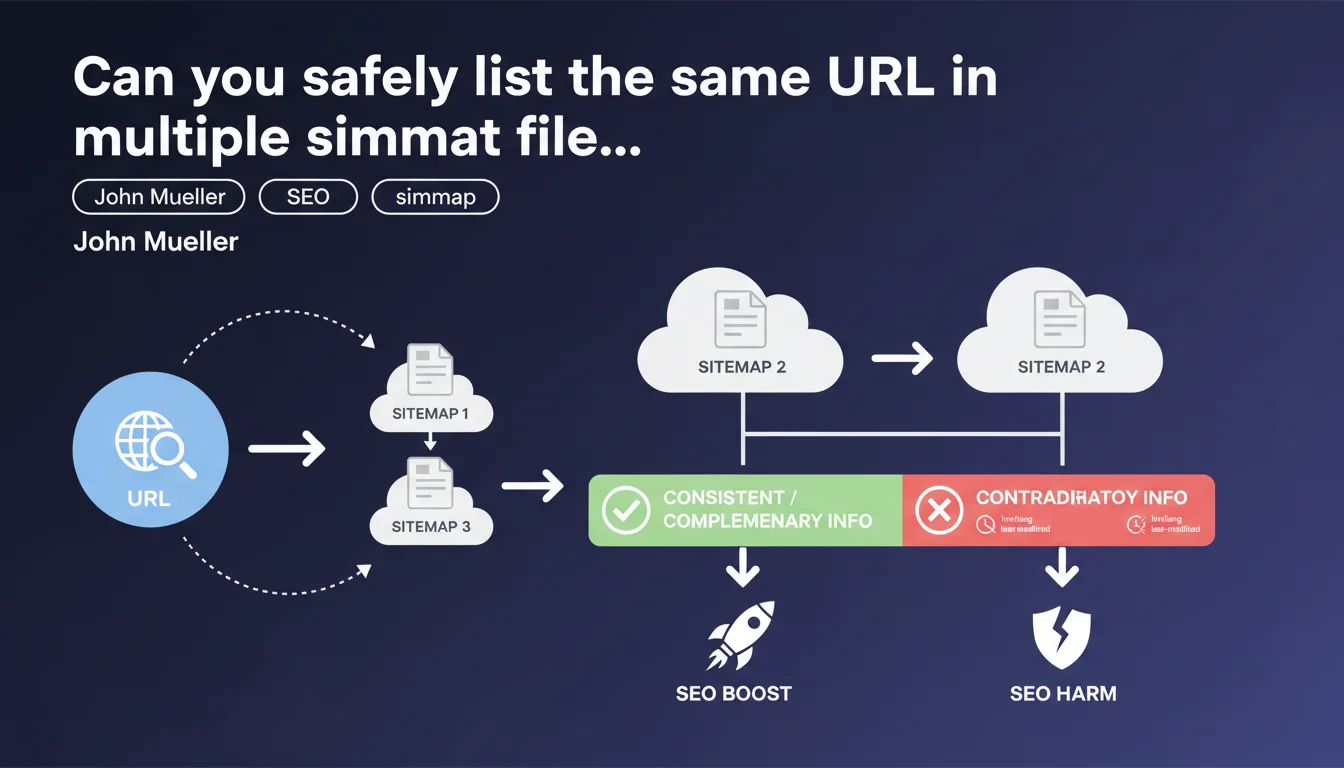

Google confirms that the same URL can appear in multiple sitemaps without any issues. The only requirement: avoid contradictory information such as conflicting hreflang annotations or differing last-modified dates. If the data remains consistent or complementary, this duplication is perfectly acceptable.

What you need to understand

Why does Google allow this URL duplication?

Contrary to popular belief, Google does not penalize having the same URL appear in multiple sitemap files. This flexibility is explained by the technical architecture of many websites: multiple systems can generate different sitemaps for organizational reasons.

In practice, if you have a products sitemap and a categories sitemap that both reference the same hybrid page, Google sees no issue with this. The crawler consolidates information from all sources and treats them as a coherent whole.

What really causes problems according to Google?

The real risk lies in contradictory information. If two sitemaps give different last-modified dates for the same URL, Google has to decide which one to trust, and that ambiguity can make indexing less predictable.

Divergent hreflang annotations represent a typical case: if one sitemap declares that a French page's equivalent English version is a certain URL, while another sitemap claims otherwise, Google receives mixed signals that weaken the reliability of your directives.
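
To make the hreflang case concrete, here is a minimal Python sketch that flags a page whose alternate URL for a given language differs between two sitemaps. It assumes the sitemaps are already loaded as strings and use the standard `xhtml:link` annotation; the function names and the `example.com` URLs are hypothetical. An hreflang value declared in only one sitemap is treated as complementary, not as a conflict.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

NS = {
    "sm": "http://www.sitemaps.org/schemas/sitemap/0.9",
    "xhtml": "http://www.w3.org/1999/xhtml",
}

def hreflang_map(sitemap_xml: str) -> dict[str, dict[str, str]]:
    """Return {page URL: {hreflang code: alternate URL}} for one sitemap."""
    root = ET.fromstring(sitemap_xml)
    result = {}
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", default="", namespaces=NS).strip()
        alternates = {}
        for link in url.findall("xhtml:link", NS):
            if link.get("rel") == "alternate" and link.get("hreflang"):
                alternates[link.get("hreflang")] = link.get("href")
        result[loc] = alternates
    return result

def hreflang_conflicts(sitemaps: dict[str, str]) -> list[tuple[str, str, dict[str, str]]]:
    """List (page URL, hreflang, {sitemap name: alternate URL}) where sitemaps disagree."""
    seen = defaultdict(lambda: defaultdict(dict))  # url -> lang -> {sitemap: href}
    for name, xml_text in sitemaps.items():
        for loc, alternates in hreflang_map(xml_text).items():
            for lang, href in alternates.items():
                seen[loc][lang][name] = href
    conflicts = []
    for loc, langs in seen.items():
        for lang, by_sitemap in langs.items():
            if len(set(by_sitemap.values())) > 1:  # same language, different targets
                conflicts.append((loc, lang, dict(by_sitemap)))
    return conflicts

# Hypothetical sample data: both sitemaps list the same French page
# but point its English equivalent at different URLs.
TEMPLATE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9"
        xmlns:xhtml="http://www.w3.org/1999/xhtml">
  <url>
    <loc>https://example.com/fr/page</loc>
    <xhtml:link rel="alternate" hreflang="en" href="{href}"/>
  </url>
</urlset>"""
SITEMAP_A = TEMPLATE.format(href="https://example.com/en/page")
SITEMAP_B = TEMPLATE.format(href="https://example.com/en/other-page")

for loc, lang, by_sitemap in hreflang_conflicts({"a.xml": SITEMAP_A, "b.xml": SITEMAP_B}):
    print(loc, lang, by_sitemap)
```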

When does this situation occur in practice?

This configuration is common on multi-source websites. A CMS can generate a global sitemap while a third-party solution (blog, forum, e-commerce) produces its own. Both can naturally reference the same URLs, such as section homepages or hybrid product/content pages.

International sites with multiple sitemaps per language/region also encounter this scenario. A page accessible from multiple geographic paths can legitimately appear in several regional sitemaps, as long as the metadata remains aligned.

- URL duplication across sitemaps is accepted by Google with no negative impact

- Information must remain consistent: no conflicting last-modified dates or hreflang annotations

- Complementary data is allowed: one sitemap can provide information that another doesn't contain

- This tolerance reflects the technical reality of complex web architectures with multiple sitemap generators

SEO Expert opinion

Does this statement match real-world observations?

Yes, absolutely. Audits conducted on sites with URL duplication across sitemaps reveal no correlation with indexation problems — provided that the metadata remains consistent. Google does indeed apply an intelligent consolidation logic.

What we observe: when several last-modified dates are provided and they do not diverge too much, Google uses the most recent one. However, discrepancies of several weeks between the dates declared for the same URL generate unnecessary repeated crawls, a symptom of algorithmic confusion.

What nuances should be added to this statement?

Mueller deliberately remains vague about the tolerance threshold for contradictions. At what degree of inconsistency does Google start ignoring certain signals? [To verify] — no official data clarifies this point.

Another gray area: "complementary information." If one sitemap includes alternative images and another doesn't, does Google systematically merge them? Tests show that it does in 90% of cases, but erratic behavior persists, particularly with video and news tags. Caution remains warranted.

In what contexts does this flexibility become a handicap?

On sites with limited crawl budget (millions of pages, low authority), multiplying references to the same URL across multiple sitemaps amplifies noise. Google must cross-reference more sources for the same information, which slows overall processing.

Sites with dynamic sitemap generation must also monitor drift. If a bug produces slightly different metadata each time a sitemap is generated, you artificially create "contradictions" that Google will need to resolve — and which will degrade the perceived reliability of your directives.

Practical impact and recommendations

What should you do concretely with your current sitemaps?

Start by auditing your sitemap files to identify URLs present in more than one of them. A simple script or a tool like Screaming Frog lets you extract and compare the content of all your sitemaps, including those listed in sitemap indexes.
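
As a minimal sketch of such an extraction script, assuming the sitemap files have already been downloaded and loaded as strings, the following Python lists every URL that appears in more than one file. The function names and the sample `example.com` sitemaps are hypothetical, and the sketch only reads `<urlset>` files, not sitemap index files.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

SM_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def urls_in(sitemap_xml: str) -> set[str]:
    """Return the set of <loc> values found in one <urlset> sitemap."""
    root = ET.fromstring(sitemap_xml)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SM_NS) if loc.text}

def duplicated_urls(sitemaps: dict[str, str]) -> dict[str, list[str]]:
    """Map each URL listed in more than one sitemap to the sitemaps that list it."""
    owners = defaultdict(list)
    for name, xml_text in sitemaps.items():
        for url in urls_in(xml_text):
            owners[url].append(name)
    return {url: sorted(names) for url, names in owners.items() if len(names) > 1}

# Hypothetical sample data: a products sitemap and a categories sitemap
# that both reference the same hybrid page.
PRODUCTS = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/product-1</loc></url>
  <url><loc>https://example.com/hybrid-page</loc></url>
</urlset>"""
CATEGORIES = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/category-1</loc></url>
  <url><loc>https://example.com/hybrid-page</loc></url>
</urlset>"""

for url, names in duplicated_urls({"products.xml": PRODUCTS, "categories.xml": CATEGORIES}).items():
    print(url, "is listed in", ", ".join(names))
```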

For each duplicate URL, verify that the metadata is strictly identical: same lastmod, same hreflang annotations, same image/video tags if applicable. Any discrepancy must be corrected at the source — manually editing each sitemap is not sustainable over time.
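
The lastmod part of this check could look like the sketch below. It assumes sitemaps loaded as strings and `lastmod` values that start with a full `YYYY-MM-DD` date; the function names and sample data are hypothetical. It reports each URL whose sitemaps declare different `lastmod` values, along with the gap in days, and leaves alone URLs where only one sitemap provides a date, since that is complementary rather than contradictory.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict
from datetime import date

SM_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def lastmod_by_url(sitemap_xml: str) -> dict[str, str]:
    """Return {URL: lastmod string} for the entries that declare a lastmod."""
    root = ET.fromstring(sitemap_xml)
    out = {}
    for url in root.findall("sm:url", SM_NS):
        loc = url.findtext("sm:loc", default="", namespaces=SM_NS).strip()
        lastmod = url.findtext("sm:lastmod", default="", namespaces=SM_NS).strip()
        if loc and lastmod:
            out[loc] = lastmod
    return out

def lastmod_conflicts(sitemaps: dict[str, str]) -> dict[str, dict]:
    """Report URLs whose lastmod differs between sitemaps, with the spread in days."""
    seen = defaultdict(dict)  # url -> {sitemap name: lastmod}
    for name, xml_text in sitemaps.items():
        for loc, lastmod in lastmod_by_url(xml_text).items():
            seen[loc][name] = lastmod
    report = {}
    for loc, by_sitemap in seen.items():
        if len(set(by_sitemap.values())) > 1:
            # Assumption: every value begins with YYYY-MM-DD.
            days = [date.fromisoformat(value[:10]) for value in by_sitemap.values()]
            report[loc] = {
                "values": dict(by_sitemap),
                "spread_days": (max(days) - min(days)).days,
            }
    return report

# Hypothetical sample data: the same URL with two different lastmod values.
ENTRY = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/hybrid-page</loc><lastmod>{lastmod}</lastmod></url>
</urlset>"""
GLOBAL_SITEMAP = ENTRY.format(lastmod="2022-06-01")
BLOG_SITEMAP = ENTRY.format(lastmod="2022-07-04T10:00:00+00:00")

for url, info in lastmod_conflicts({"global.xml": GLOBAL_SITEMAP, "blog.xml": BLOG_SITEMAP}).items():
    print(url, info["values"], info["spread_days"], "days apart")
```

The same grouping approach extends to image or video tags by swapping the extracted field.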

What errors must you absolutely avoid?

Don't create redundant sitemaps for convenience. If your CMS generates a complete sitemap and you manually add an "important pages" sitemap containing the same URLs, you add no value — you just add noise.

Avoid systems that generate last-modified dates on the fly based on sitemap generation time. A URL that changes its lastmod every hour when its content remains stable sends the wrong signal to Google and causes unnecessary crawls.

- Identify all URLs present in multiple sitemaps via a crawl or extraction script

- Compare metadata (lastmod, hreflang, images) to detect inconsistencies

- Correct contradictions at the source in the sitemap generators, not manually

- Avoid multiplying sitemaps without clear organizational justification

- Prioritize last-modified dates based on actual content, not generation time

- Monitor crawl logs to detect abnormal re-crawls linked to contradictory signals

- Document the logic behind URL distribution across sitemaps to facilitate maintenance
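
For the crawl-log item in the checklist above, a rough starting point is to count Googlebot requests per path and flag the paths fetched unusually often. This sketch assumes access logs in the common "combined" format and identifies Googlebot by user-agent string only, which can be spoofed; the function names, the threshold, and the sample lines are hypothetical.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer and user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_hits(lines) -> Counter:
    """Count requests per path whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits

def heavily_recrawled(lines, threshold: int) -> dict[str, int]:
    """Return the paths Googlebot requested at least `threshold` times."""
    return {path: n for path, n in googlebot_hits(lines).items() if n >= threshold}

# Hypothetical sample log lines.
BOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER = "Mozilla/5.0 (X11; Linux x86_64)"

def line(path: str, agent: str) -> str:
    return f'66.249.66.1 - - [04/Jul/2022:10:00:00 +0000] "GET {path} HTTP/1.1" 200 5120 "-" "{agent}"'

SAMPLE_LOG = [
    line("/hybrid-page", BOT),
    line("/hybrid-page", BOT),
    line("/hybrid-page", BOT),
    line("/other-page", BOT),
    line("/hybrid-page", BROWSER),
]

print(heavily_recrawled(SAMPLE_LOG, threshold=3))
```

Cross-referencing the flagged paths with the URLs that appear in several sitemaps shows whether the repeated crawls line up with inconsistent metadata.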

❓ Frequently Asked Questions

If a URL appears in 5 different sitemaps, does Google crawl it 5 times?

Can the same URL have different lastmod values if they reflect partial updates?

Must hreflang annotations be identical in every sitemap that contains the same URL?

Is a single sitemap better than several thematic sitemaps with duplication?

How can you quickly detect metadata inconsistencies between sitemaps?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 04/07/2022
