Official statement

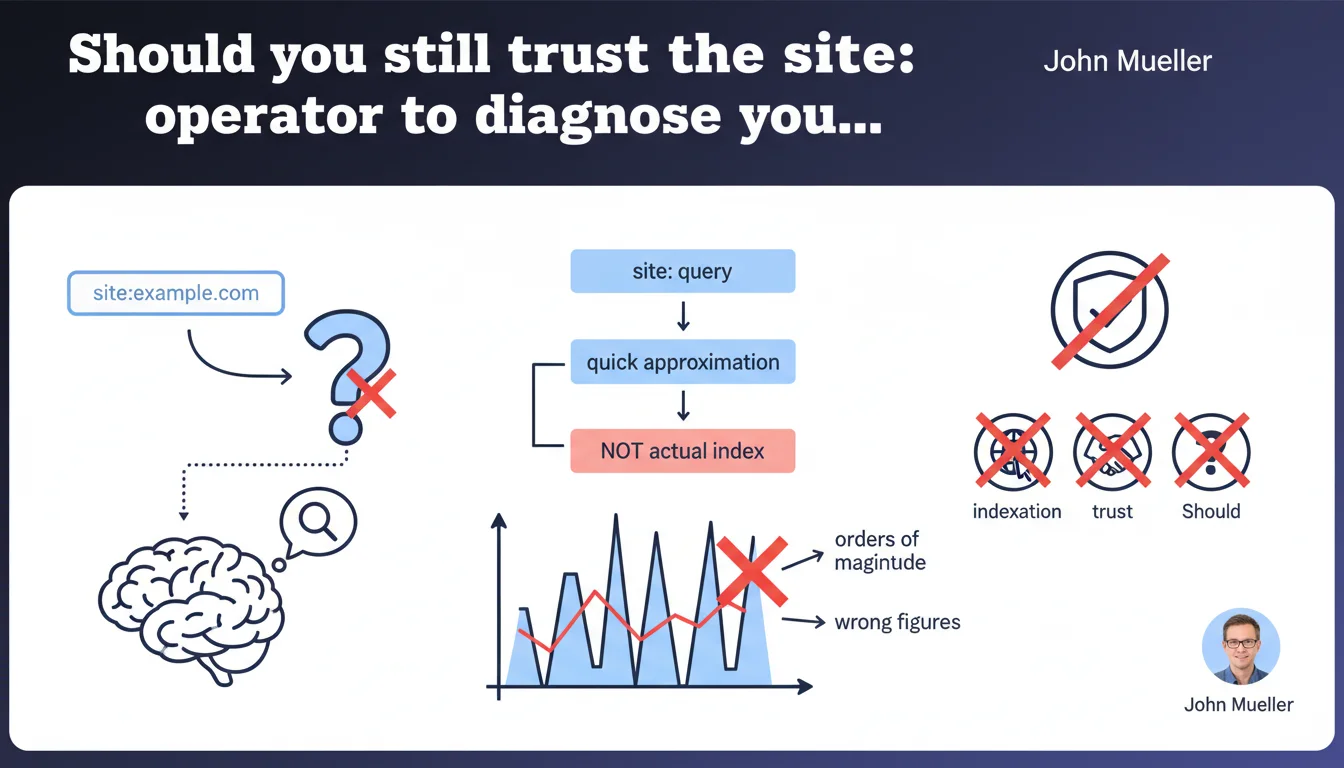

The numbers displayed by site: queries do not reflect what is actually indexed. Google confirms these are rough approximations that can be off by several orders of magnitude, so they have no reliable diagnostic value for SEO audits.

What you need to understand

Why is Google questioning the reliability of the site: operator?

The site:example.com query has long been used by SEO professionals as a quick indicator of the number of indexed pages. But Google never designed the operator for that purpose. The site: operator is meant to filter search results, not to precisely count the URLs present in the index.

John Mueller clarifies that the discrepancy can reach several orders of magnitude. Concretely, you might see 10,000 results when 100,000 pages are actually indexed, or only 1,000. The figure is a rough estimate meant to show the user something quickly, nothing more.

How does this approximation actually work?

Google doesn't exhaustively count pages every time a user types site:domain.com. The search engine samples a portion of the index, extrapolates, and returns a rounded figure. This process is optimized for speed, not diagnostic accuracy.

The direct consequence: two identical site: queries performed a few hours apart can display radically different results, even though no pages were added or deindexed in between.
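
To see why a sample-and-extrapolate estimate is unstable, here is a minimal Python sketch. It is a toy model only: Google's actual estimation method is not public, and the index size, sample size, and function name below are all assumptions chosen for illustration.

```python
import random


def estimate_matches(index, sample_size, rng):
    """Estimate how many entries are True by sampling, then extrapolating."""
    sample = rng.sample(index, sample_size)
    hits = sum(sample)
    # Scale the sample's hit count up to the full index size.
    return round(hits * len(index) / sample_size)


# Toy "index": 1,000,000 documents, of which 50,000 belong to the site.
TRUE_COUNT = 50_000
index = [True] * TRUE_COUNT + [False] * (1_000_000 - TRUE_COUNT)

rng = random.Random()  # unseeded on purpose, so every run differs
estimates = [estimate_matches(index, 200, rng) for _ in range(5)]
print("true count:", TRUE_COUNT)
print("estimates: ", estimates)
```

With a sample of 200 drawn from 1,000,000 documents, each hit is worth 5,000 pages, so the estimate can only move in steps of 5,000 and changes from one run to the next while the underlying index stays exactly the same.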

What are the implications for indexation diagnosis?

- Stop panicking when the site: figure drops sharply: it's probably just a variation in the approximation

- Never use site: as a KPI in client reporting, because the data is too unstable

- Prioritize Google Search Console to know the real number of indexed pages

- Understand that the gap can mask real indexation issues, or suggest ones that don't exist

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. For years, SEO practitioners have observed massive and unexplained variations in site: results. A site with 50,000 pages sometimes displays 48,000 results and sometimes 12,000, with no correlation to Search Console reports.

What's new is that Google is explicitly acknowledging it. For a long time, the site: operator enjoyed excessive trust, particularly from clients who demanded to see "all their pages in the site: results". Mueller puts an end to this ambiguity: the number means nothing.

What nuances should be added to this claim?

Although the absolute number is unusable, a very large gap can still be indicative. A 10,000-page site displaying 150 results in site: probably has a problem. That is not absolute certainty, but it is a strong enough signal to investigate via Search Console.

The other nuance: site: remains useful for qualitative checks. Checking whether a specific URL is indexed, spotting duplicates, identifying orphan pages that Google still has in its index: all of that works. It's only the quantitative aspect that needs to be discarded.
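
The arithmetic behind that example can be sketched in a few lines of Python. The one-order-of-magnitude cut-off used here is an assumption made for illustration, not a threshold given by Google or by the article, and `magnitude_gap` is a hypothetical helper.

```python
import math


def magnitude_gap(known_pages, site_results):
    """Return the gap between two counts in orders of magnitude (log10)."""
    if known_pages <= 0 or site_results <= 0:
        raise ValueError("both counts must be positive")
    return abs(math.log10(known_pages / site_results))


# Hypothetical cut-off: only gaps of more than one order of magnitude are
# treated as worth a look in Search Console.
INVESTIGATE_ABOVE = 1.0

gap = magnitude_gap(10_000, 150)
print(f"gap: {gap:.2f} orders of magnitude")
print("investigate" if gap > INVESTIGATE_ABOVE else "ignore")
```

By the same measure, the 50,000-page site showing 48,000 or 12,000 results stays under one order of magnitude, which matches the advice not to read anything into those swings.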

Are there cases where this rule doesn't apply?

There are no exceptions: the rule applies universally. Even on very small sites (fewer than 100 pages), the approximation remains an approximation. [To be verified]: one could imagine that Google's estimate is more accurate for small sites, but Mueller mentions no threshold.

The real problem is that no public alternative exists for getting a precise count outside of Search Console. And Search Console itself has its biases: it only counts pages that were discovered and deemed indexable, not necessarily all those you would like indexed.

Practical impact and recommendations

What should you do concretely to audit indexation?

Stop using site: as a metric for good. Switch entirely to Google Search Console and its Coverage report (Pages in the newer interface). This is the only reliable source Google provides for the actual status of your URLs.

Set up regular monitoring of indexed pages versus submitted pages. If you have a sitemap of 5,000 URLs and GSC reports only 2,000 of them as indexed, investigate. But never compare these 2,000 to the figure displayed by site:, because the comparison makes no sense.
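
The submitted-versus-indexed comparison can be sketched in Python as follows. This is a minimal sketch under stated assumptions: the sitemap follows the standard sitemaps.org schema, and the set of indexed URLs has been copied by hand from a Search Console export (the export format is not modeled here). The function names and sample URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Extract the <loc> values from a standard XML sitemap."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def indexation_gap(submitted, indexed):
    """Return (share of submitted URLs that are indexed, missing URLs)."""
    if not submitted:
        return 0.0, []
    missing = sorted(submitted - indexed)
    return len(submitted & indexed) / len(submitted), missing


# Illustrative data: a three-URL sitemap and a hypothetical set of URLs
# copied from a Search Console export.
sitemap_xml = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/pricing</loc></url>
  <url><loc>https://example.com/blog/launch</loc></url>
</urlset>"""
indexed_urls = {"https://example.com/", "https://example.com/pricing"}

ratio, missing = indexation_gap(sitemap_urls(sitemap_xml), indexed_urls)
print(f"{ratio:.0%} of submitted URLs indexed; missing: {missing}")
```

The list of missing URLs is usually more useful than the ratio itself, since it tells you which pages to inspect individually.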

What errors should you avoid in indexation diagnosis?

- Never report the site: figure to a client as a performance indicator

- Don't panic if the site: number fluctuates without apparent reason — check GSC before drawing conclusions

- Avoid comparing site: between competitors to estimate their index size — the gaps are too significant

- Don't use third-party tools that rely on site: to calculate indexation metrics

- Never base a crawl budget strategy or pagination approach on these approximations

How can you ensure your site is properly indexed?

Cross-reference multiple sources: Google Search Console for official status, server logs to see what Googlebot actually visits, and sitemaps to declare your priorities. If a critical page doesn't appear in GSC after several weeks, that's a strong signal, and far more reliable than its absence from site: results.

Implement automated GSC monitoring: alerts on sharp drops in indexed pages, tracking of 404 errors, and monitoring of pages excluded as duplicate content. This data is actionable, unlike the random variations of site: results.
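
For the server-log side, a minimal Python sketch might count requests per path from user agents that identify as Googlebot. It assumes access logs in the common "combined" format, and the sample lines are invented. Note that a user-agent string can be spoofed, so a real audit would also need to verify that the requests come from Google, which this sketch does not do.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and user agent of a
# combined-format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Count requests per path whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


# Invented sample lines: two Googlebot requests and one browser request.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [14/Mar/2022:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [14/Mar/2022:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [14/Mar/2022:10:06:00 +0000] "GET /blog/launch HTTP/1.1" 200 812 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

for path, count in googlebot_hits(sample_log).most_common():
    print(path, count)
```

Paths that appear in the sitemap but never in this count are candidates for a closer look at internal linking and crawlability.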
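
An alert on sharp drops can be as simple as comparing each day's indexed-page count with the previous one. The sketch below assumes you already collect those counts from Search Console by whatever means you use. The 20% threshold, the function name, and the sample figures are illustrative assumptions.

```python
def sharp_drops(series, threshold=0.20):
    """Return (date, previous, current) for each drop of at least `threshold`."""
    alerts = []
    for (_, previous), (day, current) in zip(series, series[1:]):
        if previous > 0 and (previous - current) / previous >= threshold:
            alerts.append((day, previous, current))
    return alerts


# Illustrative daily indexed-page counts.
indexed_by_day = [
    ("2022-03-10", 2000),
    ("2022-03-11", 1990),
    ("2022-03-12", 1400),
    ("2022-03-13", 1420),
]

for day, previous, current in sharp_drops(indexed_by_day):
    print(f"{day}: indexed pages fell from {previous} to {current}")
```

Small day-to-day movements stay below the threshold and are ignored, so only the fall from 1,990 to 1,400 is reported here.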

The site: command was never designed to diagnose indexation, and Google officially confirms this. SEO practitioners must migrate their audit methods toward reliable tools: Search Console as a priority, server logs as a complement. These technical and methodological adjustments may require an overhaul of audit workflows, particularly on large-scale sites. If your organization lacks the internal resources to carry out this transition, a specialized SEO agency can help speed it up and make your indexation diagnostics more dependable.

❓ Frequently Asked Questions

Can you still use site: to check whether a specific URL is indexed?

What is the acceptable margin of error for site: results?

Does Search Console show the exact number of indexed pages?

Are third-party SEO tools that display a number of indexed pages reliable?

How do you explain to a client that the site: figure doesn't count?

🎥 From the same video 15

Other SEO insights extracted from this same Google Search Central video · published on 14/03/2022
