Official statement

Other statements from this video 15 ▾

- □ Les fluctuations de classement sont-elles vraiment normales ou cachent-elles un problème technique ?

- □ Google utilise-t-il vraiment un seul index mondial pour tous les pays ?

- □ Faut-il encore se fier aux résultats de la requête site: pour diagnostiquer l'indexation ?

- □ L'engagement utilisateur influence-t-il réellement le classement Google ?

- □ Pourquoi les pages à fort trafic pèsent-elles plus dans le score Core Web Vitals ?

- □ Google segmente-t-il vraiment les sites par type de template pour évaluer la Page Experience ?

- □ Combien de liens internes faut-il placer par page pour optimiser son SEO ?

- □ Pourquoi la structure en arbre de votre maillage interne compte-t-elle vraiment pour Google ?

- □ La distance depuis la homepage influence-t-elle vraiment la vitesse d'indexation ?

- □ Pourquoi la structure d'URL n'a-t-elle aucune importance pour Google ?

- □ Pourquoi les positions Search Console ne reflètent-elles pas la réalité du classement ?

- □ Le balisage FAQ doit-il obligatoirement figurer sur la page indexée pour générer un rich snippet ?

- □ Les liens en footer ont-ils la même valeur SEO que les liens dans le contenu ?

- □ L'indexation mobile-first a-t-elle un impact sur vos classements Google ?

- □ Faut-il vraiment qu'un robots.txt inexistant retourne un 404 pour éviter de bloquer Googlebot ?

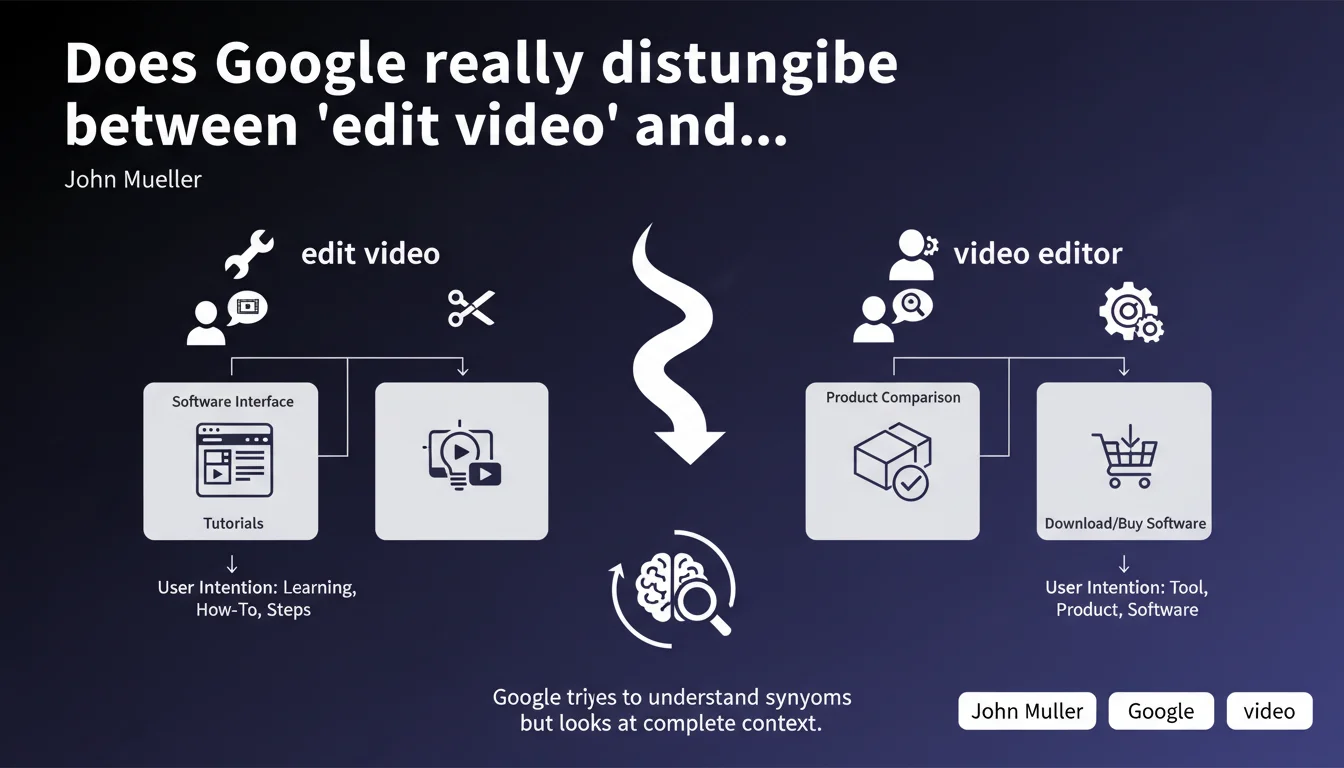

Google doesn't treat synonyms as simple lexical equivalences: the algorithm analyzes the broader context of the query to determine user intent. Two seemingly interchangeable terms like 'edit video' and 'video editor' can trigger radically different SERPs if user expectations differ. In practice, this means optimizing for the actual intent behind each semantic variation, not just for word proximity.

What you need to understand

What does 'contextual treatment of synonyms' really mean?

Google no longer relies on simple keyword matching between equivalent terms. The algorithm appears to infer the intent behind each query from behavioral signals such as user behavior on the results page, clicks, and time spent on results.

Take the example given: 'edit video' expresses an action (I want to modify a video right now), while 'video editor' suggests a tool search (I'm looking for software). Same semantic root, opposite intentions — therefore opposite results.

Why does this distinction change everything for SEO?

Because optimizing for a term without understanding its usage context means missing the mark. If you try to rank a tutorial page for 'video editor', Google will likely judge it irrelevant: that query signals a search for software, not for guides.

This breaks the 'synonyms list' approach we still see too often. You can't just stuff a page with semantic variations hoping to cast a wide net anymore.

How does Google determine when an intention diverges?

Officially, Google stays vague. But it appears to cross-reference multiple signals: bounce rates that differ by query, the type of content clicked (product pages vs. articles), and query reformulation patterns. If users type A, then reformulate to B and click different results, the algorithm learns that A ≠ B.

- Lexical synonyms ≠ intention synonyms: two close terms can target opposite needs

- Context beats pure semantics: the algorithm reads between the lines of the query

- Semantic optimization must integrate behavioral analysis: watch what users actually click

- A single page can't serve two divergent intentions: better to create two distinct content pieces

SEO Expert opinion

Does this statement match real-world observations?

Yes, and it's been observable in SERPs for years. Type 'buy laptop' vs 'laptop reviews': the results are radically different despite semantic closeness. Google has learned to separate transactional, informational, and navigational intentions with ruthless precision.

But — and this is where it gets tricky — Mueller deliberately stays vague about how Google measures this divergence. No metrics, no thresholds, nothing actionable. [To verify]: we don't know if it's pure machine learning, manual rules, or a mix.

What nuances should we add to this claim?

First, not all synonyms receive this level of sophistication. For low-frequency or ambiguous queries, Google can still merge intentions due to insufficient behavioral data. I've seen cases where 'buy X' and 'price X' returned nearly identical SERPs on niche topics.

Second, this statement says nothing about spelling variations (singular/plural, accents, typos). These are handled differently — often with cruder normalization. Don't confuse contextual synonyms with formal variants.

In which cases does this logic break down?

It breaks down on queries that are multi-intent by nature. 'Python' can target the programming language or the snake, so Google displays a mix of results to cover both. But there are bugs: I've seen 'Paris' (the French city) polluted by Paris Hilton results for weeks after celebrity news.

Another limit: long-tail queries with little history. Google doesn't have enough behavioral data to refine, so it can fall back on literal synonym interpretation. There, you might still gain traffic with an old-school 'semantic stuffing' approach — but it's temporary.

Practical impact and recommendations

What should you concretely do on your pages?

Stop treating synonyms as an SEO checklist. For each target term, map the real intent by analyzing the SERPs: content type (article, product page, video), editorial angle, related queries. If two terms show divergent SERPs, they deserve two distinct content pieces.

Then adapt your semantic architecture per page. An 'edit video' page should discuss actions, tutorials, techniques. A 'video editor' page should compare tools, list features, include purchase CTAs. Vocabulary, structure, media — everything must answer the mapped intention.
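The SERP mapping step can be sketched as a simple tally. This is a minimal illustration, assuming you have reviewed the top results by hand and labeled each one with a content type; the labels and the function name `dominant_content_type` are hypothetical, not output from any tool.

```python
from collections import Counter


def dominant_content_type(result_types):
    """Return (most common content type, its share) for hand-labeled results."""
    if not result_types:
        raise ValueError("need at least one labeled result")
    counts = Counter(result_types)
    label, n = counts.most_common(1)[0]
    return label, n / len(result_types)


# Hypothetical labels assigned while reviewing the top 10 results per query.
edit_video = ["tutorial", "tutorial", "video", "tutorial", "tool",
              "tutorial", "video", "tutorial", "tool", "tutorial"]
video_editor = ["tool", "tool", "comparison", "tool", "tool",
                "comparison", "tool", "tutorial", "tool", "tool"]

print(dominant_content_type(edit_video))
print(dominant_content_type(video_editor))
```

If the dominant type differs between two close terms, as it does in this made-up sample, that is a hint they call for separate pages.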

What mistakes must you avoid?

Don't create 'catch-all' pages that try to serve multiple intentions at once. Google will likely judge them irrelevant for all of them, and you risk being outranked by more focused competitors. Better two average pages than one bloated, unfocused page.

Another trap: believing that synonym tools or LSI Keywords are enough. These lists are generated by lexical proximity, not intention analysis. Use them as a starting point, but always validate with manual SERP analysis.

How do you verify your site aligns with these principles?

Audit pages targeting semantically close terms. For each pair of terms, compare the actual SERPs: if half or more of the top results differ, your pages should differ too. Otherwise, you're off-target on at least one of them.
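One way to put a number on how far two SERPs diverge is to measure the share of results they do not have in common. The sketch below is one possible definition, not a standard metric: `serp_divergence` is a hypothetical helper, the URLs are placeholders, and the 0.5 cutoff is simply the rule of thumb from this audit.

```python
def serp_divergence(urls_a, urls_b):
    """Share of the shorter result list that is missing from the other list."""
    a, b = set(urls_a), set(urls_b)
    if not a or not b:
        raise ValueError("both result lists must be non-empty")
    shared = len(a & b)
    return 1 - shared / min(len(a), len(b))


# Placeholder URLs standing in for the top results of two close queries.
serp_a = ["https://example.com/1", "https://example.com/2",
          "https://example.com/3", "https://example.com/4"]
serp_b = ["https://example.com/3", "https://example.com/4",
          "https://example.com/5", "https://example.com/6"]

divergence = serp_divergence(serp_a, serp_b)
print(divergence, "-> separate pages" if divergence >= 0.5 else "-> one page may do")
```

Comparing URLs is the strictest reading. Comparing domains or content types instead would give a looser measure.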

Track behavioral metrics per landing page: bounce rate, time on page, conversion rate. If a page shows an abnormally high bounce rate on certain queries, it's often an intent mismatch: Google is sending you traffic you don't satisfy.
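A rough way to spot such mismatches is to compare each query's bounce rate with the page's overall rate. The sketch below assumes you already have sessions and bounces per query for one landing page, which in practice means joining exports yourself. The row format, the function name `flag_intent_mismatches`, and both thresholds are illustrative assumptions.

```python
def flag_intent_mismatches(rows, min_sessions=50, margin=0.20):
    """Flag queries whose bounce rate exceeds the page average by `margin`.

    rows: dicts with 'query', 'sessions', 'bounces' for a single landing page.
    """
    total_sessions = sum(r["sessions"] for r in rows)
    if total_sessions == 0:
        return []
    page_rate = sum(r["bounces"] for r in rows) / total_sessions
    flagged = []
    for r in rows:
        if r["sessions"] < min_sessions:
            continue  # too little data to judge this query
        rate = r["bounces"] / r["sessions"]
        if rate - page_rate > margin:
            flagged.append((r["query"], round(rate, 2)))
    return flagged


# Made-up numbers for a tutorial page that also receives tool-seeking traffic.
rows = [
    {"query": "edit video", "sessions": 400, "bounces": 160},
    {"query": "how to edit video", "sessions": 300, "bounces": 120},
    {"query": "video editor", "sessions": 200, "bounces": 170},
    {"query": "video editor free download", "sessions": 20, "bounces": 19},
]
print(flag_intent_mismatches(rows))
```

The minimum-sessions filter is there so that a handful of visits on a rare query doesn't trigger a false alarm.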

- Map real intentions by analyzing SERPs for each target synonym

- Create distinct content for semantically close terms with divergent intentions

- Adapt vocabulary, structure, and media to each page's specific intention

- Avoid catch-all pages attempting to serve multiple intentions simultaneously

- Regularly audit alignment between received queries and delivered content via GSC + Analytics

- Test semantic variants on SERP tools (SEMrush, Ahrefs) to detect intention divergences

❓ Frequently Asked Questions

Does Google sometimes merge synonyms despite different intentions?

How can you tell whether two terms have divergent intentions?

Can you still use synonyms on the same page?

Are spelling variations treated as contextual synonyms?

How do you track intent mismatches on your site?

🎥 From the same video 15

Other SEO insights extracted from this same Google Search Central video · published on 14/03/2022
