Official statement

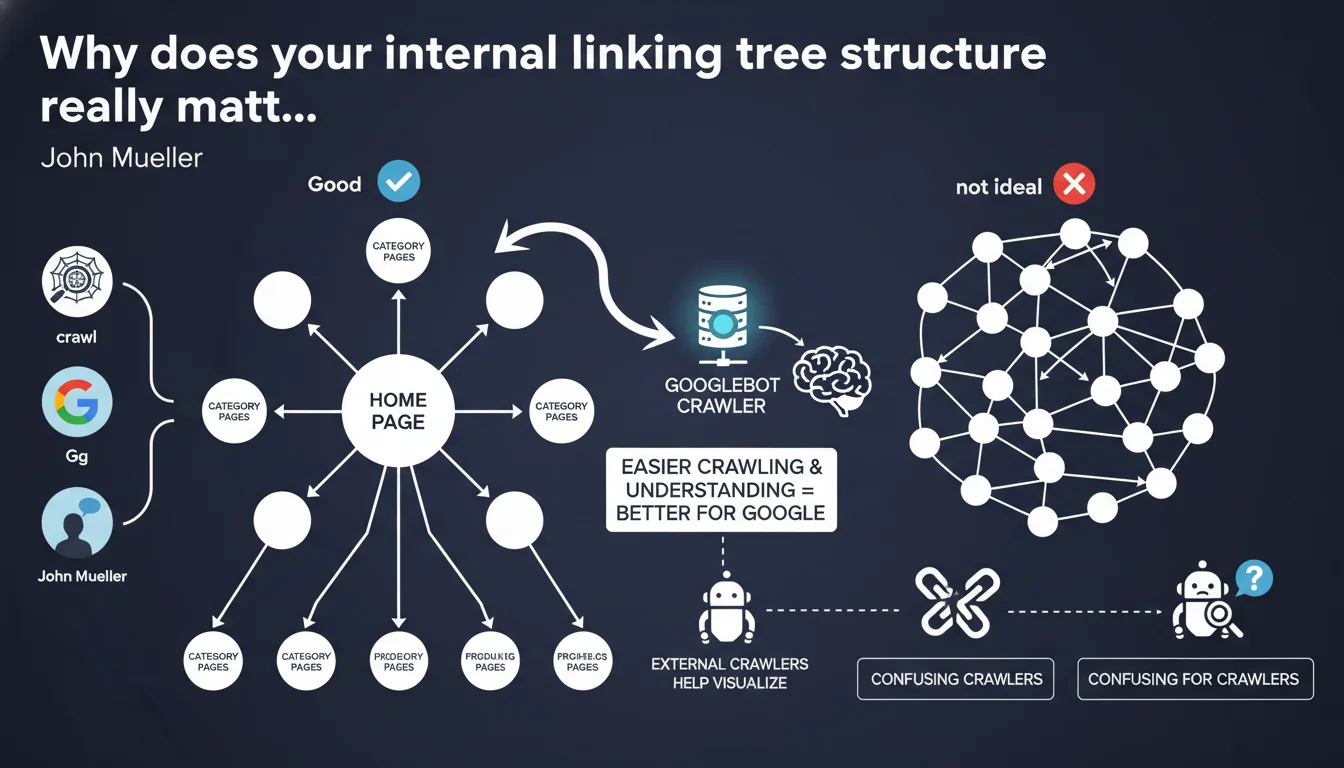

Google recommends visualizing your internal linking structure with external crawlers to verify that it resembles a tree with branches, not a mesh where everything points to everything. A chaotic structure blurs the search engine's understanding of the site hierarchy and makes crawling less efficient.

What you need to understand

What does a "tree structure" concretely mean?

Mueller describes a central point, typically the homepage, from which branches develop progressively. Each level of the hierarchy follows logically from the previous one: main categories, then subcategories, then product pages or articles.

The opposite image? A graph where each page links to 15 other pages with no apparent logic. The crawler loses track, and Google cannot tell which pages matter more than others.

- Central point: homepage or strong thematic hub

- Branches: coherent depth levels (1→2→3)

- Avoid: circular linking where everything is connected to everything
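One rough way to put a number on "tree versus mesh" is link density: the share of all possible page-to-page links that actually exist. The sketch below is an illustration only. The `link_density` helper and the sample URLs are hypothetical, and density is a heuristic chosen for this example, not a metric Google has described.

```python
def link_density(links):
    """Share of possible page-to-page links that actually exist (0.0 to 1.0).

    `links` maps each page to the pages it links to (hypothetical input shape).
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    n = len(pages)
    if n < 2:
        return 0.0
    # Count distinct outgoing links per page, ignoring self-links.
    edges = sum(len(set(targets) - {source}) for source, targets in links.items())
    return edges / (n * (n - 1))


# A small tree: homepage -> categories -> subcategories.
tree = {
    "/": ["/shoes/", "/bags/"],
    "/shoes/": ["/shoes/running/", "/shoes/hiking/"],
    "/bags/": ["/bags/backpacks/"],
}

# A mesh over the same pages: every page links to every other page.
all_pages = ["/", "/shoes/", "/bags/", "/shoes/running/", "/shoes/hiking/", "/bags/backpacks/"]
mesh = {page: [other for other in all_pages if other != page] for page in all_pages}

print(f"tree density: {link_density(tree):.2f}")
print(f"mesh density: {link_density(mesh):.2f}")
```

A pure tree with N pages has N-1 links, so its density falls as the site grows, while a full mesh stays at 1.0. Real sites sit somewhere in between.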

Why does Google insist on using external crawlers?

Because Search Console doesn't show the linking graph. Crawlers such as Screaming Frog, OnCrawl or Botify collect the internal links, and a graph tool such as Gephi (or the crawler's own visualization) turns them into a picture that is often eye-opening.

You discover orphaned pages, poorly connected clusters, and silos riddled with cross-links. Without this overview, it's impossible to diagnose the disorganization.
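As an illustration of the orphan check, the sketch below compares a list of known URLs (for example taken from a sitemap) against the pages that receive at least one internal link. The `find_orphans` helper, its input shapes, and the sample URLs are assumptions made for this example, not the output format of any particular crawler.

```python
def find_orphans(known_urls, links, homepage="/"):
    """Return known URLs that no other page links to (the homepage is exempt).

    `links` maps each crawled page to the pages it links to.
    """
    linked_to = set()
    for source, targets in links.items():
        # A page linking to itself does not rescue it from being orphaned.
        linked_to.update(target for target in targets if target != source)
    return sorted(
        url for url in set(known_urls)
        if url != homepage and url not in linked_to
    )


known_urls = ["/", "/guides/", "/guides/seo/", "/old-landing-page/"]
links = {
    "/": ["/guides/"],
    "/guides/": ["/guides/seo/", "/"],
    "/old-landing-page/": ["/"],  # links out, but nothing links in
}

orphans = find_orphans(known_urls, links)
print(orphans)
```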

What's the direct implication for crawl budget?

A chaotic structure wastes crawl resources. Googlebot spends time on low-priority pages because the linking structure artificially overvalues them.

Conversely, a well-designed tree channels internal PageRank flow and guides the bot toward priority content. Result: faster indexing, and updated content gets picked up sooner.
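To get a feel for how links distribute internal authority, here is a minimal sketch of the textbook PageRank power iteration applied to an internal link graph. It is a simplified model under stated assumptions (a damping factor of 0.85, uniform link weights, a hypothetical sample site), not a reproduction of what Google actually computes.

```python
def internal_pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank over an internal link graph.

    `links` maps each page to the pages it links to. Scores sum to 1.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = [t for t in links.get(page, ()) if t != page]
            if targets:
                # Split this page's score evenly across its outgoing links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Page without outgoing links: spread its score over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical site: a tree with breadcrumb-style links back up.
site = {
    "/": ["/shoes/", "/bags/"],
    "/shoes/": ["/", "/shoes/model-x/", "/shoes/model-y/"],
    "/bags/": ["/", "/bags/backpack-z/"],
    "/shoes/model-x/": ["/", "/shoes/"],
    "/shoes/model-y/": ["/", "/shoes/"],
    "/bags/backpack-z/": ["/", "/bags/"],
}

scores = internal_pagerank(site)
for page, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{score:.3f}  {page}")
```

Running the same function on a before and after version of a link graph gives a rough idea of which pages gain or lose internal weight when the structure changes.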

SEO Expert opinion

Is this recommendation actually applied in the field?

Let's be honest: most e-commerce or editorial sites have tangled linking structures, not clean trees. Faceted filters, aggressive cross-selling, "similar articles" widgets everywhere — all this generates interconnections that drown the hierarchy.

Yet some of these sites rank well. Why? Because Google tolerates some disorder if click depth remains reasonable and strategic pages receive enough internal authority. But tolerated does not mean optimal.

What nuances should be added to the tree metaphor?

A pure tree — strictly hierarchical with no cross-links — is rarely optimal for modern UX or SEO. Airtight silos prevent PageRank distribution between related topics.

What Mueller targets is the opposite excess: sprawling linking where each page links to 20 others "just in case". The right balance? A tree-like framework reinforced by a few strategic bridges between silos, not a web.

In which cases does this rule not fully apply?

Media sites or content aggregators: editorial logic often imposes dense contextual linking. An article can legitimately link to 10 related articles. Google adapts if thematic relevance is strong.

Complex marketplaces: with filters, comparators and dynamic pages, a pure tree is impossible to maintain. Here, the focus shifts to crawl control via robots.txt, canonical tags and selective nofollow. [To verify]: Google has never stated a quantitative threshold beyond which linking becomes "too dense".
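As a sketch of the robots.txt side of crawl control, the example below checks which URLs a set of rules would block, using Python's standard `urllib.robotparser`. The `/filter/` and `/compare/` paths and the `example.com` URLs are hypothetical. Note also that the standard-library parser matches rules by simple path prefix, so it may not evaluate wildcard patterns the way Googlebot does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules keeping crawlers out of faceted and comparison URLs.
ROBOTS_TXT = """\
User-agent: *
Disallow: /filter/
Disallow: /compare/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


urls = [
    "https://example.com/shoes/running/",
    "https://example.com/filter/color-red/",
    "https://example.com/compare/model-x-vs-model-y/",
]

blocked = blocked_urls(ROBOTS_TXT, urls)
for url in blocked:
    print("blocked:", url)
```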

Practical impact and recommendations

How do you concretely audit your linking structure?

Crawl your site with Screaming Frog, OnCrawl or Sitebulb. Export the internal links graph and visualize it with Gephi or the crawler's native tool.

Identify the central point: is it really the homepage? Are major categories directly connected? Spot isolated nodes (orphaned pages) and poorly connected clusters.

- Crawl the site in "follow internal links only" mode

- Generate a linking graph (CSV or GEXF format)

- Identify pages with high centrality (natural hubs)

- Spot orphaned or poorly linked pages

- Verify that no page sits more than 3 to 4 clicks from the homepage
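The checklist above can be sketched with a few lines of standard-library Python once the crawler has exported its internal links. The example assumes a CSV edge list with `Source` and `Destination` columns; those column names, the sample data, and the helper functions are assumptions for illustration, so adapt them to whatever your crawler actually exports.

```python
import csv
import io
from collections import Counter, deque

# Hypothetical export: one row per internal link.
SAMPLE_EXPORT = """Source,Destination
/,/shoes/
/,/bags/
/shoes/,/shoes/running/
/shoes/running/,/shoes/running/model-x/
/shoes/running/model-x/,/shoes/running/model-x/reviews/
/shoes/running/model-x/reviews/,/shoes/running/model-x/reviews/page-2/
/bags/,/shoes/
/shoes/running/,/shoes/
"""


def load_graph(csv_text):
    """Build {page: set of linked pages} from a Source/Destination CSV."""
    graph = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        source, target = row["Source"], row["Destination"]
        graph.setdefault(source, set()).add(target)
        graph.setdefault(target, set())
    return graph


def click_depths(graph, homepage="/"):
    """Breadth-first search: minimum number of clicks from the homepage."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in graph.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def inbound_counts(graph):
    """Number of internal links pointing at each page (a crude hub signal)."""
    counts = Counter()
    for source, targets in graph.items():
        for target in targets:
            if target != source:
                counts[target] += 1
    return counts


graph = load_graph(SAMPLE_EXPORT)
depths = click_depths(graph)
too_deep = sorted(page for page, depth in depths.items() if depth > 4)
top_hub = inbound_counts(graph).most_common(1)

print("too deep:", too_deep)
print("top hub:", top_hub)
```

Inbound link count is only a crude stand-in for centrality; Gephi and similar tools offer richer measures on the same edge list.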

What critical errors must you avoid?

First error: adding links everywhere for the sake of linking. A footer with 50 links to random categories doesn't create structure; it creates noise.

Second error: neglecting internal link anchor text. A perfect structure with generic anchors ("click here", "learn more") loses part of its semantic effectiveness.
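A quick way to spot generic anchors is to scan the crawled links for a short list of uninformative phrases. The sketch below assumes the links are available as `(source, target, anchor_text)` tuples. The phrase list and the `generic_anchor_links` helper are illustrative assumptions, to be extended for your own site and language.

```python
# Hypothetical list of anchors that carry no topical information.
GENERIC_ANCHORS = {"click here", "learn more", "read more", "here", "more"}


def generic_anchor_links(links):
    """Return the (source, target, anchor) tuples whose anchor text is generic."""
    flagged = []
    for source, target, anchor in links:
        # Normalize case and collapse whitespace before comparing.
        normalized = " ".join(anchor.lower().split())
        if normalized in GENERIC_ANCHORS:
            flagged.append((source, target, anchor))
    return flagged


links = [
    ("/", "/shoes/", "Running shoes"),
    ("/shoes/", "/shoes/running/", "Click here"),
    ("/blog/post/", "/guides/", "  learn   more "),
]

flagged = generic_anchor_links(links)
for source, target, anchor in flagged:
    print(f"{source} -> {target}: {anchor.strip()!r}")
```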

Third error: believing that an XML sitemap compensates for chaotic linking. The sitemap helps with discovery and initial indexing, not with hierarchy understanding or PageRank distribution.

What must you concretely do to improve your structure?

Define your pillar pages (thematic hubs) and ensure they're one click from the homepage. From each pillar, branch out logically into subcategories or articles.

Limit cross-links to strict necessity: contextual relevance only. A "similar products" link should stay within the same category, not point to your entire catalog.
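To review how many links cross category boundaries, one option is to treat the first path segment of each URL as its silo and list the links whose source and target differ. This is a sketch under that assumption: the `top_section` and `cross_silo_links` helpers are hypothetical, and sites whose URL paths do not mirror their categories would need another mapping.

```python
from urllib.parse import urlsplit


def top_section(url):
    """First path segment of a URL, used here as a stand-in for its silo."""
    segments = [part for part in urlsplit(url).path.split("/") if part]
    return segments[0] if segments else ""


def cross_silo_links(links):
    """Return (source, target) pairs that jump from one silo to another.

    Links from or to the homepage (empty section) are not counted.
    """
    crossing = []
    for source, target in links:
        source_section, target_section = top_section(source), top_section(target)
        if source_section and target_section and source_section != target_section:
            crossing.append((source, target))
    return crossing


links = [
    ("/shoes/model-x/", "/shoes/model-y/"),    # same silo
    ("/shoes/model-x/", "/bags/backpack-z/"),  # crosses silos
    ("/", "/bags/"),                           # homepage link, ignored
]

crossing = cross_silo_links(links)
print(crossing)
```

The output is a review list rather than a list of errors: a few deliberate bridges between related silos are expected, and the point is to confirm each one is intentional.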

Review your linking structure regularly as you add content. A site that grows organically eventually drifts toward chaos if nobody monitors it.

❓ Frequently Asked Questions

Which free tool lets you quickly visualize an internal linking graph?

Can an e-commerce site with faceted filters have a clean tree structure?

Should you remove all cross-links between silos to respect the tree structure?

Does Google penalize a site whose internal linking is not tree-shaped?

What maximum click depth does Google recommend?

Other SEO insights extracted from this same Google Search Central video · published on 14/03/2022
