Official statement

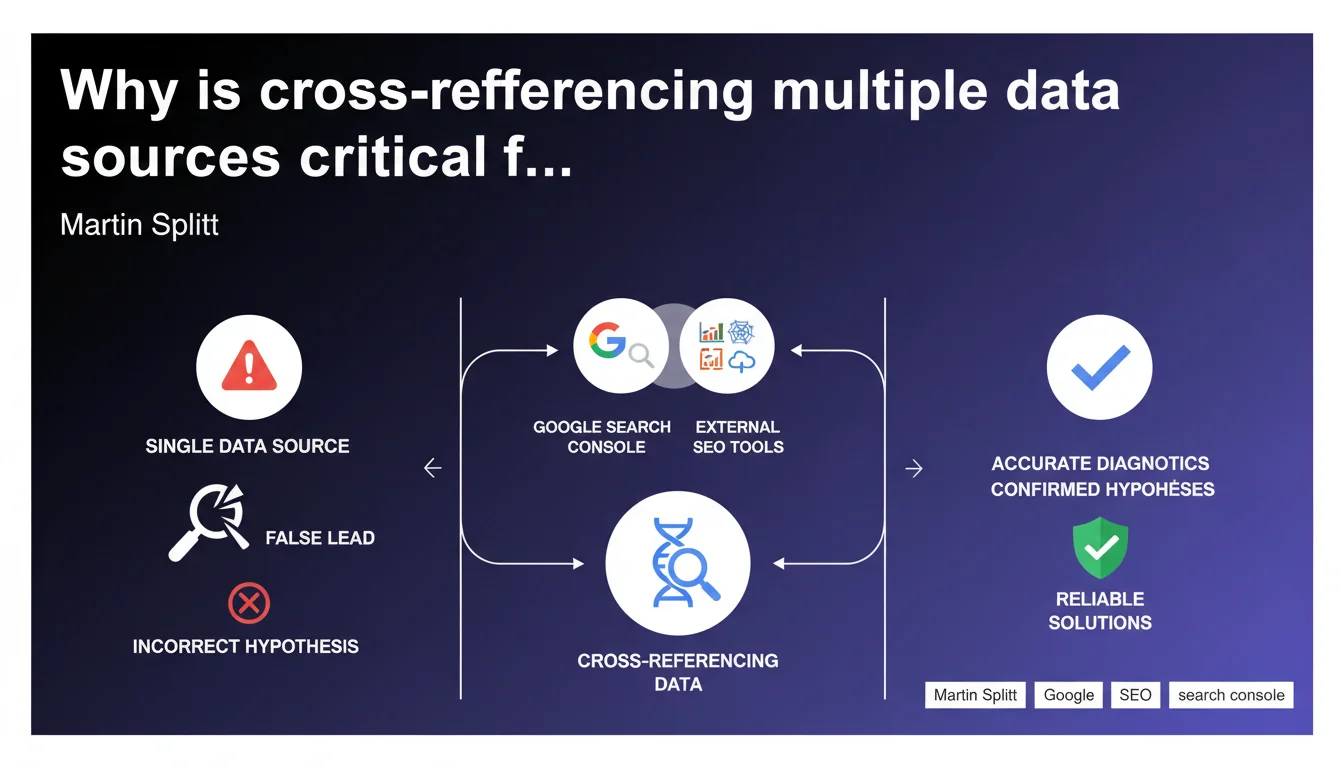

Google advises against relying solely on Search Console to diagnose SEO problems. Martin Splitt emphasizes that you should systematically cross-reference it with third-party tools to avoid false leads and validate hypotheses. A single-source approach makes it easy to miss the real problem.

What you need to understand

Why does Google warn against relying exclusively on Search Console?

Because Search Console is only a partial window into what Google actually sees. The data is aggregated, sometimes delayed, and certain crucial metrics — like real user behavior or competitive landscape — simply aren't there.

A technical issue detected in GSC can mask a deeper problem — or be a symptom with no real consequences. Without external perspective, you risk treating the symptom instead of the root cause.

What are the concrete limitations of Search Console?

GSC only covers Google Search, so organic traffic from other search engines never appears in it. Its data is also truncated rather than complete: the interface lists at most 1,000 rows per report and anonymized queries are left out, which skews analysis for large sites. Performance reports sometimes aggregate different queries under a single line.

Another key point: GSC doesn't capture competitive signals. From GSC alone, you can't tell whether a traffic drop stems from a technical issue or from a competitor who simply optimized their content better.

What does "cross-referencing sources" mean for an SEO practitioner?

Concretely: compare GSC data with Google Analytics (or GA4), a crawl tool (Screaming Frog, Oncrawl), a rank tracker (SEMrush, Ahrefs), and potentially server logs. Each source provides a different angle.

A typical example: GSC shows a drop in impressions, but Analytics shows total traffic remains stable. The lost impressions were probably on queries that brought few clicks, so the likely cause is keyword drift or cannibalization, not mass deindexing.

- Search Console: view of what Google indexes and how it ranks your pages

- Analytics: real user behavior, bounce rate, conversions

- Crawl tools: detection of technical errors (404s, redirects, missing tags)

- Rank trackers: precise tracking of fluctuations by keyword and competitor

- Server logs: actual frequency of Googlebot visits, pages crawled vs ignored
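The impressions-versus-traffic comparison described above can be sketched in a few lines of Python. This is only an illustration: the daily figures, the two date buckets, and the 20% and 5% thresholds are made-up assumptions, and real data would come from each tool's export.

```python
from statistics import mean

# Hypothetical daily exports (date -> value); not real data.
gsc_impressions = {"2022-10-01": 1200, "2022-10-02": 1180,
                   "2022-10-08": 800, "2022-10-09": 790}
analytics_sessions = {"2022-10-01": 300, "2022-10-02": 310,
                      "2022-10-08": 305, "2022-10-09": 298}

def pct_change(series, before, after):
    """Relative change between the mean values of two groups of dates."""
    base = mean(series[d] for d in before)
    new = mean(series[d] for d in after)
    return (new - base) / base

before = ["2022-10-01", "2022-10-02"]
after = ["2022-10-08", "2022-10-09"]

impressions_delta = pct_change(gsc_impressions, before, after)
sessions_delta = pct_change(analytics_sessions, before, after)

# Arbitrary thresholds: impressions fell by more than 20% while
# sessions moved by less than 5%.
diverging = impressions_delta < -0.20 and abs(sessions_delta) < 0.05

print(f"impressions {impressions_delta:+.1%}, sessions {sessions_delta:+.1%}")
print("sources diverge" if diverging else "sources agree")
```

When the two sources diverge like this, the next step is to look at which queries lost impressions rather than to assume deindexing.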

SEO Expert opinion

Is this recommendation truly new or just common sense?

Let's be honest: experienced SEOs have been cross-referencing sources for years. What's interesting here is that Google is making it official publicly. It means they're aware of GSC's limitations — and they don't want to be held responsible for bad diagnostics that result from it.

But this statement remains vague. Martin Splitt doesn't specify which external tools to prioritize, or how to arbitrate when two sources contradict each other. It's generic advice that leaves the practitioner alone in interpreting the data. It remains to be verified whether Google plans to enhance GSC to fill these gaps or believes it's up to the SEO to figure it out.

When can you rely solely on Search Console?

Almost never — except perhaps for one-off checks like an obvious indexing coverage issue (pages with 4xx errors, robots.txt blocking). But even then, an external crawl will confirm faster whether the problem is widespread or isolated.
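For the robots.txt case, a quick independent check is possible with Python's standard library. The sketch below parses an inline, hypothetical robots.txt (a real check would load the site's own file) and asks whether a given user agent may fetch a URL. Note that `urllib.robotparser` is a generic parser and may not match Googlebot's own matching rules in every case, so treat the result as a second opinion, not a verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; replace with the site's real file.
robots_txt = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

def is_blocked(url, user_agent="Googlebot"):
    """Return True if the parsed rules disallow this URL for the agent."""
    return not parser.can_fetch(user_agent, url)

for url in ("https://example.com/private/page",
            "https://example.com/blog/post"):
    print(url, "blocked" if is_blocked(url) else "allowed")
```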

The danger is falling into the opposite trap: multiplying tools without structuring your analysis. Result? You drown relevant data in noise. You need a clear methodology: start with a hypothesis, choose sources that validate or refute it, then cross-reference.

What stark discrepancies do we see between GSC and real-world data?

A classic: GSC shows an average position of 8, but your rank tracker reveals massive volatility (anywhere from 3 to 25) depending on the day. GSC smooths the data, hiding sharp fluctuations that may explain traffic drops.
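To see how an average can hide that kind of volatility, here is a minimal sketch using invented daily positions such as a rank tracker might export. The numbers are assumptions chosen so that the mean lands on 8.

```python
from statistics import mean, pstdev

# Hypothetical daily positions for one keyword over a week.
daily_positions = [3, 4, 25, 3, 5, 12, 4]

average = mean(daily_positions)
spread = max(daily_positions) - min(daily_positions)
volatility = pstdev(daily_positions)

print(f"average position: {average}")
print(f"best {min(daily_positions)}, worst {max(daily_positions)}, spread {spread}")
print(f"standard deviation: {volatility:.1f}")
```

The average alone looks like a stable first-page ranking, while the spread shows the keyword leaving the first page on some days.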

Another frequent case: GSC flags a Core Web Vitals issue on URLs that receive almost no traffic. Without cross-checking with Analytics, you risk wasting time optimizing pages with no business impact.
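That cross-check amounts to a simple join between the flagged URLs and traffic figures. The sketch below assumes a hypothetical list of URLs flagged in GSC, a hypothetical sessions-per-URL export from Analytics, and an arbitrary cutoff of 100 sessions.

```python
# Hypothetical inputs: URLs flagged for Core Web Vitals in GSC and
# monthly sessions per URL from Analytics.
flagged_urls = ["/checkout", "/legal/archive-2014", "/blog/guide"]
sessions_by_url = {"/checkout": 12000, "/legal/archive-2014": 3, "/blog/guide": 850}

MIN_SESSIONS = 100  # arbitrary cutoff for "worth optimizing"

# Keep only flagged URLs with meaningful traffic, busiest first.
worth_fixing = sorted(
    (url for url in flagged_urls if sessions_by_url.get(url, 0) >= MIN_SESSIONS),
    key=lambda url: sessions_by_url[url],
    reverse=True,
)

print(worth_fixing)
```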

Practical impact and recommendations

How do you organize an effective multi-source SEO diagnostic?

Start by defining your hypothesis before diving into tools. Example: starting from the observation "Traffic is down 30% in the last two weeks," decide what you suspect caused it. First check GSC (coverage, indexing errors, rankings), then Analytics (affected pages, bounce rate), then run a crawl (recent technical issues).

Next, compare timelines. If GSC shows an impression drop on the 10th, your crawl reveals a template change on the 8th, and Analytics confirms a bounce rate spike on the 11th, you've probably found your culprit.
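The timeline comparison can be sketched as merging dated events from each source and looking at what happened shortly before the drop. The month and year, the event labels, and the three-day lookback window below are illustrative assumptions.

```python
from datetime import date, timedelta

# Hypothetical events gathered from each tool: (source, date, description).
events = [
    ("GSC", date(2022, 10, 10), "impression drop"),
    ("crawl", date(2022, 10, 8), "template change"),
    ("Analytics", date(2022, 10, 11), "bounce rate spike"),
]

# One merged timeline, oldest event first.
timeline = sorted(events, key=lambda event: event[1])

drop_date = date(2022, 10, 10)
LOOKBACK = timedelta(days=3)  # arbitrary window before the drop

# Events from other sources that occurred shortly before the drop.
candidates = [
    event for event in timeline
    if event[0] != "GSC" and drop_date - LOOKBACK <= event[1] < drop_date
]

for source, day, description in timeline:
    print(day.isoformat(), source, description)
print("possible causes:", [description for _, _, description in candidates])
```

An event that precedes the drop is only a candidate cause; it still needs to be confirmed, for example by checking which templates the affected pages use.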

Which external tools should you prioritize to cross-reference with GSC?

For technical analysis: Screaming Frog (one-time audits) or Oncrawl (continuous monitoring). For rankings: SEMrush or Ahrefs, which also let you monitor competitors. For user behavior: GA4 obviously, combined with Hotjar if you want to dig deeper.

Server logs remain underutilized despite revealing what Googlebot actually does, not what we assume it does. Botify or Oncrawl integrate this analysis, but it requires server access and basic technical skills.
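For a sense of what log analysis involves, here is a minimal sketch that counts Googlebot requests per URL. It assumes the common "combined" access log format and uses a made-up log excerpt and page list. It also trusts the user-agent string, which can be spoofed; Google recommends verifying the requesting IP (for example by reverse DNS lookup) before drawing firm conclusions.

```python
import re
from collections import Counter

# Extracts path, status, and user agent from a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Hypothetical excerpt; a real script would read the server's access log.
sample_log = [
    f'66.249.66.1 - - [18/Oct/2022:10:00:00 +0000] "GET /blog/post HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [18/Oct/2022:10:05:00 +0000] "GET /blog/post HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [18/Oct/2022:10:06:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    f'66.249.66.1 - - [18/Oct/2022:10:07:00 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
]

def googlebot_hits(lines):
    """Count requests per (path, status) whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[(match.group("path"), match.group("status"))] += 1
    return hits

hits = googlebot_hits(sample_log)

# Hypothetical list of pages known from a crawl or sitemap.
site_pages = {"/blog/post", "/pricing", "/old-page"}
crawled = {path for path, _ in hits}
ignored = site_pages - crawled

print("Googlebot hits:", dict(hits))
print("never crawled in this excerpt:", ignored)
```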

What mistakes should you avoid when cross-referencing data?

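Never compare inconsistent time periods across tools. GSC and Analytics don't date their data the same way: GSC attributes clicks to the date of the search (reported in Pacific Time), while Analytics uses the session date in the property's time zone, and GSC data typically arrives with a delay of a few days. Such offsets can skew the entire analysis. Align your date ranges before drawing conclusions.

Another trap: assuming an external metric contradicts GSC when it's actually measuring something different. GSC reports clicks, impressions, CTR, and average position for Google Search only, not sessions or on-site behavior. A tool like Ahrefs estimates traffic, but it's a projection, not absolute truth.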
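One simple way to align ranges is to restrict every tool to the window for which all of them have data. The sketch below assumes hypothetical availability dates for three tools and returns their common range, or `None` when there is no overlap.

```python
from datetime import date

# Hypothetical first and last dates with data in each tool.
available = {
    "gsc": (date(2022, 9, 1), date(2022, 10, 15)),
    "analytics": (date(2022, 9, 10), date(2022, 10, 18)),
    "rank_tracker": (date(2022, 8, 20), date(2022, 10, 17)),
}

def common_range(ranges):
    """Return the (start, end) window covered by every tool, or None."""
    start = max(first for first, _ in ranges.values())
    end = min(last for _, last in ranges.values())
    return (start, end) if start <= end else None

window = common_range(available)
print(window)
```

Here the latest start date and the earliest end date bound the comparison, so no tool is asked about days it has not reported yet.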

- Define a clear hypothesis before opening tools

- Compare the same date ranges across GSC, Analytics, and third-party tools

- Verify consistency between comparable metrics (GSC clicks vs Analytics organic sessions)

- Use a crawl to confirm any technical issues detected in GSC

- Cross-reference with a rank tracker to catch variations hidden by GSC averages

- Consult server logs if in doubt about Googlebot's actual behavior

- Document each diagnostic step to avoid going in circles

Cross-referencing data isn't optional; it's the foundation of reliable SEO diagnostics. GSC alone is like driving with only one mirror: you see part of the road, but you miss the blind spots.

But beware: multiplying tools without rigorous methodology creates more confusion than clarity. If you haven't yet structured your diagnostic process or if your site's complexity makes analysis time-consuming, working with a specialized SEO agency can save you precious time — and crucially, help you avoid false leads that drain resources.

❓ Frequently Asked Questions

Can you do without Search Console if you already have Analytics and a crawl tool?

Why do GSC and Analytics sometimes show significant traffic discrepancies?

How long does it take for a change to appear in Search Console?

What is the minimum set of tools to combine for a reliable diagnostic?

How do you know whether a problem comes from Google or from your site?

🎥 From the same video 7

Other SEO insights extracted from this same Google Search Central video · published on 18/10/2022
