Official statement

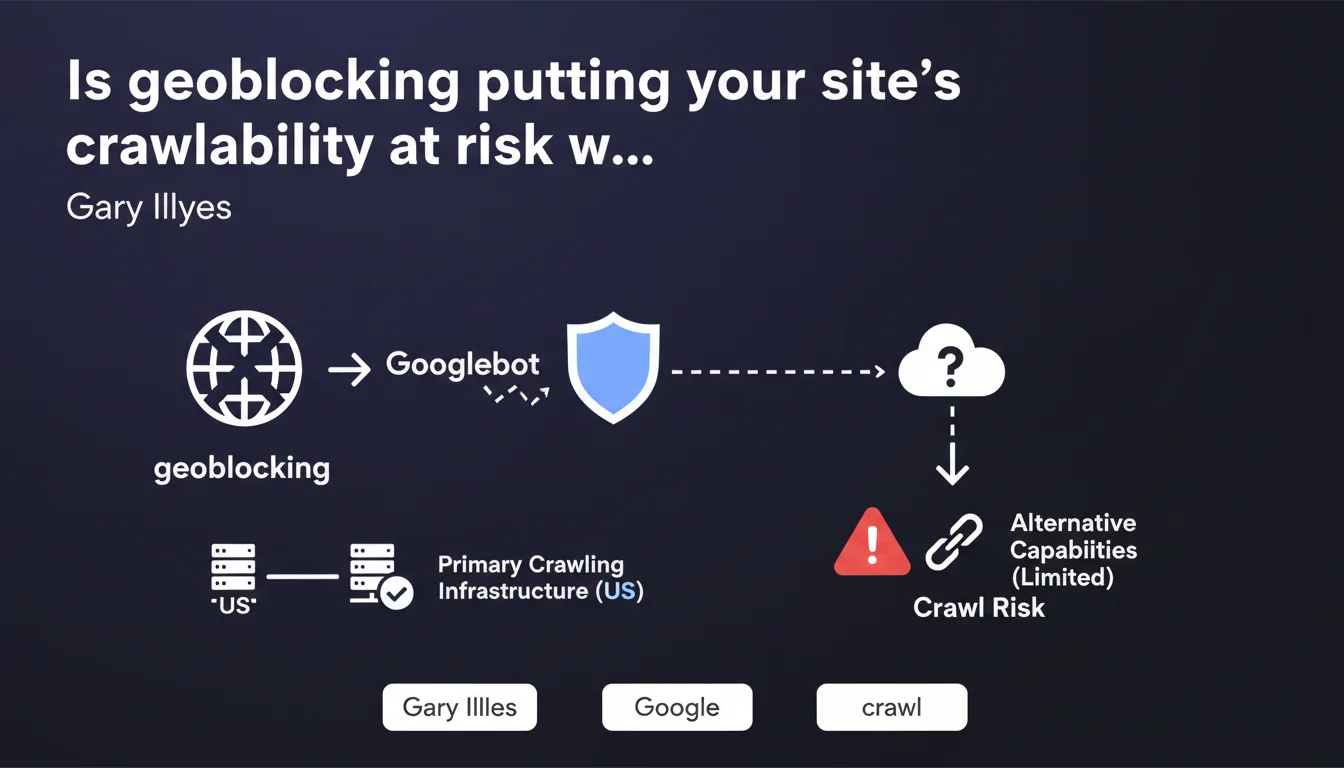

Google explicitly advises against blocking Googlebot based on geolocation. The primary crawling infrastructure is based in the United States, and Google's alternative capabilities for crawling from other geographic zones remain severely limited. If your site geoblocks American IP addresses, you risk simply not being crawled properly by Google.

What you need to understand

What is geoblocking and why do some sites use it?

Geoblocking consists of restricting access to a website based on the geographic location of the user. This practice relies on the visitor's IP address to determine their country of origin and decide whether access should be granted or denied.

Several reasons prompt companies to geoblock their sites. First, there are legal constraints: GDPR, sector-specific regulations, broadcast rights. Some content can legally be made accessible only from certain territories. Then there are commercial motivations: avoiding price comparisons between markets, protecting exclusive distribution agreements, or simply not offering the service in a given geographic zone.

Where exactly is Google's crawling infrastructure located?

Gary Illyes is explicit: the primary crawling infrastructure operates from the United States. Googlebot therefore sends the majority of its requests from American IP addresses.

Google does have alternative capabilities for crawling from other regions, but Illyes specifies that these capabilities are "very limited." In concrete terms? If you're counting on crawling from Europe or Asia because your site blocks US IPs, you're playing roulette with your indexation.

What are the concrete risks to your search rankings?

Risk number one is straightforward: not being crawled at all. Or at least being crawled so sporadically that your new pages take weeks to be discovered, your updates go unnoticed, and your crawl budget is drastically reduced.

Second risk: partial and inconsistent indexation. Some sections of your site could be accessible during rare visits from an alternative IP, others never. Result: erratic visibility, orphaned pages in the index, an inability to predict what will actually be indexed.

- Crawling from the United States represents the bulk of Googlebot traffic

- Crawling capabilities from other regions are very limited

- Blocking American IPs = risking faulty crawling

- Indexation becomes unpredictable and partial

- Crawl budget drops drastically if Googlebot is geoblocked

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. Log analysis consistently shows an overwhelming predominance of American IPs in Googlebot traffic. Crawls from other regions do exist, but represent a tiny fraction — often less than 5% of total volume.

What Gary Illyes doesn't explicitly say is that these alternative crawls seem primarily dedicated to specific use cases: spot checks for localized results, geographic performance tests, perhaps validation crawls. But counting on it for systematic crawling of your site? Pure illusion.

In what cases is geoblocking still unavoidable?

Let's be realistic: some sites have no choice. Strict legal constraints — distribution of content subject to territorial licenses, regulated financial services, healthcare sectors with country-by-country authorizations. In these cases, geoblocking isn't an option but an obligation.

The real question then becomes: how can you minimize the SEO impact? Here Google remains surprisingly vague. Illyes advises against geoblocking but offers no concrete alternative for sites forced to use it. [To verify]: are there reliable mechanisms that let Googlebot identify itself and be exempted from geoblocking, beyond simple reverse DNS verification?

What are the gray areas in this recommendation?

First unclear point: Google doesn't specify whether this American infrastructure concerns all types of crawling. Googlebot Desktop, Mobile, Image, News — all from the US? Or do certain specialized bots operate from other regions? Radio silence.

Second gray area: the "very limited alternative capabilities." Limited how? In request volume? In frequency? In geographic coverage? This phrasing remains desperately evasive. For a practitioner who needs to make decisions, it's frustrating.

Practical impact and recommendations

How can you verify if your site is geoblocking Googlebot?

First step: analyze your server logs. Look for Googlebot requests and map their originating IPs. If you're seeing almost exclusively American IPs and your Googlebot traffic has dropped since implementing geoblocking, the diagnosis is clear.
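As a starting point for that log audit, here is a minimal sketch in Python. It assumes your server writes the common "combined" access log format; the sample lines, the IP addresses in them, and the function name `summarize_googlebot_hits` are illustrative, not taken from any real log. It only matches the claimed user-agent, so the resulting IPs still need to be authenticated separately.

```python
import re
from collections import Counter

# Combined Log Format (assumed):
# ip ident user [time] "request" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]*\] "[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<ua>[^"]*)"'
)


def summarize_googlebot_hits(lines):
    """Count requests claiming to be Googlebot, per client IP and per status."""
    by_ip, by_status = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None or "Googlebot" not in match["ua"]:
            continue  # unparseable line or a different client
        by_ip[match["ip"]] += 1
        by_status[match["status"]] += 1
    return by_ip, by_status


# Illustrative sample lines, not real traffic.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE = [
    f'66.249.66.1 - - [12/Mar/2026:10:00:00 +0000] "GET / HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [12/Mar/2026:10:00:05 +0000] "GET /pricing HTTP/1.1" 403 312 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [12/Mar/2026:10:00:07 +0000] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

hits_by_ip, hits_by_status = summarize_googlebot_hits(SAMPLE)
```

A rising share of 403 or 451 responses in `hits_by_status` after a geoblocking rollout is the pattern to look for. Mapping the IPs in `hits_by_ip` to countries requires a GeoIP database, which this sketch deliberately leaves out.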

Use Google Search Console. Check the crawl statistics: a sharp drop in the number of crawled pages, an increase in 4xx client errors such as 403 (Forbidden) or 451 (Unavailable For Legal Reasons), and inconsistent response times are all warning signals.

Test manually with an American VPN. If your site is blocked from a US IP address but accessible from Europe, and you simultaneously notice indexation issues, geoblocking is the likely cause.

What technical solutions exist to reconcile geoblocking and SEO?

Classic solution: whitelist Googlebot IP addresses. Google publishes the IP ranges of its bots. Configure your firewall or CDN to allow these IPs specifically, even if they come from a normally blocked geographic zone.
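The allowlist check itself can be sketched with Python's standard `ipaddress` module. The snippet assumes the published range file is a JSON document with a `prefixes` array of `ipv4Prefix` / `ipv6Prefix` entries, which is the shape of Google's `googlebot.json`. The prefixes in `SAMPLE_RANGES` are illustrative placeholders, and the function names are hypothetical. In production you would load the live file rather than a hard-coded sample.

```python
import ipaddress
import json

# Trimmed, illustrative sample in the assumed shape of the published file.
SAMPLE_RANGES = json.loads("""
{
  "creationTime": "2026-03-12T00:00:00.000000",
  "prefixes": [
    {"ipv4Prefix": "66.249.64.0/27"},
    {"ipv6Prefix": "2001:4860:4801:10::/64"}
  ]
}
""")


def load_networks(document):
    """Turn the 'prefixes' entries into ip_network objects."""
    networks = []
    for entry in document["prefixes"]:
        prefix = entry.get("ipv4Prefix") or entry.get("ipv6Prefix")
        if prefix:
            networks.append(ipaddress.ip_network(prefix))
    return networks


def is_allowlisted(ip, networks):
    """Return True if the address falls inside any allowlisted network."""
    address = ipaddress.ip_address(ip)
    return any(
        address.version == network.version and address in network
        for network in networks
    )


NETWORKS = load_networks(SAMPLE_RANGES)
```

With the sample above, `is_allowlisted("66.249.64.5", NETWORKS)` is true and `is_allowlisted("203.0.113.9", NETWORKS)` is false. The same check can usually be expressed directly in a firewall or CDN rule; the code only illustrates the logic.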

The problem? These IP lists evolve. You need an automatic update system that re-fetches Google's published ranges on a schedule, ideally combined with a reverse DNS lookup on incoming requests to validate bot authenticity. It's doable but requires solid technical infrastructure.
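The reverse DNS check mentioned here is usually done as a forward-confirmed lookup: resolve the IP to a hostname, check that the hostname belongs to a Google crawler domain, then resolve that hostname back and confirm it returns the original IP. The sketch below uses only the standard `socket` module. The suffix tuple is an assumption to re-check against Google's current verification guidance, and the injectable `reverse_lookup` / `forward_lookup` parameters are a hypothetical design choice so the logic can be tested without network access.

```python
import socket

# Assumed crawler hostname suffixes; verify against Google's documentation.
GOOGLE_CRAWLER_SUFFIXES = (".googlebot.com", ".google.com")


def _reverse_lookup(ip):
    """PTR lookup: IP address -> primary hostname."""
    return socket.gethostbyaddr(ip)[0]


def _forward_lookup(hostname):
    """A/AAAA lookup: hostname -> set of IP address strings."""
    return {info[4][0] for info in socket.getaddrinfo(hostname, None)}


def verify_googlebot(ip, reverse_lookup=_reverse_lookup,
                     forward_lookup=_forward_lookup):
    """Forward-confirmed reverse DNS check for a claimed Googlebot IP."""
    try:
        hostname = reverse_lookup(ip).rstrip(".").lower()
        if not hostname.endswith(GOOGLE_CRAWLER_SUFFIXES):
            return False
        # The PTR record alone can be forged, so confirm the forward lookup.
        return ip in forward_lookup(hostname)
    except OSError:
        # socket.herror and socket.gaierror are both OSError subclasses.
        return False
```

DNS lookups are slow relative to a request, so results are normally cached per IP rather than resolved on every hit. IPv6 addresses may need normalizing before the string comparison, which this sketch does not do.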

Alternative: use the user-agent rather than IP geolocation to authorize Googlebot. Be careful however — this method opens potential security loopholes if not coupled with reverse DNS verification. Anyone can spoof a user-agent.
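To make the spoofing risk concrete, here is a minimal sketch of a geoblocking decision that treats the user-agent only as a hint and requires a separate authenticity check before granting the exemption. The function name `should_block`, its parameters, and the `is_verified_crawler` callback are all hypothetical. The callback stands in for whatever verification you use, such as a reverse DNS check or an IP range allowlist.

```python
def should_block(country, user_agent, ip, blocked_countries, is_verified_crawler):
    """Decide whether a request should be geoblocked.

    country: ISO country code resolved from the client IP (resolution not shown).
    is_verified_crawler: callable taking the IP and returning True only when
        the address has been authenticated as a genuine crawler.
    """
    if country not in blocked_countries:
        return False  # visitor is outside the restricted zone
    claims_googlebot = "Googlebot" in user_agent
    if claims_googlebot and is_verified_crawler(ip):
        return False  # authenticated crawler: exempt from the geoblock
    return True  # regular visitor, or an unverified user-agent claim


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# A spoofed user-agent from an unverified IP stays blocked.
spoofed = should_block("US", GOOGLEBOT_UA, "203.0.113.9", {"US"}, lambda ip: False)
# A verified crawler from the same blocked country is let through.
genuine = should_block("US", GOOGLEBOT_UA, "66.249.66.1", {"US"}, lambda ip: True)
```

Checking the user-agent first keeps the more expensive verification off the path for ordinary visitors, while the verification step is what actually closes the loophole.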

What should you do if geoblocking is a legal requirement?

Document precisely the legal reasons that impose this geoblocking. Then implement a specific technical exception for Googlebot, ensuring that this exception doesn't violate regulatory constraints — which is generally the case since Googlebot isn't an end user.

Communicate with legal and technical teams. Geoblocking for legal reasons targets human users in certain jurisdictions, not search engines indexing content. Legally, allowing Googlebot generally poses no problem.

- Audit your logs to identify where Googlebot requests originate

- Check in Search Console if your crawl rate has dropped

- Whitelist the official Googlebot IP ranges in your firewall

- Implement reverse DNS verification to authenticate Googlebot

- Automate the update of authorized IP lists

- Regularly test how Googlebot sees your site, for example with the URL Inspection tool in Search Console

- Document your geoblocking exceptions for security audits

❓ Frequently Asked Questions

Can Google crawl my site from Europe if I block American IPs?

How do I allow Googlebot without completely disabling my geoblocking?

Does geoblocking affect all types of Googlebot (Desktop, Mobile, Image)?

My site is legally required to geoblock certain countries. What should I do?

How can I tell whether my site is currently blocking Googlebot?

Other SEO insights extracted from this same Google Search Central video · published on 12/03/2026