Official statement

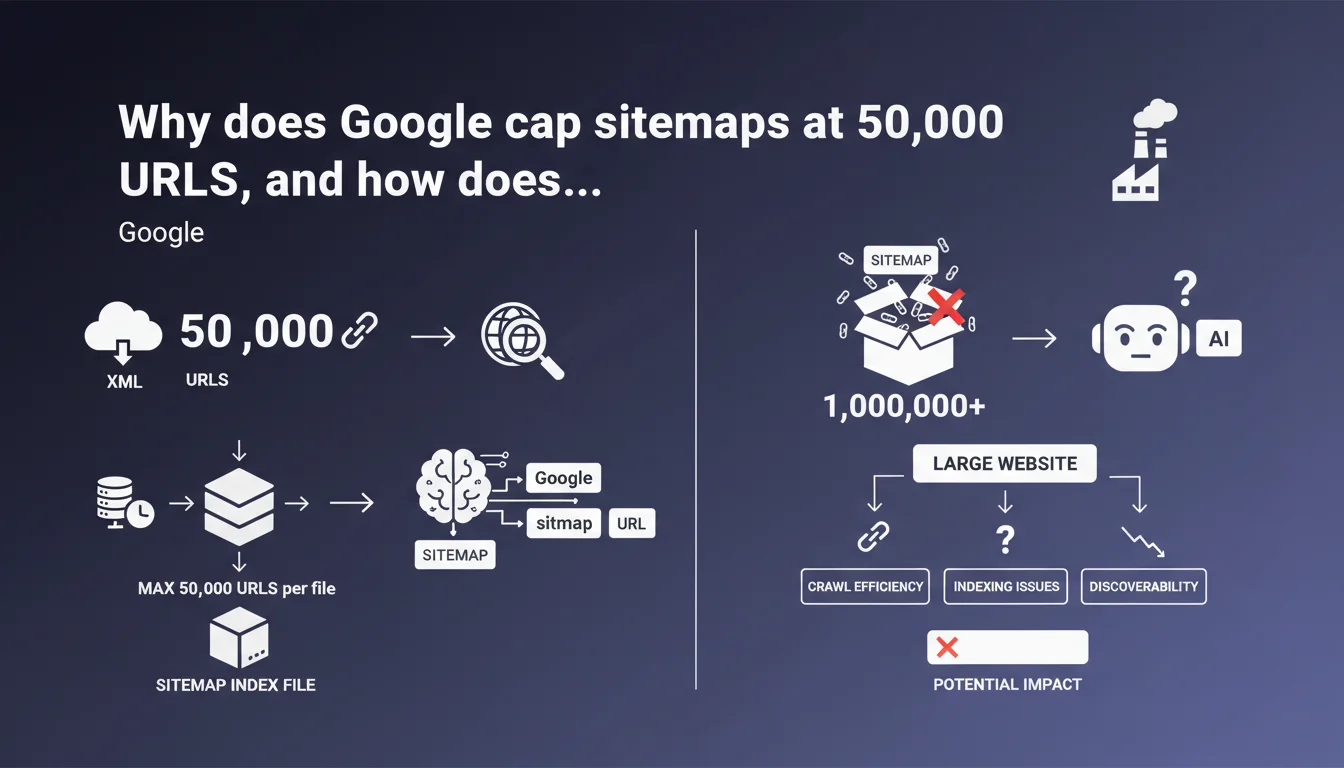

Google enforces a strict limit of 50,000 URLs per XML sitemap file. This same constraint applies to sitemap index files, which can only reference up to 50,000 other sitemaps. For large sites, this rule requires intelligent structuring of the sitemap hierarchy.

What you need to understand

Is the 50,000 URL limit really that constraining?

Yes, especially for e-commerce or media sites that generate thousands of pages. A site with 500,000 products will need to split its sitemaps into at least 10 separate files, then reference them via a sitemap index.

The problem intensifies once you reach millions of URLs. With 2 million pages, you'll need at least 40 individual sitemaps, and the sitemap index that organizes them can itself point to no more than 50,000 sitemap files. In practice, this leaves plenty of room, but the structure becomes an organizational headache.
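The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the constant and function names are made up for the example, and the limit is the one quoted in this article.

```python
MAX_URLS_PER_SITEMAP = 50_000  # limit quoted in the article


def sitemaps_needed(url_count: int, per_file: int = MAX_URLS_PER_SITEMAP) -> int:
    """Return the minimum number of sitemap files needed for url_count URLs."""
    # Integer ceiling division, so no floating-point rounding is involved.
    return (url_count + per_file - 1) // per_file


print(sitemaps_needed(500_000))    # 10
print(sitemaps_needed(2_000_000))  # 40
```

Passing a lower `per_file` value (for example 40,000) shows how a safety margin changes the number of files.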

Why does Google impose this technical limit?

It's about server performance and parsing efficiency. An XML file with hundreds of thousands of URLs would be heavy, slow down crawling, and could cause timeout issues on Googlebot's end. By fragmenting, Google ensures each request remains manageable.

The 50,000 URL limit has existed since the first versions of the sitemap protocol. It has never been increased, even though server power has exploded. Google prefers to maintain a stable architecture rather than constantly adapt its specs.

Does the limit also apply to file size in MB?

Yes. Beyond the number of URLs, Google also enforces a 50 MB limit, measured on the uncompressed file. Gzip compression reduces the transfer size but does not raise that limit. If your sitemap exceeds either of these two constraints (URL count or file weight), you must split it.

In practice, you'll almost always hit the URL limit before the file size limit, unless your <lastmod>, <priority>, and <changefreq> tags bloat each entry. Google ignores <priority> and <changefreq>, so you might as well remove them. Keep <lastmod> only if its values are consistently accurate, since that is the condition under which Google uses it.

- Maximum 50,000 URLs per XML sitemap

- Maximum 50,000 sitemaps referenced in a sitemap index

- File size limit: 50 MB, measured uncompressed

- Mandatory fragmentation for large sites

- No official documented exceptions

SEO Expert opinion

Is this rule respected by all CMS platforms and sitemap generators?

No, and that's where things get tricky. Some WordPress or PrestaShop plugins generate monolithic sitemaps that significantly exceed 50,000 URLs. Google crawls them anyway, but truncates beyond the limit — meaning some URLs will never be discovered via the sitemap.

I've seen sites with 80,000 products and a single sitemap. Result: 30,000 URLs ignored, with no alert in Search Console. [To verify]: Google doesn't consistently surface explicit errors when a sitemap exceeds the limit — it simply processes the first 50,000 entries and stops.

Is the sitemap index limit really problematic?

Let's be honest: very rarely. Filling 50,000 distinct sitemap files means managing up to 2.5 billion URLs (50,000 × 50,000). At that scale, the real challenge isn't the technical limit anymore, but governance: how do you maintain this architecture without losing track?

However, the rule forces you to think about structure from the start. A site that generates a new sitemap daily (common strategy for media outlets) must anticipate volume over several years. After 137 years, you'd exceed the limit — okay, nobody worries about that.
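As a quick sanity check on these orders of magnitude, here is a small sketch using only the two limits quoted in this article. The variable names are illustrative.

```python
MAX_URLS_PER_SITEMAP = 50_000    # limit quoted in the article
MAX_SITEMAPS_PER_INDEX = 50_000  # limit quoted in the article

# Maximum number of URLs addressable through a single sitemap index.
max_urls_per_index = MAX_URLS_PER_SITEMAP * MAX_SITEMAPS_PER_INDEX

# Years needed to fill one index when publishing one new sitemap per day.
years_until_full = MAX_SITEMAPS_PER_INDEX / 365

print(max_urls_per_index)       # 2500000000
print(round(years_until_full))  # 137
```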

Does Google communicate stats about rejected sitemaps for exceeding limits?

No, and that's frustrating. Search Console displays generic errors like "unreadable sitemap" or "too large", but never specifies whether it's due to URL count or file weight. Impossible to know how many sites are affected.

[To verify]: Field observation suggests Google sometimes crawls beyond 50,000 URLs if the sitemap is well-formed and the server responds quickly. But relying on this is playing roulette — officially, the limit holds.

Practical impact and recommendations

What should you do concretely to stay compliant?

Fragment your sitemaps before reaching the limit. If you have 30,000 URLs today, already plan the structure for 100,000. Create thematic sitemaps (products, categories, articles) or time-based ones (by month, by year).
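One way to fragment a URL list is sketched below, using only the Python standard library. It is a minimal example, not a full generator: the 40,000 chunk size is an assumed safety margin, the `example.com` URLs are placeholders, and the helper names are hypothetical.

```python
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
CHUNK_SIZE = 40_000  # assumption: safety margin below the 50,000 URL limit


def chunk(urls, size=CHUNK_SIZE):
    """Yield successive slices of at most `size` URLs."""
    for start in range(0, len(urls), size):
        yield urls[start:start + size]


def build_sitemap(urls) -> bytes:
    """Serialize a list of URLs as a sitemap <urlset> document."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for address in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = address  # text is XML-escaped on output
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Placeholder data: 100,000 product URLs split into files of at most 40,000.
urls = [f"https://example.com/product/{i}" for i in range(100_000)]
files = [build_sitemap(batch) for batch in chunk(urls)]
print(len(files))  # 3
```

Grouping by theme or by date works the same way: build one URL list per group, then chunk each list separately.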

Use a sitemap index to orchestrate everything. Declare it in your robots.txt and in Search Console. Test the structure with an XML validator to verify that no file exceeds the thresholds.
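A sitemap index is just another XML file listing the location of each child sitemap. The sketch below builds one and produces the matching `robots.txt` line. The file names and the `example.com` domain are placeholders, and the helper names are hypothetical.

```python
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_SITEMAPS_PER_INDEX = 50_000  # limit quoted in the article


def build_sitemap_index(sitemap_urls) -> bytes:
    """Serialize a list of sitemap file URLs as a <sitemapindex> document."""
    if len(sitemap_urls) > MAX_SITEMAPS_PER_INDEX:
        raise ValueError("a sitemap index can reference at most 50,000 sitemaps")
    root = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for address in sitemap_urls:
        entry = ET.SubElement(root, "sitemap")
        ET.SubElement(entry, "loc").text = address
    return ET.tostring(root, encoding="utf-8", xml_declaration=True)


def robots_txt_line(index_url: str) -> str:
    """Return the robots.txt directive that declares the sitemap index."""
    return f"Sitemap: {index_url}"


index_xml = build_sitemap_index([
    "https://example.com/sitemap-products-1.xml.gz",
    "https://example.com/sitemap-articles-1.xml.gz",
])
print(robots_txt_line("https://example.com/sitemap_index.xml"))
```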

What mistakes should you absolutely avoid?

Never generate a single sitemap for a large site. Never nest multiple levels of sitemap indexes. Never forget to compress with gzip — it reduces bandwidth and improves response times.
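Compression itself is a one-liner with Python's standard `gzip` module. The sketch below also refuses to compress a file that is already over the size threshold, on the assumption that the 50 MB limit is measured on the uncompressed content. The function name is illustrative.

```python
import gzip

MAX_UNCOMPRESSED_BYTES = 50 * 1024 * 1024  # 50 MB, measured before compression


def compress_sitemap(xml_bytes: bytes) -> bytes:
    """Gzip a sitemap after checking its uncompressed size."""
    if len(xml_bytes) > MAX_UNCOMPRESSED_BYTES:
        raise ValueError("sitemap exceeds 50 MB uncompressed; split it first")
    return gzip.compress(xml_bytes)


# Repetitive XML compresses very well, which is typical of sitemaps.
sample = b"<url><loc>https://example.com/page</loc></url>" * 1000
packed = compress_sitemap(sample)
print(len(sample), len(packed))
```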

Also avoid stuffing your sitemaps with unnecessary URLs: duplicate pages, those canonicalized elsewhere, blocked by robots.txt, or with noindex. Each URL counts toward the 50,000 limit, so only include those deserving to be crawled.
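The filtering rule above might look like the following sketch. The `Page` record and its fields are hypothetical. In a real project these flags would come from your CMS or crawl data.

```python
from dataclasses import dataclass


@dataclass
class Page:
    # Hypothetical record; field names are assumptions for this example.
    url: str
    canonical_url: str
    noindex: bool = False
    blocked_by_robots: bool = False


def sitemap_worthy(page: Page) -> bool:
    """Keep only self-canonical, indexable, crawlable pages."""
    return (
        page.canonical_url == page.url
        and not page.noindex
        and not page.blocked_by_robots
    )


def select_urls(pages) -> list:
    """Return eligible URLs in their original order, without duplicates."""
    seen = set()
    selected = []
    for page in pages:
        if sitemap_worthy(page) and page.url not in seen:
            seen.add(page.url)
            selected.append(page.url)
    return selected


pages = [
    Page("https://example.com/a", "https://example.com/a"),
    Page("https://example.com/a?ref=x", "https://example.com/a"),  # canonicalized elsewhere
    Page("https://example.com/b", "https://example.com/b", noindex=True),
    Page("https://example.com/c", "https://example.com/c", blocked_by_robots=True),
    Page("https://example.com/a", "https://example.com/a"),  # duplicate
]
print(select_urls(pages))  # ['https://example.com/a']
```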

How do you verify that your site respects these rules?

Inspect your sitemaps with a tool like Screaming Frog or a simple grep on the XML. Count the number of <url> or <sitemap> tags. Check file weights before and after compression.
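For a scripted check, the sketch below counts entries and measures file weight before and after compression. It assumes the 50 MB threshold applies to the uncompressed file, and `audit_sitemap` is a hypothetical helper name, not an existing tool.

```python
import gzip
from xml.etree import ElementTree as ET

MAX_ENTRIES = 50_000                       # limit quoted in the article
MAX_UNCOMPRESSED_BYTES = 50 * 1024 * 1024  # 50 MB


def audit_sitemap(raw: bytes) -> dict:
    """Count <url>/<sitemap> entries and report sizes for one sitemap file."""
    # Gzip files start with the magic bytes 0x1f 0x8b.
    xml_bytes = gzip.decompress(raw) if raw[:2] == b"\x1f\x8b" else raw
    root = ET.fromstring(xml_bytes)
    # Parsed tags look like "{namespace}url", so strip the namespace part.
    entries = sum(
        1 for child in root
        if child.tag.rsplit("}", 1)[-1] in ("url", "sitemap")
    )
    return {
        "entries": entries,
        "uncompressed_bytes": len(xml_bytes),
        "compressed_bytes": len(gzip.compress(xml_bytes)),
        "within_limits": entries <= MAX_ENTRIES
        and len(xml_bytes) <= MAX_UNCOMPRESSED_BYTES,
    }


sample = (
    b'<?xml version="1.0" encoding="UTF-8"?>'
    b'<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    b"<url><loc>https://example.com/a</loc></url>"
    b"<url><loc>https://example.com/b</loc></url>"
    b"</urlset>"
)
report = audit_sitemap(sample)
print(report["entries"], report["within_limits"])  # 2 True
```

The same function accepts a gzipped file read in binary mode, so it can be run over every file listed in the sitemap index.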

In Search Console, monitor errors like "sitemap too large" or "missing URL". If you notice certain pages never appearing in the index despite their presence in the sitemap, it's often a sign that the limit was silently exceeded.

- Split sitemaps beyond 40,000 URLs to maintain a safety margin

- Create a sitemap index to orchestrate multiple files

- Compress systematically with gzip

- Declare the sitemap index in robots.txt and Search Console

- Exclude canonicalized, noindexed, or blocked URLs

- Automate generation to avoid oversights during updates

- Monitor errors in Search Console every week

❓ Frequently Asked Questions

What happens if my sitemap exceeds 50,000 URLs?

Can I nest multiple levels of sitemap indexes?

Does the 50 MB limit apply to the compressed or the uncompressed file?

Should I include the lastmod, priority, and changefreq tags?

How should I structure my sitemaps for a site with several million pages?

Source: Google Search Central video, published on 09/08/2023.
