Official statement

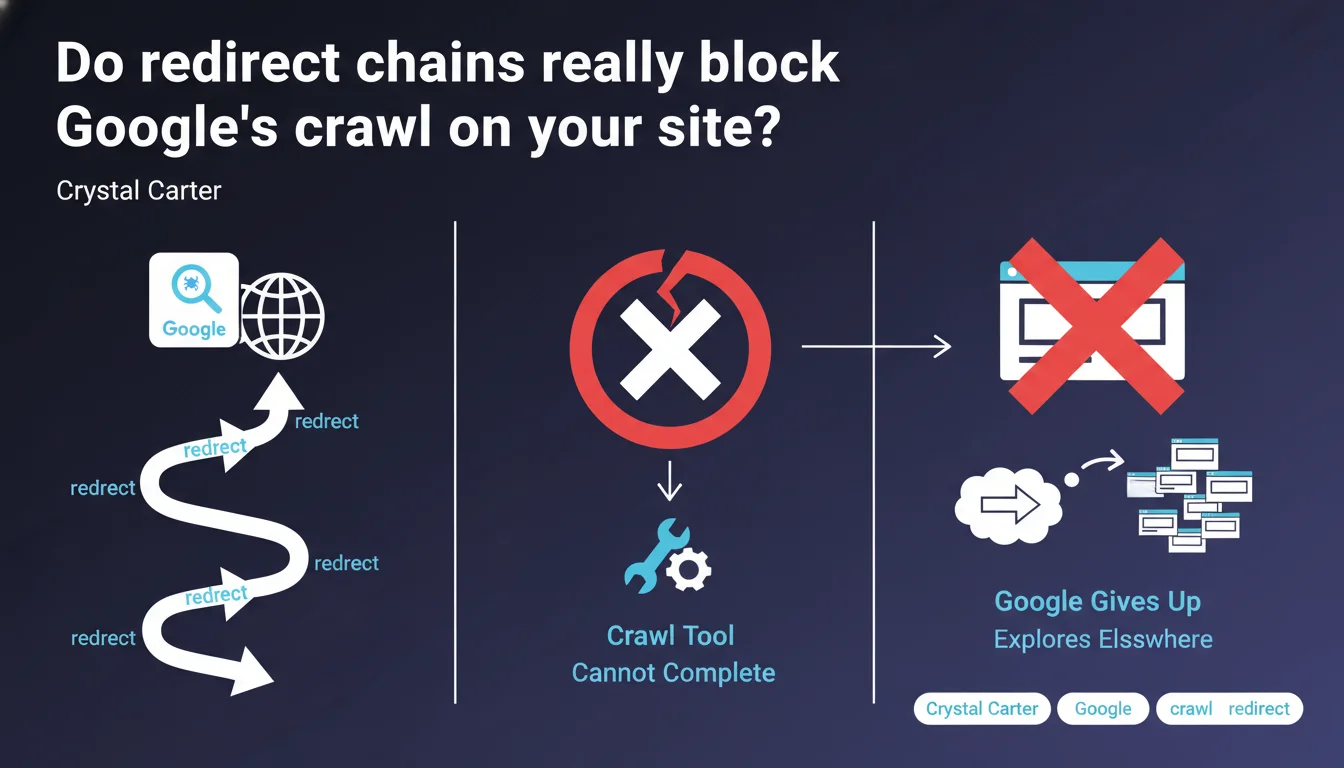

Google simply stops crawling a website if redirect chains slow its crawl down too much. In other words: too many cascading 301s, and Googlebot moves on. The direct consequence? Entire sections of your site may never be indexed.

What you need to understand

Crystal Carter is crystal clear: Google does not persist with a site that presents complex redirect chains. If the crawler encounters too many successive jumps (A → B → C → D), it gives up and spends its crawl budget elsewhere.

This statement confirms what many have already suspected in the field: crawl time is not infinite, and Googlebot optimizes its resources. A site that multiplies chained redirects shoots itself in the foot.

What exactly is a redirect chain?

We speak of a chain when one URL redirects to a second URL, which itself redirects to a third, or even a fourth. Classic example: old-url.com/page → new-url.com/page → new-url.com/en/page → new-url.com/en/final-page.
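To make the definition concrete, here is a minimal Python sketch that models the example above as a plain dictionary and counts the jumps. The `follow_chain` helper and its `max_hops` guard of 10 are illustrative assumptions, not a description of how Googlebot works internally.

```python
# The example chain from the text: each key redirects to its value.
EXAMPLE_REDIRECTS = {
    "old-url.com/page": "new-url.com/page",
    "new-url.com/page": "new-url.com/en/page",
    "new-url.com/en/page": "new-url.com/en/final-page",
}


def follow_chain(redirects, start, max_hops=10):
    """Return every URL visited, starting at `start`, until no redirect remains."""
    path = [start]
    # Stop when the current URL no longer redirects, or when the guard is hit.
    while path[-1] in redirects and len(path) - 1 < max_hops:
        path.append(redirects[path[-1]])
    return path


path = follow_chain(EXAMPLE_REDIRECTS, "old-url.com/page")
jumps = len(path) - 1
```

Here `path` holds four URLs, so `jumps` is 3: three redirects before a crawler reaches the final page.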

In practice, Googlebot generally handles 1 to 2 jumps without trouble, but beyond that the risk of abandonment increases significantly. This is not just a theoretical concern: professional crawl tools run into the same limitation.

Why does Google give up rather than persist?

Crawl budget is a limited resource. Google cannot spend hours following one redirect after another on a single site when millions of other pages are waiting to be crawled.

If your site forces Googlebot to spend its time on unnecessary redirects, your site becomes more expensive to crawl for the same amount of content. Result: Googlebot returns less frequently, crawls fewer pages, and your indexation suffers directly.
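A back-of-the-envelope sketch shows why chains eat into crawl budget. It assumes, as a deliberate simplification, that a site gets a fixed number of HTTP requests and that every jump costs one request. The `pages_reached` function and the budget of 1,000 requests are hypothetical; real crawl scheduling is far more complex.

```python
def pages_reached(request_budget, jumps_per_page):
    """Pages a crawler can reach if each page sits behind `jumps_per_page` redirects.

    Reaching one page costs one request per jump plus one for the page itself.
    """
    return request_budget // (jumps_per_page + 1)


direct = pages_reached(1000, 0)   # no redirects: every request fetches a page
chained = pages_reached(1000, 3)  # A -> B -> C -> D: four requests per page
```

Under these assumptions the same budget reaches 1,000 pages without redirects but only 250 when every page sits behind three jumps.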

What are the concrete impacts on indexation?

The consequences are multiple and measurable. First, some valid pages may never be indexed: even though they are technically accessible, Googlebot gives up before reaching them.

Next, the refresh rate of your content will slow down. An important update risks taking weeks to be accounted for if it sits at the end of a redirect chain. Finally, your overall crawl budget decreases: Google redistributes its time differently and indirectly penalizes you.

- Google abandons exploration when redirect chains slow crawl too much

- A chain is defined by successive redirects (A → B → C → D...)

- Beyond 2 jumps, the risk of abandonment becomes significant

- Direct impact: unindexed pages, slow refresh, wasted crawl budget

- Third-party crawl tools encounter exactly the same limitations as Googlebot

SEO Expert opinion

Does this statement really reflect what we observe in the field?

Yes, and it's even an understatement. In audits of large-scale websites, we regularly find that entire sections are never crawled because of poorly managed redirects. Server logs confirm it: Googlebot follows 1, sometimes 2 redirects, then disappears.

What's missing from Crystal Carter's statement is the precise limit: how many jumps exactly? 3? 5? Google's documentation says Googlebot follows up to 10 chained redirects, but that is a hard ceiling, not the point at which crawling starts to suffer. [To verify] with your own tests, because the practical tolerance likely varies depending on the "trust" Google grants your site (internal PageRank, crawl history, etc.).

Are all types of redirects affected the same way?

No, and this is an important distinction. 301 (permanent) and 302 (temporary) redirects are not treated identically by Google. In theory, a 301 should signal "this page has permanently moved, update your index," while a 302 says "check back later."

In practice, a chain of 302s is even worse than a chain of 301s: because each redirect is declared temporary, Googlebot must regularly come back to check whether it has changed. Result: wasted crawl budget. And if you mix 301s, 302s, and even 307s in the same chain? You're signing the death warrant for your crawl.
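One way to spot the mixed chains described above is to label each chain by the status codes it contains. This is a small sketch: the grouping into permanent (301, 308) and temporary (302, 303, 307) follows the HTTP semantics of those codes, while the `describe_chain` name and its labels are made up for illustration.

```python
PERMANENT = {301, 308}
TEMPORARY = {302, 303, 307}


def describe_chain(statuses):
    """Label a chain by the kinds of redirect status codes it contains."""
    kinds = set()
    for status in statuses:
        if status in PERMANENT:
            kinds.add("permanent")
        elif status in TEMPORARY:
            kinds.add("temporary")
        else:
            raise ValueError(f"{status} is not a redirect status handled here")
    if not kinds:
        return "no redirect"
    if len(kinds) == 2:
        return "mixed"
    return kinds.pop()


label = describe_chain([301, 302, 307])
```

In this example `label` is `"mixed"`, the case worth fixing first.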

Are there cases where Google tolerates more jumps?

Honestly? Unlikely. Some SEOs claim that "authoritative" sites would benefit from greater leeway, but [To verify] — no official data supports this hypothesis.

What we know for certain: even a powerful site wastes crawl budget with chained redirects. You can have 10 million backlinks, but if Googlebot spends 80% of its time following your redirects, it will explore fewer useful pages. The "tolerance" is a dangerous myth.

Practical impact and recommendations

How do you identify redirect chains on your site?

First step: crawl your site with a dedicated tool (Screaming Frog, OnCrawl, Botify, etc.). Configure it to follow all redirects and generate a specific report on detected chains.
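For a quick spot check on a handful of URLs, a few lines of standard-library Python can record each jump instead of silently following it. This is a sketch under assumptions: `head`, `trace_redirects` and the `max_hops` limit of 10 are names and values chosen here, and some servers answer HEAD requests differently from GET, so treat the result as an indication rather than a replacement for a full crawl.

```python
import urllib.error
import urllib.request
from urllib.parse import urljoin


class _NoRedirect(urllib.request.HTTPRedirectHandler):
    """Stops urllib from following redirects so each jump can be recorded."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        return None  # urllib then raises HTTPError carrying the 3xx response


def head(url, timeout=10.0):
    """Send one HEAD request and return (status, Location header or None)."""
    opener = urllib.request.build_opener(_NoRedirect)
    request = urllib.request.Request(url, method="HEAD")
    try:
        with opener.open(request, timeout=timeout) as response:
            return response.status, None
    except urllib.error.HTTPError as error:
        return error.code, error.headers.get("Location")


def trace_redirects(url, fetch=head, max_hops=10):
    """Return [(url, status), ...] for each response until a non-redirect."""
    hops = []
    for _ in range(max_hops + 1):
        status, location = fetch(url)
        hops.append((url, status))
        if status not in (301, 302, 303, 307, 308) or not location:
            break
        url = urljoin(url, location)  # the Location header may be relative
    return hops
```

Calling `trace_redirects("https://example.com/old-page")` with the default `head` fetcher sends real network requests. The `fetch` parameter exists so you can plug in a stub, or a different HTTP client, without touching the tracing logic.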

Then analyze your server logs. You'll see exactly where Googlebot gives up. If you notice that certain sections receive zero Googlebot visits even though they're linked from your navigation, look for upstream redirects. Often, the problem comes from a poorly cleaned migration or a CMS change that stacked layers.
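The log analysis can start very simply. The sketch below assumes access logs in the common "combined" format and counts Googlebot hits per top-level section, separating redirects from other responses. The sample lines are invented for illustration, and matching on the user-agent string alone can be spoofed, so a real audit should also verify that the requests come from Google.

```python
import re
from collections import Counter

# Matches the request, status, size, referer and user-agent of a combined log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_by_section(lines):
    """Count Googlebot requests per (top-level section, 'redirect' or 'other')."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        path = match["path"].split("?", 1)[0]  # ignore the query string
        section = "/" + path.lstrip("/").split("/", 1)[0]
        kind = "redirect" if match["status"].startswith("3") else "other"
        hits[(section, kind)] += 1
    return hits


# Hypothetical sample lines, not taken from a real server.
BOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE = [
    f'66.249.66.1 - - [29/Nov/2022:10:00:00 +0000] "GET /blog/old-post HTTP/1.1" 301 0 "-" "{BOT}"',
    f'66.249.66.1 - - [29/Nov/2022:10:00:01 +0000] "GET /blog/older?ref=nav HTTP/1.1" 302 0 "-" "{BOT}"',
    f'66.249.66.1 - - [29/Nov/2022:10:00:02 +0000] "GET /shop/item-1 HTTP/1.1" 200 5120 "-" "{BOT}"',
    '203.0.113.7 - - [29/Nov/2022:10:00:03 +0000] "GET /blog/old-post HTTP/1.1" 301 0 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]
hits = googlebot_hits_by_section(SAMPLE)
```

A section where the "redirect" count dominates, or a section that is linked from your navigation yet never appears in `hits`, is where to look for upstream redirects.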

What corrective actions should you implement immediately?

Simplify your redirects so they point directly to the final destination. If you have A → B → C, modify A so it redirects directly to C. Same for B if it's still referenced somewhere.
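The A → B → C fix can be sketched as a small transformation on a redirect map. This assumes your redirects can be exported as source/target pairs; `flatten_redirects` is a hypothetical helper, not a feature of any particular server or CMS.

```python
def flatten_redirects(redirects):
    """Map every source URL straight to its final destination."""
    flat = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Keep walking while the target is itself redirected.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat


flat = flatten_redirects({"/a": "/b", "/b": "/c"})
```

After flattening, both `/a` and `/b` point directly to `/c`, so no visitor or crawler needs more than one jump. Loops are rejected rather than flattened, since they have no final destination.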

Review your migration history. Each redesign leaves traces: old URLs that redirect to temporary URLs, which themselves redirect to the current version. Clean all of that up. And update your internal links so they point directly to final URLs — never rely on a redirect to "catch" a misconfigured link.
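To find internal links that still rely on a redirect, you can compare the links in a page against your redirect map. The sketch below uses Python's built-in HTML parser and only does exact string matching on `href` values, so relative or differently written URLs would need normalizing first. `stale_internal_links` and the sample markup are illustrative assumptions.

```python
from html.parser import HTMLParser


class _LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag in a document."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def stale_internal_links(html, final_targets):
    """Return (current href, final URL) for each link that hits a redirect."""
    collector = _LinkCollector()
    collector.feed(html)
    return [(h, final_targets[h]) for h in collector.hrefs if h in final_targets]


# Hypothetical page fragment and redirect map.
PAGE = '<nav><a href="/old-page">Old</a> <a href="/en/final-page">New</a></nav>'
to_fix = stale_internal_links(PAGE, {"/old-page": "/en/final-page"})
```

Each pair in `to_fix` is a link to rewrite so that it points at the final URL.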

What errors must you absolutely avoid?

Never leave a 302 redirect in place if the change is permanent. Google will eventually treat it as a 301, but in the meantime, you're losing time and crawl budget.

Avoid circular redirects (A → B → A) or loops (A → B → C → A). They trap crawlers until they give up, and they give a catastrophic image of your site. And above all, don't create new chains during migrations: always map the old URL to the new final URL, not to an intermediate URL.
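Loops are easy to detect once redirects are available as a source-to-target map. The sketch below is one possible approach; `find_redirect_loops` is a name chosen here. It walks each chain and reports the URLs that form a cycle.

```python
def find_redirect_loops(redirects):
    """Return each loop as the list of URLs that redirect in a circle."""
    loops = []
    done = set()  # URLs already examined in an earlier walk
    for start in redirects:
        path = []
        index = {}  # position of each URL on the current walk
        url = start
        while url in redirects and url not in done and url not in index:
            index[url] = len(path)
            path.append(url)
            url = redirects[url]
        if url in index:  # the walk came back to a URL on its own path
            loops.append(path[index[url]:])
        done.update(path)
    return loops


loops = find_redirect_loops({"/a": "/b", "/b": "/c", "/c": "/a", "/x": "/a"})
```

In this example the A → B → C → A loop is reported once, as `["/a", "/b", "/c"]`. `/x` is not part of the loop itself, although it leads into it and needs a new target too.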

- Crawl your site with a professional tool to map all redirects

- Analyze server logs to find where Googlebot abandons

- Simplify each chain so it points directly to the final destination

- Update internal links to avoid triggering unnecessary redirects

- Replace all 302s with 301s if changes are permanent

- Verify that no loops or circular redirects exist

- Clean up migration history to remove successive layers of redirects

❓ Frequently Asked Questions

How many chained redirects does Google tolerate before giving up?

Do 301 and 302 redirects have the same impact on crawling?

Can a site with a lot of authority afford more chained redirects?

How can you check whether Google is abandoning the crawl of certain pages because of redirects?

Should I fix redirect chains first, or other technical problems?

🎥 From the same video 10

Other SEO insights extracted from this same Google Search Central video · published on 29/11/2022
